Discrete HTPC GPU Shootout

by Ganesh T S on June 12, 2011 10:30 PM EST

We ran the DXVA Checker benchmark on all the cards, but our graphing engine allows us to present only four series in each graph. This meant that we had to choose between the GDDR5 based AMD 6450 and the DDR3 based MSI 6450. Keeping in mind our focus on passively cooled GPUs, we went with the latter. It was observed that the 'No VPP (Video Post Processing)' frame rates were similar for both the candidates. However, as post processing algorithms were enabled, the MSI 6450 began to perform a bit worse than the AMD 6450. We will analyze the probable cause later. We were able to get DXVA2 acceleration with the EVR renderer for all codecs except the MPEG-4 variants.

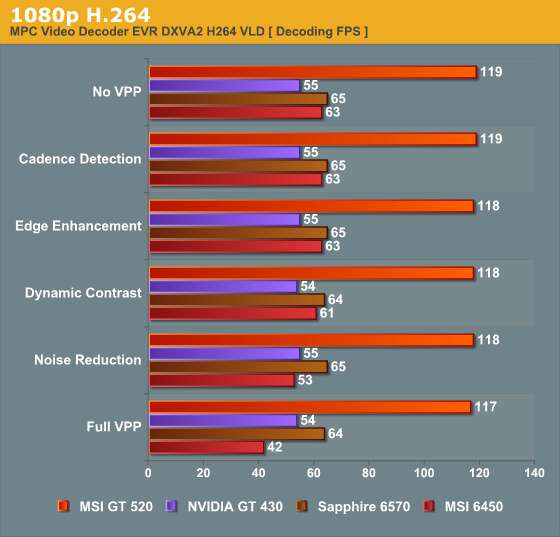

First, we look at a 1080p H.264 clip. The MPC Video Decoder v1.5.2.3134 was able to play back the clip without issues on all the GPUs. There were a couple of surprises in store when the DXVA Checker benchmark (as described in the previous section) was run.

While the GT 430 was unable to reach the magical 60 fps benchmark figure (I expect any GPU worth its salt to be able to decode 1080p60 H.264 clips), the GT 520 sprang a surprise with some insane decoding speeds. Even considering the fact that the GT 520 took shortcuts by skimping on the post processing, it comfortably beats every other GPU in the race. The 430's benchmark result was even more puzzling, considering the fact that all the 1080p60 AVCHD and re-encoded broadcast clips that we threw at it played back flawlessly. We talked to NVIDIA about this, and it looks like the culprit in this case was the bitrate. Our sample was a 40 Mbps clip at 1080p30. At 60 fps, the VPU engine would have had to process the same stream at 80 Mbps, and apparently, the VP4 engine in the GT 430 is simply not capable of that. We are willing to cut NVIDIA some slack here, because I have personally not seen any real 1080p60 content at 80 Mbps. We will cover both of the above aspects in detail in the next section.
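The bitrate arithmetic above can be checked with a short sketch. It assumes the average number of bits per frame stays the same when the benchmark decodes the clip faster than its native frame rate; the function name and parameters are illustrative and are not part of DXVA Checker.

```python
def required_bitrate_mbps(encoded_mbps, encoded_fps, decode_fps):
    """Bitrate the decoder must sustain when a clip encoded at
    encoded_fps is pushed through at decode_fps.

    Assumption: average bits per frame are unchanged, so the
    required bitrate scales linearly with the decode frame rate.
    """
    if encoded_fps <= 0:
        raise ValueError("encoded_fps must be positive")
    return encoded_mbps * decode_fps / encoded_fps


# The sample from the text: a 40 Mbps clip at 1080p30, decoded at 60 fps.
print(required_bitrate_mbps(40.0, 30.0, 60.0))
```

Under this assumption, any clip decoded at twice its native frame rate needs twice its encoded bitrate from the decode engine.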

With the exception of the 6450, we find that enabling various post-processing options doesn't bring down the decode frame rate. This shows that the latency of the post processing steps is completely hidden by the time spent in the UVD / VPU engines to obtain the decoded frame. For the 6450, we infer that the lower core clock for the stream processors slows down the post processing steps a bit too much.
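One way to picture the "hidden latency" is a simplified two-stage pipeline in which the decode engine and the post-processing shaders work on different frames at the same time. This is only a model for illustration, not a description of how any of the drivers schedule work, and the per-frame timings below are hypothetical rather than measured.

```python
def pipelined_fps(decode_ms, post_ms):
    """Steady-state frame rate of a two-stage pipeline.

    Assumption: decode and post-processing overlap fully, so the
    slower stage alone determines the throughput.
    """
    return 1000.0 / max(decode_ms, post_ms)


# Hypothetical timings: decode takes 10 ms per frame.
print(pipelined_fps(10.0, 4.0))   # post-processing hidden behind decode
print(pipelined_fps(10.0, 8.0))   # still hidden: same frame rate
print(pipelined_fps(10.0, 20.0))  # post-processing is now the bottleneck
```

In this model the frame rate stays flat as post-processing options are added, until the post-processing stage becomes slower than the decode stage. That would match a card whose throughput drops as options are enabled.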

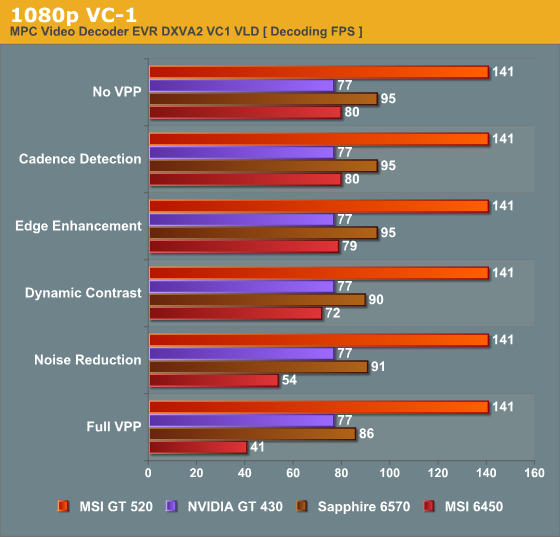

For the 1080p VC-1 clip, we again use MPC Video Decoder v1.5.2.3134 for flawless playback.

We find that the NVIDIA GPUs hide their post processing latency in the time taken by the VPU engine. However, the 6570 shows a gradual decline in the throughput as various options are enabled. The decline is not as alarming as the 6450's, and the card comfortably stays above 60 fps.

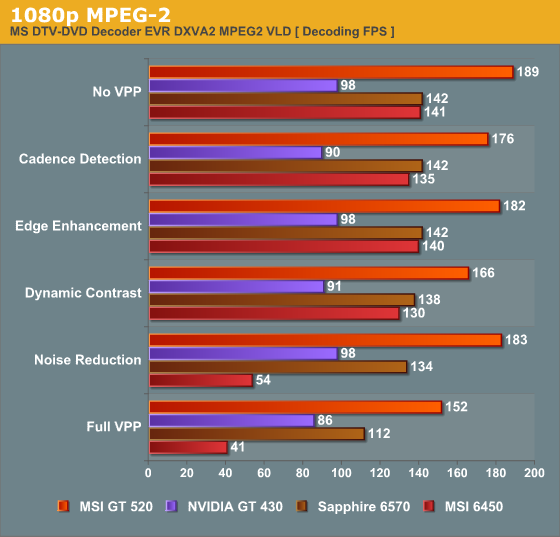

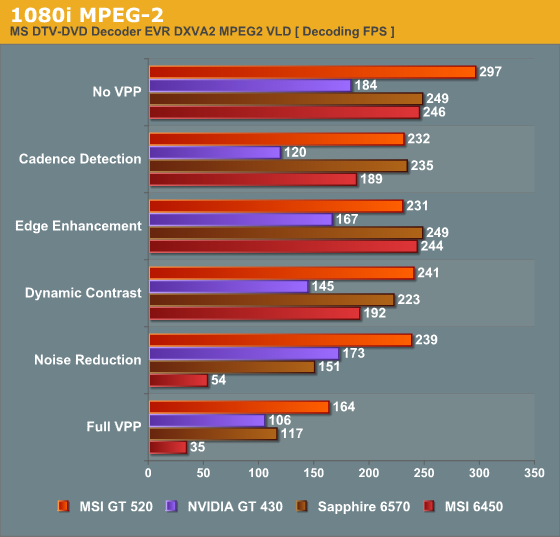

VLD acceleration for MPEG-2 was only recently introduced in the UVD 3 engine by AMD. The Microsoft DTV-DVD Video Decoder is able to provide DXVA2 acceleration for MPEG-2 clips.

It is not clear why turning on deinterlacing / cadence detection should affect the throughput of the decode of the progressive clip, but that is what we observe for all the candidates except the 6570. Compared to VC-1 and H.264 decoding which decided the throughput of the video pipeline, MPEG-2 is much easier on the UVD / VPU engine. This is reflected in the fact that the video post processing brings down the throughput quite a bit on all the GPUs.

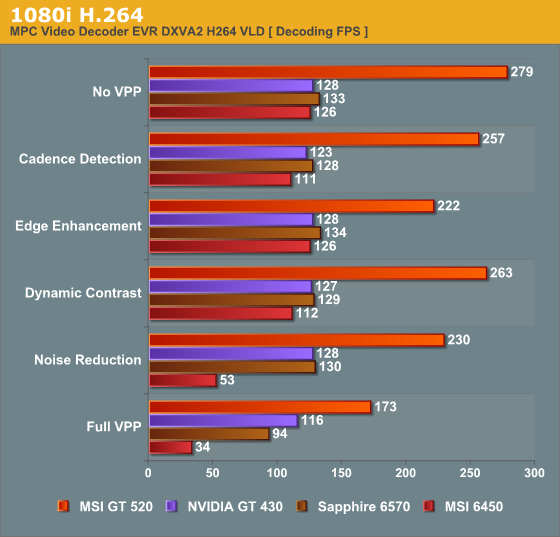

Moving on to interlaced streams, we will consider a 1080i H.264 clip first.

As expected, deinterlacing definitely kicks in to lessen the throughput of the frames. Unlike the 1080p H.264 decode performance, we find that all the GPUs are now limited by how fast the post-processing can be done. This makes sense, since the UVD/VPU engine needs to operate on only half the usual vertical resolution for each field of interlaced content. Note that the 'frames per second' figure presented for the interlaced streams is actually 'fields per second' (a 1080i clip showing 29.97 fps with MediaInfo actually has 59.94 fields per second).
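The frames-to-fields conversion can be sketched as follows. The exact NTSC-derived rate behind the rounded 29.97 fps figure is 30000/1001; the function name is illustrative.

```python
from fractions import Fraction


def fields_per_second(frame_rate, interlaced=True):
    """Each interlaced frame carries two fields, so the field rate
    is twice the frame rate; progressive content is unchanged."""
    return frame_rate * 2 if interlaced else frame_rate


# 29.97 fps as reported by MediaInfo is 30000/1001 frames per second.
ntsc_frame_rate = Fraction(30000, 1001)
print(round(float(fields_per_second(ntsc_frame_rate)), 2))
```

When reading the interlaced graphs, this means the 60 fps target corresponds to real-time playback of a 29.97 fps 1080i clip.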

The interlaced MPEG-2 performance is as below:

Results are very similar to what we got for the interlaced H.264 clip. One can conclude that interlaced clips spend more time getting post-processed compared to the progressive clips, but that is hardly surprising.

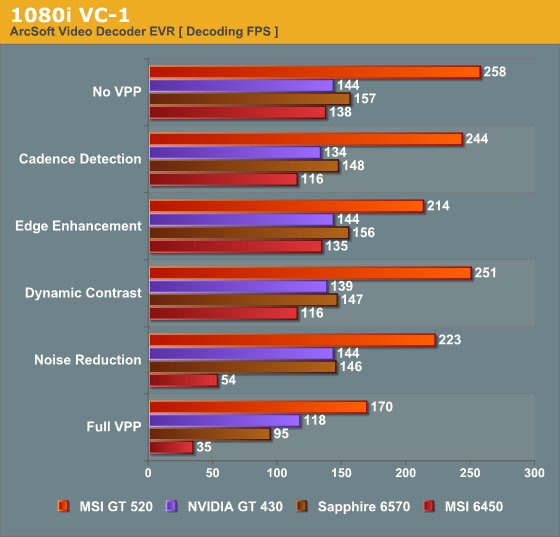

We had noted earlier that DXVA2 / EVR wasn't enabled for interlaced VC-1 streams on any of the GPUs. However, with the checkactivate.dll hack (described in the LAV Splitter section), we were able to make the ArcSoft Video Decoder appear in the list of codecs when the 'Check DirectShow / MediaFoundation Decoders' option was used for interlaced VC-1 clips. Though it wasn't explicitly indicated that the support was DXVA2 using EVR, we did find that playing back the stream using EVR consumed almost no CPU resources and kept the GPU / VPU engine quite busy. Presented below is the interlaced VC-1 performance using the ArcSoft Video Decoder in Total Media Theater v5.0.187.

The takeaway from this section is that cards which run too close to the 60 fps limit with all post processing steps enabled should be avoided, unless there are some convincing reasons for that. The results also need to be taken in conjunction with the day-to-day usage experience. As mentioned before, the 6450 fails on both counts. The GT 520 fails the day-to-day usage test (deinterlacing performance). The GT 430 gets a recommendation despite weighing in at less than 60 fps for the 1080p H.264 stress stream. The 6570 is the hands down winner in this section. It is able to carry out all the post processing steps even when it is forced to process very stressful video streams.
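The "too close to the 60 fps limit" rule of thumb can be expressed as a simple headroom check. The 10% margin is an assumption chosen purely for illustration, since the article does not state a numeric threshold, and the sample frame rates are hypothetical rather than taken from the graphs.

```python
def has_headroom(measured_fps, target_fps=60.0, margin=0.10):
    """Return True if measured_fps clears target_fps by at least
    the given fractional margin (hypothetical default: 10%)."""
    return measured_fps >= target_fps * (1.0 + margin)


# Hypothetical benchmark results with all post-processing enabled.
for fps in (58.0, 61.0, 90.0):
    print(fps, has_headroom(fps))
```

As noted above, a check like this is only half the picture and has to be weighed against the day-to-day usage experience.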

70 Comments


jwilliams4200 - Monday, June 13, 2011 - link

All the numbers add up correctly now. Thanks for monitoring the comments and fixing the errors!

Samus - Monday, June 13, 2011 - link

Honestly, my Geforce 210 has been chillin' in my HTPC for 2+ years, and works perfectly :)

josephclemente - Monday, June 13, 2011 - link

If I am running a Sandy Bridge system with Intel HD Graphics 3000, do these cards have any benefit over integrated graphics? What is Anandtech's HQV Benchmark score?

I tried searching for scores, but people say this is subjective and one reviewer may differ from another. One site says 196 and another in the low 100's. What does this reviewer say?

ganeshts - Monday, June 13, 2011 - link

Give me a couple of weeks. I will be getting a test system soon with the HD 3000, and I will do detailed HQV benchmarking in that review too.

dmsher99@gmail.com - Tuesday, June 14, 2011 - link

I recently built an HTPC with a Core i5-2500K on an ASUS P8H67 EVO with a Ceton InfiniTV cable card. Note that the Intel driver is fundamentally flawed and will destroy a system if patched. See the Intel communities thread 20439 for more details.

Besides causing BSOD over HDMI output when patched, the stable versions have their own sets of bugs, including a memory bleed when watching some premium content on HD channels that crashed WMC. Intel appears to have 1 part-time developer working on this problem, but every test driver he puts out breaks more than it fixes. Watching the same content on a system running an NVIDIA GPU, the memory bleed goes away.

In my opinion, second gen SB chips are just not ready for prime time in a fully loaded HTPC.

jwilliams4200 - Monday, June 13, 2011 - link

"The first shot shows the appearance of the video without denoising turned on. The second shot shows the performance with denoising turned off. "Heads I win, tails you lose!

ganeshts - Monday, June 13, 2011 - link

Again, sorry for the slip-up, and thanks for bringing it to our notice. Fixed it. Hopefully, the gallery pictures cleared up the confusion (particularly the Noise Reduction entry in the NVIDIA Control Panel)

stmok - Monday, June 13, 2011 - link

Looking through various driver release README files, it appears the mobile Nvidia Quadro NVS 4200M (PCI Device ID: 0x1056) also has this feature set.

The first stable Linux driver (x86) to introduce support for Feature Set D is the 270.41.03 release.

=> ftp://download.nvidia.com/XFree86/Linux-x86/270.41...

It shows only the Geforce GT 520 and Quadro NVS 4200M support Feature Set D.

The most recent one confirms that they are still the only models to support it.

=> ftp://download.nvidia.com/XFree86/Linux-x86/275.09...

ganeshts - Monday, June 13, 2011 - link

Thanks for bringing it to our notice. When that page was being written (around 2 weeks back), the README indicated that the GT 520 was the only GPU supporting Feature Set D. We will let the article stand as-is, and I am sure readers perusing the comments will become aware of this new GPU.

havoti97 - Monday, June 13, 2011 - link

So basically the app store's purpose is to attract submissions of ideas for features of their next OS, uncompensated of course. All the other crap/fart apps not worthy are approved and people make pennies of those.