NVIDIA's GeForce GTX 560: The Top To Bottom Factory Overclock

by Ryan Smith on May 17, 2011 9:00 AM EST

New Release 275 Drivers & The Test

Launching alongside the GTX 560 will be the newest branch of NVIDIA’s GeForce drivers: Release 275 beta. This actually comes hot on the heels of Release 270, which came out only a month and a half ago.

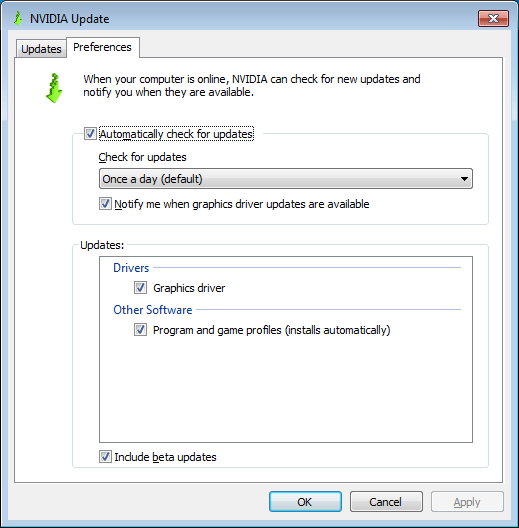

Unlike Release 270, NVIDIA isn’t making a lot of performance promises, so this is mostly a feature release. The big item in 275 for most gamers will be further refinements to the auto-update mechanism first introduced in 270. NVIDIA has finally fully ported over Optimus’ auto-update feature, meaning that NVIDIA’s drivers can now automatically find and install profile updates in the background. However, whereas Optimus profile updates were necessary for switchable graphics, for desktop users the primary purpose of auto-updating profiles is SLI and anti-aliasing compatibility, as NVIDIA uses compatibility flags in their profiles to make those features work.

Automatic profile updates won’t completely absolve SLI from periods of incompatibility, but they should help. NVIDIA has released out-of-band profile updates for SLI before, but these were rather rare. If they now release profile updates much more frequently, then this will be a boon for SLI users, particularly GTX 295/590 users. Otherwise SLI is mostly limited by what can be done with a profile – if NVIDIA has to update the driver itself, then users will still need to wait for a new driver release. On that note, NVIDIA hasn’t changed the auto-update procedure for the drivers: profiles will auto-download and install, but driver updates must still be manually approved.
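The split between profile and driver updates described above can be summarized in a few lines. This is only an illustrative sketch of the policy as NVIDIA described it to us, not NVIDIA's actual updater logic; the `Update` type, the `should_install` function, the `kind` labels, and the profile version string are all hypothetical names chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class Update:
    kind: str      # hypothetical labels: "profile" or "driver"
    version: str   # free-form version string, for display only


def should_install(update: Update, user_approved: bool = False) -> bool:
    """Mirror the policy in the text: profiles install silently in the
    background, driver updates wait for the user's manual approval."""
    if update.kind == "profile":
        return True
    if update.kind == "driver":
        return user_approved
    raise ValueError(f"unknown update kind: {update.kind!r}")


if __name__ == "__main__":
    pending = [
        Update("profile", "example-profile-1"),  # made-up identifier
        Update("driver", "275.20"),
    ]
    for update in pending:
        action = "install" if should_install(update) else "ask user first"
        print(f"{update.kind} {update.version}: {action}")
```

The practical consequence is the one noted above: any SLI or AA fix that fits in a profile can arrive on its own, while anything requiring driver changes still waits on the user.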

NVIDIA tells us that in the future they will also be able to deliver 3D Vision compatibility updates with profiles, but this will probably require a bit of rearchitecting of their drivers and profiles. Currently NVIDIA’s profiles contain a few flags for 3D Vision (mainly whether it’s allowed), but there aren’t any sweeping compatibility bits as there are for SLI and AA.

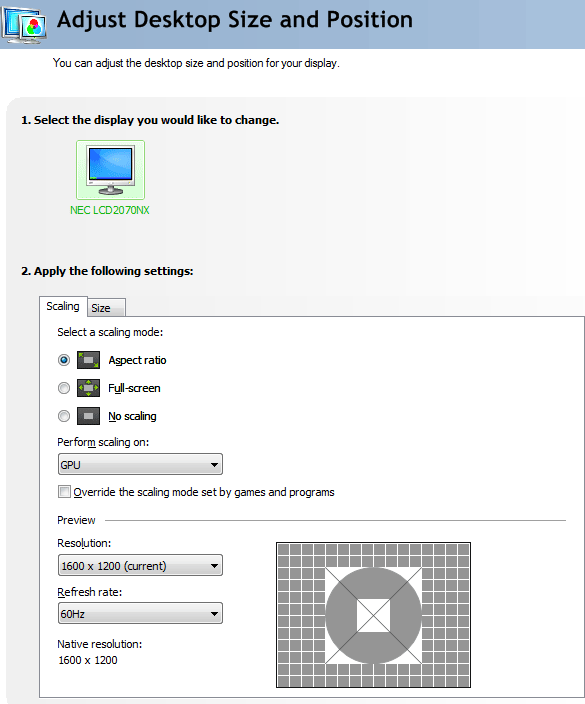

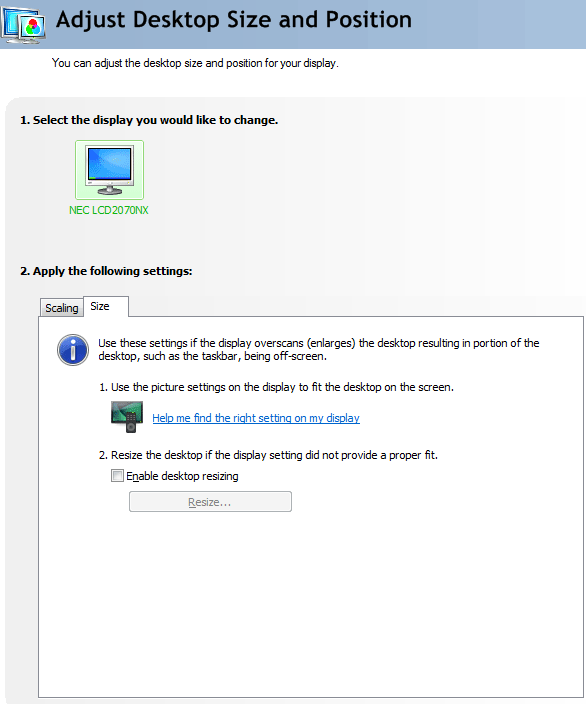

Moving on, the other big functionality update with 275 is a new resizing and scaling UI in the NVIDIA control panel. The core functionality of scaling hasn’t changed much as NVIDIA has offered these controls for quite some time, but now scaling controls are available for VGA and HDMI displays, versus just DVI and DisplayPort as it was previously. There’s also a new override option for Windows 7, for forcing misbehaving programs to use NVIDIA’s scaling options instead of their own. (Ed: We’ve never actually encountered this before. If you know of any games/applications that need this option, please let us know in the comments)

As for resizing, NVIDIA has tweaked the UI to help better guide users through using overscan correction and/or disabling overscan on their TVs. The ideal method of dealing with overscan is to disable it on the TV (thereby ensuring 1:1 pixel mapping), which is what NVIDIA now first directs users toward. Users who can’t disable overscan can then unlock NVIDIA’s resizing controls. NVIDIA tells us that they’ve also done some work to improve resizing compatibility for games/applications that try to force standard resolutions, but we have not had an opportunity to test this yet.

The Release 275 betas should be available later today, with WHQL drivers appearing within a month.

The Test

As we mentioned in our introduction, the lack of any reference-clocked cards means that the GTX 560’s clocks – and thereby the GTX 560’s performance – are not well rooted. As a result, we’ve tested our ASUS GTX 560 DirectCU II Top at a few different clockspeeds. We’ve tested the card at NVIDIA’s reference clocks of 810/4004 (GTX 560 Base), along with the slowest "mid-grade" card on NVIDIA’s release list: 850/4104 (GTX 560 Mid). NVIDIA is pitching the GTX 560 as their $199 card, so for the purposes of our review we’ll be focusing primarily on the mid-clocked GTX 560, as this is the approximate speed of most of the $199 cards. If you buy a $199 GTX 560 today, this should closely represent the speed of the card you’re buying.

Ideally 810/4004 cards will be relegated to the OEM market, but if not we also have the base clocks included for appropriate consideration. It goes without saying that we’d rather NVIDIA just create two different product lines rather than having so many cards under the same umbrella, but at this point we’re just repeating ourselves.

We’ve also included our overclocking results with the ASUS GTX 560 DirectCU II Top, colored in orange. As we were only able to reach 950/4400, the performance gains are limited.
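To put the three tested clock pairs in perspective, the short sketch below works out how far each sits above the 810/4004 base clocks. The clock figures are the ones quoted above; the labels and function names are our own for illustration, and a given percentage of extra clockspeed should not be read as the same percentage of extra performance.

```python
# Clock pairs (core MHz, memory MHz as quoted) taken from the text above.
CLOCKS = {
    "GTX 560 Base": (810, 4004),
    "GTX 560 Mid": (850, 4104),
    "ASUS GTX 560 OC": (950, 4400),
}


def percent_over(value, baseline):
    """Return how far value sits above baseline, in percent."""
    return (value / baseline - 1.0) * 100.0


def clock_deltas(clocks, baseline_name="GTX 560 Base"):
    """Map each configuration to (core %, memory %) over the baseline."""
    base_core, base_mem = clocks[baseline_name]
    return {
        name: (percent_over(core, base_core), percent_over(mem, base_mem))
        for name, (core, mem) in clocks.items()
    }


if __name__ == "__main__":
    for name, (core_pct, mem_pct) in clock_deltas(CLOCKS).items():
        print(f"{name}: core +{core_pct:.1f}%, memory +{mem_pct:.1f}%")
```

By this arithmetic the mid-grade clocks are roughly 5% (core) and 2.5% (memory) above base, while our overclock is roughly 17% and 10% above base.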

For drivers, on the NVIDIA side we’re using 275.20 beta for the GTX 560, the GTX 460 1GB, and GTX 560 Ti. In practice the average performance difference between Release 275 and Release 270 is around 1% in favor of 275. On the ATI side we’re using the Catalyst 11.5a hotfix; however, do note that in our testing we’ve found that performance is identical to the 11.4 drivers.

| Component | Configuration |
| --- | --- |
| CPU: | Intel Core i7-920 @ 3.33GHz |
| Motherboard: | ASUS Rampage II Extreme (X58) |
| Chipset Drivers: | Intel 9.1.1.1015 (Intel) |
| Hard Disk: | OCZ Summit (120GB) |
| Memory: | Patriot Viper DDR3-1333 3 x 2GB (7-7-7-20) |
| Video Cards: | AMD Radeon HD 6970, AMD Radeon HD 6950 2GB, AMD Radeon HD 6870, AMD Radeon HD 6850, AMD Radeon HD 5870, AMD Radeon HD 5850, AMD Radeon HD 5770, AMD Radeon HD 4870, NVIDIA GeForce GTX 580, NVIDIA GeForce GTX 570, NVIDIA GeForce GTX 560 Ti, ASUS GeForce GTX 560 DirectCU II Top, NVIDIA GeForce GTX 550 Ti, NVIDIA GeForce GTX 480, NVIDIA GeForce GTX 470, NVIDIA GeForce GTX 460 1GB, NVIDIA GeForce GTS 450, NVIDIA GeForce GTX 285, NVIDIA GeForce GTX 260 Core 216 |
| Video Drivers: | NVIDIA ForceWare 262.99, NVIDIA ForceWare 270.51 Beta, NVIDIA ForceWare 275.20 Beta, AMD Catalyst 10.10e, AMD Catalyst 11.4, AMD Catalyst 11.5a |
| OS: | Windows 7 Ultimate 64-bit |

66 Comments


L. - Thursday, May 19, 2011 - link

You'll have some trouble doing an apples to apples comparison between a 580 and a 6970 ...

The 580 tdp goes through the roof when you OC it, not so much with the 6970.

The 580 is a stronger gpu than the 6970 by a fair margin (2% @ 2560 to 10+%@1920), does not depend much on drivers.

The 580 costs enough to make you consider a 6950 crossfire as a better alternative . or even 6970 cf ...

The day drivers will be comparable is about a few months from now still as both cards are relatively fresh.

mosox - Tuesday, May 17, 2011 - link

And of course the only factory OCed card in there is from Nvidia.

Can you show me ONE review in which you did the same for AMD? Including a factory OCed card in a general review and compare it to stock Nvidia cards?

Are you trying to inform your readers or to pander to Nvidia by following to the letter their "review guides"? No transparency in the game selection (again that TWIMTBP-heavy list), OCed cards, what's next? Changing the site's color from blue to green? Letting the people at Nvidia to do your reviews and you just post them in here?

:(

TheJian - Wednesday, May 18, 2011 - link

The heavily overclocked card is from ASUS. :)

NV didn't send them a card. There is no ref design for this card (reference clocks, but not a card). They tested at 3 speeds, giving us a pretty good idea of multiple variants you'd see in the stores. What more do you want?

Nvidia didn't have anything to do with the article. They put NV's name on the SLOWER speeds in the charts, but NV didn't send them a card. Get it? They down-clocked the card ASUS sent to show what NV would assume they'd be clocked at on the shelves. AT smartly took what they had to work with (a single 560 from ASUS - mentioned as why they have no SLI benchmarks in with 560), clocked it at the speeds you'd buy (based on checking specs online) and gave us the best idea they could of what we'd expect on a shelf or from oem.

Or am I just missing something in this article?

Is it Anandtech's problem NV has spent money on devs trying to get them to pay attention to their tech? AMD could do this stuff too if they weren't losing 6Bil in 3 years time (more?). I'm sure they do it some, but obviously a PROFITABLE company (for many more years than AMD - AMD hasn't made a dime since existence as a whole), with cash in the bank and no debt, can blow money on game devs and give them some engineers to help with drivers etc.

http://moneycentral.msn.com/investor/invsub/result...

That link should work..(does in FFox). If you look at a 10 year summary, AMD has lost about 6bil over 10yrs. That's NOT good. It's not easy coming up with a top games list that doesn't include TWIMTBP games.

I tend to agree with the link below. We'd have far more console ports if PC companies (Intel,AMD,Nvidia) didn't hand some money over to devs in some way shape or form. For example, engineers working with said game companies etc to optimize for new tech etc. We should thank them (any company that practices this). This makes better PC games.

Not a fan of fud, but they mention Dirt2, Hawx, battleforge & Halflife2 were all ATI enhanced games. Assassins Creed for NV and a ton more now of course.

http://www.fudzilla.com/graphics/item/11037-battle...

http://www.bit-tech.net/news/gaming/2009/10/03/wit...

Many more sites about both sides on their "enhancements" to games by working with devs. It's not straight cash they give, but usually stuff like engineers, help with promotions etc. With Batman, NV engineers wrote the AA part for their cards in the game. It looks better too. AMD was offered the same (probably couldn't afford it, so just complained saying "they made it not like our cards"). Whatever. They paid, you didn't so it runs better on their cards in AA. More on NV's side due to more money, but AMD does this too.

bill4 - Tuesday, May 17, 2011 - link

Crysis 1, but not Crysis 2? Wheres Witcher 2 benches (ok, that one may have been time constraints). Doesnt LA Noire have a PC version you could bench? Maybe even Homefront?

It's the same old ancient tired PC bench staples that most sites use. I can only guess this is because of lazyness.

Ryan Smith - Tuesday, May 17, 2011 - link

I expect to be using Crysis 1 for quite a bit longer. It's still the de-facto outdoor video card killer. The fact that it still takes more than $500 in GPUs to run it at 1920 with full Enthusiast settings and AA means it's still a very challenging game.

Crysis 2 I'm not even looking at until the DX11 update comes out. We want to include more games that fully utilize the features of today's GPUs, not fewer.

LA Noire isn't out on the PC. In fact based on Rockstar's history with their last game, I'm not sure we'll ever see it.

In any case, the GPU test suite is due for a refresh in the next month. We cycle it roughly every 6 months, though we don't replace every single game every time.

mosox - Wednesday, May 18, 2011 - link

Make sure you don't slip in any game that Nvidia doesn't like or they might cut you off from the goodies. 100% TWIMTBP please, no matter how obscure are the games.

TheJian - Wednesday, May 18, 2011 - link

Ignore whats on a box. Go to metacritic and pick top scoring games from last 12 months up to now. If the game doesn't get 80+/100 you pass. Not enough like or play it probably below there. You could toss in a beta of duke forever or something like that if you can find a popular game that's about to come out and has a benchmark built in. There's only a few games that need to go anyway (mostly because newer ones are out in the same series - Crysis 2 w/dx11 update when out).

Unfortunately mosox, you can't make an AMD list (not a long one) as they aren't too friendly with devs (no money or free manpower, duh), and devs will spend more time optimizing for the people that give them the most help. Plain and simple. If you reversed the balance sheets, AMD would be doing the same thing (more of it than now anyway).

In 2009 when this cropped up Nvidia had 220 people in a dept. that was purely losing money (more now?). It was a joke that they never made nvidia any money, but they were supplying devs with people that would create physx effects, performance enhancements etc to get games to take advantage of Nvidia's features. I don't have any problem with that until AMD doesn't have the option to send over people to do the same. AFAIK they are always offered, but can't afford it, decline and then whine about Nvidia. Now if NV says "mr gamemaker you can't let AMD optimize because you signed with us"...ok. Lawsuit.

mosox - Wednesday, May 18, 2011 - link

I don't want "AMD games" that would be the same thing. I just don't want obscure games that are fishy and biased as well.

Games in which a GTX 460/768 is better than a HD 6870 AND they're not even popular - but are in there to skew the final results exactly like in this review. Take out HAWX 2 and LP2 and do the performance summary again.

Lately in every single review you can see some nvidia cards beating the AMD counterparts with 2-5% ONLY because of the biased game selection.

A HW site has to choose between being fair and unbiased and serve its readers or sell out to some company and become a shill for that company.

HAWX 2 is only present because Nvidia (not Ubisoft!) demanded that. It's a shame.

Spoelie - Wednesday, May 18, 2011 - link

Both HAWX 2 and Lost Planet 2 are not in this review?

mosox - Wednesday, May 18, 2011 - link

I was talking in general. HAWX2 isn't but HAWX is. And Civ 5 in which the AMD cards are lumped together at the bottom and there's no difference whatsoever between a HD 6870 and a HD 6950.