The Intel SSD 320 Review: 25nm G3 is Finally Here

by Anand Lal Shimpi on March 28, 2011 11:08 AM EST. Posted in IT Computing, Storage, SSDs, Intel, Intel SSD 320

AnandTech Storage Bench 2011: Much Heavier

I didn't expect to have to debut this so soon, but I've been working on updated benchmarks for 2011. Last year we introduced our AnandTech Storage Bench, a suite of benchmarks that took traces of real OS/application usage and played them back in a repeatable manner. I assembled the traces myself out of frustration with the majority of what we have today in terms of SSD benchmarks.

Although the AnandTech Storage Bench tests did a good job of characterizing SSD performance, they weren't stressful enough. All of the tests performed less than 10GB of reads/writes and typically involved only 4GB of writes specifically. That's not even enough to exceed the spare area on most SSDs. Most canned SSD benchmarks don't even come close to writing a single gigabyte of data, but that doesn't mean that simply writing 4GB is acceptable.

Originally I kept the benchmarks short enough that they wouldn't be a burden to run (~30 minutes) but long enough that they were representative of what a power user might do with their system.

Not too long ago I tweeted that I had created what I referred to as the Mother of All SSD Benchmarks (MOASB). Rather than only writing 4GB of data to the drive, this benchmark writes 106.32GB. It's the load you'd put on a drive after nearly two weeks of constant usage. And it takes a *long* time to run.

I'll be sharing the full details of the benchmark in some upcoming SSD articles but here are some details:

1) The MOASB, officially called AnandTech Storage Bench 2011 - Heavy Workload, mainly focuses on the times when your I/O activity is the highest. There is a lot of downloading and application installing that happens during the course of this test. My thinking was that it's during application installs, file copies, downloading and multitasking with all of this that you can really notice performance differences between drives.

2) I tried to cover as many bases as possible with the software I incorporated into this test. There's a lot of photo editing in Photoshop, HTML editing in Dreamweaver, web browsing, game playing/level loading (Starcraft II & WoW are both a part of the test) as well as general use stuff (application installing, virus scanning). I included a large amount of email downloading, document creation and editing as well. To top it all off I even use Visual Studio 2008 to build Chromium during the test.

Many of you have asked for a better way to really characterize performance. Simply looking at IOPS doesn't really say much. As a result I'm going to be presenting Storage Bench 2011 data in a slightly different way. We'll have performance represented as Average MB/s, with higher numbers being better. At the same time I'll be reporting how long the SSD was busy while running this test. These disk busy graphs will show you exactly how much time was shaved off by using a faster drive vs. a slower one during the course of this test. Finally, I will also break out performance into reads, writes and combined. The reason I do this is to help balance out the fact that this test is unusually write intensive, which can often hide the benefits of a drive with good read performance.
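To make the relationship between these numbers concrete, here is a minimal sketch of how average MB/s could be broken out into reads, writes and combined. The record layout (`op`, `nbytes`, `service_s`) and the choice to divide bytes moved by summed service time are assumptions made for illustration; this is not the actual Storage Bench tooling.

```python
from collections import namedtuple

# Hypothetical trace record: operation type, bytes moved,
# and seconds the drive spent servicing the request.
IORecord = namedtuple("IORecord", ["op", "nbytes", "service_s"])

def average_mb_per_s(records, op=None):
    """Average data rate in MB/s (1 MB = 10**6 bytes) over the selected records.

    op=None selects every record (combined); "read" or "write" selects one direction.
    """
    selected = [r for r in records if op is None or r.op == op]
    total_bytes = sum(r.nbytes for r in selected)
    total_time = sum(r.service_s for r in selected)
    if total_time == 0:
        return 0.0
    return total_bytes / 1e6 / total_time

# Made-up example data, not measurements from any drive.
trace = [
    IORecord("read", 4_000_000, 0.02),
    IORecord("write", 2_000_000, 0.04),
    IORecord("read", 6_000_000, 0.03),
]

reads = average_mb_per_s(trace, "read")
writes = average_mb_per_s(trace, "write")
combined = average_mb_per_s(trace)
```

With this made-up trace the read rate is far higher than the combined rate, which is the effect described above: a write-heavy mix can hide good read performance unless the two are reported separately.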

There's also a new light workload for 2011. This is a far more reasonable, typical every day use case benchmark. Lots of web browsing, photo editing (but with a greater focus on photo consumption), video playback as well as some application installs and gaming. This test isn't nearly as write intensive as the MOASB but it's still multiple times more write intensive than what we were running last year.

As always I don't believe that these two benchmarks alone are enough to characterize the performance of a drive, but hopefully along with the rest of our tests they will help provide a better idea.

The testbed for Storage Bench 2011 has changed as well. We're now using a Sandy Bridge platform with full 6Gbps support for these tests. All of the older tests are still run on our X58 platform.

AnandTech Storage Bench 2011 - Heavy Workload

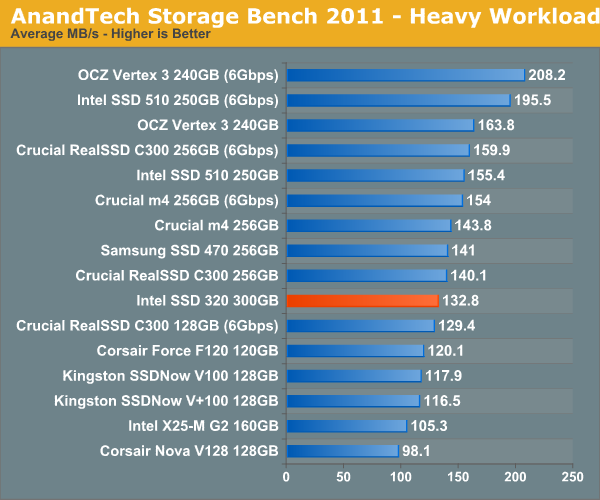

We'll start out by looking at average data rate throughout our new heavy workload test:

Overall performance is decidedly last generation. The 320 is within striking distance of the 510 but is slower overall in our heavy workload test.

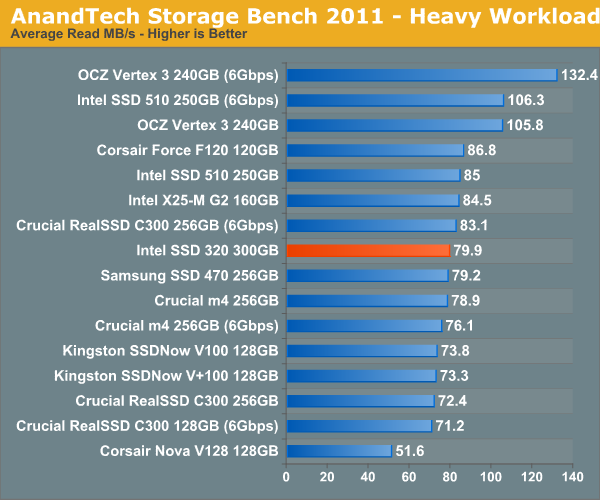

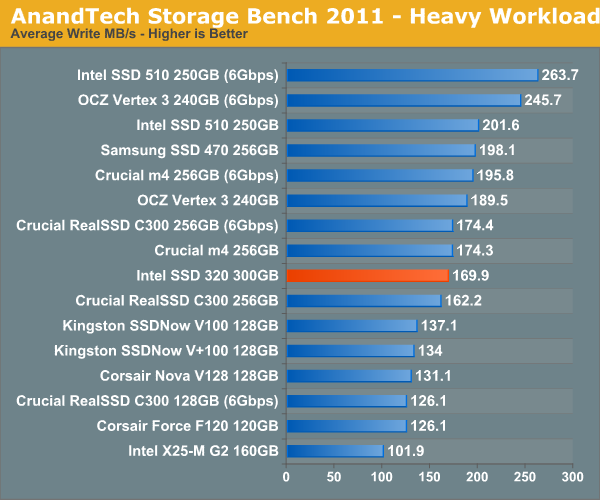

The breakdown of reads vs. writes tells us more of what's going on:

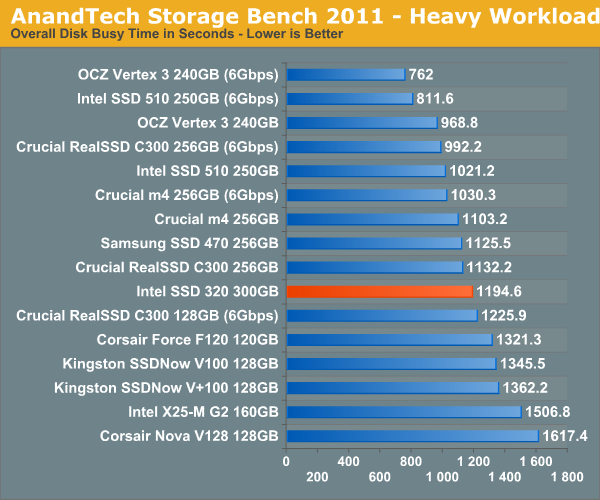

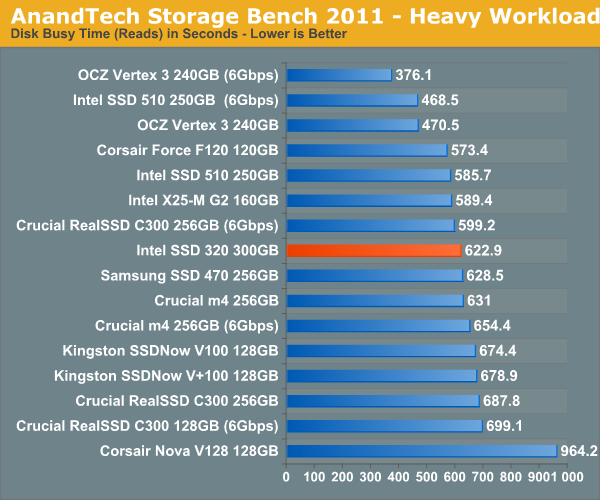

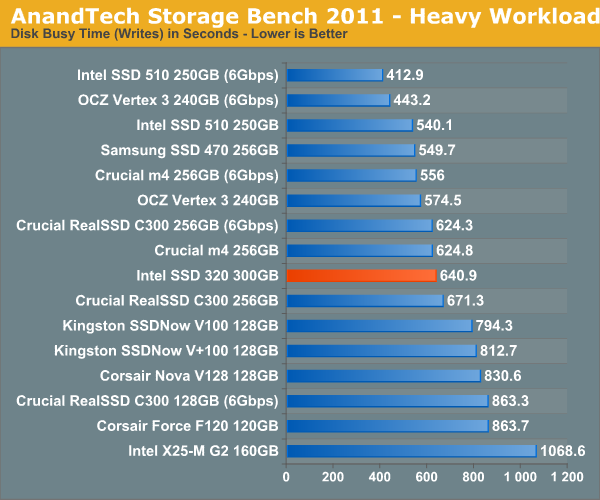

The next three charts just represent the same data, but in a different manner. Instead of looking at average data rate, we're looking at how long the disk was busy during this entire test. Note that disk busy time excludes any and all idle periods; this is just how long the SSD was busy doing something:
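One way to think about disk busy time is as the total length of time during which at least one I/O was in flight. The sketch below assumes each request is recorded as a hypothetical `(start, end)` pair in seconds and merges overlapping requests so that concurrent I/O is not counted twice; the real benchmark's accounting may differ.

```python
def disk_busy_seconds(intervals):
    """Total time covered by at least one in-flight I/O; idle gaps are excluded."""
    busy = 0.0
    current_start = current_end = None
    for start, end in sorted(intervals):
        if current_end is None or start > current_end:
            # A gap (idle time) separates this request from the previous run.
            if current_end is not None:
                busy += current_end - current_start
            current_start, current_end = start, end
        else:
            # Overlapping or back-to-back request: extend the current busy run.
            current_end = max(current_end, end)
    if current_end is not None:
        busy += current_end - current_start
    return busy

# Made-up example: two overlapping requests, a long idle gap, then one more.
intervals = [(0.0, 2.0), (1.0, 3.0), (10.0, 11.0)]
busy = disk_busy_seconds(intervals)
wall_clock = max(end for _, end in intervals) - min(start for start, _ in intervals)
```

In this example the trace spans 11 seconds of wall-clock time, but the drive is only busy for 4 of them.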

194 Comments


bji - Tuesday, March 29, 2011 - link

I think that garbage collection refers to a process of deferring block erases to a later time to be done when the drive is otherwise idle. I.e., if you need to rewrite a block you don't re-write it in place, you write it to a block from the spare area that is already cleared thus saving yourself the time of having to erase the old block before rewriting it. You still mark the old block as needing to be cleared and put into the spare area (to replace the block that was taken out of the spare area during this process), and you do that later during 'garbage collection'.

There may also be some aspects to which individual blocks from an erase region (my understanding of the terminology is a bit off but I am pretty sure that flash memory can write to smaller regions than it can erase) are moved around during 'garbage collection' to consolidate them into single blocks; this takes blocks that are interspersed with dead area and collapses them down to a smaller fully populated region, then takes all of the now-free blocks and then erases them and puts them in the spare area.

Having TRIM makes both of these processes more efficient because it tells the drive that it can just mark blocks as ready-for-erase-and-put-into-the-spare-area immediately rather than having to be tracked and managed, and also increases the overall spare area available which means that more already-erased blocks are ready to be used for writes. Having to erase a block before writing it is the performance killer of SSDs and TRIM, along with intelligent algorithms listed above, in addition to things I haven't even thought of most likely, are what allow SSDs to get around the erase block performance penalty and to have such killer performance.
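A toy model can illustrate the behavior described in the comment above: pages are written out of place, whole blocks are erased, and TRIM lets the drive treat deleted data as stale so it does not have to be copied. Everything here (the `ToyFlash` class, its fields, the victim-selection rule) is a hypothetical simplification; it has no spare area and collects garbage on demand rather than during idle time, and it does not reflect any real controller's firmware.

```python
class ToyFlash:
    """Toy flash translation layer: pages are written, whole blocks are erased."""

    def __init__(self, num_blocks=4, pages_per_block=4):
        self.pages_per_block = pages_per_block
        # Each physical page is None (erased), ("valid", lba) or ("stale", lba).
        self.blocks = [[None] * pages_per_block for _ in range(num_blocks)]
        self.map = {}     # logical block address -> (block, page)
        self.erases = 0   # block erases performed
        self.copies = 0   # valid pages relocated during garbage collection

    def _free_page(self):
        for b, block in enumerate(self.blocks):
            for p, state in enumerate(block):
                if state is None:
                    return b, p
        return None

    def _invalidate(self, lba):
        if lba in self.map:
            b, p = self.map.pop(lba)
            self.blocks[b][p] = ("stale", lba)

    def garbage_collect(self):
        def stale_count(b):
            return sum(1 for s in self.blocks[b] if s and s[0] == "stale")

        # Victim: the block holding the most stale pages.
        victim = max(range(len(self.blocks)), key=stale_count)
        survivors = [s[1] for s in self.blocks[victim] if s and s[0] == "valid"]
        if len(survivors) == self.pages_per_block:
            raise RuntimeError("no stale pages to reclaim")
        # Erase the whole block, then write the still-valid pages back.
        self.blocks[victim] = [None] * self.pages_per_block
        self.erases += 1
        for p, lba in enumerate(survivors):
            self.blocks[victim][p] = ("valid", lba)
            self.map[lba] = (victim, p)
        self.copies += len(survivors)

    def write(self, lba):
        # Never rewrite in place: mark the old copy stale, use an erased page.
        self._invalidate(lba)
        slot = self._free_page()
        if slot is None:
            self.garbage_collect()
            slot = self._free_page()
        b, p = slot
        self.blocks[b][p] = ("valid", lba)
        self.map[lba] = (b, p)

    def trim(self, lba):
        # The host says this data is dead, so it never needs to be copied.
        self._invalidate(lba)


def relocations(use_trim):
    """Fill a tiny drive, 'delete' LBAs 0-3, then rewrite LBA 0."""
    flash = ToyFlash(num_blocks=2, pages_per_block=4)
    for lba in range(8):
        flash.write(lba)
    if use_trim:
        for lba in range(4):
            flash.trim(lba)
    flash.write(0)
    return flash.copies
```

In this model, `relocations(False)` copies three pages that the host already considers deleted, while `relocations(True)` copies none, because the trimmed block can simply be erased.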

randomlinh - Monday, March 28, 2011 - link

I was excited to see this back when it was "announced." I was hoping we'd be closer to $1/GB for the mainstream performance around now, but looks like I've still got to wait.

Hoping the 2011 round of controllers push intel to compete with pricing. I'm happy with the performance honestly, but need pricing.

Or maybe this will drive the x25-Ms down in price and I'll just RAID-0 a pair of 80GB's...

Ushio01 - Monday, March 28, 2011 - link

So all we get from Marvel and Intel are there old controllers working as they should of from the beginning with only Sandforce actually innovating, pathetic.

darckhart - Monday, March 28, 2011 - link

"should have been" maybe. but we all know that's not how business works. sell it, revise it, sell it, revise it, ad nauseum. in any case, know this: they are getting comparable SF-12xx performance WITHOUT realtime compression and dedup which is mighty impressive in my book.

darckhart - Monday, March 28, 2011 - link

oh i forgot to mention they're doing this on 25nm.

Vlad T. - Monday, March 28, 2011 - link

Planned obsolescence or built-in obsolescence.

http://en.wikipedia.org/wiki/Planned_obsolescence

That is even more obvious considering how Intel trashes G2 with the same controller.

thudo - Monday, March 28, 2011 - link

My gawd how the mighty have fallen? Doesn't remotely hold its own against the mighty Vertex 3 (SATA3). 120Gb Vertex 3 as now showing up in Canada for ~$290 -- a frick'n steal considering my boot drives have always been ~100-150Gb+ (all you need) and the performance increase is so well worth it. Shame Intel.. shame..

davepermen - Monday, March 28, 2011 - link

you know that intel has the 520 ssds, too? those are to fight vertex3.

not that i would ever consider ocz an ssd worth buying anyways, but lets not discuss that. anand loves them after he hated them. i still can't (as even after anand has forgiven them, they continue the same crap they did before).

so for a sata3 system, it's 520. for a sata2 system, the 320 is fine, actually nearly perfect.

sean.crees - Tuesday, March 29, 2011 - link

Say what you will of OCZ, at least they listen to their consumers, and attempt to make legitimate changes in their business practices to satisfy their existing customer base.

Intel has it's advantages, but appeasing it's current customers are not one of them. Ask the numerous amounts of people that jumped on Intel's 1st gen SSD bandwagon to then be shunned from TRIM support forever, which would require nothing but a firmware upgrade. 2x 80gb for $500 each, and no TRIM support. These things have slowed to almost HDD performance.

shatteredx - Monday, March 28, 2011 - link

Performance is fine, but Intel isn't pricing these drives cheaply enough.

Whatever happened to the prediction that 25nm drives would cost half as much as their 34nm siblings?