H67 – A Triumvirate of Tantalizing Technology

by Ian Cutress on March 27, 2011 6:25 PM EST

Posted in: Motherboards, Sandy Bridge, H67

BIOS

One handy tip for a Gigabyte board is to update to the latest BIOS, then on the next boot, press CTRL + F12. The board will then ask if you wish to swap BIOSes between the two BIOS chips. Select yes, let the board copy them over, then when back in the OS, update the BIOS again. That way, if unbootable settings are chosen and the board needs to use the recovery BIOS, it will be the same version as the one you had before.

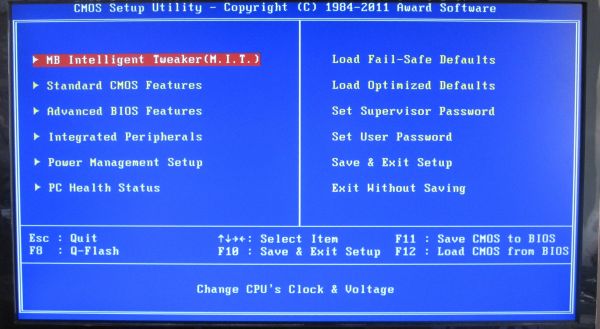

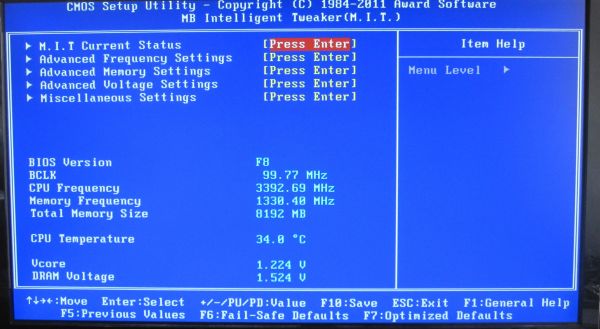

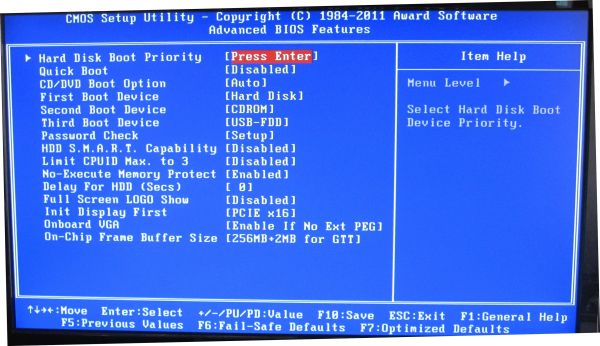

While not yet on the full graphical UEFI bandwagon, Gigabyte’s BIOS-like UEFI system is rock solid and simple to use. In the P67A-UD4 review, I did have a go at Gigabyte for not jumping on the graphical bandwagon, especially when P67 is where the majority of enthusiasts will be headed, but H67 is a bit of a different playing field.

The classic system splits the overclocking options into one menu (the MB Intelligent Tweaker), chipset options into another, boot options into another etc. It isn’t a flashy UEFI, but smile, Simple Makes It (a) Lot Easier.

Overclocking

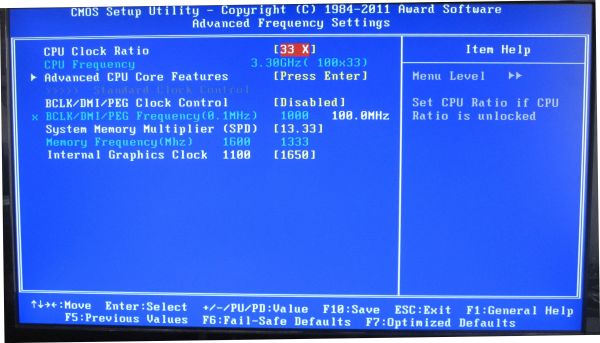

Because this motherboard has an adjustable BCLK option, I’m splitting the overclocking section into two this time around: one for the CPU and the other for the integrated GPU.

CPU Overclocking

The CPU overclock is straightforward – keep bumping up the BCLK until it’s unstable, then scale back a little. The board made instability clear by failing to boot into the OS. Take note of what raising the BCLK on a Sandy Bridge system actually does – as it raises the base clock of the whole system, everything is increased – CPU frequency, memory, PCIe lanes, SATA ports, USB ports etc. So here you really are limited by the weakest link. If you push the BCLK hard and raise the CPU voltage, you may be pushing something else a bit too hard, leading to failure (like the B2 stepping SATA 3 Gb/s ports).
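As a rough illustration of the bump-and-back-off approach, here is a minimal Python sketch. The function name `find_stable_bclk` and the `is_stable` callback are hypothetical: on real hardware "stable" means rebooting and testing by hand at each step, and the limit used in the usage line is made up for demonstration, not a measured result.

```python
from typing import Callable, Optional


def find_stable_bclk(start_mhz: float,
                     step_mhz: float,
                     max_mhz: float,
                     is_stable: Callable[[float], bool]) -> Optional[float]:
    """Raise BCLK in fixed steps and return the last setting that was stable.

    Returns None if even the starting value is unstable.
    """
    last_stable = None
    steps = 0
    while True:
        # Count steps as integers to avoid accumulating float error.
        bclk = round(start_mhz + steps * step_mhz, 3)
        if bclk > max_mhz or not is_stable(bclk):
            return last_stable
        last_stable = bclk
        steps += 1


# Stand-in for a manual boot test: pretend anything below 103.0 MHz boots.
print(find_stable_bclk(100.0, 0.5, 110.0, lambda bclk: bclk < 103.0))  # 102.5
```

The search stops at the first failure rather than probing higher values, which mirrors the cautious approach of backing off as soon as the system refuses to boot.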

With my overclock, I knew my chip was capable of 103.5 MHz BCLK (and the memory of 2133 MHz C8, thus plenty of headroom for memory from 1333 MHz C9), so I put that in straight away, adjusting no voltages. The system booted into the OS fine, so I went back and kept raising the BCLK by 0.5 MHz. Instability came in at 105.5 MHz, so I downclocked back to 105.0 MHz, where it was stable. The 105.0 MHz gives the CPU a base frequency of 33 x 105 MHz = 3.465 GHz, and technically a 5% rise across the board.
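The arithmetic behind those figures can be checked with a short Python sketch. It assumes a stock BCLK of 100 MHz and that clocks derived from BCLK scale linearly with it; the helper names are illustrative only.

```python
NOMINAL_BCLK_MHZ = 100.0  # assumed stock base clock


def cpu_clock_mhz(multiplier: int, bclk_mhz: float) -> float:
    """CPU frequency is the multiplier times the base clock."""
    return multiplier * bclk_mhz


def scaled_clock(nominal_mhz: float, bclk_mhz: float) -> float:
    """Scale any BCLK-derived clock linearly with the base clock."""
    return nominal_mhz * bclk_mhz / NOMINAL_BCLK_MHZ


bclk = 105.0
cpu = cpu_clock_mhz(33, bclk)                  # 33 x 105 MHz
gain = (bclk / NOMINAL_BCLK_MHZ - 1) * 100     # percentage rise over stock
memory = scaled_clock(1333, bclk)              # 1333 MHz memory setting

print(f"CPU: {cpu / 1000:.3f} GHz, rise: {gain:.1f}%, memory: {memory:.0f} MHz")
```

Under these assumptions the memory ends up at roughly 1400 MHz, well inside the 2133 MHz the kit is said to manage.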

In the 3D Movement Algorithm test, the OC scores were:

- Single Thread: 118.37, up 4.95% from 112.78

- Multi-Thread: 358.55, up 4.84% from 341.97

GPU Overclocking

One thing did disappoint me regarding GPU Overclocking on this board – there were no presets available to just select and go, like on the ASRock board. Perhaps we may get some when Gigabyte moves to a graphical UEFI.

However, based on previous GPU OC tests, I was aiming for an 1800 MHz overclock, and hoping to confirm what I saw on the ASRock H67 board, whereby a large overclock makes the integrated GPU scale back to a thermally more acceptable value. I started the OC at 1400 MHz, with no change to any of the voltages.

Up to 1600 MHz in 100 MHz jumps worked fine. At 1700 MHz, when running through Metro2033, the game crashed. In the BIOS you have two graphics voltage options – Graphics Core and Graphics DVID. The DVID option was only changeable when Graphics Core was set to ‘normal’, but I started by raising the Graphics Core voltage. After testing all the way from 1.1 V to 1.2 V (the ASRock reached 1700 MHz with only a +0.05V offset), I switched it to normal and left the Graphics DVID on Auto. In this configuration, Metro2033 completed, but Dirt2 did not. Even adjusting the Graphics DVID to a +0.1V offset didn’t change anything, so I reset them both back to auto and scaled back to 1650 MHz. At 1650 MHz, both games were stable at auto voltages.

In the 3D tests, the OC scores were:

- Metro2033: 21.8 FPS, up 23.86% from 17.6 FPS

- Dirt2: 28.1 FPS, up 6.97% from 26.27 FPS
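For reference, the percentage gains quoted above follow from the usual relative-change formula. A minimal Python sketch, with `percent_gain` as an illustrative helper name and the FPS figures taken from the list above:

```python
def percent_gain(before: float, after: float) -> float:
    """Relative improvement of `after` over `before`, in percent."""
    return (after - before) / before * 100.0


# (stock FPS, overclocked FPS) from the 3D tests
results = {
    "Metro2033": (17.6, 21.8),
    "Dirt2": (26.27, 28.1),
}

for game, (stock, overclocked) in results.items():
    print(f"{game}: up {percent_gain(stock, overclocked):.2f}%")
```

The two games respond very differently to the same GPU clock increase, which the formula makes easy to compare on a like-for-like basis.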

56 Comments


DominionSeraph - Monday, March 28, 2011

Did you know that the laptop that is a college student's constant campus accessory is.. get this.. a computer?

This isn't 1980. A laptop's a given either way.

Wilberwind - Sunday, March 27, 2011

oh noes...that Console vs. PC debate again...Consoles are great for playing with friends. I have both, but If you're using a PC for work and internet, why not just spend a little more and make it into a cheap gaming rig?

dingetje - Sunday, March 27, 2011

consoles are great...for retarded kids

silverblue - Monday, March 28, 2011

Just because someone chooses to play games on a console, doesn't make them retarded. You spent more money for a machine that will be utilised far less than theirs and doesn't lend itself as well to communal entertainment, but I'm not going to judge you or anyone else for whatever gaming option they've opted for.

silverblue - Monday, March 28, 2011

"utilised" i.e. the developers will generally program consoles to their strengths, whereas you have to hope the developers pay even half the attention to even one component in yours, be it CPU or GPU. Nothing's perfect, however for all the downsides of having a locked system, the ability to develop for only one or two permutations of hardware allows a studio to work at eking out every last amount of power from a supposedly limited machine.

Voldenuit - Sunday, March 27, 2011

Intel's hare-brained chipset segmentation strategies = failsauce.

Taft12 - Monday, March 28, 2011

... and don't forget -- preventing others from producing competing chipsets = monopolyabusesauce

mariush - Sunday, March 27, 2011

Page 8:

Along the bottom are a plethora of USB headers, but no fan headers. In fact, this board is somewhat lacking USB headers – there is one for the CPU, which is oddly south of the CPU socket, and another next to the SATA ports. Trying to fit a Corsair H50 required some deft placing of the cooler or a fan extension lead, and the second fan required a 3-pin to molex connector.

Surely you mean "this board is somewhat lacking FAN headers", or it doesn't really make sense

KaarlisK - Sunday, March 27, 2011

Does the power consumption at idle increase when overclocking the GPU?

If the overclock affects the turbo frequency, it should not change. If the overclock changes the base frequency, I have no idea.

Concillian - Monday, March 28, 2011

I really do not understand Intel's target with the H67.

H61 is for the budget person + single GPU

P67 is for the overclocker with plenty of money to donate on a motherboard almost $100 more expensive plus a CPU that has a price adder as well.

H67 is for the IGP overclocker? Wha?

The review is fine, but the products reviewed have no real target market in my mind. It's a marketing stunt that I'm surprised Anandtech didn't call them on by including an H61 motherboard here and pointing out that the real value, if there is one in this Intel generation, is H61.