AMD's Radeon HD 6990: The New Single Card King

by Ryan Smith on March 8, 2011 12:01 AM EST. Posted in: AMD, Radeon HD 6990, GPUs

Power, Temperature, and Noise: How Loud Can One Card Get?

Last but not least as always is our look at the power consumption, temperatures, and acoustics of the Radeon HD 6990 series. This is an area where AMD has traditionally had an advantage, as their small die strategy leads to less power hungry and cooler products compared to their direct NVIDIA counterparts. Dual-GPU cards like the 6990 tend to increase the benefits of lower power consumption, but heat and noise are always a wildcard.

AMD continues to use a single reference voltage for their cards, so the voltages we see here represent what we’ll see for all reference 6900 series cards. In this case voltage also plays a big part, as PowerTune’s TDP profile is calibrated around a specific voltage.

Radeon HD 6900 Series Voltage

| 6900 Series Idle | 6970 Load | 6990 Load |
|---|---|---|
| 0.9v | 1.175v | 1.12v |

The 6990 idles at the same 0.9v as the rest of the 6900 series. At load under default clocks it runs at 1.12v thanks to AMD’s chip binning, which is a big part of why the card uses as little power as it does for its performance. Overclocked to 880MHz, however, the core voltage goes to 1.175v, the same as the 6970. Power consumption and heat generation will shoot up accordingly, exacerbated by the fact that PowerTune is not in use here.
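As a rough illustration of why that voltage bump matters, dynamic power in CMOS logic scales approximately with voltage squared times clock frequency. The sketch below applies that rule of thumb to the voltages quoted here. The 830MHz stock clock is an assumption not stated in this section, the function name is ours, and leakage power is ignored, so treat the result as a ballpark rather than a measurement.

```python
def relative_dynamic_power(v_old, v_new, f_old_mhz, f_new_mhz):
    """Approximate dynamic power ratio using P ~ V^2 * f (leakage ignored)."""
    return (v_new / v_old) ** 2 * (f_new_mhz / f_old_mhz)

# 1.12v and 1.175v come from the text; the 830MHz stock clock is an assumption.
ratio = relative_dynamic_power(v_old=1.12, v_new=1.175,
                               f_old_mhz=830, f_new_mhz=880)
print(f"Estimated dynamic power increase: {(ratio - 1) * 100:.0f}%")
```

Under these assumptions the estimate lands somewhere between 15% and 20%, before accounting for the loss of PowerTune throttling.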

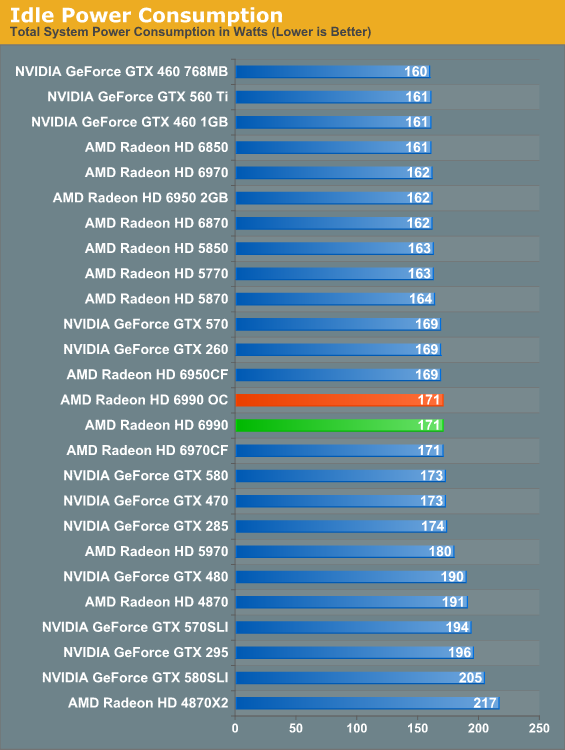

The 6990’s idle power is consistent with the rest of the 6900 series. At 171W it’s at parity with the 6970CF, while the 6990’s lower idle TDP shows up as a 9W advantage over the 5970.

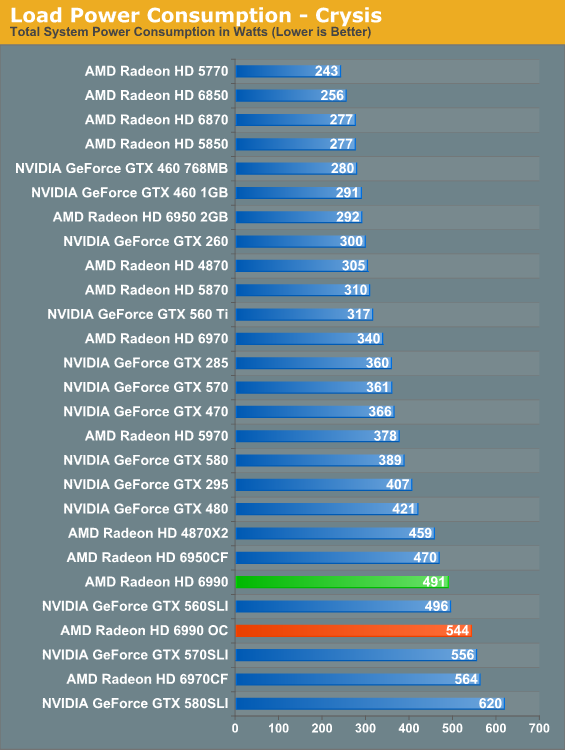

With the 6990, load power under Crysis gives us our first indication that TDP alone can’t be used to predict total power consumption. With a 375W TDP the 6990 should consume less power than 2x200W 6950CF, but in practice the 6950CF setup consumes 21W less. Part of this comes down to the greater CPU load the 6990 can create by allowing for higher framerates, but this doesn’t completely explain the disparity. Compared to the 5970 the 6990 is also much higher than the TDP alone would indicate; the gap of 113W exceeds the 75W TDP difference. Clearly the 6990 truly is a more power hungry card than the 5970.
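The arithmetic behind that conclusion can be laid out explicitly. The following is a minimal sketch using only the TDP figures and wall-power gaps quoted above; the variable and function names are ours, chosen for illustration.

```python
# TDP figures quoted in the text (watts).
TDP_6990 = 375
TDP_6950CF = 2 * 200

# Measured wall-power gaps from the text (6990 minus the other setup), in watts.
measured_gap_vs_6950cf = 21   # the 6950CF setup draws 21W less than the 6990
measured_gap_vs_5970 = 113
tdp_gap_vs_5970 = 75          # TDP difference quoted in the text

def tdp_prediction_error(tdp_gap, measured_gap):
    """How far the measured gap lands from what TDP alone would predict."""
    return measured_gap - tdp_gap

# On paper the 6990 should draw less than the 6950CF, so this gap is negative.
tdp_gap_vs_6950cf = TDP_6990 - TDP_6950CF

err_6950cf = tdp_prediction_error(tdp_gap_vs_6950cf, measured_gap_vs_6950cf)
err_5970 = tdp_prediction_error(tdp_gap_vs_5970, measured_gap_vs_5970)

print(f"vs 6950CF: TDP predicts {tdp_gap_vs_6950cf:+d}W, measured {measured_gap_vs_6950cf:+d}W")
print(f"vs 5970:   TDP predicts {tdp_gap_vs_5970:+d}W, measured {measured_gap_vs_5970:+d}W")
```

In both comparisons the 6990 draws more than its TDP rating alone would suggest, though part of the wall-power gap reflects the rest of the system rather than the card itself.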

Meanwhile overclocking does send the power consumption further up, this time to 544W. This is better than the 6970CF at the cost of some performance. Do keep in mind though that at this point we’re dissipating 400W+ off of a single card, which will have repercussions.

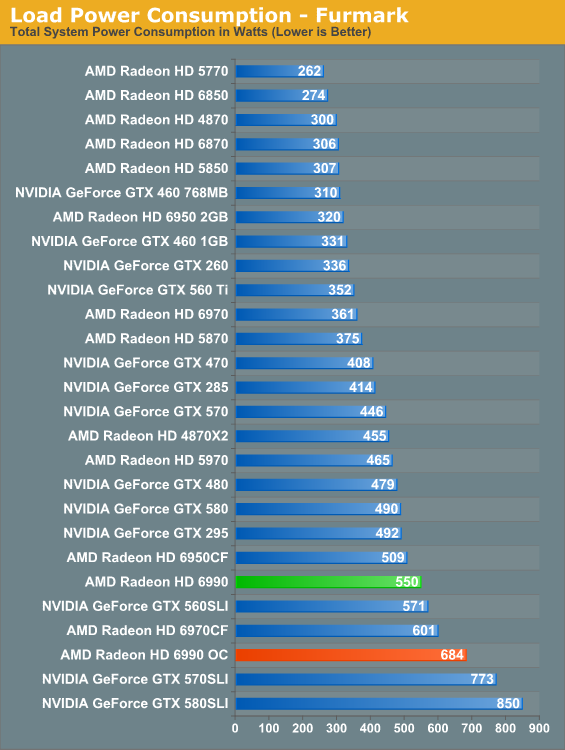

Under FurMark PowerTune limits become the defining factor for the 6900 series. Even with PT triggering on all three 6900 cards, the numbers have the 375W 6990 drawing more than the 2x200W 6950CF, this time by 41W, with the 6970CF in turn drawing 51W more. All things considered the 6990’s power consumption is in line with its performance relative to the other 6900 series cards.

As for our 6990OC, overclocked and without PowerTune we see what the 6990 is really capable of in terms of power consumption and heat. 684W is well above the 6970CF (which has PT intact), and is approaching the 570/580 in SLI. We don’t have the ability to measure the power consumption of solely the video card, but based on our data we’re confident the 6990 is pulling at least 500W – and this is one card with one fan dissipating all of that heat. Front and rear case ventilation starts looking really good at this point.
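For readers wondering how a card-only figure might be backed out of a wall measurement, here is one possible sketch. The PSU efficiency and the rest-of-system draw are hypothetical placeholders, not values we measured, and the function name is ours; only the 684W wall figure comes from the text.

```python
def estimate_card_power(wall_watts, psu_efficiency, rest_of_system_watts):
    """Estimate DC power drawn by the video card alone.

    wall_watts: power measured at the wall (AC side)
    psu_efficiency: fraction of wall power delivered as DC, in (0, 1]
    rest_of_system_watts: DC draw of everything except the card
    """
    if not 0 < psu_efficiency <= 1:
        raise ValueError("psu_efficiency must be in (0, 1]")
    dc_watts = wall_watts * psu_efficiency
    return dc_watts - rest_of_system_watts

# 684W is the FurMark wall figure from the text.
# The 0.85 efficiency and 75W rest-of-system draw are hypothetical.
card_watts = estimate_card_power(684, psu_efficiency=0.85,
                                 rest_of_system_watts=75)
print(f"Estimated card draw: {card_watts:.0f}W")
```

The estimate is only as good as the two assumed inputs, which is why the text settles for a lower bound rather than an exact number.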

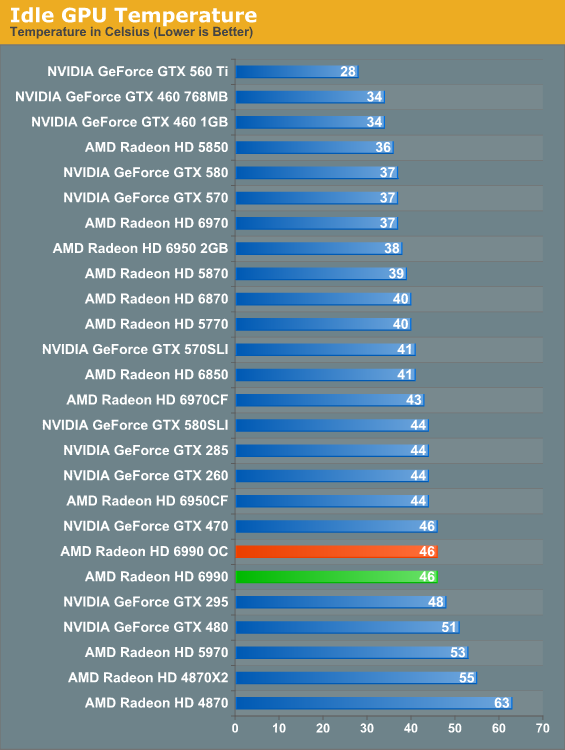

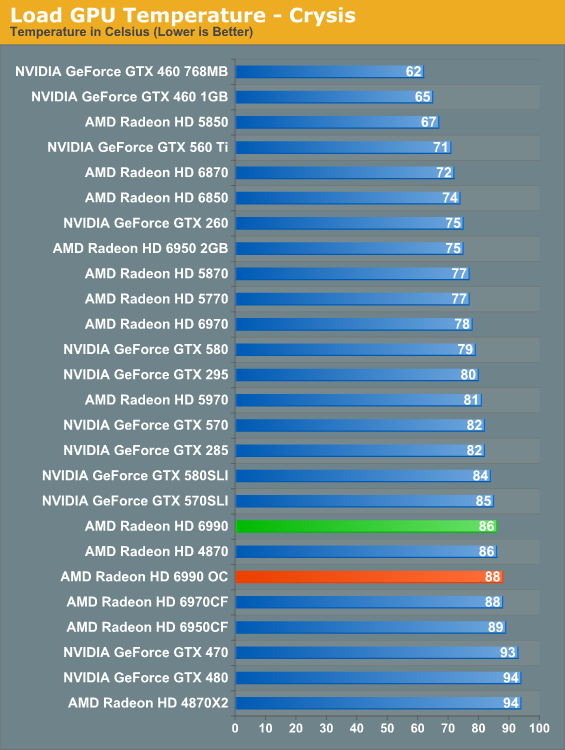

Along with the 6900 series’ improved idle TDP, AMD’s dual-exhaust cooler makes its mark on idle temperatures versus the 5970. At 46C the 6990 is warmer than our average card but not excessively so, and in the meantime it’s 7C cooler than the 5970 which has to contend with GPU2 being cooled with already heated air. A pair of 6900 cards in CF though is still going to beat the dual-exhaust cooler.

When the 5970 came out it was warmer than the 5870CF; the 6990 reverses this trend. At stock clocks the 6990 is a small but measurable 2C cooler than the 6970CF, which as a reminder we run in a “bad” CF configuration by having the cards directly next to each other. There is a noise tradeoff to discuss, but as far as temperatures are concerned these are perfectly reasonable. Even the 6990OC is only 2C warmer.

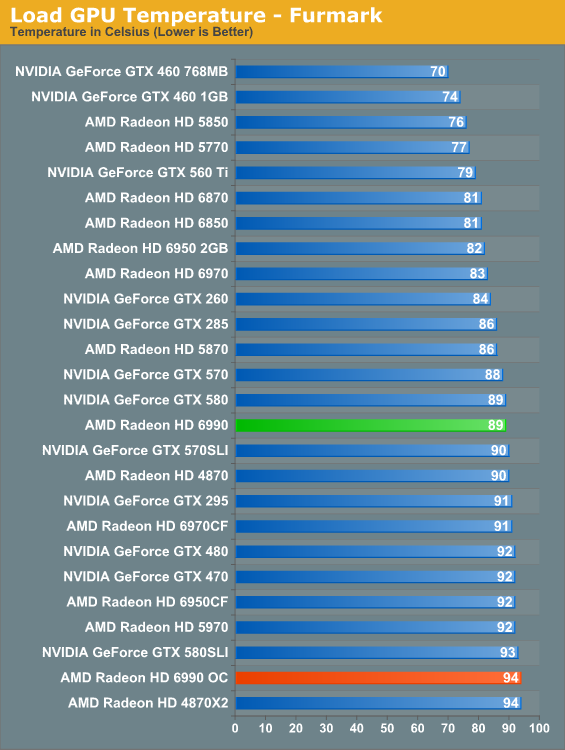

At stock clocks FurMark does not significantly change the picture. If anything it slightly improves things as PowerTune helps to keep the 6990 in the middle of the pack. Overclock however and the story changes. Without PowerTune to keep power consumption in check that 681W power consumption catches up to us in the form of 94C core temperatures. It’s only a 5C difference, but it’s as hot as we’re willing to let the 6990 get. Further overclocking on our test bed is out of the question.

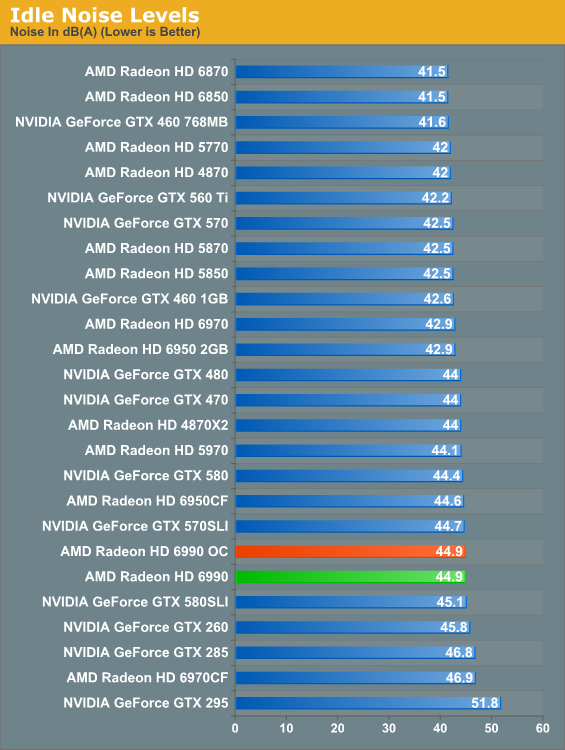

Finally there’s the matter of noise to contend with. At idle nothing is particularly surprising; the 6990 is an iota louder than the average card, presumably due to the dual-exhaust cooler.

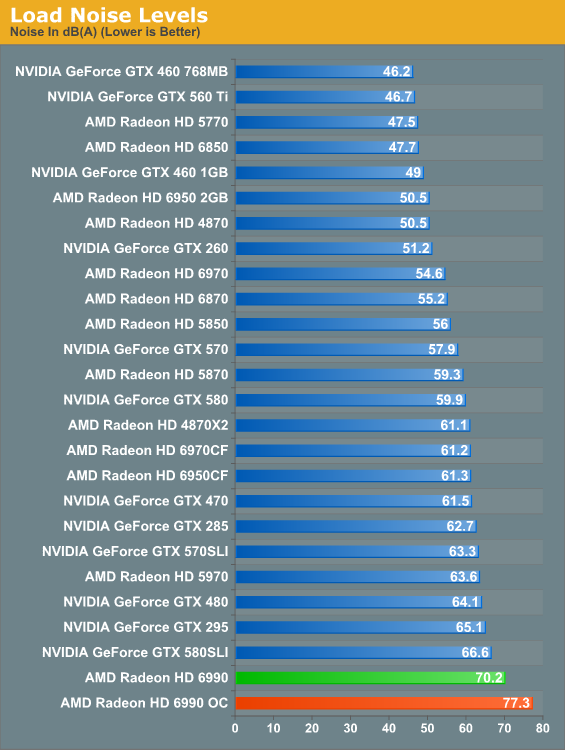

And here’s where it all catches up to us. The Radeon HD 5970 was a loud card, the GTX 580 SLI was even louder, but nothing tops the 6990. The laws of physics are a cruel master, and at some point all the smart engineering in the world won’t completely compensate for the fact that you need a lot of airflow to dissipate 375W of heat. There’s no way around the fact that the 6990 is an extremely loud card; and while games aren’t as bad as FurMark here, it’s still noticeably louder than everything else on a relative basis. Ideally the 6990 requires good airflow and good noise isolation, but the former makes the latter difficult to achieve. Water cooled 6990s will be worth their weight in gold.

130 Comments


Figaro56 - Tuesday, March 8, 2011 - link

2 HD 6970 Cards for $640? I don't think so! These cards are over $300 everywhere. I purchased 2 for $710 shipped and I thought that was a deal. Maybe reviews like yours here inflated the price and I purchased after the price adjustment. I have the same luck with gasoline on days I fill my tank.

ViRGE - Tuesday, March 8, 2011 - link

Looking at the Egg, there's 2 different 6970s at $320, which is probably where AT got $640 from. http://www.newegg.com/Product/Product.aspx?Item=N8...

Figaro56 - Tuesday, March 8, 2011 - link

All right, you got me there. I only buy XFX double lifetime warranty cards when I start spending this much on replacing my dual GPU solution.

I seem to manage to actually re-sell my used video cards when I can offer them to a buyer with a lifetime warranty. XFX double lifetime warranty is not a sales gimmick, it works. Heck, I would buy a used card if it had a lifetime warranty, it's kind of a no brainer given you actually want to buy that card in the first place.

Arbie - Tuesday, March 8, 2011 - link

Thanks for keeping the Crysis Warhead minimum FPS charts!! To me, Crysis/Warhead remains the defining game (and not only technically). I don't even look at the numbers on the other titles.

Also of prime importance to me are the idle power and, to a slightly lesser extent, idle noise.

Of course, like most people reading your review, I wouldn't be buying a 6990 even if it were silent. In fact, given that PC graphics requirements are apparently ramping down to console levels, I wonder how AMD/Nvidia are going to sell any significant number of cards above midrange. My HD 5770 will run everything at 1920x1200, though not always with all sliders maxed. However, I don't see much if any difference (in DX9) when I do enable 4xAA vs 2xAA etc. Certainly not enough to double the price of this $140 card.

A nit on the Crysis Warhead minimum fps chart for 1920x1200 Frost Bench - Gamer Quality - Enthusiast Shaders + 4xAA: Your Dec 10 chart shows 6970CF at 66.2 fps but this Mar 11 chart shows 66.6. Can you believe anyone would actually notice this, much less comment on it? We are too absorbed in this tech stuff (ain't it grand...).

strikeback03 - Tuesday, March 8, 2011 - link

They did say the new drivers made a slight difference, that seems likely to be one of the configurations they retested.

morphologia - Tuesday, March 8, 2011 - link

That isn't portrait orientation in the picture...it's landscape.

taltamir - Tuesday, March 8, 2011 - link

The card was measured at 77.3db in the article.

1. At what distance was it measured?

2. What is its db measurement 1 meter away?

taltamir - Tuesday, March 8, 2011 - link

I just looked it up, gold is worth 1430$/ounce right now.

I highly doubt a watercooled 6990 will weigh half an ounce.

ekrash - Tuesday, March 8, 2011 - link

The performance bottleneck is also seen in nvidia's dual gpu offerings. Dual GPU cards operating in X16 PCIe slots must have their data lanes divided between the gpu's, so they are effectively operating at X8 data rates, not at X16 data rates. Whereas single gpu cards will utilize all X16 express lanes, and even then the PCIexpress standard may soon be obsoleted. I hope we can look forward to Intel's fiber optic technology effectively replacing all data bus signalling with 10GB fiber optic bus and peripheral device signalling which can simultaneously and independently utilize all of the different data protocols used for inter-device and system bus communications. Imagine soon AMD and Nvidia will be producing video cards with fiber-optic data buses which may change requirements for power supplied to present day PCI express slots and may change the standards in power supply manufacturing to require that additional power connector to a video card since the 75 watt PCIe slot will be obsolete.

But ATI and Nvidia may also have to work with motherboard manufacturers to see if Intel's "Thunderbolt" fiber optic data buses can increase or freely throttle the video data bandwidth through its 10GB interface and would be tantamount to increasing data lanes from X16 to X32. It would be almost unlimited video bandwidth which far exceeds any bandwidth limitations vs availability that is needed today. Dual GPU's cannot promise the performance with the limitation of the PCIe X16 slot being divided to dual X8 channels, but it would be nice to see how they perform with unlimited bandwidth potential over a single 10GB fiber-optic. And that would change the battlefield between ATI-AMD and Nvidia.

My 4870 X2's (Can run Quadfire) still rocks on enthusiast settings in Crysis and Warhead without any hiccups and I've not seen a slowdown of any sort on any level in Crysis.

The price to performance ratio is declining and may affect my decision to purchase another dual GPU card, opting instead for single GPU card CF solutions that can utilize all X16 lanes by the GPU.

BTW I did notice the lack of DATA on Crysis @1920x1200 with full enthusiast settings, so that data is missing from this review. It's Gamer plus enthusiast shaders.....not full enthusiast. As above the 4870 X2 runs full enthusiast settings, not one setting is scaled back, and not one hiccup....just smooth play throughout on a single 28" display.

cmdrdredd - Tuesday, March 8, 2011 - link

Why are we still using Crysis Warhead at "Gamer Quality"????? With cards like these why not turn everything maxed in game and then fidget with AA and the like? I don't get it.