AMD's Radeon HD 6990: The New Single Card King

by Ryan Smith on March 8, 2011 12:01 AM EST- Posted in

- AMD

- Radeon HD 6990

- GPUs

Meet The 6990, Cont

Moving on from cooling, let’s discuss the rest of the card. From a power perspective, the 6990 is fed by two 8-pin PCIe sockets on top of the PCIe bus power. At 150W + 150W + 75W this adds up to the 375W limit of the card. As was the case on the 5970, any increase in power consumption will result in exceeding the specifications for PCIe external power, requiring a strong power supply to drive the card. 375W is in and of itself outside of the PCIe specification, and we’ll get to the importance of that in a bit. Meanwhile, as was the case on the 5970, at default clocks the GPUs on the 6990 are undervolted to help meet the TDP. AMD is running the 6990 GPUs at 1.12v here, binning Cayman chips to get the best GPUs needed to run at 830MHz at this lower voltage.
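As a quick check on the arithmetic, the power budget can be summed in a few lines. This is only an illustrative sketch: the wattages are the ones quoted in the text, while the dictionary keys and the function name are our own labels.

```python
# Power available to the 6990 from each source, in watts (figures from the text).
# The key names are illustrative labels, not official connector names.
POWER_SOURCES_W = {
    "pcie_8pin_a": 150,
    "pcie_8pin_b": 150,
    "pcie_slot": 75,
}


def total_board_power(sources):
    """Return the total power budget in watts across all power sources."""
    return sum(sources.values())


print(total_board_power(POWER_SOURCES_W))  # 375
```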

At the end of the day power has a great deal of impact on GPU performance, so in increasing the performance of the 6990 over the 5970 AMD has both raised the amount of power the card can draw at default settings (which is why we’re at 375W now) and changed how power is managed. By power management we’re of course referring to PowerTune, which was first introduced on the 6900 series back in December. By capping the power consumption of a card at a set value and throttling the card back if it exceeds it, AMD can increase GPU clocks without having to base their final clocks around the power consumption of outliers like FurMark. The hardest part of course is finding balance – set your clocks too high for a specific wattage and everything throttles, which is counterproductive and leads to inconsistent performance, but if clocks are too low you’re losing out on potential performance.

AMD Radeon HD 6990 PowerTune Throttling

| Game/Application | Throttled? |
|---|---|
| Crysis: Warhead | No |
| BattleForge | No |
| Metro | Yes (780MHz) |
| HAWX | No |
| Civilization V | No |
| Bad Company 2 | No |
| STALKER | No |
| DiRT 2 | No |
| Mass Effect 2 | No |
| Wolfenstein | No |
| 3DMark Vantage | Yes |
| MediaEspresso 6 | No |
| Unigine Heaven | No |
| FurMark | Yes (580MHz) |
| Distributed.net Client | Yes (770MHz) |

It’s the increase in power consumption and the simultaneous addition of PowerTune that has allowed AMD to increase GPU clocks by as much as they have over the 5970. Cayman as an architecture is faster than Cypress in the first place, but having a 105MHz core clock advantage really seals the deal. At default settings PowerTune appears to be configured nearly identically on the 6990 as it is on the 6970: FurMark heavily throttles, while Metro and the newly updated Distributed.net client experience slight throttling. The usual PowerTune configuration range of +/- 20% is available, allowing a card in its default configuration to be set between 300W and 450W for its PowerTune limit.
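To put numbers on the PowerTune figures, here is a small sketch that computes the +/- 20% limit range and how far each throttled clock sits below the 830MHz default. The function names are ours for illustration; the inputs are the 375W limit, the 830MHz default clock, and the throttled clocks from the table. It says nothing about how PowerTune itself decides when to throttle.

```python
DEFAULT_LIMIT_W = 375    # default PowerTune limit quoted in the text
DEFAULT_CLOCK_MHZ = 830  # default core clock quoted in the text

# Throttled clocks reported in the table (MHz).
THROTTLED_CLOCKS_MHZ = {
    "Metro": 780,
    "FurMark": 580,
    "Distributed.net Client": 770,
}


def powertune_range(default_limit_w, adjust_pct=20):
    """Return (low, high) PowerTune limits in watts for a +/- adjust_pct slider."""
    low = default_limit_w * (100 - adjust_pct) / 100
    high = default_limit_w * (100 + adjust_pct) / 100
    return low, high


def clock_reduction_pct(throttled_mhz, default_mhz=DEFAULT_CLOCK_MHZ):
    """Return how far a throttled clock is below the default, in percent."""
    return (default_mhz - throttled_mhz) / default_mhz * 100


print(powertune_range(DEFAULT_LIMIT_W))  # (300.0, 450.0)
for app, mhz in THROTTLED_CLOCKS_MHZ.items():
    print(f"{app}: {clock_reduction_pct(mhz):.1f}% below default")
```

On these figures FurMark runs roughly 30% below the default clock, while Metro and the Distributed.net client sit around 6% to 7% below it, which matches the "heavy" versus "slight" throttling described above.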

While we’re on the subject of PowerTune, there is one thing we were hoping to see that we did not get: dynamic limits based on CrossFire usage. This isn’t a complaint per se as much as it is a pie-in-the-sky idea. Perhaps the biggest downside to a dual-GPU card for performance purposes is that it can’t match a single high-end card in terms of clocks when only a single GPU is in use, as clocks are kept low to keep total dual-GPU power consumption down. One thing we’d like to see in the future is for GPU1 to be allowed to hit standard GPU clocks (e.g. 880MHz) when GPU2 is not in use, with PowerTune arbitrating over matters to keep total power consumption in check. This would allow cards like the 6990 to be as fast as high-end single-GPU cards in tasks that don’t benefit from CrossFire, such as windowed mode games, emulators, GPGPU applications, and games that don’t have a CF profile. We’re just thinking out loud here, but the potential is obvious.

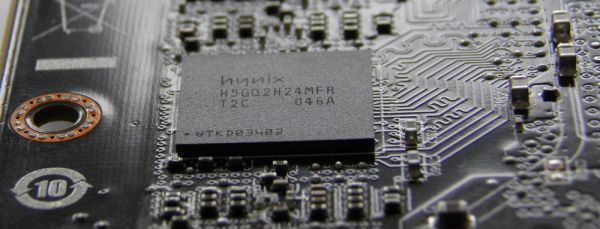

Moving on, as with the 5970 and 2GB 5870, the 6990 is outfitted with 16 RAM chips, 8 per GPU. Half are on the front of the PCB and the other half are on the rear. The card’s backplate provides protection and heat dissipation for the rear-mounted RAM. In one of the few differences from the 6970, the 6990 is using 5GHz GDDR5 instead of 6GHz GDDR5 – our specific sample is using 2Gb Hynix T2C modules. This means the 5GHz stock speed of the card already has the RAM running as fast as it’s spec’d for. Hynix’s datasheets note that 6GHz RAM is spec’d for 1.6v at 6GHz, versus 1.5v at 5GHz for 5GHz RAM. So the difference likely comes down to a few factors: keeping RAM power consumption down, keeping costs down, and any difficulties in running RAM above 5GHz on such a cramped design. In any case we don’t expect there to be much RAM overclocking headroom in this design.
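The memory layout works out as follows. This is a rough sketch that assumes all 16 chips are the 2Gb (gigabit) parts found on our sample; the constant and function names are our own.

```python
CHIPS_TOTAL = 16       # RAM chips on the card (from the text)
GPU_COUNT = 2          # GPUs sharing those chips evenly, 8 chips each
CHIP_DENSITY_GBIT = 2  # 2Gb per chip, as on our sample


def capacity_gbytes(chips, density_gbit=CHIP_DENSITY_GBIT):
    """Return capacity in gigabytes for a number of chips of a given gigabit density."""
    return chips * density_gbit / 8  # 8 bits per byte


total_gb = capacity_gbytes(CHIPS_TOTAL)
per_gpu_gb = capacity_gbytes(CHIPS_TOTAL // GPU_COUNT)
print(total_gb, per_gpu_gb)  # 4.0 2.0
```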

Finally, display connectivity has once again changed compared to both the 5970 and 6970. As Cayman GPUs can only drive 1 dual-link DVI monitor, AMD has dropped the 2nd SL-DVI port and HDMI port in favor of additional mini-DisplayPorts. While all Cayman GPUs (and Cypress/5800 before it) can drive up to 6 monitors, the only way to do so with 1 slot’s worth of display connectors is either through 6 mini-DP ports (ala Eyefinity-6), or through using still-unavailable MST hubs to split DP ports. The 6990 is a compromise in this design – an E6 design requires an expensive DP to DL-DVI adaptor to drive even 1 DL-DVI monitor, while a 5970-like design of 2x DVI + 1 mini-DP doesn’t allow 6 monitors in all cases even with MST hubs. The end result is 1 DL-DVI port for 2560x1600/2560x1440 legacy monitors, and 4 more mini-DP ports for newer monitors. This allows the 6990 to drive 5 monitors today, and all 6 monitors in the future when MST hubs do hit the market.

As with the 5870E6 cards, AMD is going to be stipulating that partners include adapters in order to bridge the DisplayPort adoption. All 6990s will come with 1 passive SL-DVI adapter (taking advantage of the 3rd TMDS transmitter on Cayman), 1 active SL-DVI adapter, and 1 passive HDMI adapter. Between the card’s on-board connectivity options and adapters it’s possible to drive just about any combination short of multiple DL-DVI monitors, including the popular 3 monitor 1080P Eyefinity configuration.

Active SL-DVI Adapter

With all of this said, the change in cooling design and power consumption/heat dissipation does require an additional level of attention towards making a system work, beyond even card length and power supply considerations. We’ve dealt with a number of high-end cards before that don’t fully exhaust their hot air, but nothing we’ve reviewed is quite like the 6990. Specifically nothing we’ve reviewed was a 12” card that explicitly shot out 185W+ of heat directly out of the rear of the card; most of the designs we see are much more open and basically drive air out at all angles.

The critical point is that the 6990 is dumping a lot of hot air into your case, and that it’s doing so a foot in front of the rear of the case. Whereas the 5970 was moderately forgiving about cooling if you had the space for it, the 6990 will not be. You will need a case with a lot of airflow and, particularly if you overclock the 6990, a case that doesn’t put anything of value directly behind the 6990.

To make a point, we quickly took the temperatures of a 500GB Seagate hard drive in our GPU test rig when placed in the drive cage directly behind the 6990 in PEG slot 1. As a result the 6990 is directly blowing on the hard drive. Note here that normally we have a low-speed 120mm fan directly behind PEG 1, which we have to remove to make room for the 5970 and 6990. All of these tests were run with Crysis in a loop – so they aren’t the highest possible values we could achieve.

Seagate 500GB Hard Drive Temperatures

| Video Card | Temperature |
|---|---|
| Radeon HD 6990 | 37C |
| Radeon HD 6990OC | 40C |
| Radeon 6970CF | 27C |
| Radeon HD 5970 | 31C |

At default clocks for the 6990 our hard drive temperature is 37C, while overclocked this reaches 40C. Meanwhile if we replace the 6990 with the 5970, this drops to 31C. Replace that with a pair of 6970s in CrossFire and our 120mm fan, and that drops even more to 27C. So the penalty for having a dual-exhaust card like the 6990 as far as our setup is concerned is 6C compared to a long directed card like the 5970, and 10C compared to a pair of shorter 6970s and an additional case fan. The ultimate purpose of this exercise is to illustrate how placing a hard drive (or any other component) behind the 6990 is a poor choice. As many cases do have hard drive bays around this location, you’d be best served putting your drives as far away from a 6990 as possible.
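The penalties quoted above fall straight out of the table. A minimal sketch, using the measured temperatures from our test rig and a helper function name of our own choosing:

```python
# Hard drive temperatures in degrees Celsius, as measured in our test rig.
HDD_TEMPS_C = {
    "Radeon HD 6990": 37,
    "Radeon HD 6990OC": 40,
    "Radeon 6970CF": 27,
    "Radeon HD 5970": 31,
}


def penalty_vs(baseline, card="Radeon HD 6990", temps=HDD_TEMPS_C):
    """Return how many degrees hotter the drive runs with `card` than with `baseline`."""
    return temps[card] - temps[baseline]


print(penalty_vs("Radeon HD 5970"))  # 6
print(penalty_vs("Radeon 6970CF"))   # 10
```

Passing `card="Radeon HD 6990OC"` gives the same comparison for the overclocked configuration.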

And while we haven’t been able to test this, as far as air overclocking is concerned the best step may be to take this one step further and turn the closest air intake into an exhaust. A number of cases keep an intake at the front of the case roughly in-line with PEG slot 1; turning this into an exhaust would much more effectively dissipate the heat that the 6990 is throwing into the case, and this may be what AMD was going for all along. Video cards that vent air out of the front and the rear of the case, anyone?

Ultimately the 6990 is a doozy, the likes of which we haven’t seen before. Between its greater power consumption and its dual-exhaust cooler, it requires a greater attention to cooling than any other dual-GPU card. Or to put this another way, it’s much more of a specialized card than the 5970 was.

130 Comments

iamezza - Tuesday, March 8, 2011 - link

This could make for an extremely valuable article for gamers on a budget. When does lack of PCIe bandwidth become an issue for running SLI/crossfire?

Testing 580SLI at 2 x 8 and 2 x 16 modes would be a good place to start....

therealnickdanger - Tuesday, March 8, 2011 - link

It will be curious to see what impact the bandwidth will have... then again, even with the restriction, the current Sandy Bridge systems still dominate the previous chips.

In reality, 16/16 or 8/8 really doesn't have much impact. The difference even at 2560x1600 with all the fixins in even the most demanding games is <1%. Unless AT's new test system will feature six displays and 4K+ resolutions, I'm not sure SNB-E is worth waiting so long for (yes, that could be perceived as a challenge!)

In any case, I'm looking forward to it! Thanks for the article!

shaggart5446 - Tuesday, March 8, 2011 - link

i hope u said the same thing when ur friend nvidia release their 590 card i also do hope u say the exact words that the 590 dont make any sence since a pair of 560 or 570 can give u the same performance as the 590 i cant wait to see ur article on the 590 ill be waiting for anand tfor this because we all know that the 590 are going to be down clock

ClownPuncher - Tuesday, March 8, 2011 - link

With cards designed specifically with multi monitor gaming in mind, you may want to include those resolutions. Buying this card for 1920x1200 would make zero sense.

7Enigma - Wednesday, March 9, 2011 - link

I think it was good to have both. The number of people buying this card will likely have 30" displays, but I'm sure some (competetive FPS for example) will want extremely fluid display even in busy scenes, as well as the person that doesn't yet have the cash to upgrade to a big screen but plans to in the near future.

I would also argue that there are likely vastly more people playing on large single-screen displays than eyefinity folks so this does make more sense. And honestly when some of the games are averaging in the sub 80-100 fps range, those minimum framerates approach questionable playability depending on type of game.

So basically as crazy as it is to say this, the graphical power isn't quite there yet to use Eyefinity at high detail settings in more recent and demanding games.

Nentor - Tuesday, March 8, 2011 - link

"With but a trio of exceptions, the 6990 doesn’t make sense compared to a pair of cards in Crossfire."

This product is not meant to make any sense from a financial, performance or even practical standpoint.

It IS the fastest videocard and that is that.

I was watching a video last night on youtube of a chainsaw powered by a Buick's V8 engine (hG5sTLY0-V8). It goes through a tree trunk in a blink of an eye, but it had to be lifted by TWO men.

Sure is cool though.

Squuiid - Sunday, March 13, 2011 - link

It makes complete sense if you want SLI in a small form factor, mATX and such. (as do I).

PCIe slots are at a premium, and so is space on a mATX board/case.

However, I think I'm going to wait and see what the 590 looks like...

Fhistleb - Tuesday, March 8, 2011 - link

I didn't even think that was possible. Though with what this is pushing out its a little expected I suppose.

stangflyer - Tuesday, March 8, 2011 - link

I would like to see the 6990 and 5970 comparison in crysis and metro at eyefinity and single monitor res but with the 5970 at default clocks and close to 5870 clocks. When I am playing these games I have my 5970 at 850 core and 1150 memory and it runs all day without any throttling.

The 5970 is handicapped at the default speeds as everyone can run at or real close to 5870 speeds. The core is easy at 850 but you may need to back down memory to 1150 or 1175.

Would love to see the true difference in the 5970 and 6990 this way.

The framebuffer will be the big difference at eyefinity res. with any aa applied.

stangflyer - Tuesday, March 8, 2011 - link

One thing I do like about the dual gpu amd cards is that I play a few games that use physx.. (I have a 5970) I have a 250gts in the second pcie slot. both my slots are 2x16. This way I have a powerfull gpu and physx! I play my games at 5040x1050 and a single card just don't cut it. I did use nvidia surround for 2 months but like my eyefinity setup better. To go crossfire and then have physx you need a motherboard that doesn't knock your pcie slot down to 8x with 3 cards which are few and expensive and also a case that has space for that 3rd card like a coolermaster haf 932X. I have a haf 932 (not X) and I could not go 3 cards unless the 3rd card is single slot.

On a side note as to why I am sticking with my 5970 till the 28nm show up is that I like the way the cooler is set up. With the fan on the end I have my 250gts below it with about a 3/8 inch below it. BUT the 250gts is only about 7.5-8 inches long and does not cover the fan at all because the fan is at the end. I have a 120mm fan at the bottom of my haf 932 case that blows straight up into the 5970 fan.

If I used a 6990 the 250gts would cover the 6990 fan.

My choices would be then to sell the 250gts and get a single slot card. (450gts probably)

I think I am just going to stay with what I have for now.

Maybe! LOL!