OCZ Vertex 3 Pro Preview: The First SF-2500 SSD

by Anand Lal Shimpi on February 17, 2011 3:01 AM EST

For the past six months I've been working on research and testing for the next major AnandTech SSD article. I figured I had enough time to line up its release with the first samples of the next generation of high-end SSDs. After all, it seemed like everyone was taking longer than expected to bring out their next-generation controllers. I should've known better.

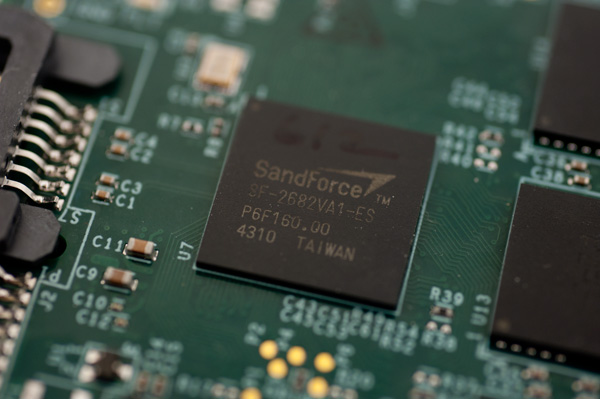

At CES this year we had functional next-generation SSDs based on Marvell and SandForce controllers. The latter was actually performing pretty close to expectations from final hardware. Although I was told that drives wouldn't be shipping until mid-Q2, it was clear that preview hardware was imminent. It was the timing that I couldn't predict.

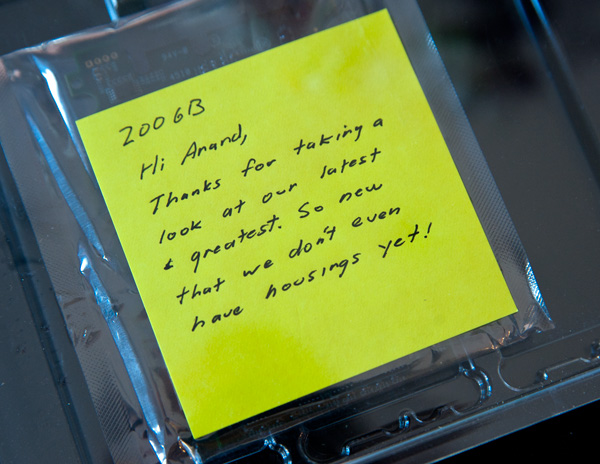

A week ago, two days before I hopped on a flight to Barcelona for MWC, a package arrived at my door. OCZ had sent me a preproduction version of their first SF-2500-based SSD: the Vertex 3 Pro. The sample was so early that it didn't even have a housing; all I got was a PCB and a note:

Two days isn't a lot of time to test an SSD. It's enough to get a good idea of overall performance, but not enough to find bugs and truly investigate behavior. Thankfully the final release of the drive is still at least one to two months away, so this article can serve as a preview.

The Architecture

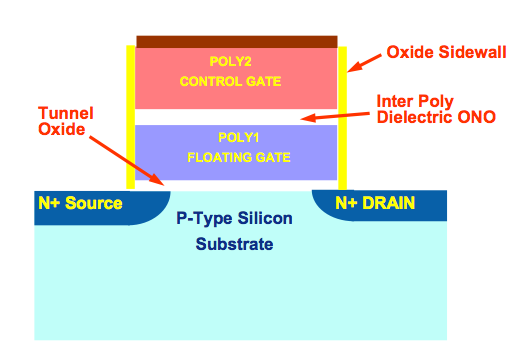

I've covered how NAND Flash works numerous times in the past, but I'll boil it all down to a few essentials.

NAND Flash is non-volatile memory: you can write to it and it'll store a charge even if you remove power from the device. Erase the NAND too many times and it will stop being able to hold a charge. There are two types of NAND that we deal with: single-level cell (SLC) and multi-level cell (MLC). Both are physically the same; you just store more data per cell in the latter, which drives costs, performance and reliability down. Two-bit MLC is what's currently used in consumer SSDs; the 3-bit stuff you've seen announced is only suitable for USB sticks, SD cards and other similar media.

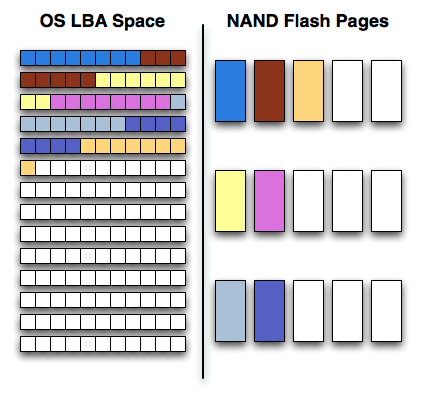

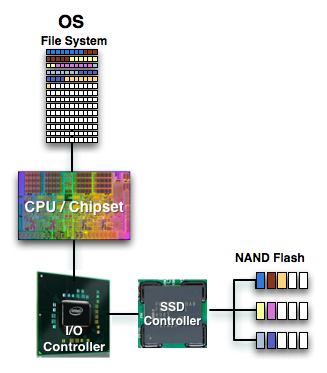

Writes to NAND happen at the page level (4KB or 8KB depending on the type of NAND), however you can't erase a single page. You can only erase groups of pages at a time in a structure called a block (usually 128 or 256 pages). Each cell in NAND can only be erased a finite number of times, so you want to avoid erasing as much as possible. The way you get around this is by keeping data in NAND as long as possible until you absolutely have to erase it to make room for new data.

SSD controllers have to balance the need to optimize performance with the need to write evenly to all NAND pages. Conventional controllers do this by keeping very large tables that track all data being written to the drive and optimize writes for performance and reliability. The controller will group small random writes together and attempt to turn them into large sequential writes that are easier to burst across all of the NAND devices. Smart controllers will even attempt to reorganize data while writing in order to keep performance high for future writes.

All of this requires the controller to keep track of lots of data, which in turn requires the use of large caches and DRAMs to make accessing that data quick. All of this work is done to ensure that the controller only writes data it absolutely needs to write.
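To make the page/block asymmetry concrete, here is a minimal Python sketch of a single NAND block. It is a toy model, not how any real controller works: the `Block` class, `rewrite_page_in_place` and the choice of 128 pages per block are all illustrative assumptions. It shows why a naive in-place update of one page costs a full block erase plus a reprogram of every live page, which is exactly the work a controller tries to avoid.

```python
from dataclasses import dataclass, field

PAGES_PER_BLOCK = 128  # assumption: the article cites 128 or 256 pages per block


@dataclass
class Block:
    """Toy NAND block: pages are programmed individually, erased all at once."""
    pages: list = field(default_factory=lambda: [None] * PAGES_PER_BLOCK)
    erase_count: int = 0

    def program(self, page_index, data):
        # A page can only be programmed while it is still in the erased state.
        if self.pages[page_index] is not None:
            raise ValueError("page already programmed; erase the whole block first")
        self.pages[page_index] = data

    def erase(self):
        # Erase is all-or-nothing and uses up one of the block's finite cycles.
        self.pages = [None] * PAGES_PER_BLOCK
        self.erase_count += 1


def rewrite_page_in_place(block, page_index, data):
    """Naive update: save live pages, erase the block, program everything back.

    Returns the number of pages physically programmed for one logical update.
    """
    live = {i: d for i, d in enumerate(block.pages) if d is not None}
    live[page_index] = data
    block.erase()
    for i, d in live.items():
        block.program(i, d)
    return len(live)


if __name__ == "__main__":
    block = Block()
    for i in range(PAGES_PER_BLOCK):
        block.program(i, b"old")
    programmed = rewrite_page_in_place(block, 5, b"new")
    print(programmed, block.erase_count)  # 128 pages programmed, 1 erase
```

In this model, changing one page of a full block means programming 128 pages and spending an erase cycle. Writing the new data to an already-erased page elsewhere and deferring the erase is the kind of bookkeeping that the large mapping tables described above exist to support.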

SandForce's approach has the same end goal, but takes a very different path to get there. Rather than trying to figure out what to do with the influx of data, SandForce's approach simply writes less data to the NAND. Using realtime compression and data deduplication techniques, SandForce's controllers attempt to reduce the size of what the host is writing to the drive. The host still thinks all of its data is being written to the drive, but once the writes hit the controller, the controller attempts to reduce the data as much as possible.

The compression/deduplication is done in realtime and what results is potentially awesome performance. Writing less data is certainly faster than writing everything. Similar technologies are employed by enterprise SAN solutions, but SandForce's algorithms are easily applicable to the consumer world. With the exception of large, highly compressed multimedia files (think videos, MP3s) most of what you write to your HDD/SSD is pretty easily compressible.

You don't get any extra space with SandForce's approach, the drive still has to accommodate the same number of LBAs as it advertises to the OS. After all, you could write purely random data to the drive, in which case it'd behave like a normal SSD without any of its superpowers.
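A rough way to see the effect is to compress two payloads of the same size, one repetitive and one random. The sketch below uses Python's standard `zlib` purely as a stand-in; SandForce's actual compression and deduplication algorithms aren't described here, and `bytes_written` is a hypothetical helper, not anything the controller exposes.

```python
import os
import zlib


def bytes_written(payload: bytes) -> int:
    """Estimate how many bytes a compressing controller would store.

    Falls back to the raw size when compression does not help, so the
    result never exceeds what the host wrote.
    """
    compressed = zlib.compress(payload)
    return min(len(compressed), len(payload))


# Repetitive, text-like data: compresses very well.
text_like = b"The quick brown fox jumps over the lazy dog. " * 1000
# Random data of the same size: effectively incompressible.
random_like = os.urandom(len(text_like))

if __name__ == "__main__":
    print("text-like:", len(text_like), "->", bytes_written(text_like))
    print("random:   ", len(random_like), "->", bytes_written(random_like))
```

The repetitive payload shrinks to a small fraction of its original size, while the random payload doesn't shrink at all. That is the "normal SSD without any of its superpowers" case.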

Since the drive isn't storing your data bit for bit but rather storing hashes, it's easier for SandForce to do things like encrypt all of the writes to the NAND (which it does by default). By writing less, SandForce also avoids having to use a large external DRAM; its designs don't have any DRAM cache. SandForce also claims that its write-less approach lets it use less reliable NAND. To ensure reliability, the controller actually writes some amount of redundant data: data is written across multiple NAND die in parallel along with additional parity data. The parity data occupies the space of a single NAND die. As a result, SandForce drives set aside more spare area than conventional controllers.
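The idea of striping data across die with one die's worth of parity can be sketched with simple XOR parity, the same trick RAID 5 uses. This is an assumption for illustration only: the article doesn't say which redundancy scheme SandForce uses, and `make_stripe` and `recover` are hypothetical names.

```python
from functools import reduce


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """XOR two equal-length byte strings."""
    return bytes(x ^ y for x, y in zip(a, b))


def make_stripe(chunks):
    """Return the data chunks plus one parity chunk (XOR of all data chunks).

    Each chunk stands in for what would be written to one NAND die.
    All chunks must have the same length.
    """
    parity = reduce(xor_bytes, chunks)
    return list(chunks) + [parity]


def recover(stripe, lost_index):
    """Rebuild the chunk at lost_index by XORing all surviving chunks."""
    survivors = [c for i, c in enumerate(stripe) if i != lost_index]
    return reduce(xor_bytes, survivors)


if __name__ == "__main__":
    stripe = make_stripe([b"die0data", b"die1data", b"die2data"])
    # Pretend die 1 failed and rebuild its contents from the others.
    print(recover(stripe, 1))  # b'die1data'
```

The cost is one extra chunk per stripe regardless of how many data chunks it protects, which matches the description of parity occupying the space of a single die.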

What's New

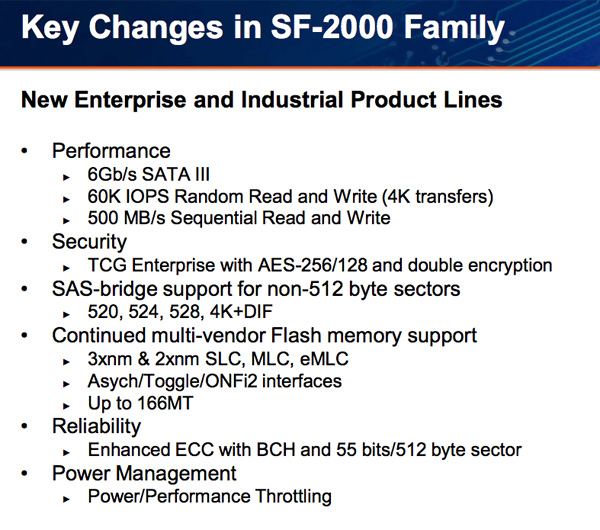

Everything I've described up to this point applies to both the previous generation (SF-1200/1500) and the new generation (SF-2200/2500) of SandForce controllers. Now let's go over what's new:

1) Toggle Mode & ONFI 2 NAND support. Higher bandwidth NAND interfaces mean we should see much better performance without any architectural changes.

2) To accommodate the higher bandwidth NAND, SandForce increased the size of on-chip memories and buffers and doubled the number of NAND die that can be active at one time. Finally there's native 6Gbps support to remove any interface bottlenecks. Both 1 & 2 will manifest as much higher read/write speed.

3) Better encryption. This is more of an enterprise feature but the SF-2000 controllers support AES-256 encryption across the drive (and double encryption to support different encryption keys for separate address ranges on the drive).

4) Better ECC. NAND densities and defect rates are going up, program/erase cycles are going down. The SF-2000 as a result has an improved ECC engine.

All of the other features that were present in the SF-1200/1500 are present in the SF-2000 series.

144 Comments


HangFire - Friday, February 18, 2011

As a software engineer, I can tell you that temp files are used over in-memory storage because the s/w was originally written that way, and no bug report concerning them will ever reach high priority status because it is ranked as a system configuration issue that can be fixed by the user.

In other words, inertia of the "good enough" file writing code (written when RAM was scarce) will prevent software from being re-written to more optimal in-memory usage. The long backlog of truly important bugs taking precedence ensures that.

You have a good point about ramdisks competing with disk caching. What is optimal depends on your application load, and to some extent your storage subsystem.

cdillon - Thursday, February 17, 2011

The idea of moving the page-file to a RAM disk makes my head hurt. That's just retarded. You'd do better to turn off paging entirely, but that's also of questionable benefit because paging isn't really that hard on your SSD.

Putting the temp directory on a RAM disk along with browser caches and other non-critical frequently-written data is not a bad idea as long as you don't over-do it. The only problem with putting the temp directory on a volatile software-based RAM drive is that any software installation that requires a reboot, and expects intermediate installer files in the temp directory to still be there afterwards, is going to fail.

Qapa - Saturday, February 19, 2011

Hi Anand,

I second this request :)

A few changes though:

- DISABLE page file

--- no matter if you have an SSD or HDD, Windows writes to the page file (even if only using 10% of RAM), so you decrease writes to disk, which does 2 things: increases the life of the disk and increases the speed of the system. Possibly both only marginally, but that's what benchmarks would show;

- browser caches

--- for sure this is one of the most wasteful sources of disk writes, and it should account for a growing share of writes since we are on the web more and more

- temporary folders

--- as someone else mentions, you could run into problems if you need an install-reboot-finish_install kind of installation

--- and I agree with the sw engineer - if it works it won't get changed, so programs will put stupid stuff in files just because that was the way they did it at some point in time

I think a 1-2GB RAM disk is more than enough for browser and temp files, considering an initial starting RAM size of 4-8GB. And yes, I do believe this improves system performance.

Can you do the benchmarks?

Thanks for the site - all reviews - and hope you can add this request as another review.

shawkie - Thursday, February 17, 2011

I notice that the Intel SSD 510 has just started to appear on some retailer websites. It looks like it is SATA 6Gbps and comes in 120GB and 250GB versions. Pricing looks pretty high at this point.

BansheeX - Thursday, February 17, 2011

Color me unexcited. SSD is fast and reliable enough for people to want it. The price per GB isn't coming down anywhere near as fast as other technologies. I paid $200 1.5 years ago for an 80GB SSD drive that goes for $180 today.

chrysrobyn - Friday, February 18, 2011

Maybe 80GB for $200 is good enough for you, but I need twice that capacity, and I'm unwilling to pay more than $200. The next generation of SSDs that are coming out between now and May are going to come far closer to that price point for me.

seapeople - Friday, February 18, 2011

The point is that 1.5 years ago the OP purchased an SSD for $2.5/GB which had anywhere from a 2x-30x performance improvement over its predecessor (HDs), and here we are in 2011 reading a review about the next generation SSD which uses smaller, cheaper flash with half the available write-cycle life which is going to sell for... $5/GB and get a 1.2x-3x performance improvement over its predecessor (initial SSDs).

What's next? A solid state drive that reads and writes at 2,000 GB/s and sells for $10,000 for the 1 TB model? Oh I can't wait for that.

ABR - Saturday, February 19, 2011

I have to agree. Year after year we see more and more mind-boggling performance improvements over regular HDDs, but little or no price drop. Perhaps the materials costs are just insurmountable and the replacement of HDDs won't be happening after all. SSDs will be like SLR digital cameras -- premium and professional use only, and pricing a previous generation of amateur users out of a market they used to be in.

FunBunny2 - Saturday, February 19, 2011

From what I see: with each feature size drop in the NAND, the controller has to get increasingly more byzantine, needs more cache, and so on just to maintain performance. Word is that IMFT 25nm includes an ECC engine on die!!!

Aernout - Saturday, February 19, 2011

Maybe we will hear more of the hybrid disks like the Momentus XT from Seagate in the future; for 'standard' users they can offer a lot.

Now they have 4 GB of flash with 500 GB, but it's 10 months old.

I think lots of people are hoping they will multiply those specs.

I'm thinking of getting one for my laptop, but on the other hand I am not sure if I will use 500 GB on my laptop; maybe I should buy a 64 GB SSD instead.