AMD's Radeon HD 6970 & Radeon HD 6950: Paving The Future For AMD

by Ryan Smith on December 15, 2010 12:01 AM EST

Cayman: The Last 32nm Castaway

With the launch of the Barts GPU and the 6800 series, we touched on the fact that AMD was counting on the 32nm process to give them a half-node shrink to carry them into 2011. When TSMC fell behind schedule on the 40nm process, and then on the 32nm process before canceling it outright, AMD had to start moving on plans for a new generation of 40nm products instead.

The 32nm predecessor of Barts was among the earlier projects to be sent to 40nm. This was due to the fact that before 32nm was even canceled, TSMC’s pricing was going to make 32nm more expensive per transistor than 40nm, a problem for a mid-range part where AMD has specific margins they’d like to hit. Had Barts been made on the 32nm process as projected, it would have been more expensive to make than on the 40nm process, even though the 32nm version would be smaller. Thus 32nm was uneconomical for gaming GPUs, and Barts was moved to the 40nm process.

Cayman, on the other hand, was going to be a high-end part. Certainly being uneconomical is undesirable, but high-end parts carry high margins, especially if they can be sold in the professional market as compute products (just ask NVIDIA). As such, while Barts went to 40nm, Cayman’s predecessor stayed on the 32nm process until the very end. The Cayman team did begin planning to move back to 40nm before TSMC officially canceled the 32nm process, but if AMD had had a choice at the time they would rather have had Cayman on the 32nm process.

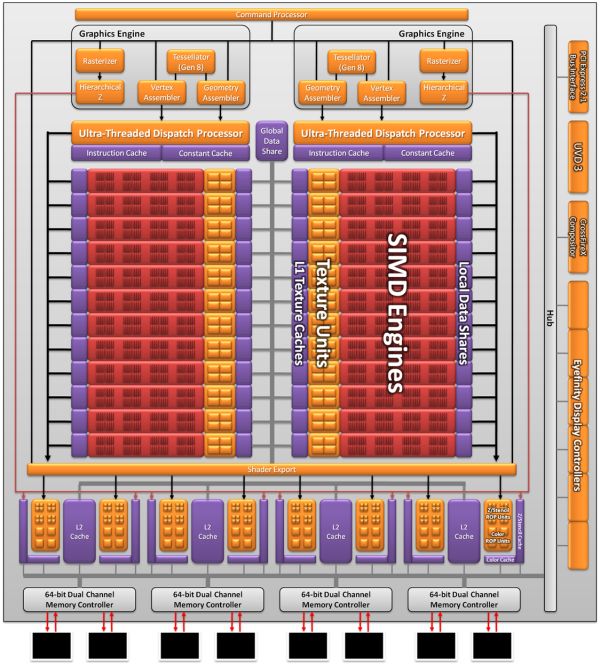

As a result the Cayman we’re seeing today is not what AMD originally envisioned as a 32nm part. AMD won’t tell us everything that they had to give up to create the 40nm Cayman (there have to be a few surprises for 28nm), but we do know a few things. First and foremost was size; AMD’s small die strategy is not dead, but getting the boot from the 32nm process does take the wind out of it. At 389mm² Cayman is the largest AMD GPU since the disastrous R600, and well above the sub-300mm² size that the small die strategy dictates. In terms of efficient use of space, though, AMD is doing quite well; Cayman has 2.64 billion transistors, 500 million more than Cypress. AMD was able to pack 23% more transistors into only 16% more space.
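The transistor and die-area comparison above can be checked with a few lines of arithmetic. This is a small sketch: Cayman's 389mm² and 2.64 billion transistors, and the roughly 500 million transistor gap to Cypress, come from the text, while Cypress's 334mm² die size is an outside figure supplied here for the calculation.

```python
# Figures from the text: Cayman is 389 mm^2 with 2.64 billion transistors,
# roughly 500 million more than Cypress. Cypress's 334 mm^2 die size is an
# outside figure supplied for this calculation, not taken from the text.
CAYMAN_TRANSISTORS = 2.64e9
CAYMAN_AREA_MM2 = 389.0
CYPRESS_TRANSISTORS = CAYMAN_TRANSISTORS - 0.5e9  # about 2.14 billion
CYPRESS_AREA_MM2 = 334.0


def pct_increase(new: float, old: float) -> float:
    """Percentage growth going from old to new."""
    return (new / old - 1.0) * 100.0


transistor_growth = pct_increase(CAYMAN_TRANSISTORS, CYPRESS_TRANSISTORS)
area_growth = pct_increase(CAYMAN_AREA_MM2, CYPRESS_AREA_MM2)
# Transistors per mm^2: both chips are on TSMC's 40nm process.
density_growth = pct_increase(CAYMAN_TRANSISTORS / CAYMAN_AREA_MM2,
                              CYPRESS_TRANSISTORS / CYPRESS_AREA_MM2)

print(f"transistors: +{transistor_growth:.0f}%")  # +23%
print(f"die area:    +{area_growth:.0f}%")        # +16%
print(f"density:     +{density_growth:.0f}%")     # +6%
```

Because transistor count grew faster than die area on the same process, Cayman ends up roughly 6% denser than Cypress, which is the sense in which AMD is using its space efficiently.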

Even then, just reaching that die size is a compromise between features and production costs. AMD didn’t simply settle for a larger GPU; they also had to give up some things to keep it from being even larger. SIMDs were on the chopping block; a 32nm Cayman would have had more SIMDs for more performance. Features were also lost, and this is where AMD is keeping mum. We know PCI Express 3.0 functionality was scheduled for the 32nm part, but AMD had to trade its PCIe 3.0 controller for a smaller PCIe 2.1 controller to offset the difference in die size. In all honesty this may have worked out better for them: PCIe 3.0 ended up being delayed until November, so suitable motherboards are still at least months away.

The end result is that Cayman as we know it is a compromise made to bring it to 40nm. AMD got their new VLIW4 architecture, but they had to give up performance and an unknown number of features to get there. On the flip side this will make 28nm all the more interesting, as we’ll get to see many of the features that were supposed to make it for 2010 but never arrived.

168 Comments


Ryan Smith - Wednesday, December 15, 2010

AMD rarely has Linux drivers ready for the press ahead of a launch. This is one such occasion.

MeanBruce - Wednesday, December 15, 2010

Great job on the review, Ryan. I hope you will cover the upcoming Nvidia 560 and 550 when they arrive. Peace, brother!

gescom - Wednesday, December 15, 2010

Please, Anand, make an update with the new 10.12 driver. Great review, btw.

knowom - Wednesday, December 15, 2010

Until you take into consideration:

1) Driver support
2) CUDA
3) PhysX

I also prefer lower idle noise with higher load noise over the reverse, as with ATI, because when you're gaming you usually have your sound turned up a lot; it's when you aren't gaming that noise becomes more of an issue if you're seeking a quieter system.

It's a better trade-off in my view, but the two are pretty even in idle and load noise regardless, and a far cry from quiet compared to other solutions from both vendors, if that's what you're worried about. Not to mention that non-reference cooler designs affect that situation by leaps and bounds.

Acanthus - Wednesday, December 15, 2010

AMD has been updating drivers more aggressively than Nvidia lately (in the last year).

Anecdotally, my GTX 285 has had a lot more game issues than my 4890, specifically in NWN2 and Civ5.

CUDA is irrelevant unless you are doing heavy 1) Photoshop work or 2) video encoding.

PhysX is still a crappy gimmick at this point and needs to offer real visual improvements without a 40%+ performance hit.

smookyolo - Wednesday, December 15, 2010

PhysX may be a gimmick in games, but it's one of the better ones. Also, guess what... it's being used all over the 3D animation industry.

And guess where the real money comes from? The industry.

fausto412 - Wednesday, December 15, 2010

PhysX is a gimmick that has been around for some time and will never take hold. When PhysX came around it set a new standard, but since then developers have more commonly adopted Havok, since it doesn't require extra hardware. It's all marketing and not a worthy decision point when buying a new card.

jackstar7 - Wednesday, December 15, 2010

Alternatively, my triple-monitor setup makes AMD the obvious choice.

beepboy - Wednesday, December 15, 2010

Agreed on the triple-monitor setup. You can make the argument that 2x 460s are cheaper and net better performance, but at the end of the day 2x 460s will be louder, use more power, produce more heat, etc. than a single 69xx. I just want my triple-monitor setup, damn it.

codedivine - Wednesday, December 15, 2010

Any info on cache sizes and register files?