AMD's Radeon HD 6970 & Radeon HD 6950: Paving The Future For AMD

by Ryan Smith on December 15, 2010 12:01 AM EST

Crysis: Warhead

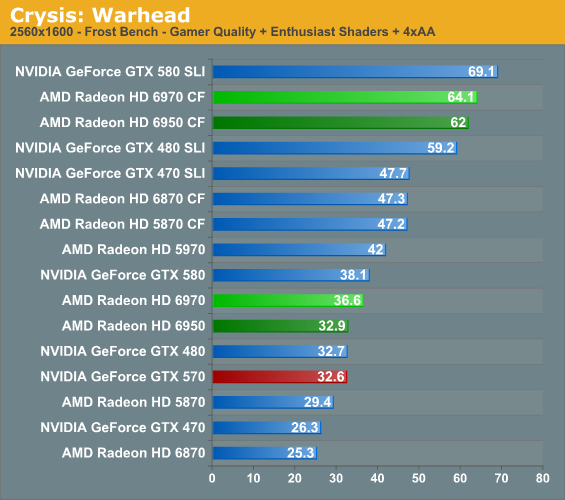

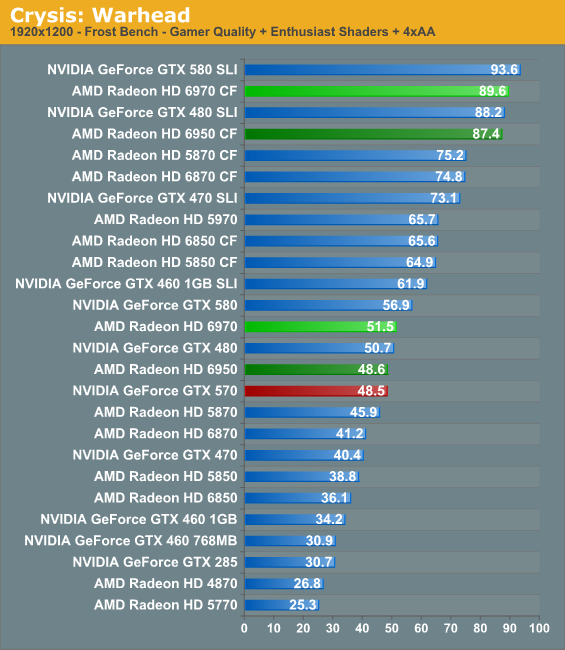

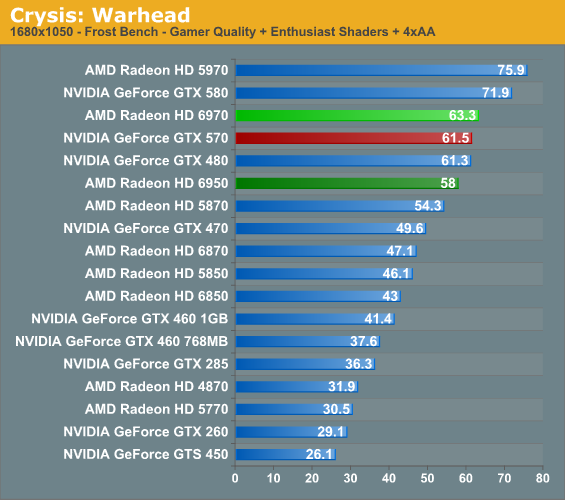

Kicking things off as always is Crysis: Warhead, still one of the toughest games in our benchmark suite. Even 2 years after the release of the original Crysis, “but can it run Crysis?” is still an important question, and the answer continues to be “no.” While we’re closer than ever, full Enthusiast settings at a playable framerate are still beyond the grasp of a single card.

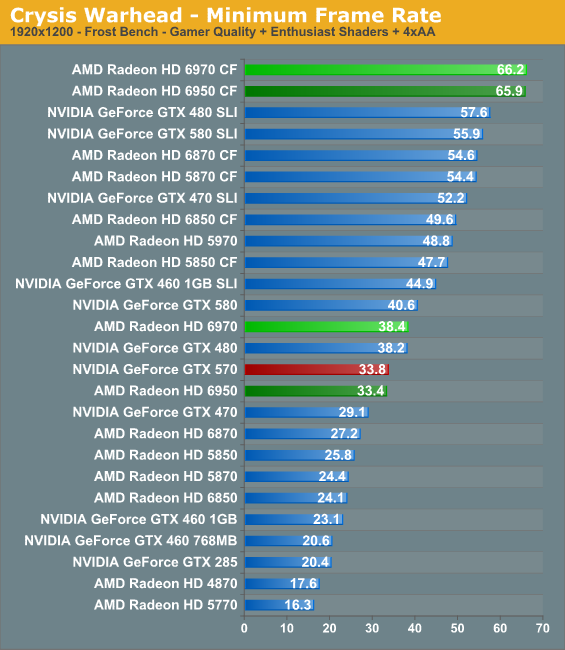

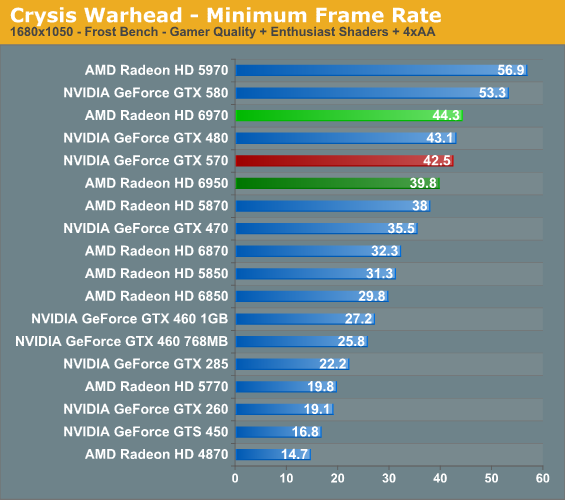

Crysis starts things off well for AMD. Keeping an eye on 2560 and 1920, not only does the 6970 start things off with a slight lead over NVIDIA’s GTX 570, but even the cheaper 6950 holds parity. In the case of the 6900 series it also hits a special milestone at 2560, being the first AMD single-GPU cards to surpass 30fps. This also gives us our first inkling of 6950 performance relative to 5870 performance – as expected the 6950 is faster, but at 5-10% not fantastically so. Crysis does push in excess of 2mil polygons/frame, but the 6900 series’ improvements are best suited for when tessellation is in use.

Meanwhile our CrossFire setups are unusually close, with barely 2fps separating the 6970CF and 6950CF. It’s unlikely we’re CPU limited at 2560, so we may be looking at being ROP-limited, as the ROPs are the only constant between the two cards.

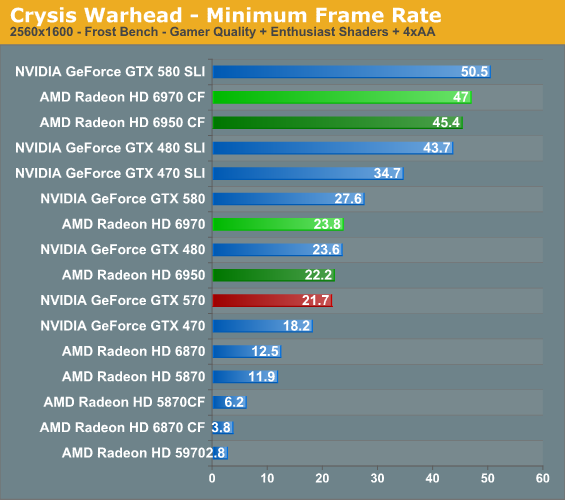

With 2GB of RAM our AMD cards finally break out of the minimum framerate crash Crysis experiences with 1GB AMD cards. Our rankings are similar to our averages, with the 6970 taking a small lead while the 6950 holds close to the 570.

168 Comments


mac2j - Wednesday, December 15, 2010 - link

Um - if you have the money for a 580 ... pick up another $80-100 and get 2 x 6950 - you'll get nearly the best possible performance on the market at a similar cost.

Also I agree that Nvidia will push the 580 price down as much as possible... the problem is that if you believe all of the admittedly "unofficial" breakdowns ... it costs Nvidia 1.5-2x as much to make a 580 as it costs AMD to make a 6970.

So it's hard to be sure how far Nvidia can push down the price on the 580 before it ceases to be profitable - my guess is they'll focus on making a 565 type card which has almost 570 performance but for a manufacturing cost closer to what a 460 runs them.

fausto412 - Wednesday, December 15, 2010 - link

yeah. AMD let us down on this here product. We see what gtx580 is and what 6970 is...i would say if you're planning to spend 500...the gtx580 is worth it.

truepurple - Wednesday, December 15, 2010 - link

"support for color correction in linear space"

What does that mean?

Ryan Smith - Wednesday, December 15, 2010 - link

There are two common ways to represent color, linear and gamma.

Linear: Used for rendering an image. More generally linear has a simple, fixed relationship between X and Y, such that if you drew the relationship it would be a straight line. A linear system is easy to work with because of the simple relationship.

Gamma: Used for final display purposes. It's a non-linear colorspace that was originally used because CRTs are inherently non-linear devices. If you drew out the relationship, it would be a curved line. The 5000 series is unable to apply color correction in linear space and has to apply it in gamma space, which for the purposes of color correction is not as accurate.
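To make the difference concrete, here is a minimal Python sketch; it is not taken from AMD's hardware or drivers. It assumes a simple power-law gamma of 2.2 (real displays usually follow the piecewise sRGB curve instead), and all function names are made up for illustration. It applies the same 0.5 gain to a mid-gray value twice: once directly on the gamma-encoded number, and once after converting to linear light.

```python
GAMMA = 2.2  # assumed display gamma; a simplification of the real sRGB curve


def gamma_to_linear(value: float, gamma: float = GAMMA) -> float:
    """Decode a gamma-encoded value in [0, 1] to linear light."""
    return value ** gamma


def linear_to_gamma(value: float, gamma: float = GAMMA) -> float:
    """Encode a linear-light value in [0, 1] for display."""
    return value ** (1.0 / gamma)


def scale_in_gamma_space(encoded: float, gain: float) -> float:
    """Apply the gain directly to the encoded value (the less accurate path)."""
    return min(1.0, encoded * gain)


def scale_in_linear_space(encoded: float, gain: float) -> float:
    """Decode, apply the gain to linear light, then re-encode for display."""
    linear = gamma_to_linear(encoded)
    return linear_to_gamma(min(1.0, linear * gain))


if __name__ == "__main__":
    mid_gray = 0.5  # gamma-encoded input value
    gain = 0.5      # hypothetical correction: halve the light output
    print("gamma space: ", scale_in_gamma_space(mid_gray, gain))
    print("linear space:", scale_in_linear_space(mid_gray, gain))
```

Under these assumptions the two paths disagree: the gamma-space result is 0.25, while the linear-space result is roughly 0.36. Only the linear path actually halves the amount of light, which is why correction done in gamma space is less accurate.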

IceDread - Wednesday, December 15, 2010 - link

Yet again we do not get to see hd 5970 in crossfire despite it being a single card! Is this an nvidia site?

Anyway, for those of you who do want to see those results, here is a link to a professional Swedish site!

http://www.sweclockers.com/recension/13175-amd-rad...

Maybe there is some google translation available or so if you want to understand more than the charts show.

medi01 - Wednesday, December 15, 2010 - link

Wow, 5970 in crossfire consumes less than 580 in SLI.

http://www.sweclockers.com/recension/13175-amd-rad...

ggathagan - Wednesday, December 15, 2010 - link

Absolutely!!!

There's no way on God's green earth that Anandtech doesn't currently have a pair of 5970's on hand, so that MUST be the reason.

I'll go talk to Anand and Ryan right now!!!!

Oh, wait, they're on a conference call with Huang Jen-Hsun.....

I'd like to note that I do not believe Anandtech ever did a test of two 5970's, so it's somewhat difficult to supply non-existent into any review.

Ryan did a single card test in November 2009.

That is the only review I've found of any 5970's on the site.

vectorm12 - Wednesday, December 15, 2010 - link

I was not aware of the fact that the 32nm process had been canned completely and was still expecting the 6970 to blow the 580 out of the water.

Although we can't possibly know and are unlikely to ever find out what cayman at 32nm would have performed like, I suspect AMD had to give up a good chunk of performance to fit it on the 389mm^2 40nm die.

This really makes my choice easy as I'll pick up another cheap 5870 and run my system in CF.

I think I'll be able to live with the performance until the refreshed cayman/next gen GPUs are ready for prime time.

Ryan: I'd really like to see what ighashgpu can do with the new 6970 cards though. Although you produce a few GPGPU charts I feel like none of them really represent the real "number-crunching" performance of the 6970/6950.

Ivan has already posted his analysis in his blog and it seems like the change from VLIW5 to VLIW4 made a negligible impact at the most. However I'd really love to see ighashgpu included in future GPU tests to test new GPUs and architectures.

Thanks for the site and keep up the work guys!

slagar - Wednesday, December 15, 2010 - link

Gaming seems to be in the process of bursting its own bubble. Graphics of games isn't keeping up with the hardware (unless you count gaming on 6 monitors) because most developers are still targeting consoles with much older technology.

Consoles won't upgrade for a few more years, and even then, I'm wondering how far we are from "the final console generation". Visual improvements in graphics are becoming quite incremental, so it's harder to "wow" consumers into buying your product, and the costs for developers are increasing, so it's becoming harder for developers to meet these standards. Tools will always improve and make things easier and more streamlined over time I suppose, but still... it's going to be an interesting decade ahead of us :)

darckhart - Wednesday, December 15, 2010 - link

that's not entirely true. the hardware now allows not only insanely high resolutions, but it also lets those of us with more stringent IQ requirements (large custom texture mods, SSAA modes, etc) run at acceptable framerates at high res in intense action spots.