AMD's Radeon HD 6970 & Radeon HD 6950: Paving The Future For AMD

by Ryan Smith on December 15, 2010 12:01 AM EST

Redefining TDP With PowerTune

One of our fundamental benchmarks is FurMark, oZone3D’s handy GPU load testing tool. The furry donut can generate a workload in excess of anything any game or GPGPU application can do, giving us an excellent way to establish a worst case scenario for power usage, GPU temperatures, and cooler noise. The fact that it is worse than any game or application has ruffled both AMD’s and NVIDIA’s feathers however, as it’s been known to kill older cards and otherwise make their lives more difficult, leading the two companies to label the program a “power virus”.

FurMark is just one symptom of a larger issue however, and that’s TDP. Compared to their CPU counterparts at only 140W, video cards are power monsters. The PCIe specification allows for cards to draw up to 300W, and we quite regularly surpass that when FurMark is in use. Things get even dicier on laptops and all-in-one computers, where compact spaces and small batteries limit how much power a GPU can draw and how much heat can effectively be dissipated. For these reasons products need to be designed to meet a certain TDP; in the case of desktop cards we saw products such as the Radeon HD 5970, which shipped with sub-5870 clocks to meet the 300W TDP (with easy overvolting controls to make up for it), and in laptop parts we routinely see products with many disabled functional units and low clocks to meet those particularly low TDP requirements.

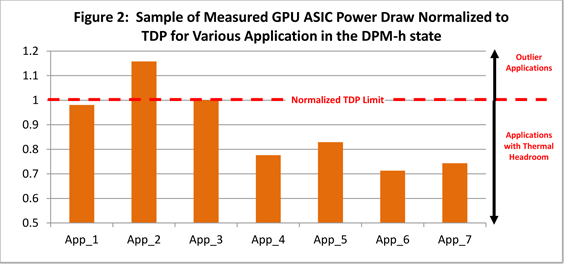

Although we see both AMD and NVIDIA surpass their official TDP on FurMark, it’s never by very much. After all, TDP defines the thermal limits of a system, so regularly surpassing those limits can overwhelm the cooling and ultimately risk system damage. It’s because of FurMark and other scenarios that AMD claims that they have to set their products’ performance lower than they’d like. Call of Duty, Crysis, The Sims 3, and other games aren’t necessarily causing video cards to draw power in excess of their TDP; it’s the need to cover edge cases like FurMark that holds performance back. As a result AMD has to plan around applications and games that cause a high level of power draw, setting their performance levels low enough that these edge cases don’t lead to the GPU regularly surpassing its TDP.

This ultimately leads to a concept similar to dynamic range, defined by Wikipedia as: “the ratio between the largest and smallest possible values of a changeable quantity.” We typically use dynamic range when talking about audio and video, referring to the range between quiet and loud sounds, and dark and light imagery respectively. However, power draw is quite similar in concept, with a variety of games and applications leading to a variety of loads on the GPU. Furthermore, while dynamic range is generally a good thing for audio and video, it’s generally a bad thing for desktop GPU usage – low power utilization on a GPU-bound game means that there’s plenty of headroom for bumping up clocks and voltages to improve the performance of that game. Going back to our earlier example however, a GPU’s clocks and voltages can’t be set this high under normal conditions, otherwise FurMark and similar applications will push the GPU well past TDP.
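To put rough numbers on the idea, here is a minimal Python sketch. The wattages, the workload names, and the 250W TDP are illustrative assumptions rather than measurements from our testing; the point is only to show how the ratio and the per-application headroom fall out.

```python
# Hypothetical board power draw (watts) for a few workloads at one fixed clock.
# These figures are illustrative assumptions, not measured results.
power_draw_w = {
    "light_game": 150.0,
    "heavy_game": 200.0,
    "furmark": 260.0,
}
TDP_W = 250.0  # assumed TDP for the example card


def dynamic_power_range(draws):
    """Ratio between the largest and smallest power draw."""
    return max(draws.values()) / min(draws.values())


def headroom_w(draws, tdp):
    """Unused TDP in watts per workload (negative means over TDP)."""
    return {name: tdp - watts for name, watts in draws.items()}


power_range = dynamic_power_range(power_draw_w)
headroom = headroom_w(power_draw_w, TDP_W)

print(f"dynamic power range: {power_range:.2f}x")
for name, spare in headroom.items():
    print(f"{name}: {spare:+.0f} W of headroom")
```

With these made-up figures the light game leaves a large share of the TDP unused, while the FurMark-like load is already over it, which is exactly the gap a fixed clockspeed cannot close.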

The answer to the dynamic power range problem is to have variable clockspeeds; set the clocks low to keep power usage down on power-demanding games, and set the clocks high on power-light games. In fact we already have this in the CPU world, where Intel and AMD use their turbo modes to achieve this. If there’s enough thermal and power headroom, these processors can increase their clockspeeds by several steps. This allows AMD and Intel to not only offer processors that are faster on average, but it lets them specifically focus on improving single-threaded performance by pushing one core well above its normal clockspeed when it’s the only core in use.

It was only a matter of time until this kind of scheme came to the GPU world, and that time is here. Earlier this year we saw NVIDIA lay the groundwork with the GTX 500 series, where they implemented external power monitoring hardware for the purpose of identifying and slowing down FurMark and OCCT; however that’s as far as they went, capping only FurMark and OCCT. With Cayman and the 6900 series AMD is going to take this to the next step with a technology called PowerTune.

PowerTune is a power containment technology, designed to allow AMD to contain the power consumption of their GPUs to a pre-determined value. In essence it’s Turbo in reverse: instead of having a low base clockspeed and higher turbo multipliers, AMD is setting a high base clockspeed and letting PowerTune cap GPU performance when power consumption exceeds AMD’s TDP. The net result is that AMD can reduce the dynamic power range of their GPUs by setting high clockspeeds at high voltages to maximize performance, and then letting PowerTune cap GPU performance for the edge cases that cause GPU power consumption to exceed AMD’s preset value.
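The "Turbo in reverse" behavior can be sketched as a simple capping loop. This is a toy model, not AMD's actual algorithm: the clock values, the 10MHz step, the power limit, and the assumption that power scales linearly with clockspeed at a fixed voltage are all ours, chosen only to illustrate the idea of starting high and backing off.

```python
BASE_CLOCK_MHZ = 880.0  # assumed high base clock for the example
MIN_CLOCK_MHZ = 500.0   # assumed floor the cap will not go below
STEP_MHZ = 10.0         # assumed size of each downward step
POWER_LIMIT_W = 250.0   # assumed pre-determined power limit


def estimated_power_w(clock_mhz, load_w_per_mhz):
    """Toy model: at a fixed voltage, power grows linearly with clock."""
    return clock_mhz * load_w_per_mhz


def capped_clock_mhz(load_w_per_mhz):
    """Start at the base clock and step down until power fits the limit."""
    clock = BASE_CLOCK_MHZ
    while (clock > MIN_CLOCK_MHZ
           and estimated_power_w(clock, load_w_per_mhz) > POWER_LIMIT_W):
        clock -= STEP_MHZ
    return clock


# A typical game (hypothetical 0.25 W/MHz) stays at the full base clock,
# while a FurMark-like load (hypothetical 0.32 W/MHz) is pulled back.
game_clock = capped_clock_mhz(0.25)
furmark_clock = capped_clock_mhz(0.32)
print(game_clock, furmark_clock)
```

The design point is that ordinary games never trip the limit and so run at the high base clock all the time; only the outliers pay a clockspeed penalty.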

168 Comments

DoktorSleepless - Wednesday, December 15, 2010 - link

What benchmark or game is used to measure noise?

Hrel - Wednesday, December 15, 2010 - link

I'm not 100% but I believe they test it under Crysis. It was either that or a benchmark that put full load on the system. It was in an article in last year or 2, I've been reading so long it's all starting to mesh together; chronologically. But suffice it to say it stresses the system.

Hrel - Wednesday, December 15, 2010 - link

It's furmark, it's in the article.

Adul - Wednesday, December 15, 2010 - link

nice Christmas gift from the GF :D

AstroGuardian - Wednesday, December 15, 2010 - link

I saw my GF buying a couple of those. One is supposed to be for me and she doesn't play games...... WTF?

MeanBruce - Wednesday, December 15, 2010 - link

Wow, you are getting a couple of 6950s? All I am getting from my 22yo gf is a couple of size F yammos lying on a long narrow torso, and a single ASUS 6850. Don't know which I like better, hmmmmm. Wednesday morning comic relief.

Adul - Wednesday, December 15, 2010 - link

damn sounds good to me :) enjoy both ;)

SirGCal - Wednesday, December 15, 2010 - link

I'm happy to see these power values! I did expect a bit more performance but once I get one, I'll benchmark it myself. By then the drivers will likely have changed the situation. Now to get Santa my wish list... :-) If it was only that easy...

mac2j - Wednesday, December 15, 2010 - link

One of the most impressive elements here is that you can get 2x6950 for ~$100 more than a single 580. That's some incredible performance for $600 which is not unheard of as the price point for a top single-slot card.

Second... the scaling of the 6950 combined with the somewhat lower power consumption relative to the 570 bodes well for AMD with the 6990. My guess is they can deliver a top performing dual-GPU card with under a 425-watt TDP .... the 570 is a great single chip performer but getting it into a dual-gpu card under 450-500w is going to be a real challenge.

Anyway exciting stuff all-around - there will be a lot of heavy-hitting GPU options available for really very fair prices....

StormyParis - Wednesday, December 15, 2010 - link

It's nice to have all current cards listed, and helps determine which one to buy. My question, and the one people ask me, is rather "is it worth upgrading now". Which depends on a lot of things (CPU, RAM...), but, above all, on comparative perf between current cards and cards 1-2-3 generations out. I currently use a 4850. How much faster would a 6850 or 6950 be ?