NVIDIA's GeForce GTX 580: Fermi Refined

by Ryan Smith on November 9, 2010 9:00 AM EST

GF110: Fermi Learns Some New Tricks

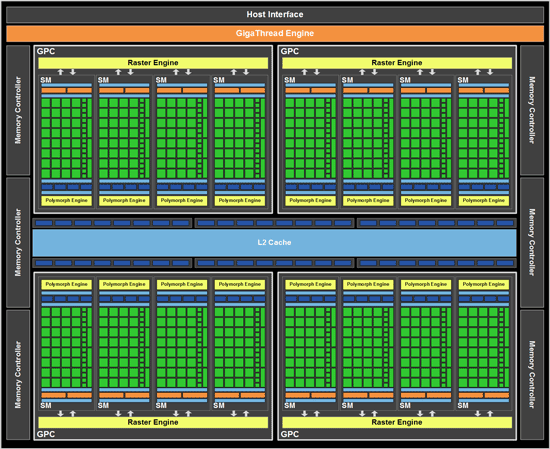

We’ll start our in-depth look at the GTX 580 with a look at GF110, the new GPU at the heart of the card.

There have been rumors about GF110 for some time now, and while they ultimately weren’t very clear, it was obvious NVIDIA would have to follow up GF100 with something similar to it on 40nm to carry them through the rest of the process’s lifecycle. So for some time now we’ve been speculating on what we might see with GF100’s follow-up part – an outright bigger chip was unlikely given GF100’s already large die size, but NVIDIA has a number of tricks they can use to optimize things.

Many of those tricks we’ve already seen in GF104, and had you asked us a month ago what we thought GF110 would be, we would have said some kind of fusion of GF104 and GF100. Primarily our bet was on the 48 CUDA Core SM making its way over to a high-end part, bringing with it GF104’s higher theoretical performance and enhancements such as superscalar execution and additional special function and texture units for each SM. What we got wasn’t quite what we were imagining – GF110 is much more heavily rooted in GF100 than in GF104, but that doesn’t mean NVIDIA hasn’t learned a trick or two.

Fundamentally GF110 is the same architecture as GF100, especially when it comes to compute. 512 CUDA Cores are divided up among 4 GPCs, and in turn each GPC contains 1 raster engine and 4 SMs. At the SM level each SM contains 32 CUDA cores, 16 load/store units, 4 special function units, 4 texture units, 2 warp schedulers with 1 dispatch unit each, 1 Polymorph unit (containing NVIDIA’s tessellator) and then the 48KB+16KB L1 cache, registers, and other glue that brings an SM together. At this level NVIDIA relies on thread-level parallelism (TLP) to keep a GF110 SM occupied with work. Attached to this are the ROPs and L2 cache, with 768KB of L2 cache serving as the guardian between the SMs and the six 64bit memory controllers. Ultimately GF110’s compute performance per clock remains unchanged from GF100 – at least if we had a GF100 part with all of its SMs enabled.
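As a quick sanity check, the unit counts above multiply out as follows. This is only a back-of-the-envelope sketch in Python: the variable names are ours, and the inputs are the figures quoted in the paragraph above.

```python
# Unit counts as quoted in the text. The names are illustrative, not NVIDIA's.
GPCS = 4
SMS_PER_GPC = 4
CUDA_CORES_PER_SM = 32
TEXTURE_UNITS_PER_SM = 4
MEMORY_CONTROLLERS = 6
CONTROLLER_WIDTH_BITS = 64

total_sms = GPCS * SMS_PER_GPC
total_cuda_cores = total_sms * CUDA_CORES_PER_SM
total_texture_units = total_sms * TEXTURE_UNITS_PER_SM
memory_bus_width_bits = MEMORY_CONTROLLERS * CONTROLLER_WIDTH_BITS

print(f"SMs: {total_sms}")
print(f"CUDA cores: {total_cuda_cores}")
print(f"Texture units: {total_texture_units}")
print(f"Memory bus width: {memory_bus_width_bits} bits")
```

The totals agree with the 512 CUDA Cores stated above, and the six 64bit controllers add up to a 384bit memory bus.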

On the graphics side however, NVIDIA has been hard at work. They did not port over GF104’s shader design, but they did port over GF104’s texture hardware. Previously with GF100, each unit could compute 1 texture address and fetch 4 32bit/INT8 texture samples per clock, 2 64bit/FP16 texture samples per clock, or 1 128bit/FP32 texture sample per clock. GF104’s texture units improved this to 4 samples/clock for 32bit and 64bit, and it’s these texture units that have been brought over for GF110. GF110 can now do 64bit/FP16 filtering at full speed versus half-speed on GF100, and this is the first of the two major steps NVIDIA took to increase GF110’s performance over GF100’s performance on a clock-for-clock basis.

NVIDIA Texture Filtering Speed (Per Texture Unit)

| | GF110 | GF104 | GF100 |
|---|---|---|---|
| 32bit (INT8) | 4 Texels/Clock | 4 Texels/Clock | 4 Texels/Clock |
| 64bit (FP16) | 4 Texels/Clock | 4 Texels/Clock | 2 Texels/Clock |
| 128bit (FP32) | 1 Texel/Clock | 1 Texel/Clock | 1 Texel/Clock |

Like most optimizations, the impact of this one is going to be felt more on newer games than older games. Games that make heavy use of 64bit/FP16 texturing stand to gain the most, while older games that rarely (if at all) used 64bit texturing will gain the least. Also note that while 64bit/FP16 texturing has been sped up, 64bit/FP16 rendering has not – the ROPs still need 2 cycles to digest 64bit/FP16 pixels, and 4 cycles to digest 128bit/FP32 pixels.
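To put the per-unit filtering rates in chip-level terms, the sketch below scales them by the texture unit count. It assumes 64 texture units per chip, which is the figure for GF110 and GF104; for GF100 that corresponds to a hypothetical part with all 16 SMs enabled. The dictionary and function names are ours.

```python
# Texels filtered per clock by one texture unit, per the filtering-speed table.
TEXELS_PER_UNIT_PER_CLOCK = {
    "GF110": {"INT8": 4, "FP16": 4, "FP32": 1},
    "GF104": {"INT8": 4, "FP16": 4, "FP32": 1},
    "GF100": {"INT8": 4, "FP16": 2, "FP32": 1},
}

# Assumption: 64 texture units per chip. For GF100 this means a hypothetical
# fully enabled part, not necessarily any shipping card.
TEXTURE_UNITS = 64


def chip_texels_per_clock(chip, texel_format, texture_units=TEXTURE_UNITS):
    """Peak texels filtered per base clock across the whole chip."""
    return TEXELS_PER_UNIT_PER_CLOCK[chip][texel_format] * texture_units


for texel_format in ("INT8", "FP16", "FP32"):
    gf110 = chip_texels_per_clock("GF110", texel_format)
    gf100 = chip_texels_per_clock("GF100", texel_format)
    print(f"{texel_format}: GF110 {gf110} vs GF100 {gf100} texels/clock "
          f"({gf110 / gf100:.1f}x)")
```

Only the FP16 row differs between the two chips, which is why the gain depends so heavily on how much 64bit texturing a game actually does.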

It’s also worth noting that this means that NVIDIA’s texture:compute ratio schism remains. Compared to GF100, GF104 doubled up on texture units while only increasing the shader count by 50%; the final result was that per SM 32 texels were processed to 96 instructions computed (seeing as how the shader clock is 2x the base clock), giving us a 1:3 ratio. GF100 and GF110 on the other hand retain the 1:4 (16:64) ratio. Ultimately at equal clocks GF104 and GF110 differ widely in shading, but with 64 texture units total in both designs, both have equal texturing performance.
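The ratios above fall out of a short calculation per SM and per base clock. The sketch below is ours: the function name is made up, and the 8 texture units per GF104 SM is inferred from the text's statement that GF104 doubled GF100's 4 units.

```python
from fractions import Fraction


def texel_to_instruction_ratio(texture_units_per_sm, cuda_cores_per_sm,
                               texels_per_unit=4, shader_clock_multiplier=2):
    """Return (texels, instructions, ratio) per SM per base clock.

    Each CUDA core is counted as one instruction per shader clock, and the
    shader clock runs at 2x the base clock, as described in the text.
    """
    texels = texture_units_per_sm * texels_per_unit
    instructions = cuda_cores_per_sm * shader_clock_multiplier
    return texels, instructions, Fraction(texels, instructions)


# GF104: 8 texture units and 48 CUDA cores per SM.
gf104 = texel_to_instruction_ratio(8, 48)
# GF100 and GF110: 4 texture units and 32 CUDA cores per SM.
gf110 = texel_to_instruction_ratio(4, 32)

print("GF104:", gf104)
print("GF110:", gf110)
```

GF104 works out to 32 texels against 96 instructions (1:3), while GF100 and GF110 work out to 16 against 64 (1:4).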

Moving on, GF110’s second trick is brand-new to GF110, and it goes hand-in-hand with NVIDIA’s focus on tessellation: improved Z-culling. As a quick refresher, Z-culling is a method of improving GPU performance by throwing out pixels that will never be seen early in the rendering process. By comparing the depth and transparency of a new pixel to existing pixels in the Z-buffer, it’s possible to determine whether that pixel will be seen or not; pixels that fall behind other opaque objects are discarded rather than rendered any further, saving on compute and memory resources. GPUs have had this feature for ages, and after a spurt of development early last decade under branded names such as HyperZ (AMD) and Lightspeed Memory Architecture (NVIDIA), Z-culling hasn’t been promoted in great detail since then.

Z-Culling In Action: Not Rendering What You Can't See
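The basic idea behind Z-culling can be illustrated with a few lines of software. This is a conceptual sketch only, not a description of NVIDIA's hardware: it works on single pixels rather than tiles, it assumes smaller depth values are closer to the viewer, and it treats transparency as a simple opaque flag. The function and variable names are ours.

```python
def z_cull(fragments, width, height, far=1.0):
    """Drop fragments hidden behind already-seen opaque fragments.

    Each fragment is (x, y, depth, opaque). Assumption: smaller depth means
    closer to the viewer. Returns the fragments that still need shading.
    """
    # Depth of the nearest opaque fragment seen so far at each pixel.
    depth_buffer = [[far] * width for _ in range(height)]
    survivors = []
    for x, y, depth, opaque in fragments:
        if depth >= depth_buffer[y][x]:
            continue  # Behind an opaque surface: cull before shading.
        survivors.append((x, y, depth, opaque))
        if opaque:
            # Only opaque fragments can occlude what is drawn later.
            depth_buffer[y][x] = depth
    return survivors


fragments = [
    (0, 0, 0.5, True),   # visible, becomes the occluder
    (0, 0, 0.8, True),   # behind the first fragment: culled
    (0, 0, 0.3, False),  # transparent and in front: kept, does not occlude
    (0, 0, 0.4, True),   # in front of the 0.5 occluder: kept
]
visible = z_cull(fragments, width=1, height=1)
print(len(visible), "of", len(fragments), "fragments survive")
```

Every fragment rejected here skips all later shading and memory work, which is where the savings come from.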

For GF110 this is changing somewhat as Z-culling is once again being brought back to the surface, although not with the zeal of past efforts. NVIDIA has improved the efficiency of the Z-cull units in their raster engine, allowing them to retire additional pixels that were not caught in the previous iteration of their Z-cull unit. Without getting too deep into details, internal rasterizing and Z-culling take place in groups of pixels called tiles; we don’t believe NVIDIA has reduced the size of their tiles (which Beyond3D estimates at 4x2); instead we believe NVIDIA has done something to better reject individual pixels within a tile. NVIDIA hasn’t come forth with too many details beyond the fact that their new Z-cull unit supports “finer resolution occluder tracking”, so this will have to remain a mystery for another day.

In any case, the importance of this improvement is that it’s particularly weighted towards small triangles, which are fairly rare in traditional rendering setups but can be extremely common with heavily tessellated images. Or in other words, improving their Z-cull unit primarily serves to improve their tessellation performance by allowing NVIDIA to better reject pixels on small triangles. This should offer some benefit even in games with fewer, larger triangles, but as framed by NVIDIA the benefit is likely less pronounced.

In the end these are probably the most aggressive changes NVIDIA could make in such a short period of time. Considering the GF110 project really only kicked off in earnest in February, NVIDIA only had around half a year to tinker with the design before it had to be taped out. As GPUs get larger and more complex, the amount of tweaking that can get done inside such a short window is going to continue to shrink – and this is a far cry from the days where we used to get major GPU refreshes inside of a year.

160 Comments


wtfbbqlol - Thursday, November 11, 2010 - link

Most likely an anomaly. Just compare the GTX480 to the GTX470 minimum framerate. There's no way the GTX480 is twice as fast as the GTX470.

Oxford Guy - Friday, November 12, 2010 - link

It does not look like an anomaly since at least one of the few minimum frame rate tests posted by Anandtech also showed the 480 beating the 580.

We need to see Unigine Heaven minimum frame rates, at the bare minimum, from Anandtech, too.

Oxford Guy - Saturday, November 13, 2010 - link

To put it more clearly... Anandtech only posted minimum frame rates for one test: Crysis.

In those, we see the 480 SLI beating the 580 SLI at 1920x1200. Why is that?

It seems to fit with the pattern of the 480 being stronger in minimum frame rates in some situations -- especially Unigine -- provided that the resolution is below 2K.

I do hope someone will clear up this issue.

wtfbbqlol - Wednesday, November 10, 2010 - link

It's really disturbing how the throttling happens without any real indication. I was really excited reading about all the improvements nVidia made to the GTX580, then I read about this annoying "feature".

When any piece of hardware in my PC throttles, I want to know about it. Otherwise it just adds another variable when troubleshooting performance problems.

Is it a valid test to rename, say, crysis.exe to furmark.exe and see if throttling kicks in mid-game?

wtfbbqlol - Wednesday, November 10, 2010 - link

Well it looks like there is *some* official information about the current implementation of the throttling.

http://nvidia.custhelp.com/cgi-bin/nvidia.cfg/php/...

Copy and paste of the message:

"NVIDIA has implemented a new power monitoring feature on GeForce GTX 580 graphics cards. Similar to our thermal protection mechanisms that protect the GPU and system from overheating, the new power monitoring feature helps protect the graphics card and system from issues caused by excessive power draw.

The feature works as follows:

• Dedicated hardware circuitry on the GTX 580 graphics card performs real-time monitoring of current and voltage on each 12V rail (6-pin, 8-pin, and PCI-Express).

• The graphics driver monitors the power levels and will dynamically adjust performance in certain stress applications such as Furmark 1.8 and OCCT if power levels exceed the card’s spec.

• Power monitoring adjusts performance only if power specs are exceeded AND if the application is one of the stress apps we have defined in our driver to monitor such as Furmark 1.8 and OCCT.

- Real world games will not throttle due to power monitoring.

- When power monitoring adjusts performance, clocks inside the chip are reduced by 50%.

Note that future drivers may update the power monitoring implementation, including the list of applications affected."

Sihastru - Wednesday, November 10, 2010 - link

I never heard anyone from the AMD camp complaining about that "feature" with their cards and all current AMD cards have it. And what would be the purpose of renaming your Crysis exe? Do you have problems with the "Crysis" name? You think the game should be called "Furmark"?

So this is a non issue.

flyck - Wednesday, November 10, 2010 - link

the use of renaming is that nvidia uses name tags to identify whether it should throttle or not.... suppose person x creates a program and you use an older driver that does not include this name tag, you can break things.....

Gonemad - Wednesday, November 10, 2010 - link

Big fat YES. Please do rename the executable from crysis.exe to furmark.exe, and tell us.

Get furmark and go all the way around, rename it to Crysis.exe, but be sure to have a fire extinguisher on the premises. Caveat Emptor.

Perhaps just renaming is not enough, some checksumming is involved. It is pretty easy to change checksum without altering the running code, though. When compiling source code, you can insert comments in the code. When compiling, the comments are not dropped, they are compiled together with the running code. Change the comment, change the checksum. But furmark alone can do that.

Open furmark in a hex editor and change some bytes, but try to do that in a long sequence of zeros at the end of the file. Usually compilers finish executables in round kilobytes, filling with zeros. It shouldn't harm the running code, but it changes the checksum, without changing byte size.

If it works, rename it Program X.

Ooops.

iwodo - Wednesday, November 10, 2010 - link

The good thing about GPUs is that they scale VERY well (if not linearly) with transistors. 1 Node Die Shrink, Double the transistor count, double the performance.

Combined with there being no bottleneck with memory, where GDDR5 still has lots of headroom, we are very limited by process and not the design.

techcurious - Wednesday, November 10, 2010 - link

I didn't read through ALL the comments, so maybe this was already suggested. But, can't the idle sound level be reduced simply by lowering the fan speed and compromising idle temperatures a bit? I bet you could sink below 40 dB if you are willing to put up with an acceptable 45 C temp instead of 37 C temp. 45 C is still an acceptable idle temp.