NVIDIA's GeForce GTX 580: Fermi Refined

by Ryan Smith on November 9, 2010 9:00 AM EST

Power, Temperature, and Noise

Last but not least as always is our look at the power consumption, temperatures, and acoustics of the GTX 580. NVIDIA’s performance improvements were half of the GTX 580 story, and this is the other half.

Starting quickly with voltage, as we only have one card we can’t draw too much from what we know, but there are still some important nuggets. NVIDIA is still using multiple VIDs, so your mileage may vary. What’s clear from the start though is that NVIDIA’s operating voltages compared to the GTX 480 are higher for both idle and load. This is the biggest hint that leakage has been seriously dealt with, as low voltages are a common step to combat leakage. Even with these higher voltages running on a chip similar to GF100, overall power usage is still going to be lower. And on that note, while the voltages have changed the idle clocks have not; idle remains at 50.6MHz for the core.

GeForce GTX 480/580 Voltages

| Ref 480 Load | Ref 480 Idle | Ref 580 Load | Ref 580 Idle |
| --- | --- | --- | --- |
| 0.959V | 0.875V | 1.037V | 0.962V |
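To put the voltage change in relative terms, here is a minimal sketch that computes the percentage increase from the GTX 480 to the GTX 580 using the four reference values in the table. The names `REF_VOLTAGES` and `percent_increase` are ours, chosen for illustration. As noted above, these figures come from a single sample of each card, and NVIDIA uses multiple VIDs, so other cards may differ.

```python
# Reference voltages (in volts) copied from the table above.
# These come from one sample of each card; other cards may use other VIDs.
REF_VOLTAGES = {
    "load": {"GTX 480": 0.959, "GTX 580": 1.037},
    "idle": {"GTX 480": 0.875, "GTX 580": 0.962},
}


def percent_increase(old: float, new: float) -> float:
    """Return the change from old to new as a percentage of old."""
    return (new - old) / old * 100.0


for state, volts in REF_VOLTAGES.items():
    delta = percent_increase(volts["GTX 480"], volts["GTX 580"])
    print(f"{state}: +{delta:.1f}%")
```

On our samples this works out to roughly 8% higher voltage under load and roughly 10% higher at idle.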

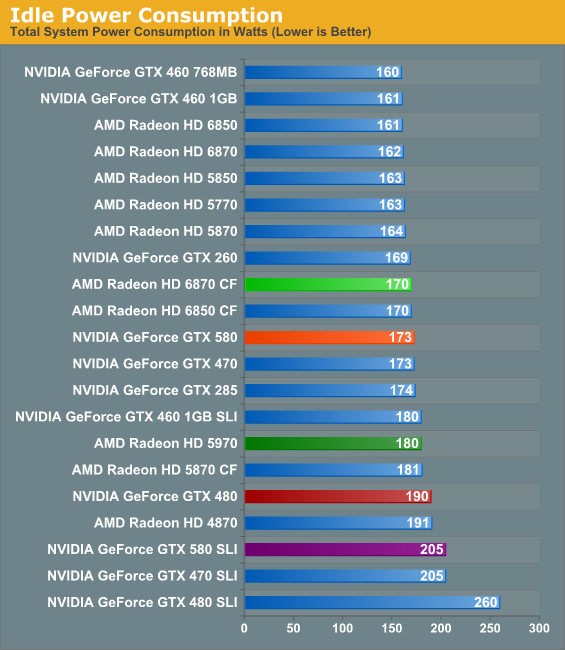

Beginning with idle power, we’re seeing our second biggest sign that NVIDIA has been tweaking things specifically to combat leakage. Idle power consumption has dropped by 17W on our test system even though the idle clocks are the same and the idle voltage higher. NVIDIA doesn’t provide an idle power specification, but based on neighboring cards idle power consumption can’t be far off from 30-35W. Amusingly it still ends up being more than the 6870 CF however, thanks to the combination of AMD’s smaller GPUs and ULPS power saving mode for the slave GPU.

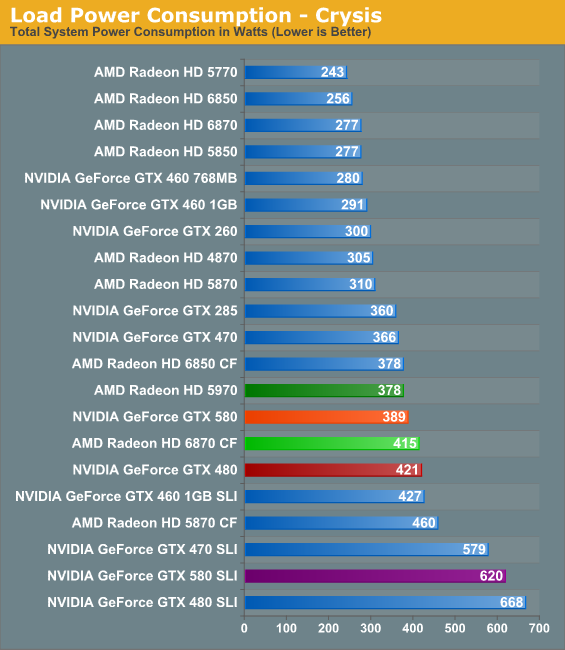

Looking at Crysis, we begin to see the full advantage of NVIDIA’s optimizations and where a single GPU is more advantageous than multiple GPUs. Compared to the GTX 480 NVIDIA’s power consumption is down 10% (never mind the 15% performance improvement), and power consumption comes in under all similar multi-GPU configurations. Interestingly the 5970 still draws less power here, a reminder that we’re still looking at cards near the peak of the PCIe specifications.
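Taken together, those two figures imply a sizable gain in performance per watt. The sketch below works through the arithmetic; the function name `perf_per_watt_gain` is ours, and the inputs are the rounded 15% and 10% figures quoted above. Keep in mind that our power numbers are for the whole test system, so this is a system-level estimate rather than a card-level one.

```python
def perf_per_watt_gain(perf_change: float, power_change: float) -> float:
    """Return the fractional change in performance per watt.

    perf_change:  fractional performance change (0.15 means 15% faster)
    power_change: fractional power change (-0.10 means 10% less power)
    """
    return (1.0 + perf_change) / (1.0 + power_change) - 1.0


# GTX 580 vs. GTX 480 under Crysis, using the rounded figures from the text.
gain = perf_per_watt_gain(perf_change=0.15, power_change=-0.10)
print(f"Estimated performance-per-watt gain: {gain:.1%}")
```

With these inputs the estimate comes to a bit under 28%, since 1.15 / 0.90 is roughly 1.28.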

As for FurMark, due to NVIDIA’s power throttling we’ve had to get a bit creative. FurMark is throttled to the point where the GTX 580 registers 360W, thanks to a roughly 40% reduction in performance under FurMark. As a result for the GTX 580 we’ve swapped out FurMark for another program that generates a comparable load, Program X. At this point we’re going to decline to name the program, as should NVIDIA throttle it we may be hard pressed to determine if and when this happened.

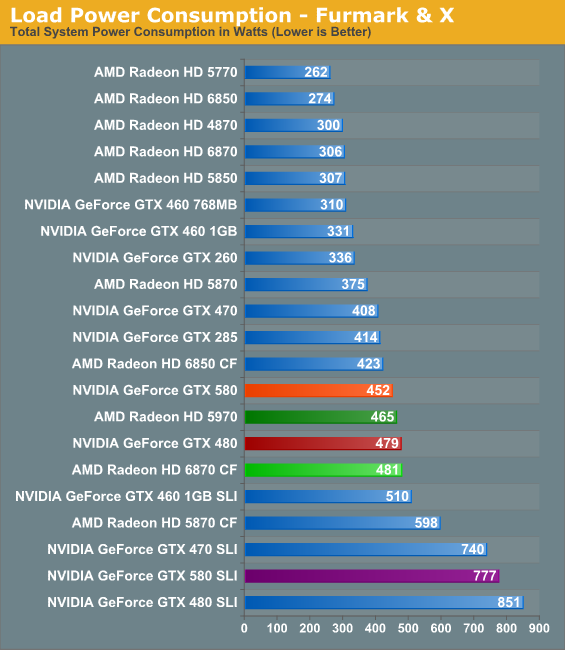

In any case, under FurMark & X we can see that once again NVIDIA’s power consumption has dropped versus the GTX 480, this time by 27W or around 6%. NVIDIA’s worst case scenario has notably improved, and in the process the GTX 580 is back under the Radeon HD 5970 in terms of power consumption. Thus it goes without saying that while NVIDIA has definitely improved power consumption, the GTX 580 is still a large, power hungry GPU.

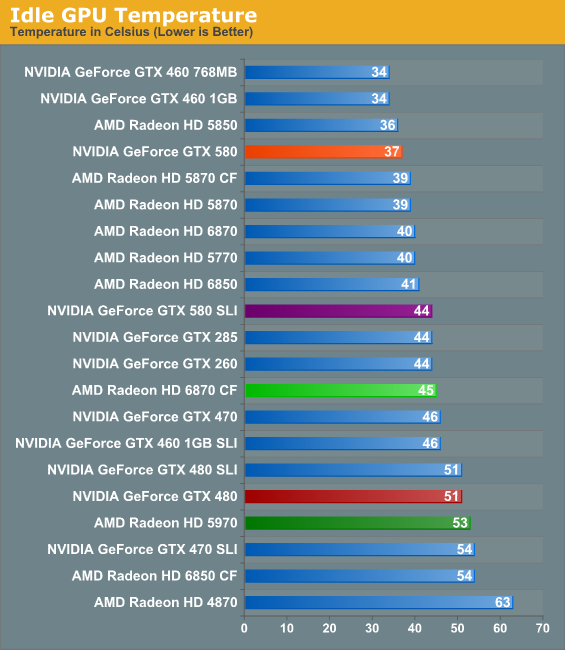

With NVIDIA’s improvements in cooling and in idle power consumption, there’s not a result more dramatic than idle GPU temperatures. The GTX 580 isn’t just cooler, it’s cool period. 37C is one of the best results out of any of our midrange and high-end GPUs, and is a massive departure from the GTX 480 which was at least warm all the time. As we’ll see however, this kind of an idle temperature does come with a small price.

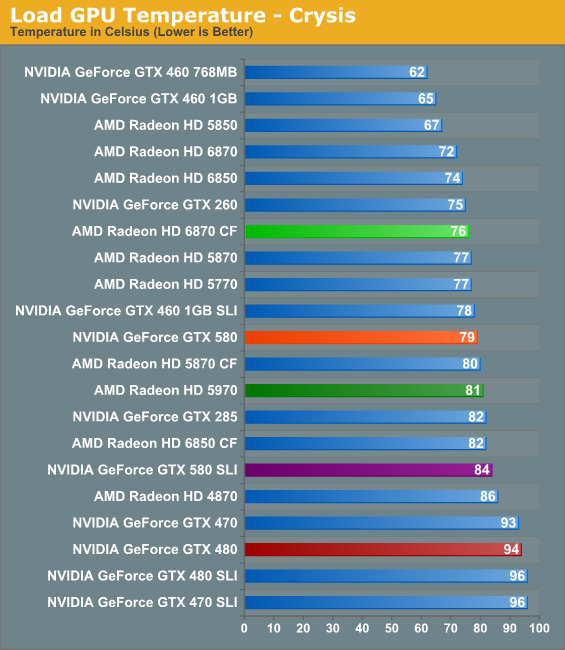

The story under load is much the same as idle: compared to the GTX 480 the GTX 580’s temperatures have dramatically dropped. At 79C it’s in the middle of the pack, beating a number of single and multi GPU setups, and really only losing to mainstream-class GPUs and the 6870 CF. While we’ve always worried about the GTX 480 at its load temperatures, the GTX 580 leaves us with no such concerns.

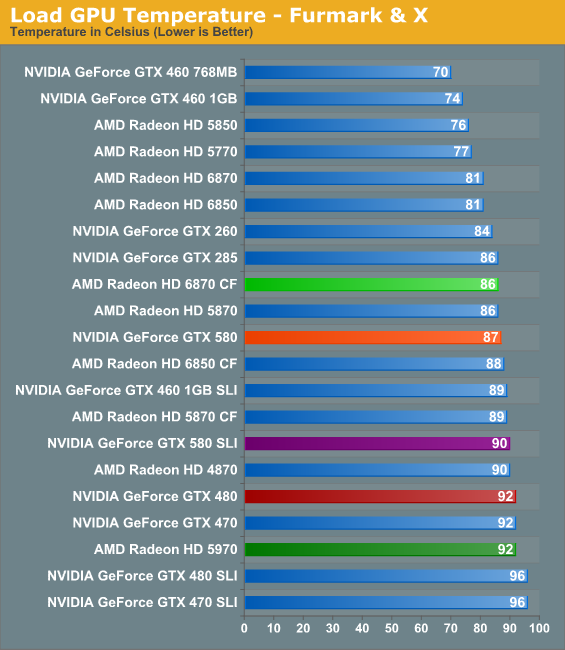

Meanwhile under FurMark and Program X, the gap has closed, though the GTX 580 remains in the middle of the pack. 87C is certainly toasty, but it’s still well below the thermal threshold and below the point where we’d be worried about it. Interestingly however, the GTX 580 is actually just a bit closer to its thermal threshold than the GTX 480 is; NVIDIA rated the 480 for 105C, while the 580 is rated for 97C. We’d like to say this vindicates our concerns about the GTX 480’s temperatures, but it’s more likely that this is a result of the transistors NVIDIA is using.

It’s also worth noting that NVIDIA seems to have done away with the delayed fan ramp-up found on the GTX 480. The fan ramping on the GTX 580 is as near as we can tell much more traditional, with the fan immediately ramping up with higher temperatures. For the purposes of our tests, this keeps the temperatures from spiking as badly.

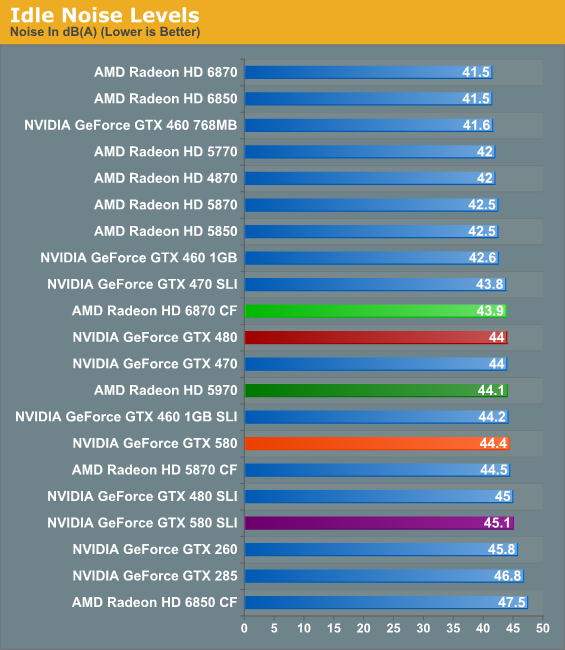

Remember where we said there was a small price to pay for such low idle temperatures? This is it. At 44.4dB, the 580 is ever so slightly (and we do mean slightly) louder than the GTX 480; it also ends up being a bit louder than the 5970 or 6870 CF. 44.4dB is not by any means loud, but if you want a card that’s whisper silent at idle, the GTX 580 isn’t going to be able to deliver.

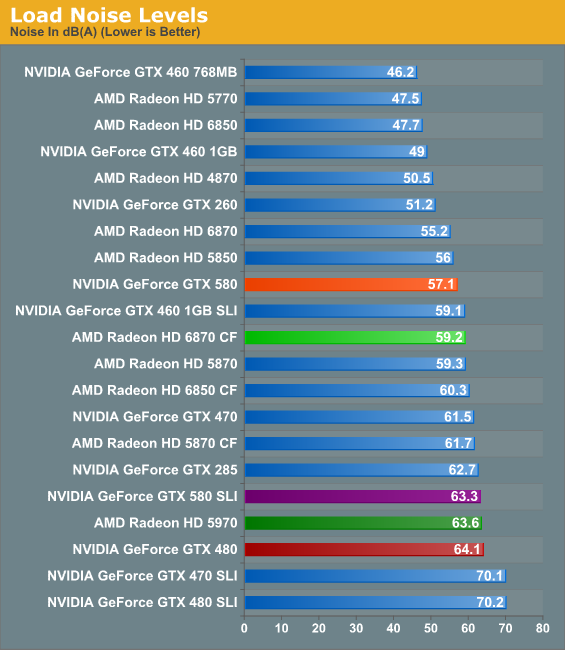

And last but not least is load noise. Between their improvements to power consumption and to cooling, NVIDIA put a lot of effort into the amount of noise the GTX 580 generates. Where the GTX 480 set new records for a single GPU card, the GTX 580 is quieter than the GTX 285, the GTX 470, and even the Radeon HD 5870. In fact it’s only a dB off of the 5850, a card under most circumstances we’d call the epitome of balance between performance and noise. Graphs alone cannot demonstrate just how much of a difference there is between the GTX 480 and GTX 580 – the GTX 580 is not whisper quiet, but at no point in our testing did it ever get “loud”. It’s a truly remarkable difference, albeit one that comes at the price of pointing out just how lousy the GTX 480 was.

Often the mark of a good card is a balance between power, temperature, and noise, and NVIDIA seems to have finally found their mark. As the GTX 580 is a high end card the power consumption is still high, but it’s no longer the abnormality that was the GTX 480. Meanwhile GPU temperatures have left our self-proclaimed danger zone, and yet at the same time the GTX 580 has become a much quieter card under load than the GTX 480. If you had asked us what NVIDIA needed to work on with the GTX 480, we would have said noise, temperature, and power consumption in that order; the GTX 580 delivers on just what we would have wanted.

160 Comments


AnandThenMan - Wednesday, November 10, 2010 - link

"Relevent models only please, that have the same performance as the GTX580."

So we can only compare cards that have the same performance. Exciting graphs that will make.

RobMel85 - Tuesday, November 9, 2010 - link

I browsed through the 10 pages of comments and I don't think I saw anyone comment on the fact that the primary reason Nvidia corrected their heat problem was by blatantly copying ATi/Sapphire...not only did they plagiarize the goodies under the hood, but they look identical to AMD cards now! Our wonderful reviewer made the point, but no one else seemed to play on it.

I say weak-sauce for Nvidia, considering the cheapest 580 on NewEgg is $557.86 shipped; the price exceeds what 480 was initially and the modded/OC'd editions aren't even out yet. It can't support more than 2 monitors by itself and is lacking in the audio department. Yes, it's faster than its predecessor. Yes, they fixed the power/heat/noise issues, but when you can get similar, if not better, performance for $200 less from AMD with a 6850 CF setup...it seems like a no brainer.

Sure ATi re-branded the new cards as the HD6000 series, but at least they aren't charging top $ for them. Yes, they are slower than the HD5000 series, but you can buy 2 6850s for less than the price of the 480, 580, 5970(even 5870 for some manufacturers) and see similar or better performance AND end up with the extra goodies the new cards support.

I am looking forward to the release of the 69XX cards to see how well they will hold up against the 580. Are they going to be a worthy successor to the 5900, or will they continue the trend of being a significant threat in CrossFire at a reasonable price? Only time will tell...

The real question is, what will happen when the 28nm HD7000 cards hit the market?

tomoyo - Tuesday, November 9, 2010 - link

Actually the newegg prices are because they have a 10% coupon right now. I bet they'll go back to closer to normal after the coupon expires...assuming there's any stock.

Sihastru - Wednesday, November 10, 2010 - link

Vapour chamber cooling technology was NOT invented by ATI/Sapphire. They are NOT the first to use it. Convergence Tech, the owner of the patent, even sued ATI/Sapphire/HP because of the infringement (basically means stolen technology).

LOL.

RobMel85 - Sunday, November 14, 2010 - link

Where within my post did I say it was invented by ATi/Sapphire...nowhere. The point that I was trying to make was that Nvidia copied the design that ATi/Sapphire had been using to trounce the Nvidia cards. The only reason they corrected their problems was by making their cards nearly identical to AMD/ATi...

And to tomoyo, when I made that post there was no 10% coupon on newegg. They obviously added it because everyone else was selling them cheaper.

Belard - Wednesday, November 10, 2010 - link

This is still a "400" series part as it's really technically more advanced than the 480.

Does it have additional features? No.

Is it faster, yes.

But check out the advancement feature list.

The 6800s, badly named and should have been 6700s, are slightly slower than the 5800s, but costs a lot less and actually does some things differently from the 5000 series. And sooner or later, there will be a whole family of 6000s.

But here we are, about 6months later and theres a whole new "product line"?

dvijaydev46 - Wednesday, November 10, 2010 - link

Is there any problem with Mediaespresso? My 5770 is faster with mediashow than mediaespresso. Can you check with mediashow to see if your findings are right?

Oxford Guy - Wednesday, November 10, 2010 - link

The 480 beats the 580, except at 2560x1600. The difference is most dramatic at 1680x1050.

http://techgage.com/reviews/nvidia/geforce_gtx_580...

http://techgage.com/reviews/nvidia/geforce_gtx_580...

http://techgage.com/reviews/nvidia/geforce_gtx_580...

Why is that?

Sihastru - Wednesday, November 10, 2010 - link

Proof that GF110 is not just a GF100 with all the shaders enabled.

Oxford Guy - Wednesday, November 10, 2010 - link

This seems to me to be related to the slight shrinkage of the die. What was cut out? Is it responsible for the lower minimum frame rates in Unigine?