AMD’s Radeon HD 6870 & 6850: Renewing Competition in the Mid-Range Market

by Ryan Smith on October 21, 2010 10:08 PM EST

Seeing the Future: DisplayPort 1.2

While Barts doesn’t bring a massive overhaul to AMD’s core architecture, it’s a different story for all of the secondary controllers contained within Barts. Compared to Cypress, practically everything involving displays and video decoding has been refreshed, replaced, or overhauled, making these feature upgrades the defining change for the 6800 series.

We’ll start on the display side with DisplayPort. AMD has been a major backer of DisplayPort since it was created in 2006, and in 2009 they went as far as making DisplayPort part of their standard port configuration for most of the 5000 series cards. Furthermore, for AMD, DisplayPort goes hand-in-hand with their Eyefinity initiative, as AMD relies on the fact that DisplayPort doesn’t require an independent clock generator for each monitor in order to efficiently drive 6 monitors from a single card.

So with AMD’s investment in DisplayPort it should come as no surprise that they’re already ready with support for the next version of DisplayPort, less than a year after the specification was finalized. The Radeon HD 6800 series will be the first products anywhere shipping with DP1.2 support – in fact AMD can’t even call it DP1.2 Compliant because the other devices needed for compliance testing aren’t available yet. Instead they’re calling it DP1.2 Ready for the time being.

So what does DP1.2 bring to the table? On a technical level, the only major change is that DP1.2 doubles DP1.1’s bandwidth, from 10.8Gbps (8.64Gbps video) to 21.6Gbps (17.28Gbps video); or, to put this in DVI terms, DP1.2 will have roughly twice as much video bandwidth as a dual-link DVI port. It’s this doubling of DisplayPort’s bandwidth, along with the definition of new standards, that enables DP1.2’s new features.
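The raw and video figures above can be reproduced with a little arithmetic. The following is a minimal sketch, assuming the commonly cited link parameters (4 lanes, 2.7Gbps per lane for DP1.1, 5.4Gbps per lane for DP1.2, 8b/10b line coding) and a dual-link DVI budget of two 165MHz links at 24 bits per pixel; those parameters are our assumptions and are not figures AMD supplied.

```python
# Link rates in Mbit/s. The per-lane figures and lane count are assumptions
# based on commonly cited DisplayPort parameters, not taken from the article.
LANES = 4
DP11_LANE_MBPS = 2700   # assumed DP1.1 per-lane rate
DP12_LANE_MBPS = 5400   # assumed DP1.2 per-lane rate


def payload_mbps(lane_mbps, lanes=LANES):
    """Usable rate after 8b/10b coding: 8 data bits per 10 line bits."""
    return lanes * lane_mbps * 8 // 10


# Dual-link DVI: two links, 165 MHz pixel clock each, 24 bits per pixel.
DL_DVI_MBPS = 2 * 165 * 24

dp11 = payload_mbps(DP11_LANE_MBPS)
dp12 = payload_mbps(DP12_LANE_MBPS)

print("DP1.1 video Mbps:", dp11)
print("DP1.2 video Mbps:", dp12)
print("DL-DVI video Mbps:", DL_DVI_MBPS)
print("DP1.2 / DL-DVI:", round(dp12 / DL_DVI_MBPS, 2))
```

Under these assumptions the 20% gap between the raw and video numbers is simply the 8b/10b coding overhead.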

At the moment the feature AMD is touting the most with DP1.2 is its ability to drive multiple monitors from a single port, which relates directly to AMD’s Eyefinity technology. DP1.2’s bandwidth upgrade means that it has more than enough bandwidth to drive even the largest consumer monitor; more specifically, a single DP1.2 link has enough bandwidth to drive two 2560 monitors or four 1920 monitors at 60Hz. Furthermore, because DisplayPort is a packet-based transmission medium, it’s easy to expand its feature set since devices only need to know how to handle packets addressed to them. For these reasons multiple display support was canonized into the DP1.2 standard under the name Multi-Stream Transport (MST).
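As a rough sanity check on the monitor counts, we can divide the link's video bandwidth by the bandwidth each display stream needs. This is only a sketch: we assume "2560" and "1920" mean 2560x1600 and 1920x1200, we assume the pixel clocks of the CVT reduced-blanking timings for those modes, and we ignore any MST framing overhead.

```python
DP12_PAYLOAD_KBPS = 17_280_000  # 17.28 Gbps video bandwidth, from the article

# Pixel clocks in kHz. These are assumed values for CVT reduced-blanking
# timings; the article does not specify the exact modes or timings.
PIXEL_CLOCK_KHZ = {
    "2560x1600@60": 268_500,
    "1920x1200@60": 154_000,
}


def stream_kbps(mode, bits_per_pixel=24):
    """Bandwidth of one display stream, blanking included."""
    return PIXEL_CLOCK_KHZ[mode] * bits_per_pixel


def max_streams(mode, link_kbps=DP12_PAYLOAD_KBPS):
    """How many identical streams fit on one link (framing overhead ignored)."""
    return link_kbps // stream_kbps(mode)


for mode in PIXEL_CLOCK_KHZ:
    print(mode, stream_kbps(mode), "kbps per stream,", max_streams(mode), "streams")
```

With these assumed timings the result lines up with the two-monitor and four-monitor figures quoted above.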

MST, as the name implies, takes advantage of DP1.2’s bandwidth and packetized nature by interleaving several display streams into a single DP1.2 stream, with a completely unique display stream for each monitor. Meanwhile on the receiving end there are two ways to handle MST: daisy-chaining and hubs. Daisy-chaining is rather self-explanatory, with one DP1.2 monitor plugged into the next one to pass along the signal to each successive monitor. In practice we don’t expect to see daisy-chaining used much except on prefabricated multi-monitor setups, as daisy-chaining requires DP1.2 monitors and can be clumsy to set up.

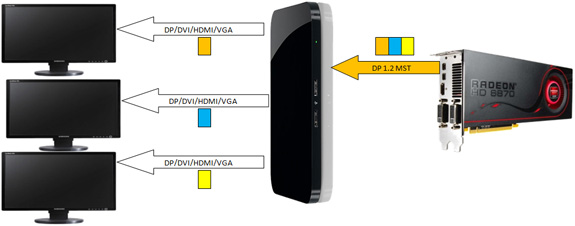

The alternative method is to use a DP1.2 MST hub. An MST hub splits up the signal between client devices, and in spite of what the name “hub” may imply an MST hub is actually a smart device: it’s closer to a USB hub, in that it’s actively processing signals, than it is to an Ethernet hub that blindly passes things along. The importance of this distinction is that the MST hub does away with the need to have a DP1.2 compliant monitor, as the hub is taking care of separating the display streams and communicating with the host via DP1.2. Furthermore, MST hubs are compatible with adaptors, meaning DVI/VGA/HDMI ports can be created off of an MST hub by using the appropriate active adaptor. At the end of the day the MST hub is how AMD and other manufacturers are going to drive multiple displays from devices that don’t have the space for multiple outputs.

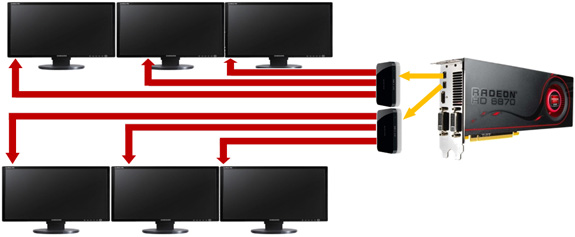

For Barts AMD is keeping parity with Cypress’s display controller, giving Barts the ability to drive up to 6 monitors. Unlike Cypress however, the existence of MST hubs means that AMD doesn’t need to dedicate all the space on a card’s bracket to mini-DP outputs; instead AMD is using 2 mini-DP ports to drive 6 monitors in a 3+3 configuration. This in turn means the Eyefinity6 line as we know it is rendered redundant, as AMD & partners no longer need to produce separate E6 cards now that every Barts card can drive 6 DP monitors. Thus as far as AMD’s Eyefinity initiative is concerned it just became a lot more practical to do a 6 monitor Eyefinity setup on a single card, performance notwithstanding.

For the moment the catch is that AMD is the first company to market with a product supporting DP1.2, putting the company in a chicken & egg position with AMD serving as the chicken. MST hubs and DP1.2 displays aren’t expected to be available until early 2011 (hint: look for them at CES) which means it’s going to be a bit longer before the rest of the hardware ecosystem catches up to what AMD can do with Barts.

Besides MST, DP1.2’s bandwidth has three other uses for AMD: higher resolutions/bitdepths, bitstreaming audio, and 3D stereoscopy. As DP1.1’s video bandwidth was only comparable to DL-DVI, the monitor limits were similar: 2560x2048@60Hz with 24bit color. With double the bandwidth for DP1.2, AMD can now drive larger and/or higher bitdepth monitors over DP; 4096x2160@50Hz for the largest monitors, and a number of lower resolutions with 30bit color. When talking to AMD Senior Fellow and company DisplayPort guru David Glen, higher color depths in particular came up a number of times. Although David isn’t necessarily speaking for AMD here, it’s his belief that we’re going to see color depths become important in the consumer space over the next several years as companies look to add new features and functionality to their monitors. And it’s DisplayPort that he wants to use to deliver that functionality.
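To see why 4096x2160 was out of reach for DP1.1, it helps to count only the visible pixels. The sketch below does exactly that; because it ignores blanking intervals, it gives a lower bound on the required bandwidth. It can show that a mode is too big for a link, but a mode that passes this check is not guaranteed to fit. The function names are ours and purely illustrative.

```python
DP11_PAYLOAD_BPS = 8_640_000_000    # 8.64 Gbps video, from the article
DP12_PAYLOAD_BPS = 17_280_000_000   # 17.28 Gbps video, from the article


def active_bps(width, height, refresh_hz, bits_per_pixel):
    """Bits per second for the visible pixels only (blanking ignored)."""
    return width * height * refresh_hz * bits_per_pixel


def too_big_for(link_bps, width, height, refresh_hz, bits_per_pixel):
    """True when even the visible pixels alone exceed the link."""
    return active_bps(width, height, refresh_hz, bits_per_pixel) > link_bps


# The DP1.1-era limit quoted above passes the lower-bound check on DP1.1.
print(too_big_for(DP11_PAYLOAD_BPS, 2560, 2048, 60, 24))

# 4096x2160@50Hz is too big for DP1.1 but not for DP1.2, at 24 or 30 bits.
print(too_big_for(DP11_PAYLOAD_BPS, 4096, 2160, 50, 24))
print(too_big_for(DP12_PAYLOAD_BPS, 4096, 2160, 50, 24))
print(too_big_for(DP12_PAYLOAD_BPS, 4096, 2160, 50, 30))
```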

Along with higher color depths at higher resolutions, DP1.2 also improves on the quality of the audio passed along by DP. DP1.1 was capable of passing along multichannel LPCM audio, but it only had 6.144Mbps available for audio, which ruled out multichannel audio at high bitrates (e.g. 8 channel LPCM 192kHz/24bit) or even compressed lossless audio. With DP1.2 the audio channel has been increased to 48Mbps, giving DP enough bandwidth for unrestricted LPCM along with support for Dolby and DTS lossless audio formats. This brings it up to par with HDMI, which has been able to support these features since 1.3.
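The audio limits are easy to check, since uncompressed LPCM bandwidth is just channels times sample rate times bit depth. Here is a minimal sketch using the two audio budgets quoted above; the helper name is ours, and container or packetization overhead is ignored.

```python
DP11_AUDIO_BPS = 6_144_000    # 6.144 Mbps, from the article
DP12_AUDIO_BPS = 48_000_000   # 48 Mbps, from the article


def lpcm_bps(channels, sample_rate_hz, bits_per_sample):
    """Raw LPCM bit rate, ignoring any packetization overhead."""
    return channels * sample_rate_hz * bits_per_sample


high_end = lpcm_bps(8, 192_000, 24)   # the 8 channel 192kHz/24bit example
modest = lpcm_bps(8, 48_000, 16)      # 8 channels at 48kHz/16bit

print(high_end, high_end <= DP11_AUDIO_BPS, high_end <= DP12_AUDIO_BPS)
print(modest, modest <= DP11_AUDIO_BPS)
```

The 8 channel 192kHz/24bit stream needs about 36.9Mbps, which is six times what DP1.1 offered but comfortably inside DP1.2's 48Mbps.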

Finally, much like how DP1.2 goes hand-in-hand with AMD’s Eyefinity initiative, it also goes hand-in-hand with the company’s new 3D stereoscopy initiative, HD3D. We’ll cover HD3D in depth later, but for now we’ll touch on how it relates to DP1.2. With DP1.2’s additional bandwidth it now has more bandwidth than either HDMI1.4a or DL-DVI, which AMD believes is crucial to enabling better 3D experiences. Case in point, for 3D HDMI 1.4a maxes out at 1080p24 (48Hz total), which is enough for a full resolution movie in 3D but isn’t enough for live action video or 3D gaming, both of which require 120Hz in order to achieve 60Hz in each eye. DP1.2 on the other hand could drive 2560x1600 @ 120Hz, giving 60Hz to each eye at resolutions above full HD.
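The refresh rate arithmetic for frame-sequential 3D is straightforward: each eye gets half of the frames sent. The sketch below works through the figures in this paragraph, again counting only visible pixels at 24 bits per pixel (so it is a lower bound), and assuming a dual-link DVI budget of 7.92Gbps (two 165MHz links), which is our figure and not AMD's.

```python
DL_DVI_BPS = 7_920_000_000         # assumed: 2 links x 165 MHz x 24 bits
DP12_PAYLOAD_BPS = 17_280_000_000  # 17.28 Gbps video, from the article


def per_eye_hz(total_hz):
    """Frame-sequential stereo alternates eyes, so each eye sees half."""
    return total_hz // 2


def active_bps(width, height, total_hz, bits_per_pixel=24):
    """Bits per second for the visible pixels only (blanking ignored)."""
    return width * height * total_hz * bits_per_pixel


print(per_eye_hz(48))    # 1080p24 3D: 48Hz total
print(per_eye_hz(120))   # 120Hz total

need = active_bps(2560, 1600, 120)
print(need, need > DL_DVI_BPS, need <= DP12_PAYLOAD_BPS)
```

Even as a lower bound, 2560x1600 at 120Hz is beyond what dual-link DVI can carry, while DP1.2 has room to spare.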

Ultimately this blurs the line between HDMI and DisplayPort and whether they’re complementary or competitive interfaces, but you can see where this is going. The most immediate benefit would be that this would make it possible to play Blu-Ray 3D in a window, as it currently has to be played in full screen mode when using HDMI 1.4a in order to make use of 1080p24.

In the meantime however the biggest holdup is still going to be adoption. Support for DisplayPort is steadily improving with most Dell and HP monitors now supporting DisplayPort, but a number of other parties still do not support it, particularly among the cheap TN monitors that crowd the market these days. AMD’s DisplayPort ambitions are still reliant on more display manufacturers including DP support on all of their monitors, and retailers like Newegg and Best Buy making it easier to find and identify monitors with DP support. CES 2011 should give us a good indication of how much support there is for DP on the display side of things, as display manufacturers will be showing off their latest wares.

197 Comments

Quidam67 - Friday, October 29, 2010 - link

Well that's odd.

After reading about the EVGA FTW, and its mind-boggling factory overclock, I went looking to see if I could pick one of these up in New Zealand.

Seems you can, or maybe not. As per this example http://www.trademe.co.nz/Browse/Listing.aspx?id=32... the clocks are 763Mhz and 3.8 on the memory?!?

What gives, how can EVGA give the same name to a card and then have different specifications on it? So good thing I checked the fine-print or else I would have been bummed out if I'd bought it and then realised it wasn't clocked like I thought it would be..

Murolith - Friday, October 29, 2010 - link

So..how about that update in the review checking out the quality/speed of MLAA?

CptChris - Sunday, October 31, 2010 - link

As the cards were compared to the OC nVidia card I would be interested in seeing how the 6800 series also compares to a card like the Sapphire HD5850 2GB Toxic Edition. I know it is literally twice the price as the HD6850 but would it be enough of a performance margin to be worth the price difference?

gochichi - Thursday, November 4, 2010 - link

You know, maybe I hang in the wrong circles but I by far keep up to date on GPUs more than anyone I know. Not only that, but I am eager to update my stuff if it's reasonable. I want it to be reasonable so badly because I simply love computer hardware (more than games per se, or as much as the games... it's about hardware for me in and of itself).

Not getting to my point fast enough. I purchased a Radeon 3870 at Best Buy (Best Buy had an oddly good deal on these at the time, Best Buy doesn't tend to keep competitive prices on video cards at all for some reason). 10 days later (so I returned my 3870 at the store) I purchased a 4850, and wow, what a difference it made. The thing of it is, the 3870 played COD 4 like a champ, the 4850 was ridiculously better but I was already satisfied.

In any case, the naming... the 3870 was no more than $200.00 I think it was $150.00. And it played COD4 on 24" 1900x1200 monitor with a few settings not maxed out, and played it so well. The 4850 allowed me to max out my settings. Crysis sucked, crysis still sucks and crysis is still a playable benchmark. Not to say I don't look at it as a benchmark. The 4850 on the week of its release was $199.99 at Best Buy.

Then gosh oh golly there was the 4870 and the 4890, which simply took up too much power... I am simply unwilling to buy a card that uses more than one extra 6-pin connector just so I can go out of my way to find something that runs better. So far, my 4850 has left me wanting more in GTA IV, (notice again how it comes down to hardware having to overcome bad programming, the 4850 is fast enough for 1080p but it's not a very well ported game so I have to defer to better hardware). You can stop counting the ways my 4850 has left me wanting more at 1900 x 1200. I suppose maxing out Starcraft II would be nice also.

Well, then came out the 5850, finally a card that would eclipse my 4850... but oh wait, though the moniker was the same (3850 = so awesome, so affordable, the 4850 = so awesome, so affordable, the 5850 = two 6-pin connectors, so expensive, so high end) it was completely out of line with what I had come to expect. The 4850 stood without a successor. Remember here that I was going from 3870 to 4850, same price range, way better performance. Then came the 5770, and it was marginally faster but just not enough change to merit a frivolous upgrade.

Now, my "need" to upgrade is as frivolous as ever, but finally, a return to sanity with the *850 moniker standing for fast, and midrange. I am a *850 kind of guy through and through, I don't want crazy power consumption, I don't want to be able to buy a whole, really good computer for the price of just a video card.

So, anyhow, that's my long story basically... that the strange and utterly upsetting name was the 5850, the 6850 is actually right in line with what the naming should have always stayed as. I wouldn't know why the heck AMD tossed a curve ball for me via the 5850, but I will tell you that it's been a really long time coming to get a true successor in the $200 and under range.

You know, around the time of the 9800GT and the 4850, you actually heard people talk about buying video cards while out with friends. The games don't demand much more than that... so $500 cards that double their performance is just silly silly stuff and people would rather buy an awesome phone, an iPad, etc. etc. etc.

So anyhow, enough of my rambling, I reckon I'll be silly and get the true successor to my 4850... though I am assured that my Q6600 isn't up to par for Starcraft II... oh well.

rag2214 - Sunday, November 7, 2010 - link

The 6800 series may not beat the 5870 yet but it is the start of the HDMI 1.4 for 3dHD not available in any other ATI graphics cards.

Philip46 - Monday, November 15, 2010 - link

The review stated why was there a reason to buy a 460 (not OC'ed).

How about benchmarks of games using Physx?

For instance Mafia 2 hits 32fps @ 1080p(I7-930 cpu) when using Physx on high, while the 5870 manages only 16.5fps, while i tested both cards.

How about a GTA:IV benchmark?, because the Zotac 2GB GTX 460, runs the game more smoothly (the same avg fps, except the min fps on the 5850 are lower in the daytime) than the 5850 (2GB).

How about even a Far Cry 2 benchmark?

Come on anandtech! Let's get some real benchmarks that cover all aspects of gaming features.

How about adding in driver stability? Etc..

And before anyone calls me biased, i had both the Zotac GTX 460 and Sapphire 5850 2GB a couple weeks back, and overall i went with the Zotac 460, and i play Crysis/Stalker/GTA IV/Mafia 2/Far Cry 2..etc @ 1080p, and the 460 just played them all more stable..even if Crysis/Stalker were some 10% faster on the 5850.

BTW: Bad move by anandtech to include the 460 FTC !

animekenji - Saturday, December 25, 2010 - link

Barts is the replacement for Juniper, NOT Cypress. Cayman is the replacement for Cypress. If you're going to do a comparison to the previous generation, then at least compare it to the right card. HD6850 replaces HD5750. HD6870 replaces HD5770. HD6970 replaces HD5870. You're giving people the false impression that AMD knocked performance down with the new cards instead of up when HD6800 vastly outperforms HD5700 and HD6900 vastly outperforms HD5800. Stop drinking the green kool-aid, Anandtech.