AMD Discloses Bobcat & Bulldozer Architectures at Hot Chips 2010

by Anand Lal Shimpi on August 24, 2010 1:33 AM EST

It’s an Out of Order Atom

Ever since the Pentium Pro (P6), we have been blessed with out of order microprocessor architectures: designs that can execute instructions out of program order to improve performance. Out of order architectures let you schedule independent instructions ahead of others that are either waiting for data from main memory or waiting for specific execution resources to free up. The resulting performance boost comes at the expense of power and die size: all of the tracking logic that makes sure instructions executed out of order still retire in order consumes both die area and power.
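To make the scheduling idea concrete, here is a toy sketch in Python. It is not a model of Bobcat or Atom: the one-instruction-per-cycle issue limit, the register names, the latencies and the `run` helper are all assumptions made for illustration, and each register is written only once so that no renaming is needed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Instr:
    name: str
    dest: str
    srcs: tuple
    latency: int  # cycles until the result is available


def run(program, out_of_order):
    """Return the cycle at which the last result becomes available.

    Toy model: at most one instruction issues per cycle. In-order issue only
    considers the oldest unissued instruction; out-of-order issue picks the
    oldest instruction whose source registers are ready.
    """
    # Registers produced by the program are unavailable until their producer
    # issues; any other register is treated as ready from cycle 0.
    ready_at = {ins.dest: float("inf") for ins in program}
    pending = list(program)
    cycle = 0
    finish = 0
    while pending:
        candidates = pending if out_of_order else pending[:1]
        for ins in candidates:
            if all(ready_at.get(s, 0) <= cycle for s in ins.srcs):
                ready_at[ins.dest] = cycle + ins.latency
                finish = max(finish, ready_at[ins.dest])
                pending.remove(ins)
                break  # one issue per cycle
        cycle += 1
    return finish


# Hypothetical instruction stream: a slow load, a dependent add, and two
# instructions that do not depend on the load at all.
PROGRAM = [
    Instr("load", "r1", ("r0",), 10),
    Instr("add", "r2", ("r1",), 1),
    Instr("mul", "r3", ("r4", "r5"), 3),
    Instr("sub", "r6", ("r3",), 1),
]

in_order_cycles = run(PROGRAM, out_of_order=False)
out_of_order_cycles = run(PROGRAM, out_of_order=True)
print(in_order_cycles, out_of_order_cycles)
```

In this made-up example the in-order run finishes at cycle 15 and the out-of-order run at cycle 11, because the multiply and subtract slip in behind the stalled load instead of queuing up after the dependent add.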

When Intel designed the Atom processor it went back to an in-order design as a way of reducing power. Intel has committed to using in-order architectures in Atom for 4 - 5 years post introduction (that would end sometime in the 2012 - 2013 time frame).

For smartphones, Intel’s commitment to in-order makes sense. Average power consumption under load needs to remain at less than 1W and you simply can’t hit that with an out-of-order Atom at 45nm.

For netbooks and notebooks however, the tradeoff makes less sense. Jarred has often argued that a CULV notebook is a far better performer than a netbook at very similar price/battery life metrics. No one is pleased with Atom’s performance in a netbook, but there’s clearly demand for the form factor and price point. Where there’s an architectural opportunity like this, AMD is usually there to act.

Over the past decade AMD has refrained from copying Intel designs. Instead, AMD usually looks to leapfrog Intel by implementing forward looking technologies earlier than its competitor. We saw this with the 64-bit K8 and the cache hierarchy of the original Phenom and Phenom II processors. Both featured design decisions that Intel would later adopt; they were simply ahead of their time.

With Atom stuck in an in-order world for the near future, AMD’s opportunity to innovate is clear.

The Architecture

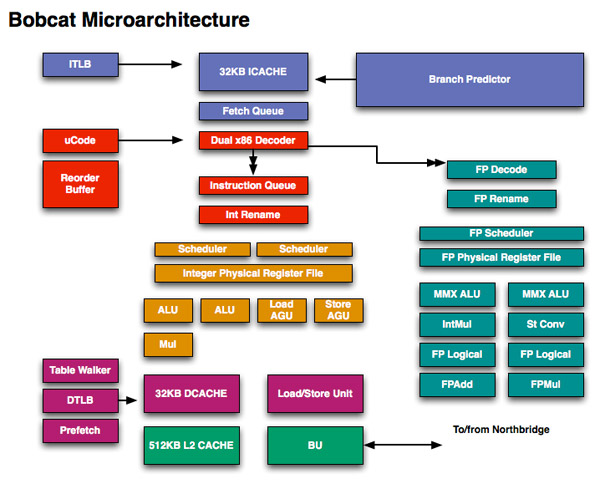

Admittedly I was caught off guard by Bobcat’s architecture: it’s a dual-issue design, the first AMD has introduced since the K6 and also the same issue width Intel chose for Atom. Where AMD and Intel diverge however is in the execution side: Bobcat is a fully out of order architecture.

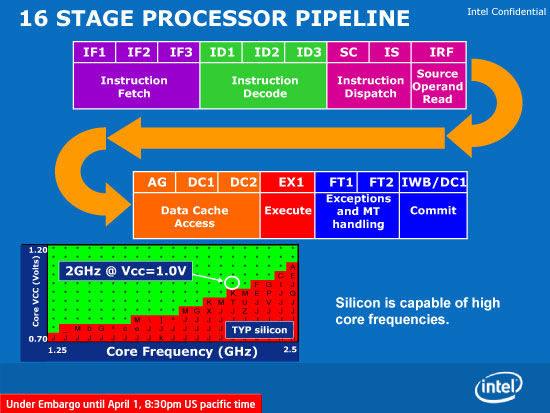

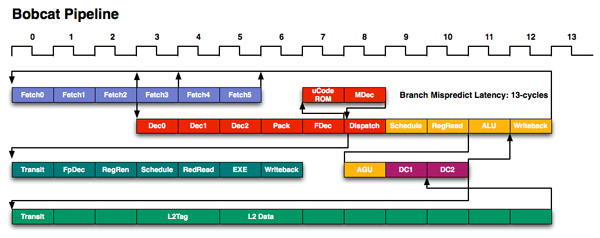

The move to out of order should provide a healthy single threaded performance boost over Atom, assuming AMD can ramp clocks up. Bobcat has a 15 stage integer pipeline, very close to Atom's 16 stage pipe. The two pipeline diagrams are below:

Intel's Atom pipeline

You’ll note that there are technically six fetch stages, although only the first three are included in the 15 stage number I mentioned above. AMD mentioned that the remaining three stages are used for branch prediction, but in a manner it is unwilling to disclose at this time due to competitive concerns.

Bobcat has two independent, dual ported integer schedulers. One feeds two ALUs (one of which can perform integer multiplies) while the other feeds two AGUs (one for loads and one for stores).

The FPU has a single dual ported scheduler that feeds two independent FPUs. Similar to the Atom processor, only one of the ports can handle floating point multiplies. The FP mul and add units can perform two single precision (32-bit) multiplies/adds per cycle. Like the integer side, the FPU uses a physical register file to reduce power.
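As a back-of-the-envelope sketch, the per-cycle figures above translate into a theoretical peak like this. I am reading "two multiplies/adds per cycle" as two multiplies plus two adds per core per cycle, which is an interpretation. The 1.6GHz clock is a pure placeholder, since AMD has not disclosed clock speeds, and `peak_sp_gflops` is a hypothetical helper name.

```python
def peak_sp_gflops(clock_ghz, cores=1, mul_per_cycle=2, add_per_cycle=2):
    """Theoretical peak single precision GFLOPS (multiplies plus adds).

    The per-cycle defaults assume the FP mul unit and the FP add unit each
    complete two 32-bit operations every cycle, per core.
    """
    flops_per_cycle = mul_per_cycle + add_per_cycle
    return flops_per_cycle * clock_ghz * cores


# Placeholder clock only: 1.6 GHz is not an AMD-supplied number.
single_core = peak_sp_gflops(1.6)
dual_core = peak_sp_gflops(1.6, cores=2)
print(single_core, dual_core)
```

Peak numbers like these are an upper bound; real code rarely keeps both FP pipes busy every cycle.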

Bobcat supports SSE1-3, with future versions adding more instructions as necessary.

Bobcat also supports out of order loads and stores, similar to Intel’s Core architecture.

The Bobcat core has a 3-cycle 64KB L1 (32KB instruction + 32KB data cache) that’s 8-way set associative. The L2 cache is a 17-cycle, 512KB 16-way set associative cache. I originally measured Atom’s L1 and L2 at 3 and 18 cycles respectively (I’ve heard numbers as low as 15 for Atom’s L2) so AMD is definitely in the right ballpark here.
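For a feel of what those sizes and associativities mean in practice, the number of sets in a set associative cache is its capacity divided by (ways × line size). The sketch below assumes 64-byte cache lines, which AMD did not state in the material covered here, and `cache_geometry` is a hypothetical helper.

```python
def cache_geometry(size_bytes, ways, line_bytes=64):
    """Derive set count and address bit split for a set associative cache.

    line_bytes=64 is an assumption; sizes are expected to be powers of two.
    """
    sets = size_bytes // (ways * line_bytes)
    return {
        "sets": sets,
        "index_bits": sets.bit_length() - 1,         # log2(sets)
        "offset_bits": line_bytes.bit_length() - 1,  # log2(line size)
    }


# 32KB, 8-way L1 data cache and 512KB, 16-way L2, as described above.
L1D = cache_geometry(32 * 1024, ways=8)
L2 = cache_geometry(512 * 1024, ways=16)
print(L1D)
print(L2)
```

Under the 64-byte line assumption, that works out to 64 sets for each 32KB L1 array and 512 sets for the L2.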

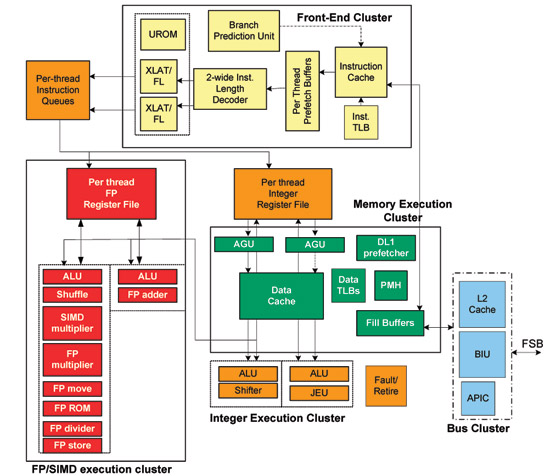

Intel's Atom Microarchitecture

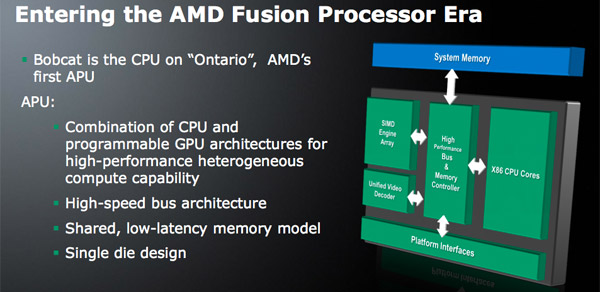

Unlike the original Atom, Bobcat will never ship as a standalone microprocessor. Instead it will be integrated with other cores and a GPU and sold as a single SoC. The first incarnation of Bobcat will be a processor due out in early 2011 for netbooks and thin and light notebooks called Ontario. Ontario will integrate two Bobcat cores with an AMD GPU manufactured on TSMC’s 40nm process (Bobcat will be the first x86 core made at TSMC). This will be the first Fusion product to hit the market.

Note that there's an on-die memory controller but it's actually housed in between the CPU and GPU in order to equally serve both masters.

76 Comments


stalker27 - Wednesday, August 25, 2010

Those "some of you" don't make them R&D money... which, silly boy that you are... got you those fast chips in the first place.

Oh boy, how important some people think they are.

iwod - Tuesday, August 24, 2010

Deleted:

iwod - Tuesday, August 24, 2010

I am not a Professional Engineer, but I do have my degree in Electrical Engineering. I fully understand how Models and Simulations, MATLAB, Video Encoding, or CG Rendering require as much Performance as they can get.

But to the world, I am sorry, you are right. You are exactly not counted in "the rest of the world". You are inside a professional niche whether you like it or not. That includes even Hardcore PC gamers, a group which is shrinking day by day due to competition from consoles. No, this is not to say PC Gaming is going to die. It just means it is getting smaller. And this trend is not going to change until something dramatic happens.

The rest of the world, counted by BILLIONS, are moving, or looking to move, to iPad, Netbook, Cheap Notebook, or something that just gets things done as cheaply as possible. It is the reason why Netbooks took off. Why Atom based All in One PCs took off. No one in the marketing department knew such a Gigantic market existed.

Lastly, I want to emphasize, by the "world" I really mean the World. Not an American view of the world which would just literally be America by itself. China, India, Brazil, and even countries like Japan are having trouble selling high end PCs.

jabber - Wednesday, August 25, 2010

Yep, all you computer engineer folks and render farms etc. account for a very small minority in the "world of computer users". You are not mainstream users.

In general terms the world isn't really that interested anymore in CPU performance improvements.

Most folks out there just want smaller and lower power so they can carry a computer around with them. They don't give a damn what the CPU architecture is.

The leviathan CPU approach by AMD and Intel could go the way of the dinosaur for mainstream computing. ARM could well be the new mainstream CPU leader in just five years.

Just think outside your own little box.

B3an - Wednesday, August 25, 2010

Ridiculous small minded comment.

Render farms and the like may not be mainstream, but gaming is, then there's things like video encoding, servers, workstations, databases, all very popular mainstream stuff that millions of people use and the internet also relies on.

A very large percentage of computer users will always want faster CPUs.

If Intel or AMD did what you think most people want, then nothing would progress either. No 3D interfaces, no artificial intelligence, no anything, as the power needed for it would never be there.

BitJunkie - Wednesday, August 25, 2010

It all comes down to usage models right? The point is that AMD and Intel are trying to capture as many usage models as possible within a given architecture.

This is why modular design is kind of appealing - you can bolt stuff together to hit the desired spot in the thermal-computational envelope.

The thing that "engineers" fall foul of is that there is a divergence going on. On the one hand general computing is dominating, with a desire to drive down power usage. On the other hand there is the same appetite for improved computational performance as we get smarter and more ambitious in the way we tackle engineering problems.

The issue is that both camps are looking to the same architecture for answers.

The reason why that doesn't work for me is that some computations just don't benefit from parallelism - more cores doesn't mean more productivity. Therefore I want to see the few cores that I do use become super efficient at flipping bits on a floating point calculation.

Right now there's no clear answer to that problem - but it will probably come with Fusion and the point at which the GPU takes the role that math co-processors did before being swallowed into the CPU. For this to work we need Microsoft to handle the GPU compute stuff natively within Windows so that existing code can execute and not think about what part of the hardware is lifting the load.

Therefore my sincere hope is that GPUs will become the new math co-processors and Windows 8 will make that happen.

Oh, and there's no need for any tribalism here wrt usage models. It's all good.

jabber - Wednesday, August 25, 2010

No, it's not small minded. It's looking at the big picture.

The big picture is that for most users their CPU power needs were reached and surpassed some time ago.

CPUs are not the bottleneck in modern PCs. Storage systems are.

We need better, cheaper and faster storage.

I've been pushing out 1.6GHz dual core Atoms to 95% of my small business customers and a good chunk of domestics for the past year.

I haven't had one moan or complaint that the PCs were not fast enough. Very few customers are hardcore gamers. Gamers are still a small subsection of the computing world.

I'm not asking AMD/Intel to stop research in new and faster CPU designs. Keep going boys, it's all good.

I'm just saying that the majority of mainstream computing lies along a very different path going forward to those that require power at all costs.

Not all of us need octa-cores at 4GHz+. A lot of us can get by with a 2GHz dual core and a half decent 7200rpm HDD.

Most of the PCs I see are still single core. Folks are managing just fine right now.

Plenty of folks are now managing with just 1GHz or less on a mobile device. That's why Intel are taking ARM more seriously, as they see the future mainstream being more low power and mobile based than leviathan mega-core-mega-wattage beasts.

Things will change rapidly over the next three or four years.

Aries1470 - Friday, August 27, 2010

Quote: "We need better, cheaper and faster storage.

I've been pushing out 1.6GHz dual core Atoms to 95% of my small business customers and a good chunk of domestics for the past year.

I haven't had one moan or complaint that the PCs were not fast enough. Very few customers are hardcore gamers. Gamers are still a small subsection of the computing world."

Well, I for one totally agree. I purchased an Atom 330 dual core last year, and it does more than enough.

I already had a much more powerful system, which I use about.... once or twice a month if that! It is a quad core, has 4 gigs and a 2GB 9600 GPU.

I have moved away from gaming and encoding and all that stuff.

The motherboard I have is:

ATOM-GM1-330, which I imported to Australia from the U.S.A., since the distributor here does not bring this model.

I have paired it with 4GB of memory, but am using only 3GB, since I am running 32-bit systems (XP & Win 7)

A Blu-ray writer....

and a LP 5450 used with an adapter from 16x -> 1x

It plays blu-ray great while browsing at the same time!

I browse the internet at the same time as my wife and kid watch a movie on the 50" plasma, with NO stutter.

Needless to say, I got it as a secondary pc... and has become my main pc. It is left on basically 24/7, with NO fan on the cpu! Low power consumption too.

It performs great for the functions I want, and can even play Civ IV on it... but not much else. If I want to play real gaming, I use my other pc.

So for what it is, it works great for my needs! No useless power consumption, does its Boinc too, albeit slow, but still better than my older P2-550... that was still alive a few years ago.

Most people I know, don't use their pc for gaming anymore, mostly for facebook/ twitter and video calling, they have their Wii's & Xbox and one has a PS3...

Ok, end of rant, but to conclude, I concur, your average Joe, has his gaming machine, and his pc is for htpc or not a gaming power pc.

gruffi - Thursday, August 26, 2010

Sandy Bridge, the architecture that is supposed to leap again like Pentium 4 to C2D? Thanks for the joke of the day.

Sandy Bridge looks more like a minor update of Nehalem/Westmere. More load/store bandwidth, improved cache, AVX and maybe a few other little tweaks. Nothing special. I think it will be less of an improvement than Core 2 (Core successor) and Nehalem (Core 2 successor) were.

In many ways Bulldozer looks superior to what Intel has to offer in 2011.

Lonbjerg - Tuesday, August 24, 2010

I don't care for "Bobcat"...mediocre performance in a cramped form factor (netbooks) has as much interest to me as being dragged naked across a field filled with broken glass.

The "Bulldozer" looks fine on paper...problem is that so did Phenom.

I look forward to the real reviews, and not PR slices :)