SeaMicro Announces SM10000 Server with 512 Atom CPUs and Low Power Consumption

by Anand Lal Shimpi on June 14, 2010 1:38 PM EST

Posted in: IT Computing, CPUs, SeaMicro

Two years ago when I first covered Intel’s Atom architecture I proposed that Moore’s Law has paved the way for two things: 1) ridiculously fast microprocessors, and 2) fast enough microprocessors.

The first category is used to push the bleeding edge of software. Everything from scientific computation to 3D gaming. If it’d never been done before, Moore’s Law enabled companies like AMD, Intel and NVIDIA to build the microprocessors we needed to make it happen.

The second category is a more recent development. If you don’t need the compute power, Moore’s Law enabled the creation of smaller, cheaper, more power efficient microprocessors to deliver performance that’s good enough. These types of chips are found in everything from netbooks to smartphones.

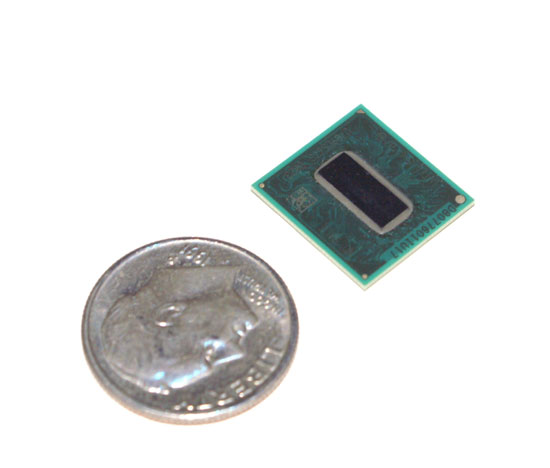

Intel's Atom (Silverthorne) Processor

A number of folks are arguing that the same approach can be applied to server workloads. They argue that the majority of the time the servers that drive your favorite websites or cloud services are idle, at least from the CPU perspective. For servers that aren’t virtualized, this is largely true. You don’t build servers for average load; you build them to make sure they can withstand maximum load. The unfortunate result is that when these servers are running during periods of low traffic, they aren’t very power efficient.

For a small operation like AnandTech this isn’t much of a problem. But on a larger scale, it adds up. Data centers are easily power constrained. High density servers give us dozens of microprocessor cores in the space of several rack units. That’s great for compute, but terrible for power consumption.

Also keep in mind that even the slowest servers you can buy today are still pretty powerful. In many cases you need physical box redundancy but not the added horsepower of having more hardware. Add in live fail-over support to minimize downtime and you’ve got even more wasted power.

Today if you don’t need the performance a multi-core Xeon can offer you, but you need tons of physical servers, there are very few options. You can stick with a simple single core server but then your power consumption even at idle is still in the range of dozens of watts. You could make the argument that as CPUs get more powerful, there’s room for a category of “fast enough” servers. And thankfully we already have a processor that’s “fast enough”. The Atom.

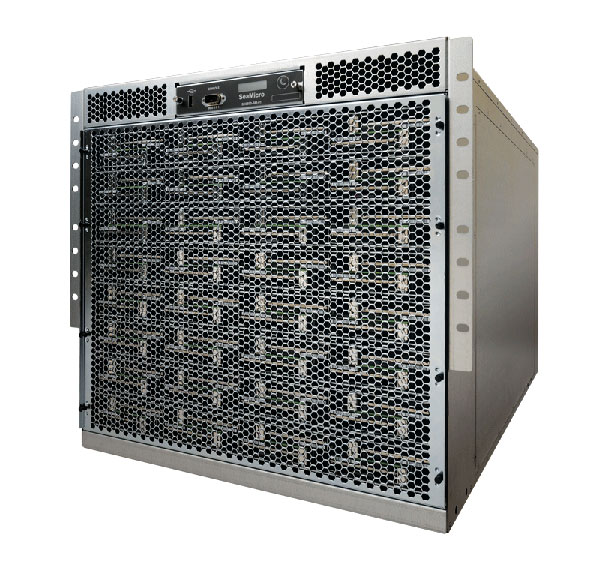

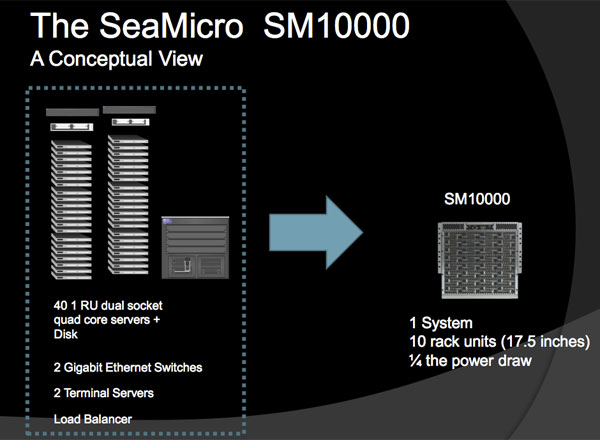

Take 512 Atom-based servers, cram them into a box that consumes 2kW of power, give it boatloads of networking and you’ve got the SM10000 by SeaMicro. This $139K box is designed to replace dozens of quad-core Xeon/Opteron boxes that remain idle most of the time. According to SeaMicro, if your business model is that you’re giving something away for free on the Internet, then the SM10000 might be for you.
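To put those headline numbers in per-server terms, here is a rough back-of-the-envelope sketch in Python. It uses only the three figures quoted above (512 servers, 2kW, $139K). The even split across servers is an assumption for illustration, not a SeaMicro specification: the chassis total also covers networking, the custom ASIC and cooling, so the real per-node share will differ.

```python
# Figures quoted in the article for the SeaMicro SM10000.
NODES = 512               # Atom-based servers per chassis
CHASSIS_WATTS = 2000      # "2kW of power"
CHASSIS_PRICE = 139_000   # "$139K"


def per_node(total: float, nodes: int = NODES) -> float:
    """Split a chassis-level total evenly across the nodes.

    Assumption: an even split. The chassis total also pays for
    networking, the ASIC and cooling, so this is only a rough bound.
    """
    return total / nodes


watts_per_node = per_node(CHASSIS_WATTS)
price_per_node = per_node(CHASSIS_PRICE)

print(f"{watts_per_node:.2f} W per server")   # 3.91 W per server
print(f"${price_per_node:.2f} per server")    # $271.48 per server
```

Under that even-split assumption each server works out to roughly 4 watts, against the idle draw in the range of dozens of watts mentioned earlier for a simple single-core server.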

SeaMicro’s CEO comes from the network field, while its CTO is a former Sun and AMD microprocessor architect (he was apparently one of five chief architects on AMD’s Bulldozer core). The company not only makes the 512 Atom server but also a custom ASIC inside that makes the technology work.

The SM philosophy is simple: you don’t take a space shuttle to the grocery store. If you don’t need such a beefy server for your workload, why continue to use one?

53 Comments


nofumble62 - Tuesday, June 15, 2010 - link

So now this server need only 4 racks. Cheaper, more energy efficient.

I think they have demonstrated something like 80 cores on a chip couple years ago. You bet it is coming.

joshua4000 - Tuesday, June 15, 2010 - link

I thought current AMD/Intel CPUs will save some power due to reduced clock speeds when unused. Wasn't there that core-parking thingy introduced with Win7 as well?

So why opt for a slower solution which will probably take a good amount of time longer to finish the same task?

piroroadkill - Tuesday, June 15, 2010 - link

Why would I want a bunch of shite servers? Initially, I thought they were just unified into one big ass server, or maybe a couple of servers.

But this as I understand it, is effectively a bunch of netbooks in an expensive box. No.

LoneWolf15 - Tuesday, June 15, 2010 - link

Silly question, but...

Say I run VMWare ESX on this box. Is it possible to dedicate more than one server board to support a single virtual machine, or at least, more than one server board to running the VMWare OS itself? Or am I looking at it all wrong?

It seems like even if I could, VMWare's limit on how many CPU sockets you can have for a given license might be a limiting factor.

cdillon - Tuesday, June 15, 2010 - link

ESX and other virtualization systems can cluster, but not in that way. Each Atom on the SM10000 would have to run its own copy of the virtualization software. That's not what you want to do. The SM10000 is really the *opposite* of virtualization. Instead of taking fewer, faster CPUs and splitting them up into smaller virtual CPUs, which is a very flexible way of doing things, they're using far more slower CPUs in a completely inflexible way. I'm having a really hard time thinking of any actual benefits of this thing compared to virtualization on commodity servers.

cl1020 - Tuesday, June 15, 2010 - link

Same here. For the money you could easily build out 500 VMs on commodity blade servers running ESX and have a much more flexible solution.

I just don't see any scenario where this would be a better solution.

mpschan - Tuesday, June 15, 2010 - link

Many people here are talking about how virtualization is a better solution and how they can't envision a market for this.

Personally, I think that this isn't a bad first design for a server of this type. Sure, it could be better. But I doubt they whipped up this idea yesterday. They probably spent a great deal of time designing this sucker from the ground up.

The market that will find this useful might be small, but it also might be large enough to fund the next version. And who knows what that might be capable of. Better cross-"server" computation, better access to other CPUs' memory, maybe other CPU options, ECC, etc.

MGSsancho - Tuesday, June 15, 2010 - link

Would be an awesome server if you want to index things. 64 nics on it? yes I know 1 server could easy connect to thousands of connections but this beast has a market. maybe for server testing; use this box as a client node, have each atom have 25 concurrent connections. that is 12,800 connections to your server array. great for testing IMO. or how about network testing; with 64 nics you hook up 10 or so nics to each switch and test your setup. you can mix it up with 10gb Ethernet nics as well. I personally see this device being amazing for testing.

ReaM - Thursday, June 17, 2010 - link

Hi,

Atom has a horrible performance per watt value!!!

Using simple mobile core2duo will save you more power!!!

I mean, read every Atom test, the mobile chips are far better. (as I can remember up to 9 times more efficient, for the same performance)

this product is a failure

Oxford Guy - Friday, June 18, 2010 - link

"The things that suck about the atom:

1. double precision. Use a double, and the Atom will grind to a halt.

2. division. Use rcp + mul instead.

3. sqrt. Same as division.

All of those produce unacceptable stalls, and annihilate your performance immediately. So don't use them!

Now, you'd imagine those are insurmountable, but you'd be wrong. If you use the Intel compiler, restrict yourself to float or int based SSE instructions only, avoid the list of things that kill performance, and make extreme use of OpenMP, they really can start punching above their weight. Sure they'll never come close to an i7, but they aren't *that* bad if you tune your code carefully. In fact, the biggest problem I've found with my Atom330 system is not the CPU itself, but good old fashioned memory bandwidth. The memory bandwidth appears to be about half that of Core2 (which makes sense since it doesn't support dual channel memory), and for most people that will cripple the performance long before the CPU runs out of grunt.

The biggest problem with them right now is that they are so different architecturally from any other x86/x64 CPU that all apps need to be re-compiled with relevant compiler switches for them. Code optimised for a Core2 or i7 performs terribly on the atom."

How do these drawbacks compare to the ARM, Tegra, and Apple chips?