Windows Home Server v2 'Vail' Beta: Drive Extender v2 Dissected

by Ryan Smith on April 27, 2010 10:39 PM EST. Posted in Operating Systems, Ryan's Ramblings, WHS, Windows

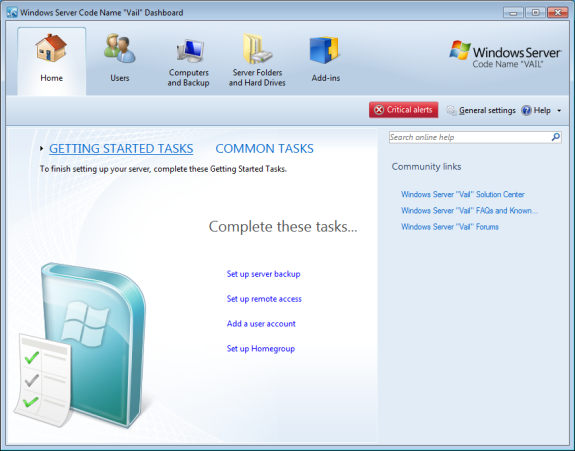

Yesterday Microsoft released the first public beta of the next version of Windows Home Server, currently going under the codename Vail (or as we like to call it, WHS v2). WHS v2 has been something of a poorly kept secret, as word of its development leaked as early as 2008. More recently, an internal beta leaked late last year, confirming that WHS v2 existed and giving everyone an idea of what Microsoft has in store for the next iteration of its fledgling home server OS.

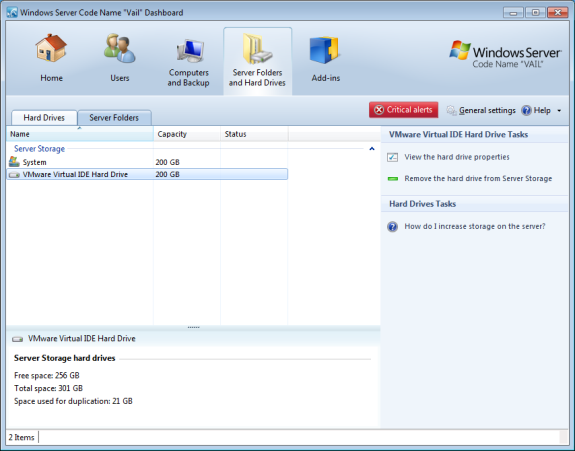

As was the case with WHS v1, WHS v2 is having what we expect to be a protracted open beta period to squish bugs and to solicit feedback. This is very much a beta release by Microsoft’s definition (as opposed to Google’s definition) so it’s by no means production-worthy, but it’s to the point where it’s safe to throw it on a spare box or virtual machine and poke at it. Being based on Windows Server 2008 R2 (WHS v1 was based on Server 2003), the underlying OS is already complete, so what’s in flux are WHS-specific features.

We’ll cover WHS v2 more in depth once it’s closer to shipping, but there’s one interesting thing that caught our eye with WHS v2 that we’d like to talk about: Drive Extender.

Drive Extender v2: What’s New

Drive Extender was the biggest component of the secret sauce that set WHS apart from any other Microsoft OS. It was Drive Extender that abstracted the individual hard drives from the user so that the OS could present a single storage pool, and it was Drive Extender that enabled RAID-1 like file duplication on WHS v1. However, Drive Extender was also the most problematic component of WHS v1: it had to be partially rewritten for WHS Power Pack 1 after it was discovered that Drive Extender was causing file corruption in certain situations. The new Drive Extender solved the corruption issues, but it also slightly changed how the mechanism worked, doing away with the “landing zone” concept in favor of writing files to their final destination in the first place.

For WHS v2, Microsoft has once again rewritten Drive Extender, this time giving it a full overhaul. Microsoft hasn’t released the full details on how Drive Extender v2 works, but we do know a few things that are worth discussing.

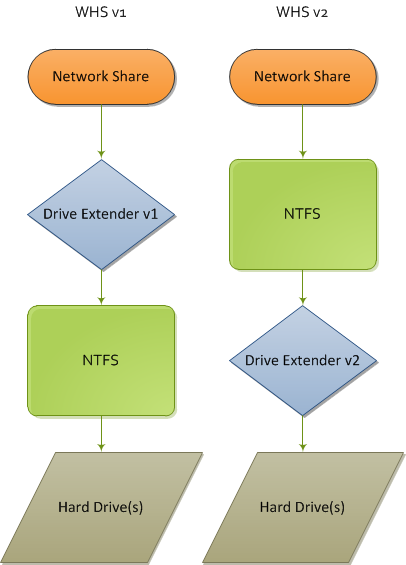

At the highest level, Drive Extender v1 was file based, sitting on top of the file system with the network shares going through it to access files. Hard drives in turn were NTFS formatted and contained complete files, with Drive Extender directing the network sharing service to the right drive through the use of NTFS tombstones and reparse points.

Drive Extender v2 is turning this upside down. The new Drive Extender is implemented below the file system, putting it between NTFS and the hard drives. With this change also comes a change in how data is written to disk: the drives are no longer NTFS formatted with the files spread among them; instead they use a custom format that only WHS v2 can currently read. With Drive Extender v2 sitting between NTFS and the hard drives, anything that hits NTFS directly now receives full abstraction without the need to go through shared folders. In a nutshell, while Drive Extender v1 was file based, v2 is block based.

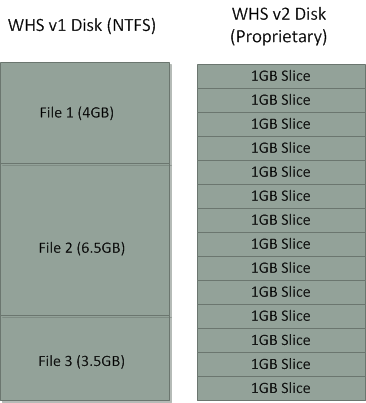

With the move to block based storage and a custom file system also comes a change in how data is stored on hard drives. In place of storing files on a plain NTFS partition, WHS v2 now uses a custom file system that stores data in 1GB chunks, with files and parts of files residing in these chunks. In the case of folder duplication, the appropriate chunks are duplicated instead of files/folders as they were on WHS v1.
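To make the block based idea concrete, here is a minimal sketch of how a volume offset could be translated into a chunk and then into one or more physical copies. Only the 1GB chunk size and the idea of duplicating chunks come from the description above; the `ChunkLocation`, `ChunkMap`, and `chunks_spanned` names, the drive labels, and the lookup scheme are hypothetical, since Microsoft has not published the on-disk format.

```python
from dataclasses import dataclass

CHUNK_SIZE = 1 << 30  # 1GB chunks, as described in the article


@dataclass(frozen=True)
class ChunkLocation:
    drive: str  # hypothetical drive label, e.g. "disk1"
    slot: int   # hypothetical position of the chunk on that drive


class ChunkMap:
    """Toy map from a volume chunk index to its physical copies."""

    def __init__(self):
        self._copies = {}  # chunk index -> list of ChunkLocation

    def place(self, chunk_index, *locations):
        # One location means no duplication; two means a duplicated chunk.
        self._copies[chunk_index] = list(locations)

    def locate(self, volume_offset):
        # Split the byte offset into (which chunk, where inside that chunk).
        chunk_index, offset_in_chunk = divmod(volume_offset, CHUNK_SIZE)
        return [(loc, offset_in_chunk) for loc in self._copies[chunk_index]]


def chunks_spanned(start_offset, length):
    """Return the chunk indices touched by a byte range on the volume."""
    first = start_offset // CHUNK_SIZE
    last = (start_offset + length - 1) // CHUNK_SIZE
    return list(range(first, last + 1))
```

In this model, a range that crosses a chunk boundary touches two chunks, and nothing forces those two chunks to sit on the same drive.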

Microsoft also threw in a bit more for data integrity, even on single-drive systems. Drive Extender v2 now keeps an ECC hash of each 512 byte hard drive sector (not to be confused with a chunk) in order to find and correct sector errors that on-drive ECC can’t correct or, worse, silently passes on. MS’s ECC mechanism can correct 1-bit and 2-bit errors, though we don’t know if it can detect larger errors. MS pegs the overhead of this at roughly 12% of capacity, so our best guess is that they’re storing about 64 bytes of ECC data per sector (64 out of every 512 bytes is 12.5%).
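The overhead figure can be sanity-checked with simple arithmetic. The sketch below assumes 512 byte sectors as described above and treats the per-sector code size as the only unknown: a 64-bit (8 byte) code would account for only about 1.6% of capacity, whereas 64 bytes per sector works out to 12.5%. The function names are illustrative, and Microsoft has not published the actual code size.

```python
SECTOR_BYTES = 512  # sector size given in the article


def overhead_vs_data(code_bytes_per_sector):
    """ECC bytes as a fraction of the user data they protect."""
    return code_bytes_per_sector / SECTOR_BYTES


def overhead_vs_raw(code_bytes_per_sector):
    """ECC bytes as a fraction of raw space (data plus ECC)."""
    return code_bytes_per_sector / (SECTOR_BYTES + code_bytes_per_sector)


for code_bytes in (8, 64):  # 8 bytes = 64 bits
    print(
        f"{code_bytes:>2} bytes/sector: "
        f"{overhead_vs_data(code_bytes):.2%} of data, "
        f"{overhead_vs_raw(code_bytes):.2%} of raw capacity"
    )
```

Whether the quoted "roughly 12%" is measured against user data or raw disk space is not stated, which is why both ratios are shown.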

The Good & The Bad

All of these changes to Drive Extender will bring some significant changes to how WHS operates. The biggest benefit is that from a high-level view the storage pool now operates almost exactly like plain NTFS. The NTFS volumes that Drive Extender v2 generates can be treated like any other NTFS volumes, enabling support for NTFS features such as Encrypting File System and NTFS compression that WHS v1 couldn’t handle. The same goes for application compatibility in general: applications that want to interact directly with the drive pool should work far better under Drive Extender v2, which is one of Microsoft’s big goals for WHS v2.

Meanwhile at the low level, using block based storage means that Drive Extender v2 doesn’t behave as if it were tacked on. Open files are no longer a problem for Drive Extender, which can now duplicate and move those files while they’re open, something Drive Extender v1 couldn’t do and which would lead to file conflicts. Speaking of duplication, it’s now instantaneous instead of requiring Drive Extender to periodically reorganize and copy files. The move to block based storage also means that WHS v2 data drives and their data can be integrated into the storage pool directly, without first having to copy the data into the pool and then wipe and add the drive, which means it’s going to be a great deal easier to rebuild a system after a system drive failure. Finally, thanks to chunks, file size is no longer limited by the largest drive: a single file can span multiple drives by residing in chunks on multiple drives.

However, this comes with some very notable downsides, some of which MS will have to deal with before launching WHS v2. The biggest downside here is that only WHS v2 can read MS’s new data drive file system: you can no longer pull a drive from a WHS box and recover its files by reading it as a plain NTFS volume. This was one of WHS’s best recovery features, as a failing drive could be hooked up to a more liberal OS (e.g. Linux) and have the data pulled off that way.

The other major downside to the new Drive Extender is that the chunking system means that a file isn’t necessarily stored on a single disk. We’ve already touched on the fact that excessively large files can be chopped up, but this theoretically applies to any file big enough that it can’t fit into leftover space in an existing chunk. This in turn gives WHS v2 a very RAID-0 like weakness to drive failures, as a single drive failure could now take out many more files depending on how many files have their pieces on the failed drive. Our assumption is that Drive Extender v2 is designed to keep files whole and on the same disk if at all possible, but we won’t be able to confirm this until MS releases the full documentation on Drive Extender v2 closer to WHS v2’s launch.
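The RAID-0 like exposure can be illustrated with a small model. This is only a sketch under assumptions: the file names, chunk IDs, drive labels, and the `files_lost` helper are all hypothetical, and the real placement policy of Drive Extender v2 is not documented. A file is treated as lost if any one of its chunks has every copy on a failed drive.

```python
def files_lost(file_chunks, chunk_drives, failed_drives):
    """Return the set of files that lose at least one chunk.

    file_chunks:  file name -> list of chunk ids the file occupies
    chunk_drives: chunk id  -> set of drives holding a copy of that chunk
    """
    failed = set(failed_drives)
    lost = set()
    for name, chunks in file_chunks.items():
        # A chunk is gone only when all of its copies sit on failed drives.
        if any(chunk_drives[c] <= failed for c in chunks):
            lost.add(name)
    return lost


# Hypothetical layout: chunks 0-2 are unduplicated, chunk 3 is duplicated.
chunk_drives = {0: {"A"}, 1: {"B"}, 2: {"C"}, 3: {"A", "B"}}
file_chunks = {
    "movie.mkv": [0, 1, 2],  # large file spread over three drives
    "photo.jpg": [2],        # small file living in one chunk
    "doc.txt": [3],          # file in a duplicated chunk
}

print(files_lost(file_chunks, chunk_drives, ["B"]))
print(files_lost(file_chunks, chunk_drives, ["A", "B"]))
```

In this layout, losing drive B alone takes out the large file even though only a third of it lived there, while the duplicated file survives until both of its drives fail.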

First Thoughts

In discussing WHS v2 and Drive Extender v2, there’s one thing that keeps coming to mind: ZFS. ZFS is Sun/Oracle’s next-generation server file system, which has been in continuing development for a few years now. ZFS is a block based file system for creating storage pools that features easy expandability and block hashing (among other features), making it not unlike Drive Extender v2. ZFS ultimately goes well beyond what Drive Extender v2 can do, but the fundamental feature set the two share is close enough that Drive Extender v2 can easily be classified as a subset of ZFS. Whether this is intentional or not, based on what we know thus far about Drive Extender v2, Microsoft has created what amounts to a ZFS-lite low-level file system with NTFS sitting on top of it. Make no mistake, this could prove to be a very, very cool feature, and is something we’re going to be keeping an eye on as WHS v2 approaches release.

In the meantime, Microsoft is still taking feature suggestions and bug reports on WHS v2 through Microsoft Connect. We don’t have any specific idea on how long WHS v2 will be in beta, but we’d bet on a Winter 2010 release similar to WHS v1 back in 2007, which means it would be in beta for at least a couple more months.

52 Comments


ATimson - Wednesday, April 28, 2010 - link

Awesome as v2 might be, unless there's an in-place upgrade for my v1 server it'll be a hard sell for me...

rrinker - Wednesday, April 28, 2010 - link

That's pretty much a given, since there is no in-place upgrade capability to move from a 32 bit to 64 bit OS. I am definitely planning to upgrade - my existing box has become maxed out anyway so I need new hardware to add capacity. I'm thinking an i3 Clarkdale would make a reasonable processor, with the power save features - even if the video might actually be overkill since you only need video for the OS install.

At any rate I'm really looking forward to this Vail being finished and released, I'm making do with what I have now but I really need more space.

clex - Wednesday, April 28, 2010 - link

An option to upgrade was my #1 feature request for WHS v2. It's going to be a huge pain for me to put the 4.5TB of data currently on my WHS v1 somewhere while I install WHS v2 on my server. I guess I'm going to have to buy three 2TB drives and some SATA to USB dongles in order to upgrade. Bad part is that chances of a failure on new hardware are high. I might have to buy the HDDs now, run them for 6 months and then do the transfer.

davepermen - Wednesday, April 28, 2010 - link

well, whs does (on duplicated drives) something like raid1, which is just as secure as raid5. your data is always on two disks.

where is which superior?

in data-loss-security terms, they are equal. one failing drive in the drivepool == no loss. two failing drives == loss.

in storage-loss for the security, raid5 is superior. if all your data is in duplication mode on whs, it needs 2x the storage space. raid5 needs "one additional disk".

in flexibility, whs wins (and this is why whs uses that method). need more space? drop in a new disk of any size, and the storage pool grows.

you can't simply do that with raid5. you can't set up a raid5 with a 500gb disk, a 1tb disk, a 1.5tb disk, a 750gb disk, two 2tb disks, etc., and swap them around whenever you see fit.

the home server is designed to be easy to use. expanding a raid5 isn't that easy. (esp not with varying disk sizes).

so what you lose is size-efficiency on the data-duplication side. what you gain is massive flexibility.

you don't lose any data security.

Nomgle - Wednesday, April 28, 2010 - link

"in data-loss-security terms, they are equal. one failing drive in the drivepool == no loss. two failing drives == loss."

The level of loss is vastly different though.

Two failing drives on a RAID5 pool == total loss of *everything* !

Two failing drives on a DriveExtender pool == loss of that data only. The other drives in the pool will still be readable - if you've got a lot of drives, this could add up to a *lot* of data :)

davepermen - Wednesday, April 28, 2010 - link

oh right! so it's actually a gain in security :)

Nomgle - Thursday, April 29, 2010 - link

It was, yes.

That feature is now gone with DriveExtender2 though - hence the confusion.

If you have two drives die in Vail, you could potentially lose everything ... even WITH duplication enabled !

(because your files may be spread over all your drives)

-=Hulk=- - Wednesday, April 28, 2010 - link

"The NTFS volumes that Drive Extender v2 generates can be treated like any other NTFS volumes, enabling support for NTFS features such as Encrypted File System and NTFS compression that WHS v1 couldn’t handle."

Are you sure that encryption is supported?

http://social.microsoft.com/Forums/en-US/whsvailbe...

Ryan Smith - Wednesday, April 28, 2010 - link

EFS (per file encryption), not BitLocker (whole drive encryption).

Ryan Smith - Wednesday, April 28, 2010 - link

With respect to RAID 5, that's certainly an option. Going back to our ZFS comparison, ZFS has a not-quite-RAID mode called RAID-Z that functions very similarly to RAID-5 while maintaining the storage pool concept. So it would be entirely possible to implement this on MS's new low-level file system if they wanted to do the work (and boy, it would be a lot of work!).

However they won't, and I'll tell you why. Parity RAID is not user friendly. If you lose a drive not only does performance suffer, but you have to go through a long and slow rebuilding process. Drives fail and MS knows this, and this is why they have duplication, which in a fully duplicated storage pool is just as good as (if not better than) RAID-5 when it comes to handling single drive failures. This issue has been hashed over in and out of MS since WHS became a product, and Microsoft's decision has been that it's Windows HOME Server - they want something that a novice can handle, and in their eyes duplication is easier than any kind of parity RAID system.

Duplication also involves a lot less CPU overhead, which is a very big deal for the price range MS is targeting. WHS is intended to run decently on Atom processors in order to keep system costs down along with power consumption and heat dissipation. Parity RAID is going to have a large amount of overhead, and while that shouldn't be a huge deal for a proper dual-core CPU (C2D, Athlon/Phenom II) it would be a big deal on an Atom. So for most of these OEM WHS systems, there's a great deal of truth to any claims that WHS duplication is faster than RAID-5.

With that said I don't doubt that parity RAID is still going to be the most popular option for techies. It takes more work to set up and ideally you want a dedicated controller ($$), but it's more space efficient than duplication.

As for the consumer side, there's a pretty vocal faction within MS that wants the WHS storage pool to always be duplicated (i.e. you can't turn duplication off), as these are the server guys looking at how to maximize uptime and minimize the chance of data loss. They won't get their way for WHS v2, largely thanks to the fact that such a requirement would drive up WHS computer costs due to the need for a second (or more) hard drive. But depending on how things go with WHS v2, I would not find it surprising if WHS v3 was forcibly redundant.