High-End x86: The Nehalem EX Xeon 7500 and Dell R810

by Johan De Gelas on April 12, 2010 6:00 PM EST

Posted in: IT Computing, Intel, Nehalem EX

The Uncore Power of the Nehalem EX

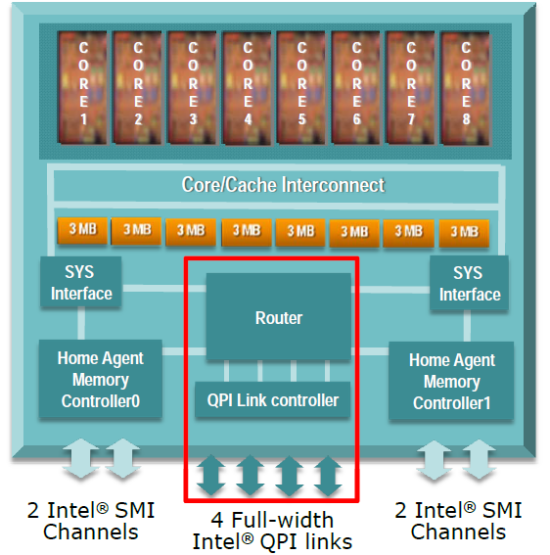

Feeding an eight-core processor is no easy feat. You cannot just add a bit of cache and call it a day, so much attention was paid to the uncore. When you need to feed eight fast cores, L3 cache bandwidth is critical. Intel used a system of two 32-byte-wide counter-rotating rings and eight separate 3MB banks so that the L3 cache can deliver up to 200GB/s at a low 21ns load-to-use latency. The last-level cache also acts as a snoop filter, so that cache coherency traffic does not kill performance scaling.
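As a quick sanity check on those figures, the sketch below multiplies out the cache capacity and divides the peak bandwidth across the cores. The even per-core split is an assumption made purely for illustration; the article only gives the aggregate 200GB/s number.

```python
# Figures quoted in the article for the Nehalem EX L3 cache.
L3_BANKS = 8
BANK_SIZE_MB = 3
L3_PEAK_BANDWIDTH_GBPS = 200
CORES = 8


def l3_summary():
    """Return (total L3 capacity in MB, average peak bandwidth per core in GB/s)."""
    total_mb = L3_BANKS * BANK_SIZE_MB
    # Assumption: bandwidth is shared evenly by all cores. Real traffic
    # depends on which banks each core happens to hit.
    per_core_gbps = L3_PEAK_BANDWIDTH_GBPS / CORES
    return total_mb, per_core_gbps


total_mb, per_core_gbps = l3_summary()
print(f"Total L3 capacity: {total_mb} MB")
print(f"Average peak L3 bandwidth per core: {per_core_gbps:.1f} GB/s")
```

The point of the eight banks is visible here: each core can, on average, count on roughly one bank's worth of bandwidth even when all eight are busy.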

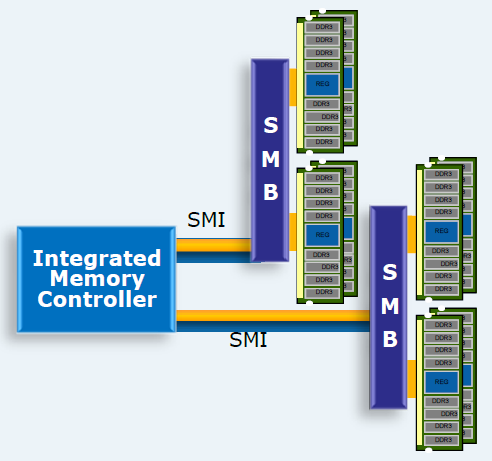

An 8-port router regulates the traffic between the memory controllers, the caches, and the QPI links. It adds 18ns of latency and should in theory be capable of routing 120GB/s. Each memory controller has two serial interfaces (SMI) working in lockstep to memory buffers (SMBs), which perform the same tasks as the AMBs on FB-DIMMs (Fully Buffered DIMMs). The DIMMs send their bits out in parallel (64 per DIMM), and the memory buffer has to serialize the data before sending it to the memory controller. This allows Intel to give each CPU four memory channels. If the memory interface weren't serial, the boards would be incredibly complex, as hundreds of parallel lines would be necessary.

Each SMI can deliver 6.4GB/s in each direction (full duplex), or 12.8GB/s of total bandwidth. Each SMB has two DDR3-1066 memory channels, which together can deliver 17GB/s half duplex. To transform this 17GB/s half duplex data stream into a 6.4GB/s full duplex one, the SMB needs about 10W at the most (TDP). In practice, each SMB needs to dissipate about 7W, hence the small black fans that you will see on the Dell motherboard later.
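The bandwidth figures above can be reproduced with a few lines of arithmetic. This is a rough sketch: the nominal 1066 million transfers per second and the 8-byte (64-bit) data bus per channel are the usual DDR3-1066 assumptions, and the variable names are illustrative only.

```python
# Assumptions: nominal DDR3-1066 transfer rate and a 64-bit data bus per channel.
DDR3_TRANSFERS_PER_SEC = 1066e6
DDR3_BUS_BYTES = 8
CHANNELS_PER_SMB = 2

# Figure quoted in the article: one SMI moves 6.4GB/s in each direction.
SMI_GBPS_PER_DIRECTION = 6.4

# DDR3 side of the buffer: parallel and half duplex (reads and writes share the bus).
ddr3_channel_gbps = DDR3_TRANSFERS_PER_SEC * DDR3_BUS_BYTES / 1e9
smb_ddr_side_gbps = CHANNELS_PER_SMB * ddr3_channel_gbps

# SMI side of the buffer: serial and full duplex (reads and writes at the same time).
smi_total_gbps = 2 * SMI_GBPS_PER_DIRECTION

print(f"One DDR3-1066 channel: {ddr3_channel_gbps:.1f} GB/s")
print(f"DDR3 side of one SMB:  {smb_ddr_side_gbps:.1f} GB/s (half duplex)")
print(f"SMI side of one SMB:   {smi_total_gbps:.1f} GB/s (both directions combined)")
print(f"SMI/DDR3 ratio:        {smi_total_gbps / smb_ddr_side_gbps:.2f}")
```

Note that the serial link's combined 12.8GB/s is only reachable with a mix of reads and writes; a pure read stream is capped at 6.4GB/s per SMI even though the two DDR3 channels behind it could in theory supply about 17GB/s.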

So each CPU has two memory controllers, each of which connects to two SMBs, and each SMB can drive two DDR3 channels with two DIMMs per channel. Thus, each CPU supports sixteen registered DDR3-1066 DIMMs (2 x 2 x 2 x 2). By limiting the design to two DIMMs per DDR channel, the system can support quad-rank DIMMs. So in total, a carefully designed quad-Xeon 7500 server can contain up to 64 DIMMs. As each DIMM can be a quad-ranked 16GB DIMM, a quad-CPU configuration can contain up to 1TB of RAM. So Intel's Nehalem EX platform offers high bandwidth and enormous memory capacity. The flipside of the coin is increased latency and, compared to the total system, a bit of power consumed by the SMBs.
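Multiplying out the memory topology makes the capacity figures easier to verify. The sketch below assumes two memory controllers per CPU with two SMIs (and therefore two SMBs) each, which is what the 64-DIMM and 1TB totals require; the constant names are illustrative.

```python
# Topology assumptions: two memory controllers per CPU, two SMBs per controller.
MEMORY_CONTROLLERS_PER_CPU = 2
SMBS_PER_CONTROLLER = 2
# Figures quoted in the article.
DDR3_CHANNELS_PER_SMB = 2
DIMMS_PER_CHANNEL = 2
CPUS = 4
DIMM_SIZE_GB = 16

dimms_per_cpu = (
    MEMORY_CONTROLLERS_PER_CPU
    * SMBS_PER_CONTROLLER
    * DDR3_CHANNELS_PER_SMB
    * DIMMS_PER_CHANNEL
)
total_dimms = CPUS * dimms_per_cpu
total_capacity_gb = total_dimms * DIMM_SIZE_GB

print(f"DIMMs per CPU:  {dimms_per_cpu}")
print(f"DIMMs per quad-CPU system: {total_dimms}")
print(f"Maximum capacity: {total_capacity_gb} GB ({total_capacity_gb / 1024:.0f} TB)")
```

Changing `CPUS` to 2 or `DIMM_SIZE_GB` to 8 gives the totals for smaller configurations under the same assumptions.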

23 Comments


JohanAnandtech - Tuesday, April 13, 2010

"Damn, Dell cut half the memory channels from the R810!"

You read too fast again :-). Only in quad-CPU config. In a dual-CPU config, you get 4 memory controllers, each of which connects to two SMBs. So in a dual config, you get the same bandwidth as you would in another server.

The R810 targets those that are not after the highest CPU processing power, but want the RAS features and 32 DIMM slots. AFAIK,

whatever1951 - Tuesday, April 13, 2010

2 channels of DDR3-1066 per socket in a fully populated R810 and if you populate 2 sockets, you get the flex memory routing penalty...damn...! R810 sucks.

Sindarin - Tuesday, April 13, 2010

whatever1951 you lost me @ Hello...and I thought Sauron was tough!! lol

JohanAnandtech - Tuesday, April 13, 2010

"It is hard to imagine 4 channels of DDR3-1066 to be 1/3 slower than even the westmere-eps."

On one side you have a parallel half duplex DDR3 DIMM. On the other side of the SMB you have a serial full duplex SMI. The buffers might not perform this transition fast enough, and there has to be some overhead. I am also still searching for the clockspeed of the IMC. The SMIs are on a different (I/O) clock domain than the L3 cache.

We will test with Intel's / QSSC quad CPU to see whether the flexmem bridge has any influence. But I don't think it will do much. You might add a bit of latency, but essentially the R810 is working like a dual CPU with four IMCs just like another (Dual CPU) Nehalem EX server system would.

whatever1951 - Tuesday, April 13, 2010

Thanks for the useful info. R810 then doesn't meet my standard.

Johan, is there any way you can get your hands on an R910 4 Processor system from Dell and bench the memory bandwidth to see how much that flex mem chip costs in terms of bandwidth?

IntelUser2000 - Tuesday, April 13, 2010

The Uncore of the X7560 runs at 2.4GHz.

JohanAnandtech - Wednesday, April 14, 2010

Do you have a source for that? Must have missed it.

Etern205 - Thursday, April 15, 2010

I think AT needs to fix this "RE:RE:RE...:" problem?

amalinov - Wednesday, April 14, 2010

Great article! I like the way in which you describe the memory subsystem - I have read the Intel datasheets and many news articles about Xeon 7500, but your description is the best so far.

You say "So each CPU has two memory interfaces that connect to two SMBs that can each drive two channels with two DIMMS. Thus, each CPU supports eight registered DDR3 DIMMs ...", but if I do the math it seems: 2 SMIs x 2 SMBs x 2 channels x 2 DIMMs = 16 DDR3 DIMMs, not 8 as written in the second sentence. Later in the article I think you mention 16 at different places, so it seems it is really 16 and not 8.

What about an Itanium 9300 review (including general background on the plans of OEMs/Intel for the IA-64 platform)? A comparison of scalability (HT/QPI), memory, and RAS features of Xeon 7500, Itanium 9300 and Opteron 6000 would be welcome. Also I would like to see a performance comparison with appropriate applications for the RISC mainframe market (HPC?) with 4- and 8-socket AMD, Intel Xeon, Intel Itanium, POWER7, newest SPARC.

jeha - Thursday, April 15, 2010

You really should review the IBM 3850 X5 I think?

They have some interesting solutions when it comes to handling memory expansions etc.