Intel X25-V in RAID-0: Faster than X25-M G2 for $250?

by Anand Lal Shimpi on March 29, 2010 8:59 PM EST

Random Read/Write Speed

This test reads/writes 4KB in a completely random pattern over an 8GB span of the drive to simulate the sort of random access you'd see on an OS drive (even this is more stressful than what a normal desktop user would see). I perform three concurrent IOs and run the test for 3 minutes. Results are reported as average MB/s over the entire run.
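The access pattern described above can be sketched as follows. This is only a minimal illustration of how the random 4KB offsets might be chosen, not the benchmark tool itself. It assumes "8GB" means 8 GiB and that each IO starts at a multiple of the chosen alignment, and it does not model the three outstanding IOs.

```python
import random

BLOCK = 4 * 1024        # 4KB per IO, as in the test description
SPAN = 8 * 1024**3      # 8GB test region (assumed here to mean 8 GiB)


def random_offsets(count, alignment, seed=0):
    """Return `count` random starting offsets (in bytes) for 4KB IOs.

    Every offset is a multiple of `alignment` (512 for sector-aligned,
    4096 for 4K-aligned), and every IO fits entirely inside SPAN.
    """
    rng = random.Random(seed)
    positions = (SPAN - BLOCK) // alignment + 1  # number of legal start positions
    return [rng.randrange(positions) * alignment for _ in range(count)]


sector_aligned = random_offsets(1000, 512)   # XP-style, 512-byte aligned
page_aligned = random_offsets(1000, 4096)    # newer-OS style, 4K aligned
```

The only difference between the two test variants in this sketch is the `alignment` argument: with 512, most offsets are not multiples of 4096.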

I've had to run this test two different ways because of how the newer controllers handle write alignment. Without a manually aligned partition, Windows XP executes writes on sector-aligned boundaries, while most modern OSes write with 4K alignment. Some controllers take this into account when mapping LBAs to page addresses, which generates additional overhead but makes for relatively similar performance regardless of OS/partition alignment. Other controllers skip the management overhead and simply perform worse under Windows XP without partition alignment, as file system writes are not automatically aligned with the SSD's internal pages.
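To see why alignment matters, here is a small sketch that counts how many 4KB pages a 4KB write spans. It relies on the well-known default partition offsets (sector 63 on Windows XP, 1MB on Vista/7) and assumes a 4KB internal page size purely for illustration; real controllers map LBAs in their own, undocumented ways.

```python
SECTOR = 512
PAGE = 4096  # assumed 4KB internal page size, for illustration only


def pages_touched(offset, length=4096, page=PAGE):
    """Number of page-sized units a write of `length` bytes at `offset` spans."""
    first = offset // page
    last = (offset + length - 1) // page
    return last - first + 1


XP_START = 63 * SECTOR          # XP default partition start: sector 63 (32,256 bytes)
VISTA7_START = 2048 * SECTOR    # Vista/7 default partition start: 1MB

# A 4KB cluster write at the start of each partition
xp_pages = pages_touched(XP_START)          # straddles two pages
vista7_pages = pages_touched(VISTA7_START)  # fits in one page

# Every 4KB cluster inside the XP partition inherits the same misalignment
xp_all_straddle = all(pages_touched(XP_START + k * 4096) == 2 for k in range(100))
```

A controller that does not remap around this has to touch two pages for every 4KB write on an unaligned XP partition, which is the extra work the paragraph above describes.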

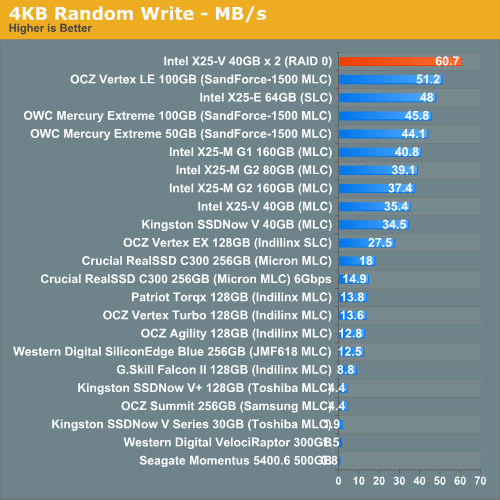

First up is my traditional 4KB random write test. Each write here is aligned to 512-byte sectors, similar to how Windows XP might write data to a drive:

In sector-aligned 4K random writes, nothing is faster than our X25-V RAID 0 array. We're talking faster than Intel's X25-E, faster than SandForce...you get the picture.

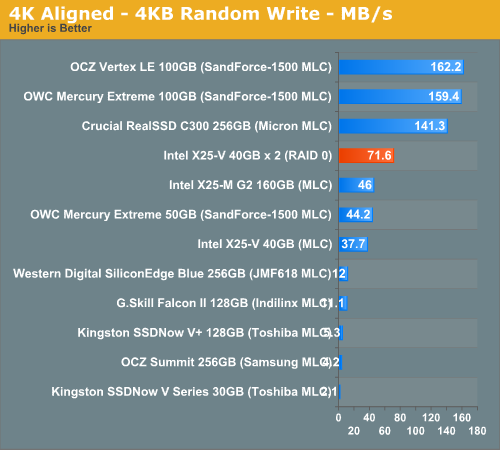

Our 4K-aligned test, which is more indicative of random write performance under newer OSes, puts a damper on the excitement:

At 71.6MB/s we're definitely faster than any other Intel drive here, as well as the 50GB SandForce offerings, but still nowhere near as fast as the C300 or OCZ Vertex LE.
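Since every transfer in this test is 4KB, the MB/s figure converts directly to IOPS. The sketch below shows the arithmetic; whether the chart's "MB" means 10^6 or 2^20 bytes is not stated, so both are shown as an assumption.

```python
def iops_from_mb_per_s(mb_per_s, block_bytes=4096, bytes_per_mb=1_000_000):
    """Convert throughput to IOs per second for a fixed transfer size."""
    return mb_per_s * bytes_per_mb / block_bytes


decimal = iops_from_mb_per_s(71.6)                          # ~17,480 IOPS if MB = 10^6 bytes
binary = iops_from_mb_per_s(71.6, bytes_per_mb=1024 ** 2)   # ~18,330 IOPS if MB = 2^20 bytes
```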

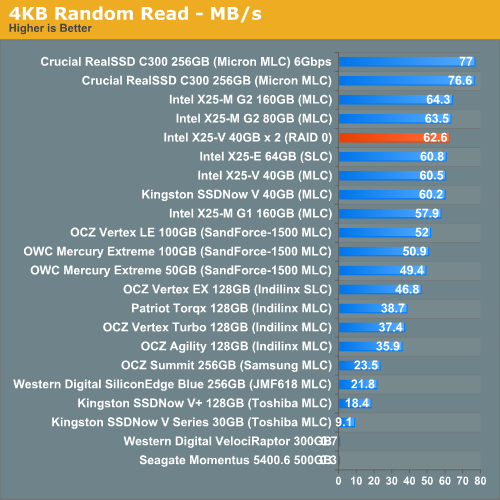

Random read performance didn't improve all that much for some reason; we're obviously bottlenecked somewhere else.

87 Comments


rhvarona - Tuesday, March 30, 2010

Some Adaptec Series 2, Series 5 and Series 5Z RAID controller cards allow you to add one or more SSDs as a cache for your array. So, for example, you can have 4x 1TB SATA disks in RAID 10, plus one 32GB Intel SLC SSD as a transparent cache for frequently accessed data.

The feature is called MaxIQ. One card that has it is the Adaptec 2405, which retails for about $250 shipped.

The kit is the Adaptec MaxIQ SSD Cache Performance Kit, but it ain't cheap! It retails for about $1,200. Works great for database and web servers though.
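MaxIQ's actual caching policy isn't described here. As a generic illustration of what "a transparent cache for frequently accessed data" means, below is a minimal least-recently-used (LRU) read cache sitting in front of a slow backing store. The class, the `backing_read` callback and the block-granular design are all hypothetical; a real controller cache may use a quite different policy.

```python
from collections import OrderedDict


class LRUBlockCache:
    """Toy read cache: keeps the most recently used blocks on the fast tier."""

    def __init__(self, capacity_blocks, backing_read):
        self.capacity = capacity_blocks
        self.backing_read = backing_read  # hypothetical: block number -> data from the HDD array
        self.blocks = OrderedDict()       # block number -> cached data, oldest first
        self.hits = 0
        self.misses = 0

    def read(self, lba):
        if lba in self.blocks:
            self.blocks.move_to_end(lba)  # mark as most recently used
            self.hits += 1
            return self.blocks[lba]
        self.misses += 1
        data = self.backing_read(lba)     # slow path: go to the disk array
        self.blocks[lba] = data
        if len(self.blocks) > self.capacity:
            self.blocks.popitem(last=False)  # evict the least recently used block
        return data


cache = LRUBlockCache(2, lambda lba: f"data@{lba}")
for lba in [1, 2, 1, 3, 1, 2]:
    cache.read(lba)
```

Repeatedly read blocks (block 1 above) stay on the fast tier, while rarely used ones are evicted; the application above the cache never needs to know it exists.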

GDM - Tuesday, March 30, 2010

Hi, I was under the impression that Intel has new RAID drivers that can pass through the TRIM command. Can you please rerun the test if that is true? Also, can you test the 160GB drives in RAID? And although benchmarks are nice, do you really notice it during normal use?

Regards,

Makaveli - Tuesday, March 30, 2010

You cannot pass TRIM to an SSD RAID array, even with the new Intel drivers. The drivers will let you pass TRIM to a single SSD in an SSD + hard-drive RAID setup.

Roomraider - Wednesday, March 31, 2010

Wrong, wrong, wrong! The new drivers do in fact pass TRIM to RAID 0 in Windows 7. My two 160GB G2s striped in RAID 0 now have TRIM running on the array (verified via the Windows 7 TRIM command). According to Intel, this works with any TRIM-enabled SSD. No RAID 5 support yet.

jed22281 - Friday, April 2, 2010

What, so Anand is wrong when he speaks to Intel engineers directly? I've seen several other threads where this claim has since been quashed.

WC Annihilus - Tuesday, March 30, 2010

Well, this is definitely a test I was looking for. I just bought 3 of the Kingston drives off Amazon cheap and was trying to decide whether to RAID them or use them separately for OS/apps and games. Would a partition of 97.5GB (leaving about 14GB unpartitioned) be good enough for a wear-leveling buffer?

GullLars - Tuesday, March 30, 2010

Yes, it should be. You could consider making it 90GiB (gibibytes, 90*2^30 bytes) if you anticipate a lot of random writes and not a lot of larger files going in and out regularly. You will likely get about 550MB/s sequential read, and enough IOPS for anything you may do (unless you start doing databases, VMware and the like). 120MB/s of sustained, consistent write should also keep you content.
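The spare-area arithmetic behind these partition sizes can be checked quickly. This sketch assumes each Kingston drive is a 40GB model with exactly 40 * 10^9 bytes of user capacity and ignores any space the RAID metadata reserves, so the figures are approximate.

```python
GIB = 2 ** 30


def spare_area(drives, bytes_per_drive, partition_gib):
    """Return (raw GiB, unpartitioned GiB, unpartitioned fraction) for a RAID 0 set."""
    raw_gib = drives * bytes_per_drive / GIB
    spare_gib = raw_gib - partition_gib
    return raw_gib, spare_gib, spare_gib / raw_gib


# Three drives, each assumed to be 40 * 10^9 bytes
for size in (97.5, 90):
    raw, spare, frac = spare_area(3, 40 * 10**9, size)
    print(f"{size} GiB partition: {spare:.1f} GiB unpartitioned ({frac:.0%})")
```

Under these assumptions the array holds about 111.8 GiB, so a 97.5 GiB partition leaves roughly 14.3 GiB (about 13%) unpartitioned, and a 90 GiB partition leaves roughly 21.8 GiB (about 19%).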

Tip: use a small stripe size; even a 16KB stripe will work without fuss on these controllers.

WC Annihilus - Tuesday, March 30, 2010

The main reason I want to go with a 97.5GB partition is that it's the size of my current OS/apps/games partition. It has about 21GB free, which I want to keep in case I install more games. In regard to stripe size, most of the posts I've seen suggest 64KB or 128KB as the best choices. What difference does this make? Why do you suggest smaller stripe sizes?

Plans are for the SSDs to be OS/apps/games, with general data going on a pair of 1.5TB hard drives. Usage is mainly gaming, browsing, and watching videos, with some programming and the occasional fiddling with DVDs and video editing.

GullLars - Tuesday, March 30, 2010

Then you should be fine with a 97.5GB partition. The reason smaller is better when it comes to stripe size on SSD RAIDs has to do with the nature of the storage medium combined with the mechanics of RAID. I will explain briefly here, and you can read up more for yourself if you are curious.

Intel SSDs can reach 90-100% of their sequential bandwidth with 16-32KB blocks at queue depth (QD) 1, and at higher queue depths they can reach it with 8KB blocks. Hard disks, on the other hand, reach their maximum bandwidth at around 64-128KB sequential blocks and do not benefit noticeably from increasing the queue depth.

When you use RAID 0, files larger than the stripe size get split into chunks equal in size to the stripe size and distributed among the drives in the array. Say you have a 128KB file (or want to read a 128KB chunk of a larger file): with a 16KB stripe size it is divided into 8 pieces, and with 3 SSDs in the array that means 3 chunks each for 2 of the SSDs and 2 chunks for the third. When you read this file, you read 16KB blocks from all 3 SSDs at once, at QD 3 on two of them and QD 2 on the third. If you check ATTO, you will see that 2x 16KB @ QD 3 + 1x 16KB @ QD 2 adds up to higher bandwidth than 1x 128KB @ QD 1.
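The chunk distribution described above can be reproduced with a short sketch. It assumes a plain round-robin RAID 0 layout in which chunk k of the array lives on drive k % drives; actual controllers may lay data out differently, and a chunk the request only partly covers is still counted here.

```python
KB = 1024


def chunks_per_drive(offset, length, stripe, drives):
    """Count the stripe-sized chunks of one request that land on each drive.

    Assumes a round-robin RAID 0 layout: chunk k lives on drive k % drives.
    A chunk the request only partly covers still counts as one.
    """
    counts = [0] * drives
    first = offset // stripe
    last = (offset + length - 1) // stripe
    for chunk in range(first, last + 1):
        counts[chunk % drives] += 1
    return counts


print(chunks_per_drive(0, 128 * KB, 16 * KB, 3))    # [3, 3, 2]
print(chunks_per_drive(0, 16 * KB, 16 * KB, 3))     # [1, 0, 0]
print(chunks_per_drive(0, 1024 * KB, 16 * KB, 3))   # [22, 21, 21]
```

The first call reproduces the 3/3/2 split from the comment. The second shows a stripe-aligned request no larger than the stripe landing on a single drive, so RAID does not change its bandwidth. The third shows a 1MB request giving every drive over 20 chunks, enough to keep each SSD near its maximum whatever the stripe size.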

The bandwidth when reading or writing files equal to or smaller than the stripe size will not be affected by the RAID. Sequential bandwidth for blocks of 1MB or larger will also be about the same with any stripe size, because each SSD receives either blocks large enough, or enough blocks at once, to reach its maximum bandwidth.

To summarize, the benefits and drawbacks of using a small stripe size:

+ Higher performance for files/blocks larger than the stripe size but still relatively small (<1MB)

- Additional computational overhead from managing more blocks in flight, although this is negligible for RAID 0.

The added performance for small-to-medium files/blocks from a small stripe size can make a difference for OS/apps, and can be measured in PCMark Vantage.

WC Annihilus - Tuesday, March 30, 2010

Many thanks for the explanation. I may just go ahead and fiddle with various configurations and choose which feels best to me.