NVIDIA Introduces dual Cortex A9 based Tegra 2

by Anand Lal Shimpi on January 7, 2010 2:00 PM EST - Posted in

- Smartphones

- Mobile

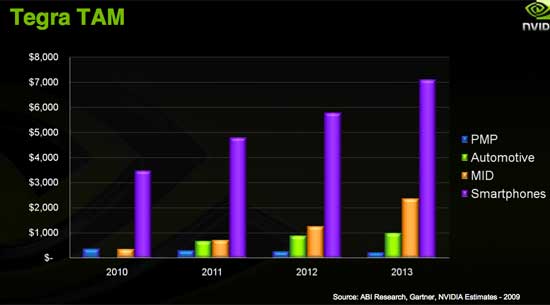

A month ago NVIDIA shared this slide with me:

It's a graph of the total available market, according to NVIDIA, for its Tegra SoC (System-on-Chip). This year alone NVIDIA estimates that there's around a $4B market for Tegra. Next year it grows to $6B. By 2013 the total available market for NVIDIA's Tegra SoC reaches over $10B. That's more money than NVIDIA ever made from the PC market.
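As a rough sanity check on how steep NVIDIA's projection is, the growth rates implied by the slide can be worked out directly. This is a minimal sketch that takes NVIDIA's estimates as quoted above and reads "this year" as 2010, given the January 2010 date. Because the slide says "over $10B" for 2013, treating it as exactly $10B makes the multi-year figure a lower bound.

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate between two values `years` apart."""
    return (end / start) ** (1.0 / years) - 1.0

# NVIDIA's total-available-market estimates for Tegra, in billions of USD.
tam_2010 = 4.0
tam_2011 = 6.0
tam_2013 = 10.0  # slide says "over $10B", so this is a floor

print(f"2010 -> 2011 growth: {cagr(tam_2010, tam_2011, 1):.0%}")
print(f"2010 -> 2013 CAGR (lower bound): {cagr(tam_2010, tam_2013, 3):.1%}")
```

With these inputs the market grows 50% in the first year and at least roughly 36% per year through 2013.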

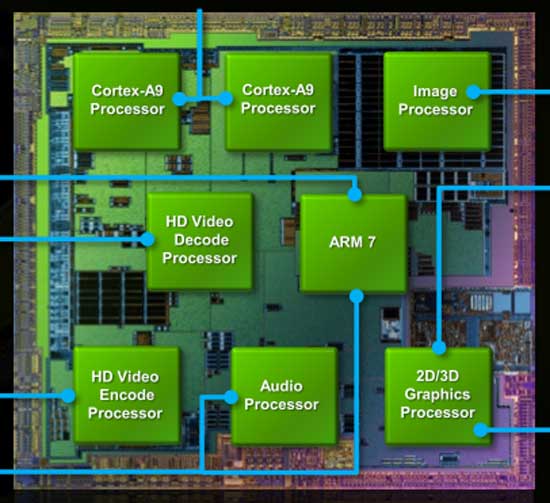

In order to compete in that space you need a competent chip. Today NVIDIA is announcing its second generation Tegra SoC. It's creatively named the Tegra 2 and this is what it looks like in block diagram form:

The SoC is made up of 8 independent processors, up from 7 in the original Tegra. The first two are the most exciting to me - a pair of ARM Cortex A9 cores. These are dual-issue out-of-order cores from ARM running at up to 1GHz. If you thought the A8 was fast, these things should be much faster.

The original Tegra used a single ARM11 core. It was multi-core capable, but the only version NVIDIA ever shipped had a single ARM11. By now you know that ARM11 is unreasonably slow, and thus you see my biggest problem with Tegra 1. Tegra 2 addresses this in a grand way. NVIDIA skipped over Cortex A8 entirely and went to what it believes is a more power efficient, higher performing option with the A9. I'll go deeper into the A9's architecture shortly, but to put it bluntly - A8 is dead in my eyes, Cortex A9 is what you want.

The next processor is an audio decode core. NVIDIA acquired PortalPlayer in 2007 for somewhere around $350M. PortalPlayer SoCs were used in the first five generations of iPods. PortalPlayer contributed much of NVIDIA's know-how when it came to building SoCs and audio decoders. NVIDIA is particularly proud of its audio decode core, claiming that it can deliver system power in the low 10s of mW while playing an MP3. It's difficult to qualify that claim. Microsoft lists Zune HD battery life at 33 hours while playing MP3s, while Apple claims the iPod Touch can do the same for 30 hours. Is NVIDIA responsible for the Zune's longer MP3 playback battery life? I've got no clue.
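Battery life and average power are tied together by energy = power × time, which is part of why a claim like "low 10s of mW" is hard to check from runtime numbers alone. The sketch below assumes a hypothetical 1 Wh battery and a hypothetical 20 mW draw; neither is a measured specification of the Zune HD or the iPod Touch.

```python
def playback_hours(battery_wh: float, avg_power_mw: float) -> float:
    """Runtime in hours for a battery of `battery_wh` watt-hours at a constant draw."""
    return battery_wh / (avg_power_mw / 1000.0)

def required_power_mw(battery_wh: float, hours: float) -> float:
    """Average system power (mW) a battery can sustain for `hours`."""
    return battery_wh / hours * 1000.0

battery = 1.0  # hypothetical 1 Wh battery, for illustration only
print(f"{playback_hours(battery, 20):.0f} h at a hypothetical 20 mW")
for label, hours in [("33 h (Zune HD claim)", 33), ("30 h (iPod Touch claim)", 30)]:
    print(f"{label}: {required_power_mw(battery, hours):.1f} mW average")
```

If both players had that same 1 Wh battery, the 3-hour gap between the two claims would amount to only about 3 mW of average power, a difference that could come from the display, storage or battery capacity as easily as from the audio decoder.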

Given that this isn't 1995, audio decoding is neither very hard nor very interesting, so let's move on. The next two cores are for video encode and decode. On the encode side NVIDIA claims to be able to accelerate the encode of 1080p H.264 video. This is up from 720p in the original Tegra and particularly important for any handsets that might include a video camera. Bitrates, power consumption and other pertinent details remain unknown.

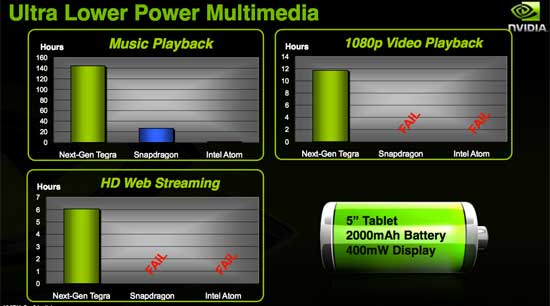

The video decode side is where NVIDIA believes it has an advantage. Tegra's video decode processor accelerates up to 1080p high profile H.264 video at bitrates in the 10s of megabits per second. The Samsung SoC in the iPhone 3GS is limited to only 480p H.264 decode despite Samsung claiming 1080p decode support on its public Cortex A8 SoC datasheets. NVIDIA insists that no one else can do 1080p decode at high bitrates in a remotely power efficient manner. Tegra's 1080p decode can be done in the low 100s of mW. NVIDIA claims that the competition often requires well over 1W of total system power to do the same because it relies on the CPU to do some of the decoding. Again, this is one of those difficult to validate claims. Imagination has demonstrated 1080p H.264 decode with very low CPU utilization on its PowerVR SGX core, but I have no idea of the power consumption.

NVIDIA's numbers are interesting, but not 3rd party verified
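The jump from 480p to 1080p decode is bigger than the names suggest, and NVIDIA's power argument is easier to see as energy per frame. This sketch assumes 30 frames per second, reads the iPhone 3GS's "480p" as 640x480, and uses 200 mW and 1000 mW as stand-ins for "low 100s of mW" and "well over 1W" of total system power; all of these are illustrative assumptions, not measurements.

```python
def pixels_per_second(width: int, height: int, fps: float) -> float:
    """Decoded pixels per second for a given resolution and frame rate."""
    return width * height * fps

def energy_per_frame_mj(power_mw: float, fps: float) -> float:
    """Millijoules spent per decoded frame (mW is mJ/s, so divide by fps)."""
    return power_mw / fps

FPS = 30  # assumed frame rate
p1080 = pixels_per_second(1920, 1080, FPS)
p480 = pixels_per_second(640, 480, FPS)  # assuming 640x480 for "480p"
print(f"1080p: {p1080 / 1e6:.1f} Mpixel/s, 480p: {p480 / 1e6:.1f} Mpixel/s, "
      f"ratio {p1080 / p480:.2f}x")

# Assumed system-power operating points, not measured values.
for name, mw in [("dedicated decoder", 200), ("CPU-assisted", 1000)]:
    print(f"{name}: {energy_per_frame_mj(mw, FPS):.1f} mJ per frame")
```

Under these assumptions 1080p means 6.75x as many pixels per second as 640x480, and the two power levels differ by 5x in energy spent on every frame.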

So let's see, that's two ARM Cortex A9 cores, an audio core, video encode and video decode - we're up to five at this point. The next processor is used for image signal processing. In other words, it's the core that drives a still/video camera in a Tegra handset. The processor supports up to 12MP sensors, auto white balance, auto focus and general video processing on either a still picture or a video stream. The output can be routed to the next core: Tegra 2's GeForce GPU.

NVIDIA wasn't willing to say much about Tegra's graphics core other than that it was its own design. NVIDIA confirmed that the only third-party IP in Tegra 2 is the ARM cores; the rest is made in-house. And if you were wondering, Tegra 2 is the other platform that Epic demonstrated its Unreal Engine 3 mobile technology on.

The GPU in Tegra 2 is the same architecture as in Tegra 1 (OpenGL ES 2.0 is supported), just higher performance. NVIDIA expects a 2-3x performance increase thanks to improved efficiency, more memory bandwidth and a higher clock rate.

The original Tegra only supported LPDDR1, while Tegra 2 supports LPDDR2. The Zune HD's Tegra SoC had a 32-bit, 333MHz data rate LPDDR1 memory bus, resulting in 1.33GB/s of memory bandwidth. Tegra 2 in a single package with integrated memory should deliver about twice that.
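The 1.33GB/s figure falls straight out of bus width times data rate: 4 bytes per transfer at 333 million transfers per second. The sketch below reproduces it. The Tegra 2 line assumes the doubling comes from keeping a 32-bit bus and running LPDDR2 at 667 MT/s; NVIDIA didn't give Tegra 2's memory configuration here, so treat that input as hypothetical.

```python
def peak_bandwidth_gbs(bus_width_bits: int, mega_transfers_per_sec: float) -> float:
    """Theoretical peak bandwidth in GB/s (1 GB = 10**9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * mega_transfers_per_sec * 1e6 / 1e9

tegra1 = peak_bandwidth_gbs(32, 333)  # Zune HD: 32-bit LPDDR1 at 333 MT/s
tegra2 = peak_bandwidth_gbs(32, 667)  # hypothetical: 32-bit LPDDR2 at 667 MT/s
print(f"Tegra (Zune HD): {tegra1:.2f} GB/s")
print(f"Tegra 2 (assumed LPDDR2-667): {tegra2:.2f} GB/s")
```

These are theoretical peaks; sustained bandwidth on a shared SoC bus will be lower.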

NVIDIA believes that while other SoC makers can promise higher theoretical performance, Tegra and Tegra 2 deliver better real world gaming performance thanks to everything from the hardware to the software stack. Given NVIDIA's experience in optimizing desktop GPU drivers, I've got no problems giving NVIDIA the benefit of the doubt here.

Tegra 1 was able to run Quake 3 at 720p with AA at over 40 fps, which according to NVIDIA was faster than any other SoC in a handset today. I haven't personally benchmarked Quake 3 on any SoCs so I can't really validate that claim either.

Ok, only one processor left and this one is simple. Tegra 2 (like the original Tegra) has an ARM7 processor that is used for chip management. It handles dataflow, power management and other similar tasks.
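Tallying the blocks described above confirms the count of eight. The small table below is only an illustration: the role strings paraphrase this article, and the `arm_ip` flag follows NVIDIA's statement that the ARM cores are the only third-party IP, reading the ARM7 management core as one of those ARM cores.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Block:
    name: str
    role: str
    arm_ip: bool  # True for cores licensed from ARM; NVIDIA says the rest is in-house

TEGRA2_BLOCKS = [
    Block("Cortex A9 #0", "application CPU, up to 1GHz", True),
    Block("Cortex A9 #1", "application CPU, up to 1GHz", True),
    Block("Audio decode", "low-power audio playback", False),
    Block("Video encode", "up to 1080p H.264 encode", False),
    Block("Video decode", "up to 1080p high profile H.264 decode", False),
    Block("Image signal processor", "camera pipeline, up to 12MP sensors", False),
    Block("GeForce GPU", "OpenGL ES 2.0 graphics", False),
    Block("ARM7", "chip management: dataflow, power management", True),
]

print(f"{len(TEGRA2_BLOCKS)} processors")
for b in TEGRA2_BLOCKS:
    origin = "ARM" if b.arm_ip else "NVIDIA"
    print(f"  {b.name:<24} [{origin}] {b.role}")
```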

You'll notice the one thing missing from NVIDIA's Tegra 2 is a cellular modem. There simply isn't one. NVIDIA's philosophy is to focus on the core compute functions of an SoC that require no carrier or FCC testing. An OEM could mate a Tegra 2 with a tried-and-true modem, losing out on the integration side but winning on time to market. Given the sheer number of different wireless networks in the world, leaving the modem out of the design makes sense to me. But then again I don't make smartphones. It may prevent Tegra 2 from going into the cheapest solutions, but that's not where NVIDIA wants to be in any case.

55 Comments

strikeback03 - Friday, January 8, 2010

That would be a gigantic phone. I'd personally like to see something with this kind of processing power (minus the video acceleration) and small enough to use something like a 2.5-3" screen.

yyrkoon - Thursday, January 7, 2010

on a smart phone, or an MP3 player . . . hmm, am I missing something here? Is this absolutely necessary? Personally, I don't think so.

Also, comparing ARM with an Atom processor is like comparing apples to oranges, isn't it? One is x86, the other is not.

Personally, I would be more interested in seeing how viable nVidia's Tegra 2 would be in other embedded applications, or whether nVidia will make derivatives that are better suited to applications other than smartphones / MP3 players. Based just on the ARM technology, I would have to say these are going to be well suited for any low power application, provided they perform well.

FaaR - Thursday, January 7, 2010

1080P decode support on a thing like this is... Well, virtually useless, really.

When it's been shown that most people can't see the difference between blu-ray video and regular ol' DVDs even on big-screen TVs, and the vast majority of people just don't see the point of HD video, then what the hell are we going to use this thing for? Watch BR rips on a 3" LCD screen? No, I don't think so.

Plug it in to your big screen TV in the living room? Please. Don't you have a stationary player for that?

I've no idea who exactly this product is intended for.

And the dual A9 cores, well, I'm sure they're great - compared to whatever came before them anyway, but dual A9 cores, quad A9 cores or a quadrillion A9 cores doesn't really matter as long as they don't run any really useful software and THEY DON'T. As long as a portable isn't x86 compatible it'll never be more than a toy. Yeah sure, you can "do stuff" with an iPhone or whatever, but it's still just toy apps and it will stay that way until x86 becomes a realistic alternative in the mobile marketspace. Atom is a joke right now, it's slow AND power hungry. Maybe in another 5 years, who knows...

Visual - Friday, January 8, 2010

it is nice if the device can decode the video in real time, even if it doesn't show it in its full resolution. then you don't need to re-encode stuff specially for the device, if size isn't a constraint - like if you are watching it from a network share.

but the main advantage of tegra 2 isn't just some stupid video decode. it's the all-round general purpose cpu performance and 3d acceleration at very low power usage. the modular design allows it to use as little power as the current usage pattern of the device requires, so it will make fantastic handheld game console/phone/media player hybrids.

and you whining that there aren't useful apps is just stupid. x86 isn't the world, you know - properly developed apps can be ported to anything, and once the platform is in people's hands, the apps will be too.

FlyTexas - Friday, January 8, 2010

Huh?

What are you smoking???

If you can't tell the very obvious difference between Blu-Ray and DVD video on a large 1080P LCD, then you're blind...

None of that makes your other point invalid, 1080P isn't needed for a 4" screen, but it is nice that it can do it.

GeorgeH - Thursday, January 7, 2010

Looking at that reference board it doesn't appear that it would be all that difficult to make a mini-ITX Atom alternative. You'd have to run Linux on it, but for an HTPC, NAS, or other low power single-purpose application spending $100+ on a fully featured Windows license is a little bit silly anyway.

If it is faster than Atom (and better at HTPC-centric video tasks) and can be had for much less than an i3 system (especially if i3 can't be passively cooled) I'd think NVIDIA would be jumping at the chance to show Intel up a bit. Even if the actual marketshare and economic gains were minimal, it seems to me that the "mindshare" gains could be huge.

yyrkoon - Thursday, January 7, 2010

Personally, I do not see it happening. There is a reason why companies like SGI moved from RISC to x86 hardware. However, with that said, there is simply no reason why these SoCs could not be used in an external NAS / SAN system with the right software to back it up. x86 has the advantage of running desktop-class Windows, even if only for gaming, which is a larger market than most think.

Still, as a novice embedded designer, I see lots of potential in Tegra 2, but a lot of it would be unnecessary for my purposes, and possibly for others'. Smart phones and MP3 players, sure, but not for a lot of other things. Perhaps if the graphics core were CUDA compliant and offered good number crunching performance . . .

altarity - Thursday, January 7, 2010

LOL... I seriously didn't know this when I posted earlier: http://blog.boxee.tv/2010/01/07/boxee-box-internal...

sprockkets - Thursday, January 7, 2010

Now if only it wasn't shaped so weird... Is that really the final look?