The Radeon HD 5970: Completing AMD's Takeover of the High End GPU Market

by Ryan Smith on November 18, 2009 12:00 AM EST. Posted in: GPUs

The Card They Beg You to Overclock

As AMD equipped the 5970 with a fully functional Cypress core, one specifically binned for its excellent performance, it’s a shame the 5970 is only clocked at 725MHz core, right? AMD agrees, and has equipped and will be promoting the 5970 in a manner unlike any previous AMD video card.

Officially, AMD and its vendors can only sell a card that consumes up to 300W of power. That’s all the ATX spec allows for; anything else would mean they would be selling a non-compliant card. AMD would love to sell a more powerful card, but between breaking the spec and the prospect of running off users who don’t have an appropriate power supply (more on this later), they can’t.

But there’s nothing in the rulebook about building a more powerful card, and simply selling it at a low enough speed that it’s not breaking the spec. This is what AMD has done.

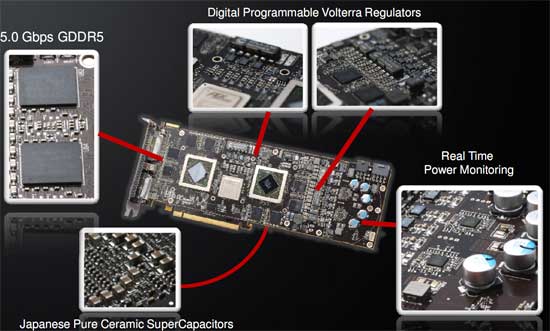

As a 300W TDP card, the 5970 is entirely overbuilt. The vapor chamber cooling system is built to dissipate 400W, and the card is equipped entirely with high-end electronics components, including solid caps and high-end VRMs.

Make no mistake: this card was designed to be a single-card 5870CF solution; AMD just can’t sell it like that. In our discussions with them they nearly (as much as Legal would let them) promised that every card will be able to hit 850MHz core (after all, these chips are binned to be better than a 5870), and memory speeds were nearly as optimistic, although we were given the impression that AMD is a little more concerned about GDDR5 memory bus issues at 5870 speeds.

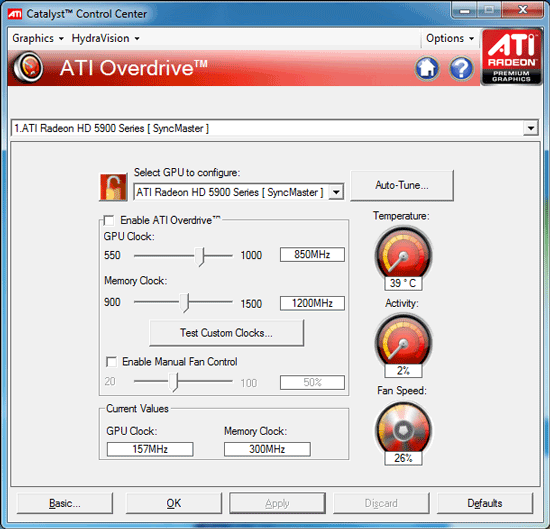

So with a card that is a pair of 5870s in everything except the shipping specifications, AMD has left it up to the user to put two and two together and bring the card to its full potential. The card ships with a much higher Overdrive cap than AMD’s other cards; instead of 10-20%, here the caps are 1GHz for the core and 1.5GHz for the memory, a 37% and 50% cap respectively (in comparison, on the 5850, the caps were set below the 5870’s stock speeds). The card effectively has unlimited overclocking headroom within Overdrive; we doubt that any 5970 is going to hit those speeds with air cooling.
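To put numbers on that headroom, here is a minimal Python sketch of the arithmetic. The helper name `headroom_pct` is purely illustrative, and the 1GHz stock memory clock is an assumption inferred from the 1.5GHz cap being described as a 50% cap; the 850MHz/1200MHz targets are the 5870’s clocks.

```python
def headroom_pct(stock_mhz: float, target_mhz: float) -> float:
    """Percentage increase needed to go from stock_mhz to target_mhz."""
    return (target_mhz - stock_mhz) / stock_mhz * 100.0


STOCK_CORE_MHZ = 725.0
STOCK_MEM_MHZ = 1000.0  # assumption: inferred from 1.5GHz being a 50% cap

# (label, stock clock, target clock), all in MHz
CASES = [
    ("Overdrive core cap", STOCK_CORE_MHZ, 1000.0),
    ("Overdrive memory cap", STOCK_MEM_MHZ, 1500.0),
    ("5870 core clock", STOCK_CORE_MHZ, 850.0),
    ("5870 memory clock", STOCK_MEM_MHZ, 1200.0),
]

if __name__ == "__main__":
    for label, stock, target in CASES:
        print(f"{label}: +{headroom_pct(stock, target):.1f}% over stock")
```

Run as-is, this puts the Overdrive caps at just under 38% and exactly 50% above stock, while reaching 5870 speeds only asks for roughly 17% more core clock and 20% more memory clock.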

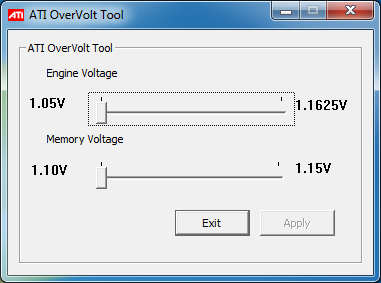

One weakness of Overdrive is that it doesn’t let you tweak voltages, which is a problem since AMD has to ship this card at lower voltages in order to meet the 294W TDP. In order to rectify that, AMD will be supplying vendors with a voltage tweaking tool specifically for the 5970, which will then be customized and distributed by vendors to their 5970 users.

Normally any kind of voltage tweaking on a video card makes us nervous due to the lack of guidance; a single GPU doesn’t ship at a wide range of voltages, after all. For overvolting the 5970, AMD has made matters quite simple: you only get one choice. The utility we’re using offers two voltages for the core and two for the memory: the shipping voltages and the voltages the 5870 runs at. So you can run your 5970 at 1.05v core or 1.165v core, but nothing higher and nothing in between. It makes matters simple, and locks out the ability to supply the core with more voltage than it can handle. We haven’t seen any of the vendor-customized versions of the Overvolt utility, but we’d expect all of them to have the same cap, if not the same two-setting limit.

All of this comes at a cost, however: power. Cranking up the voltage in particular will drive the power draw of the card way up, and this is the point where the card ceases to meet the PCIe specification. If you want to overclock this card, you’re going to need not just a strong power supply that can deliver its rated wattage, but one that can overdeliver on the rails attached to the PCIe power plugs.

For overclocked operation, AMD is recommending a 750W power supply capable of delivering at least 20A on the rail the 8-pin plug is fed from, and another 15A on the rail the 6-pin plug is fed from. There are a number of power supplies that can do this, but you need to pay very close attention to what your power supply can do. Frankly, we’re just waiting for a sob story where this card cooks a power supply when overvolted. Overclocking the 5970 will bring the power draw out of spec; it’s imperative you make sure you have a power supply that can handle it.
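As a rough sanity check on those numbers, the sketch below turns AMD’s recommendation into a few lines of Python. The `Psu` class, its field names, and `meets_recommendation` are hypothetical names used only for illustration; the 750W, 20A, and 15A figures are the ones quoted above. Treating a single-rail unit as needing the sum of both requirements (35A) is a simplifying assumption on our part, and it ignores whatever the rest of the system pulls from that rail.

```python
from dataclasses import dataclass

# Figures quoted for overclocked operation.
RECOMMENDED_PSU_WATTS = 750.0
REQUIRED_AMPS_8PIN = 20.0
REQUIRED_AMPS_6PIN = 15.0
RAIL_VOLTS = 12.0  # the PCIe power plugs are fed from the 12V rail(s)


@dataclass
class Psu:
    """Hypothetical description of a power supply, for illustration only."""
    rated_watts: float
    amps_8pin_rail: float      # rating of the 12V rail feeding the 8-pin plug
    amps_6pin_rail: float      # rating of the 12V rail feeding the 6-pin plug
    shared_rail: bool = False  # True if both plugs hang off a single 12V rail


def rail_watts(amps: float) -> float:
    """Power available from a 12V rail at the given current."""
    return amps * RAIL_VOLTS


def meets_recommendation(psu: Psu) -> bool:
    """Check a PSU against the quoted 750W / 20A / 15A guidance."""
    if psu.rated_watts < RECOMMENDED_PSU_WATTS:
        return False
    if psu.shared_rail:
        # Assumption: on a single-rail unit the two requirements add up.
        return psu.amps_8pin_rail >= REQUIRED_AMPS_8PIN + REQUIRED_AMPS_6PIN
    return (psu.amps_8pin_rail >= REQUIRED_AMPS_8PIN
            and psu.amps_6pin_rail >= REQUIRED_AMPS_6PIN)


if __name__ == "__main__":
    # An 850W unit with a single 12V rail rated for 70A.
    single_rail = Psu(850.0, 70.0, 70.0, shared_rail=True)
    print("Single 70A rail OK:", meets_recommendation(single_rail))
    print("8-pin rail capacity (W):", rail_watts(REQUIRED_AMPS_8PIN))
    print("6-pin rail capacity (W):", rail_watts(REQUIRED_AMPS_6PIN))
```

At 12V, 20A and 15A work out to 240W and 180W of capacity behind the two plugs, well above the 150W and 75W that the PCIe specification assigns to 8-pin and 6-pin connectors. That margin is the overdelivery an overclocked card depends on.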

Overall the whole issue leaves us with an odd taste in our mouths. Clearly AMD would have rewritten the ATX spec to allow for more power if it were that simple, and we don’t believe anyone really wants to be selling a card that runs out of spec like this. Both AMD and NVIDIA are going to have to cope with the fact that power draw has been increasing on their cards over time, so this isn’t going to be the last over-300W card we see. I would not be surprised if we saw a newer revision of the ATX spec that allowed for more power for video cards – if you can cool 400W, then that’s where the new maximum is going to be for luxury video cards like the 5970.

Last, but certainly not least, there’s the matter of real-world testing. Although AMD told us that the 5970 should be able to hit 5870 clockspeeds, we didn’t have the kind of luck we were expecting. We have two 5970s: one for myself, and one for Anand for Eyefinity and power/noise/heat testing. My 5970 hit 850MHz/1200MHz once overvolted (it had very little headroom without it), but the performance was sporadic. The VRM overcurrent protection mechanism started kicking in and momentarily throttling the card down to 550MHz/1000MHz, and not just in FurMark/OCCT. Running a real application (the Distributed.net RC5-72 Stream client) ultimately resulted in the same thing. With the core overvolted, our card kept throttling on FurMark all the way down to 730MHz. While the card is stable in terms of not crashing, our verdict is that our card is not capable of performing at 5870 clockspeeds.

We’ve attempted to isolate the cause of this, and we feel we can rule out temperature, since feeding the card cold morning air had no effect. This leaves us with power. The power supply we use is a Corsair 850TX, which has a single 12V rail rated for 70A. We do not believe that the issue is the power supply, but we don’t have another unit on hand to test with, so we cannot eliminate it. Our best guess is that in spite of the high-quality VRMs on this card, they simply aren’t up to the task of powering the card at 5870 speeds and voltages.

We’ve gone ahead and done our testing at these speeds anyhow (since overcurrent protection doesn’t cause any quality issues); however, it’s likely that these results are held back somewhat by throttling, and that a card that can avoid throttling would perform slightly better. We're going to be retesting this card in the morning with some late suggestions from AMD (mainly forcing the fan to 100%) to see if this changes things, but we are fairly confident right now that it's not heat related.

As for Anand's card, his fared even worse. His card locked up his rig when trying to run OCCT at 5870 speeds. VRM throttling is one thing, but crashing is another; even if it's OCCT, it shouldn't be happening. We've written his card off as being unstable at 5870 speeds, which makes us 0-for-2 in chasing the 5870CF. Reality is currently in conflict with AMD's promises.

Note: We have since published an addendum blog covering VRM temperatures, the culprit for our throttling issues.

114 Comments

GourdFreeMan - Friday, November 20, 2009

Having not bought MW2, I can say conversely that the lack of differentiation between console and PC features hurts game sales. According to news reports, in the UK PC sales of MW2 account for less than 3% of all sales. This is neither representative of the PC share of the gaming market (which should be ~25% of all "next-gen" sales based on quarterly reports of revenue from publishers), nor the size of the install base of modern graphics cards capable of running MW2 at a decent frame rate (which should be close to the size of the entire console market based on JPR figures). Admittedly the UK has a proportionately larger console share than the US or Germany, but I can't imagine MW2 sales of the PC version are much better globally.

I am sure executives will be eager to blame piracy for the lack of PC sales, but their target market knows better...

cmdrdredd - Wednesday, November 18, 2009

[quote]Unfortunately, since playing MW2, my question is: are there enough games that are sufficiently superior on the PC to justify the initial expense and power usage of this card? Maybe that's where eyefinity for AMD and PhysX for nVidia come in: they at least differentiate the PC experience from the console.

I hate to say it, but to me there just do not seem to be enough games optimized for the PC to justify the price and power usage of this card, that is unless one has money to burn.[/quote]

Yes this is exactly my thoughts. They can tout DX11, fancy schmancy eyefinity, physx, everything except free lunch and it doesn't change the fact that the lineup for PC gaming is bland at best. It sucks, I love gaming on PC but it's pretty much a dead end at this time. No thanks to every 12 year old who curses at you on XBox Live.

The0ne - Wednesday, November 18, 2009

My main reason to want this card would be to drive my 30" LCDs. I have two Dells already and will get another one early next year. I don't actually play games much but I like having the desktop space for my work.

- VMs at higher resolution
- more open windows without switching too much
- watch movie(s) while working
- bigger font size but maintaining the aspect ratio of programs :)

Currently have my main on one 30" and to my 73" TV. TV is only 1080P so space is a bit limited. Plus working on the TV sucks big time :/

shaolin95 - Wednesday, November 18, 2009

I am glad ATI is able to keep competing as that helps keep prices at a "decent" level.

Still, for all of you so amazed by eyefinity, do yourselves a favor and try 3D vision with a big screen DLP then you will laugh at what you thought was cool and "3D" before.

You can have 100 monitors but it is still just a flat world....time to join REAL 3D gaming guys!

Carnildo - Wednesday, November 18, 2009

Back in college, I was the administrator for a CAVE system. It's a cube ten feet on a side, with displays on all surfaces. Combine that with head tracking, hand tracking, shutter glasses, and surround sound, and you've got a fully immersive 3D environment.

It's designed for 3D visualization of large datasets, but people have ported a number of 3D shooters to the platform. You haven't lived until you've seen a life-sized opponent come around the corner and start blasting away at you.

7Enigma - Wednesday, November 18, 2009

But Ryan, I feel you might need to edit a couple of your comparison comments between the 295 and this new card. Based on the comments in several previous articles, quite a few readers do not look at (or understand) the charts and instead rely on the commentary below the charts. Here are some examples:

"Meanwhile the GTX 295 sees the first of many falls here. It falls behind the 5970 by 30%-40%. The 5870 gave it a run for its money, so this is no surprise."

This one for Stalker is clear and concise. I'd recommend you repeat this format for the rest of the games.

"As for the GTX 295, the lead is only 20%. This is one of the better scenarios for the GTX 295."

This comment was for Battleforge and IMO is confusing. To someone not reading the chart it could be viewed as saying the 295 has a 20% advantage. Again I'd stick with your Stalker comment.

"HAWX hasn’t yet reached a CPU ceiling, but it still gets incredibly high numbers. Overclocking the card gets 14% more, and the GTX 295 performance advantage is 26%."

Again, this could be seen as the 295 being 26% faster.

"Meanwhile overclocking the 5970 is good for another 9%, and the GTX 295 gap is 37%."

This one is less confusing as it doesn't mention an advantage but should just mention 37% slower.

Finally I think you made a typo in the conclusion where you said this:

"Overclock your 5970 to 5870 speeds if you can bear the extra power/heat/noise, but don’t expect 5970CF results."

I think you meant 5870CF results...

Overall, though, the article is really interesting as we've finally hit a performance bottleneck that is not so easily overcome (due to power draw and ATX specifications). I'm very pleased, however, that you mention first in the comments that this truly is a card meant for multi-monitor setups only, and even then, may be bottlenecked by design. The 5870 single card setup is almost overkill for a single display, and even then most people are not gaming on >24" monitors.

I've said it for the past 2 generations of cards but we've pretty much maxed out the need for faster cards (for GAMING purposes). Unless we start getting some super-hi res goggles that are reasonably priced, there just isn't much further to go due to display limitations. I mean honestly are those slightly fuzzy shadows worth the crazy performance hit on an FPS? I honestly am having a VERY difficult time seeing a difference in the first set of pictures of the soldier's helmet. The pictures are taken slightly off angle from each other and even then I don't see what the arrow is pointing at. And if I can't see a significant difference in a STILL shot, how the heck am I to see a difference in-game!?

OK enough rant, thanks for the review. :)

Anand Lal Shimpi - Wednesday, November 18, 2009

Thanks for the edits, I've made some corrections for Ryan that will hopefully make the statements more clear.

I agree that the need for a faster GPU on the desktop is definitely minimized today. However I do believe in the "if you build it, they will come" philosophy. At some point, the amount of power you can get in a single GPU will be great enough that someone has to take advantage of it. Although we may need more of a paradigm shift to really bring about that sort of change. I wonder if Larrabee's programming model is all we'll need or if there's more necessary...

Take care,

Anand

7Enigma - Wednesday, November 18, 2009

Thank you for the edits and the reply Anand.

One of the main things I'd like to see GPU drivers implement is an artificial framerate cap option. These >100fps results in several of the tests at insane resolutions are not only pointless, but add unnecessary heat and stress to the system. Drop back down to normal resolutions that >90% of people have and it becomes even more wasteful to render 150fps.

I always enable V-sync in my games for my LCD (75Hz), but I don't know if this is actually throttling the gpu to not render greater than 75fps. My hunch is in the background it's rendering to its max but only showing on the screen the Hz limitation.

Zool - Wednesday, November 18, 2009

I tried out full screen furmark with vsync on and off (in 640*480) and the difference was 7 degrees Celsius. I have a custom cooler on the 4850 and a 20cm side fan on the case so that's quite a lot.

7Enigma - Thursday, November 19, 2009

Thanks for the reply Zool, I was hoping that was the case. So it seems like if I ensure vsync is on I'm at least limiting the gpu to only displaying the refresh rate of the LCD. Awesome!