AMD's Radeon HD 5870: Bringing About the Next Generation Of GPUs

by Ryan Smith on September 23, 2009 9:00 AM EST- Posted in

- GPUs

Meet the Rest of the Evergreen Family

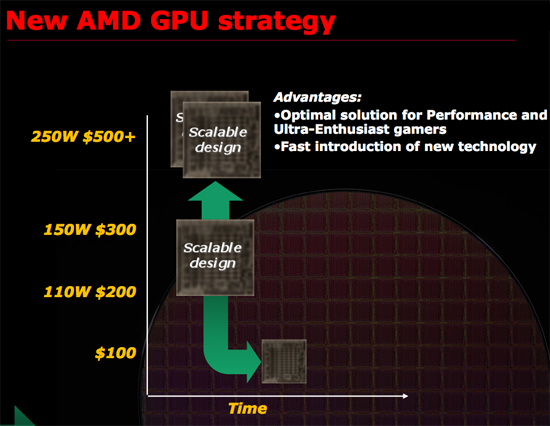

Somewhere on the way to Cypress, AMD’s small die strategy got slightly off-track.

AMD’s small-die strategy for RV770

Cypress is 334mm², compared to 260mm² for RV770. In that space they can pack 2.15 billion transistors, versus 956 million on the RV770, and come out at a load power of 188W versus 160W on the RV770. AMD called 256mm² their sweet spot for the small die strategy, and Cypress missed that sweet spot.
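To put those figures side by side, here is a rough sketch that computes the generation-over-generation ratios from the numbers quoted above. The dictionary layout and helper name are purely illustrative, and the inputs are only the die sizes, transistor counts, and load power figures given in this article.

```python
# Figures as quoted in the article:
# (die area in mm^2, transistors in millions, load power in W)
CHIPS = {
    "RV770": (260, 956, 160),
    "Cypress": (334, 2150, 188),
}

# AMD's stated sweet spot for the small die strategy, in mm^2.
SWEET_SPOT_MM2 = 256


def ratios(new: str, old: str) -> dict:
    """Return new/old ratios for area, transistor count, and load power."""
    new_area, new_trans, new_power = CHIPS[new]
    old_area, old_trans, old_power = CHIPS[old]
    return {
        "area": new_area / old_area,
        "transistors": new_trans / old_trans,
        "power": new_power / old_power,
    }


growth = ratios("Cypress", "RV770")
overshoot_mm2 = CHIPS["Cypress"][0] - SWEET_SPOT_MM2

for name, value in growth.items():
    print(f"{name}: {value:.2f}x")
print(f"Cypress exceeds the sweet spot by {overshoot_mm2} mm^2")
```

By these numbers the transistor count more than doubles while die area and load power grow far less, and Cypress still lands 78mm² over the sweet spot.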

The cost of missing the sweet spot is that by missing the size, they’re missing the price. The Cypress cards are $379 and $259, compared to $299 and $199 that the original small die strategy dictated. This has resulted in a hole in the Evergreen family, which is why we’re going to see one more member than usual.

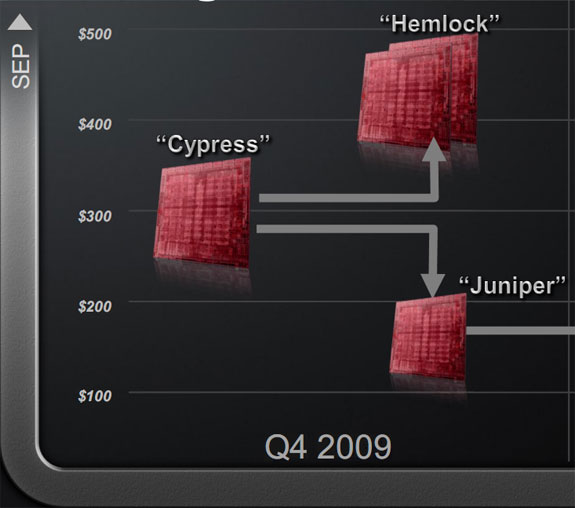

As Cypress is the base chip, there are 4 designs and 3 different chips that will be derived from it. Above Cypress is Hemlock, which will be the requisite X2 part using a pair of Cypress cores. Hemlock is going to be interesting to watch not just for its performance, but because by missing their sweet spot, AMD is running a bit hot. A literal pair of 5870s is 376W, which is well over the 300W limit of a 6-pin + 8-pin power configuration. AMD saves some power in a single card (which is how they got the 4870 under the limit) but it likely won’t be enough. We’ll be keeping an eye on this matter to see what AMD ends up doing to get Hemlock out the door at the right power load. As scheduled we should see Hemlock before the end of the year, although given the supply problems for Cypress that we mentioned earlier, it’s going to be close.
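The power problem is simple arithmetic, sketched below. The 188W load figure is the one quoted in this article; the connector ratings (75W from the PCIe slot, 75W per 6-pin, 150W per 8-pin) are the standard PCI Express limits and add up to the 300W ceiling mentioned above. The function names are illustrative only.

```python
# Standard PCI Express power delivery limits, in watts.
PCIE_SLOT_W = 75
SIX_PIN_W = 75
EIGHT_PIN_W = 150

# Load power quoted in the article for a single Radeon HD 5870.
HD5870_LOAD_W = 188


def board_power_limit(six_pin: int = 0, eight_pin: int = 0) -> int:
    """Maximum in-spec board power for a given connector configuration."""
    return PCIE_SLOT_W + six_pin * SIX_PIN_W + eight_pin * EIGHT_PIN_W


def required_savings(gpu_count: int, per_gpu_w: int, limit_w: int) -> int:
    """Watts that must be trimmed for a naive N-GPU board to fit the limit."""
    return max(0, gpu_count * per_gpu_w - limit_w)


limit = board_power_limit(six_pin=1, eight_pin=1)
naive = 2 * HD5870_LOAD_W
over = required_savings(2, HD5870_LOAD_W, limit)

print(f"limit={limit}W naive={naive}W over={over}W")
print(f"cut needed: {over / naive:.0%} of the naive dual-GPU load")
```

Under these assumptions a dual-Cypress board would need to shed 76W, roughly a fifth of the naive total, through shared components, lower clocks, or binning.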

The “new” member of the Evergreen family is Juniper, a part born out of the fact that Cypress was too big. Juniper is the part that’s going to let AMD compete in the <$200 category that the 4850 was launched in. It’s going to be a cut-down version of Cypress, and we know from AMD’s simulation testing that it’s going to be a 14 SIMD part. We would wager that it’s going to lose some ROPs too. As AMD does not believe they’re particularly bandwidth limited at this time with GDDR5, we wouldn’t be surprised to see a smaller bus too (perhaps 192-bit?). Juniper-based cards are expected in the November timeframe.

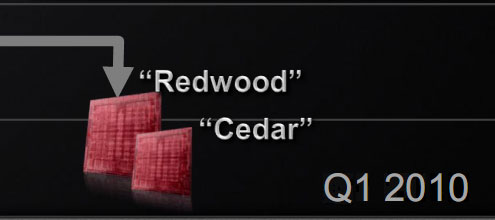

Finally, at the bottom we have Redwood and Cedar, the Evergreen family’s complements to RV710 and RV730. These will be the low-end parts derived from Cypress, and will launch in Q1 of 2010. All told, AMD will be launching 4 chips in less than 6 months, giving them a top-to-bottom range of DX11 parts. The launch of 4 chips in such a short time frame is something their engineering staff is very proud of.

327 Comments

ClownPuncher - Wednesday, September 23, 2009 - link

Absolutely, I can answer that for you.

Those 2 "ports" you see are for aesthetic purposes only, the card has a shroud internally so those 2 ports neither intake nor exhaust any air, hot or otherwise.

Ryan Smith - Wednesday, September 23, 2009 - link

ClownPuncher gets a cookie. This is exactly correct; the actual fan shroud is sealed so that air only goes out the front of the card to go outside of the case. The holes do serve a cooling purpose though; they allow airflow to help cool the bits of the card that aren't hooked up to the main cooler; various caps and what have you.

SiliconDoc - Wednesday, September 23, 2009 - link

Ok good, now we know.

So the problem now moves to the tiny 1/2 exhaust port on the back, did you stick your hand there and see how much that is blowing? Does it whistle through there? lol

Same amount of air (or a bit less) in half the exit space... that's going to strain the fan and/or reduce flow, no matter what anyone claims to the contrary.

It sure looks like ATI is doing a big favor to aftermarket cooler vendors.

GhandiInstinct - Wednesday, September 23, 2009 - link

Ryan,

Developers aren't pushing graphics anymore. It's not economical, PC game support is slowing down, everything is console now which is DX9. What purpose does this ATI serve with DX11 and all this other technology that games won't even make use of 2 years from now?

Waste of money..

ClownPuncher - Wednesday, September 23, 2009 - link

Clearly he should stop reviewing computer technology like this because people like you are content with gaming on their Wii and iPhone.

This message has been brought to you by Sarcasm.

Griswold - Wednesday, September 23, 2009 - link

So you're echoing what nvidia recently said, when they claimed dx11/gaming on the PC isnt all that (anymore)? I guess nvidia can close shop (at least the gaming relevant part of it) now and focus on GPGPU. Why wait for GT300 as a gamer?

Oh right, its gonna be blasting past the 5xxx and suddenly dx11 will be the holy grail again... I see how it is.

SiliconDoc - Wednesday, September 23, 2009 - link

rofl- It's great to see red roosters not crowing and hopping around flapping their wings and screaming nvidia is going down.

Don't take any of this personal except the compliments, you're doing a fine job.

It's nice to see you doing my usual job, albeit from the other side, so allow me to compliment your fine perceptions. Sweltering smart.

But, now, let's not forget how ambient occlusion got poo-pooed here and shading in the game was said to be "an irritant" when Nvidia cards rendered it with just driver changes for the hardware. lol

Then of course we heard endless crowing about "tesselation" for ati.

Now it's what, SSAA (rebirthed), and Eyefinity, and we'll hear how great it is for some time to come. Let's not forget the endless screeching about how terrible and useless PhysX is by Nvidia, but boy when "open standards" finally gets "Havok and Ati" cranking away, wow the sky is the limit for in game destruction and water movement and shooting and bouncing, and on and on....

Of course it was "Nvidia's fault" that "open havok" didn't happen.

I'm wondering if 30" top resolution will now be "all there is!" for the next month or two until Nvidia comes out with their next generation - because that was quite a trick switching from top rez 30" DOWN to 1920x when Nvidia put out their 2560x GTX275 driver and it whomped Ati's card at 30" 2560x, but switched places at 1920x, which was then of course "the winning rez" since Ati was stuck there.

I could go on but you're probably fuming already and will just make an insult back so let the spam posting IZ2000 or whatever its name will be this time handle it.

BTW there's a load of bias in the article and I'll be glad to point it out in another post, but the reason the red rooster rooting is not going beyond any sane notion of "truthful" or even truthiness, is because this 5870 Ati card is already perceived as "EPIC FAIL"!

I cannot imagine this is all Ati has, and if it is they are in deep trouble I believe.

I suspect some further releases with more power soon.

Finally - Wednesday, September 23, 2009 - link

Team Green - full foam ahead!

*hands over towel*

There you go. Keep on foaming, I'm all amused :)

araczynski - Wednesday, September 23, 2009 - link

is DirectX11 going to be as worthless as 10? in terms of being used in any meaningful way in a meaningful amount of games?

my 2 4850's are still keeping me very happy in my 'ancient' E8500.

curious to see how this compares to whatever nvidia rolls out, probably more of the same, better in some, worse in others, bottom line will be the price.... maybe in a year or two i'll build a new system.

of course by that time these'll be worthless too.

SiliconDoc - Wednesday, September 23, 2009 - link

Well it's certainly going to be less useful than PhysX, which is here said to be worthless, but of course DX11 won't get that kind of dissing, at least not for the next two months or so, before NVidia joins in.

Since there's only 1 game "kinda ready" with DX11, I suppose all the hype and heady talk will have to wait until... until... uhh.. the 5870's are actually available and not just listed on the egg and tiger.

Here's something else in the article I found so very heartwarming:

---

" Wrapping things up, one of the last GPGPU projects AMD presented at their press event was a GPU implementation of Bullet Physics, an open source physics simulation library. Although they’ll never admit it, AMD is probably getting tired of being beaten over the head by NVIDIA and PhysX; Bullet Physics is AMD’s proof that they can do physics too. "

---

Unfortunately for this place, one of my friends pointed me to this little exposé that shows ATI uses NVIDIA CARDS to develop "Bullet Physics" - ROFLMAO

-

" We have seen a presentation where Nvidia claims that Mr. Erwin Coumans, the creator of Bullet Physics Engine, said that he developed Bullet physics on Geforce cards. The bad thing for ATI is that they are betting on this open standard physics tech as the one that they want to accelerate on their GPUs.

"ATI’s Bullet GPU acceleration via Open CL will work with any compliant drivers, we use NVIDIA Geforce cards for our development and even use code from their OpenCL SDK, they are a great technology partner. “ said Erwin.

This means that Bullet physics is being developed on Nvidia Geforce cards even though ATI is supposed to get driver and hardware acceleration for Bullet Physics."

---

rofl - hahahahahha now that takes the cake!

http://www.fudzilla.com/content/view/15642/34/

--

Boy do we "hate PhysX" as ati fans, but then again... why not use the nvidia PhysX card to whip up some B Physics, folks I couldn't make this stuff up.