The Nehalem Preview: Intel Does It Again

by Anand Lal Shimpi on June 5, 2008 12:05 AM EST. Posted in CPUs

A Quick Path to Memory

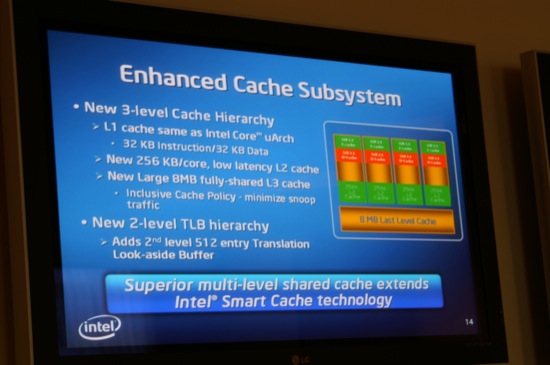

Our investigation begins with the most visibly changed part of Nehalem's architecture: the memory subsystem. Nehalem implements a very Phenom-like memory hierarchy consisting of small, fast individual L1 and L2 caches for each of its four cores and then a single, larger shared L3 cache feeding the entire chip.

Nehalem's L1 cache, although seemingly unchanged from Penryn, does grow in latency: it now takes 4 cycles to access instead of 3. The L2 cache is now only 256KB per core, where Penryn's L2 was 24x that size, and thus it can be accessed in only 11 cycles, down from 15 (Penryn added a clock cycle over Conroe to access L2).

| CPU / CPU-Z Latency | L1 Cache | L2 Cache | L3 Cache |
| --- | --- | --- | --- |
| Nehalem (2.66GHz) | 4 cycles | 11 cycles | 39 cycles |
| Core 2 Quad Q9450 - Penryn - (2.66GHz) | 3 cycles | 15 cycles | N/A |

The L3 cache is quite possibly the most impressive, requiring only 39 cycles to access at 2.66GHz. The L3 cache is a very large 8MB cache, 4x the size of Phenom's L3, yet it can be accessed much faster. In our testing we found that Phenom's L3 cache takes a similar 43 cycles to access but at much lower clock speeds (2.0GHz). If we put these numbers into relative terms it takes 21.5 ns to get a request back from Phenom's L3 vs. 14.6 ns with Nehalem's - that's nearly 50% longer in Phenom.
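The cycles-to-nanoseconds conversion above is simple enough to sketch in a few lines. This is only an illustration: the helper name `cycles_to_ns` is ours, and it uses the nominal clock speeds quoted in the text, so the last digit can differ slightly from the rounded figures above.

```python
def cycles_to_ns(cycles: int, clock_ghz: float) -> float:
    """Convert a latency in core clock cycles to nanoseconds."""
    # One cycle at f GHz lasts 1/f nanoseconds.
    return cycles / clock_ghz


# L3 latencies and clock speeds as quoted in the article.
nehalem_l3_ns = cycles_to_ns(39, 2.66)
phenom_l3_ns = cycles_to_ns(43, 2.0)

# How much longer Phenom's L3 takes, relative to Nehalem's.
extra = phenom_l3_ns / nehalem_l3_ns - 1

print(f"Nehalem L3: {nehalem_l3_ns:.2f} ns")
print(f"Phenom L3:  {phenom_l3_ns:.2f} ns")
print(f"Phenom takes {extra:.0%} longer")
```

The point of converting to time is that cycle counts alone hide the clock speed difference: 43 cycles and 39 cycles look close, but at 2.0GHz versus 2.66GHz the gap in wall-clock latency is much wider.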

While Intel did a lot of tinkering with Nehalem's caches, the inclusion of a multi-channel on-die DDR3 memory controller was the most apparent change. AMD has been using an integrated memory controller (IMC) since 2003 on its K8 based microprocessors and for years Intel has resisted doing the same, citing complexities in choosing what memory to support among other reasons for why it didn't follow in AMD's footsteps.

With clock speeds increasing and up to 8 cores (including GPUs) making their way into Nehalem based CPUs in the coming year, the time to narrow the memory gap is upon us. You can already tell that Nehalem was designed to mask the distance between the individual CPU cores and main memory with its cache design, and the IMC is a further extension of the philosophy.

The motherboard implementation of our 2.66GHz system needed some work, so our memory bandwidth/latency numbers on it were way off (slower than Core 2). Luckily we had another platform at our disposal, running at 2.93GHz, which was working perfectly. We turned to Everest Ultimate 4.50 to give us memory bandwidth and latency numbers from Nehalem.

Note that these figures are from a completely untuned motherboard and are using DDR3-1066 (dual-channel on the Core 2 system and triple-channel on the Nehalem system):

| CPU / Everest Ultimate 4.50 | Memory Read | Memory Write | Memory Copy | Memory Latency |
| --- | --- | --- | --- | --- |
| Nehalem (2.93GHz) | 13.1 GB/s | 12.7 GB/s | 12.0 GB/s | 46.9 ns |
| Core 2 Extreme QX9650 - Penryn - (3.00GHz) | 7.6 GB/s | 7.1 GB/s | 6.9 GB/s | 66.7 ns |

Memory accesses on Conroe/Penryn were quick due to Intel's very aggressive prefetchers; memory accesses on Nehalem are just plain fast. Nehalem takes a little over 2/3 of the time Penryn needs to complete a memory request, and although we didn't have time to run comparable Phenom numbers I believe Nehalem's DDR3 memory controller is faster than Phenom's DDR2 controller.

Memory bandwidth is obviously greater with three DDR3 channels: Everest measured around a 70% increase in read bandwidth. While we don't have the memory bandwidth figures here, Gary measured a 10% difference in WinRAR performance (a test that's highly influenced by memory bandwidth and latency) between single-channel and triple-channel Nehalem configurations.
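For readers who want to check the ratios quoted above, here is a minimal sketch that derives them from the Everest table. The theoretical peak calculation is our addition, not something Everest reports: it assumes 64-bit (8-byte) channels and 1066 MT/s, and it ignores other limits such as Core 2's front-side bus. The names `peak_bandwidth_gbs`, `nehalem`, and `penryn` are illustrative.

```python
# Everest Ultimate 4.50 results as listed in the table.
nehalem = {"read_gbs": 13.1, "write_gbs": 12.7, "copy_gbs": 12.0, "latency_ns": 46.9}
penryn = {"read_gbs": 7.6, "write_gbs": 7.1, "copy_gbs": 6.9, "latency_ns": 66.7}


def peak_bandwidth_gbs(transfers_mt_s: float, channels: int, bus_bytes: int = 8) -> float:
    """Theoretical DRAM peak in GB/s, assuming 64-bit (8-byte) wide channels."""
    return transfers_mt_s * bus_bytes * channels / 1000


# Fraction of Penryn's latency that Nehalem needs (a little over 2/3).
latency_ratio = nehalem["latency_ns"] / penryn["latency_ns"]

# Relative gain in measured read bandwidth (around 70%).
read_gain = nehalem["read_gbs"] / penryn["read_gbs"] - 1

# Theoretical ceilings for DDR3-1066 under the stated assumptions.
triple_channel_peak = peak_bandwidth_gbs(1066, channels=3)
dual_channel_peak = peak_bandwidth_gbs(1066, channels=2)

print(f"Latency ratio (Nehalem/Penryn): {latency_ratio:.2f}")
print(f"Read bandwidth gain: {read_gain:.0%}")
print(f"Triple-channel DDR3-1066 peak: {triple_channel_peak:.1f} GB/s")
print(f"Dual-channel DDR3-1066 peak: {dual_channel_peak:.1f} GB/s")
```

Comparing measured reads against the theoretical ceiling is a rough way to see how much headroom an untuned board leaves on the table.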

While we didn't really expect Intel to somehow do wrong with Nehalem's memory architecture, it's important to point out that it is very well implemented. Intel managed to change the cache structure and introduce an integrated memory controller while making both significantly faster than what AMD managed despite a four-year head start.

In short: Nehalem can get data out of memory quick like bunnies.

108 Comments


Anand Lal Shimpi - Thursday, June 5, 2008

I really wish I could've turned off hyperthreading :)

DivX doesn't scale well beyond 4 threads, so that's the best benchmark I could run to look at how Nehalem performs when you keep clock speeds and number of threads capped. With a 28% improvement, that's at the upper end of what we should expect from Nehalem on average.

Take care,

Anand

SiliconDoc - Monday, July 28, 2008

Great answer, explains it to a tee....

However that leaves myself and I'd bet most of the fans here with not much real world use for 4x4HT ...

I don't know should we all steal rented DVD's... by re-encoding - only use I know of that might work for the non-connected enduser.

Not like "folding" is all the rage, they would have to pay me to do their work - especially with all the "power savings" hullaballoo going on in tech.

That's great, 28% increase, ok...

So I want it in a 2 core or a single core HT... since that runs everything I do outside the University.

lol

I guess the all core usage, all the time, will hit sometime....

Calin - Thursday, June 5, 2008

First of all, the Hyperthreading in the Pentium 4 line brought at most a 20% or so performance advantage, with a -5% or so at worst. I don't have many reasons just now to think this new Hyperthreading would be vastly different.

As for the scaling to 8 cores, maybe the scaling was limited due to other issues (latency, interprocessor communication, cache coherency)? Might it be possible for DivX on this new platform to increase performance from 4 to 8 cores?

bcronce - Thursday, June 5, 2008

Intel claims that the new HT is improved and gives 10%-100% increase. The main issue with the P4 is that it had a double pumped ALU that could process 2 integers per clock. This was great for HT since you could do 2 instructions per clock. The problem came with competition for the FPU, of which there was only 1. This would cause the 2nd thread in the logical cpu to stall, and thread swapping has additional overhead.

You also run into the issue of L2 cache thrashing. If you have 2 threads trying to monopolize the FPU and also loading large datasets into cache, your cache misses go up while each thread is bottlenecked at the FPU.

techkyle - Thursday, June 5, 2008

I'd like to know what AMD fans are thinking. As one myself, I'm starting to wonder if I'm going to give in and become an Intel fan.

Intel implementing the IMC:

I can only say two things. One, it's about time. Two, THIEF!

Return of Hyper Threading:

It seems to me that some sort of intelligence must go into the design of multi-core hyper threading. If two intensive tasks are given to the processor, the fastest solution would be simple: devote one core to one thread, a second core to the other. With Hyper Threading back with a multi-core twist, what's stopping one thread from landing on the first core, first virtual, and the second thread on the first core, second virtual?

Another nail in the coffin:

AMD can provide no competition to high end Core 2 Quad machines. Even if the K10 line can jump up to par performance with the Core architecture, can they really expect to have a Nehalem competitor ready any time remotely close to Intel's launch? AMD can't afford to keep playing the power efficient and price/performance game.

AMD is going to be in an even worse position than when it was X2 vs Core 2 if they can't pull something out of their sleeves. Barcelona already isn't clock for clock competitive with the Penryn and now we hear that early Nehalems are 20-40% above Core 2?

If AMD's next processor flops, is it possible for them to drop desktop and server processors and still be a functioning (not to forget profitable) company? It's no longer a race for the performance crown. It's becoming a race to simply survive.

bcronce - Thursday, June 5, 2008

"Return of Hyper Threading:

It seems to me that some sort of intelligence must go into the design of multi-core hyper threading. If two intensive tasks are given to the processor, the fastest solution would be simple: devote one core to one thread, a second core to the other. With Hyper Threading back with a multi-core twist, what's stopping one thread from landing on the first core, first virtual, and the second thread on the first core, second virtual?"

The way Windows lists CPUs is first physicals, then logicals. So in Task Manager the first 4, on a quad core w/ HT, will be your physical CPUs and the last 4 will be your logical.

Windows, by default, will put threads on cpu 1-4 first. It will move threads around to different CPUs if it feels that one is under-taxed and another is way over-taxed.

Programmers can also force Windows to use different cores for each thread. So, a program can tell Windows to lock all threads to the first 4 CPUs, which will keep them off of the logical. You could then allow a thread that manages the worker threads to run on the logical CPUs. You would then be keeping all your hard data-crunching threads from competing with themselves and let the UI/etc threads take advantage of HT.
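To make the affinity idea concrete, here is a minimal sketch of how the bitmasks for such a split could be built. It takes the numbering described above as given (CPUs 0-3 as "physical", 4-7 as their HT siblings), which is an assumption: the real enumeration order varies by operating system and hardware, and the two hardware threads of a core are symmetric. The helper `affinity_mask` and the constant names are hypothetical; a mask like this is the kind of value the Win32 call `SetThreadAffinityMask` accepts.

```python
from typing import Iterable


def affinity_mask(cpus: Iterable[int]) -> int:
    """Build an affinity bitmask with one bit set per allowed CPU index."""
    mask = 0
    for cpu in cpus:
        if cpu < 0:
            raise ValueError(f"invalid CPU index: {cpu}")
        mask |= 1 << cpu  # bit N set means CPU N is allowed
    return mask


# Assumed layout from the comment above; not guaranteed on real systems.
PHYSICAL_CPUS = range(0, 4)  # where the data-crunching workers would be pinned
SIBLING_CPUS = range(4, 8)   # where the manager/UI threads would be allowed

worker_mask = affinity_mask(PHYSICAL_CPUS)
manager_mask = affinity_mask(SIBLING_CPUS)

print(f"worker mask:  {worker_mask:#04x}")
print(f"manager mask: {manager_mask:#04x}")
```

The two masks do not overlap, so under this assumed layout heavy worker threads would never be scheduled onto the same logical CPU as the manager threads.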

Spoelie - Thursday, June 5, 2008

Supposedly, Shanghai (the 45nm iteration of Phenom) will be around 20% faster clock per clock over Phenom. This is what AMD said itself some time ago and it has not been verified by an independent source. Judging by current benchmarks, this would put Shanghai at the same or slightly higher performance level as Penryn.

As such, a very crude estimate is that Shanghai should be as competitive to Nehalem as K8 is to Conroe. Not a very rosy outlook, so let's hope this early information is not accurate and AMD can pull something more out of its hat.

BTW, last I heard is that Bulldozer will come at the 32nm node at the earliest, since the design is supposedly too complex for 45nm. So no instant relief from that corner. AMD will be fighting a harsh battle in the coming years.

Calin - Thursday, June 5, 2008

"Two, THIEF"

AMD's vector processing is 3DNow!, if I remember correctly. Yet Intel's versions of it are touted on its processors instead (SSE2, SSE3). Now who's the thief?

swaaye - Thursday, June 5, 2008

MMX? Or, MDMX? Who copied who? Nobody, really. SIMD has been around forever.

Retratserif - Thursday, June 5, 2008

I really would not try and think of it as a Fan base. A majority of the OC'er and Benchmarkers use what works for what they are doing. I have owned and water cooled AMD CPU's. It was great at the time.

Once Conroes came out the door swung the other way. Technology is like the ocean, it comes in waves. All waves die out. Fortunately we as the user are living it up because of Intel's success. At the same time I truly hope that AMD/ATI does something in response to the high power cpu's. If not we get what ever intel wants to give us.

There will always be two sides to each story. Since Intel is on top and unscathed, they have time to perfect chips before they go mainstream. Same way we have seen the delay in Yorkfields. There was something seriously wrong, and they had time to address it before it was in the hands of thousands of users.

Ok, I can say I am a fan.... of what ever works the best for what I do. Price/Performance/Practicality. You take what you can afford and make it work harder for every penny you put in it.

One thing you have to keep in mind. AMD is selling more budget CPU's and integrated/onboard video PC's to large companies like Dell and HP. They are moving more aggressively into typical home PC and mobile use. Intel just does not do very well there atm. With Ati in the pocket and being pretty green on power consumption, you can get a good mobile AMD that will do everything a typical PC user will ever need for 2 years at a good price.