Intel's Atom Architecture: The Journey Begins

by Anand Lal Shimpi on April 2, 2008 12:05 AM EST - Posted in CPUs

The Atom processor's architecture is not about being the fastest, but about being good enough for the tasks at hand. A product like ASUS' EeePC could not have existed 5 years ago; the base level of system performance simply wasn't high enough. These days there's still a need for faster systems, but there's also room for systems that aren't pushing the envelope yet are fast enough for what they need to do.

The complexity of tasks like composing emails, web browsing and viewing documents is increasing, but not at the rate that CPU performance is. The fact that our hardware is so greatly outpacing the demands of some of our software leaves room for a new class of "good enough" hardware. So far we've seen a few companies, such as ASUS, take advantage of this trend, but it was inevitable that Intel would join the race.

One of my favorite movies as a kid was Back to the Future. I loved the first two movies, and naturally as a kid into video games, cars and technology my favorite was the second movie. In Back to the Future II our hero, Marty McFly, journeys to the future to stop his future son from getting thrown in jail and ruining the family. While in the future he foolishly purchases a sports almanac and attempts to take it back in time with him. The idea being that armed with knowledge from the future, he could make better (in this case, more profitable) decisions in the past.

I'll stop the analogy there because it ends up turning out horribly for Marty, but the last sentence sums up Intel's approach with the Atom processor. Imagine if Intel could go back and remake the original Pentium processor with everything its engineers have learned in the past 15 years, and build it on a very small, very cool 45nm manufacturing process. We've spent the past two decades worrying about building the fastest microprocessors; it turns out that now we're able to build some very impressive fast-enough microprocessors.

The chart below tells an important story:

| Processor | Manufacturing Process | Transistor Count | Die Size |
|---|---|---|---|
| Intel Pentium (P5) | 0.80µm | 3.1M | 294 mm^2 |
| Intel Pentium Pro (P6) | 0.50µm | 5.5M* | 306 mm^2* |
| Intel Pentium 4 | 0.18µm | 42M | 217 mm^2 |
| Intel Core 2 Duo | 65nm (0.065µm) | 291M | 143 mm^2 |
| Intel Core 2 Duo (Penryn) | 45nm (0.045µm) | 410M | 107 mm^2 |

In 1993, it took a great deal of work for Intel to cram 3.1 million transistors onto a nearly 300 mm^2 die to make the original Pentium processor. These days, Intel manufactures millions of Core 2 Duo processors, each made up of 410 million transistors (over 130 times the transistor count of the original Pentium), in an area around 1/3 the size.

Intel isn't stopping with Core 2; Nehalem will offer even greater performance and push transistor counts even further. By the end of the decade we'll be looking at over a billion transistors in desktop microprocessors. What's interesting, however, isn't just what Intel can do to push the envelope on the high end, but rather what Intel can now do with simpler designs on the low end.

What's possible today on 45nm...

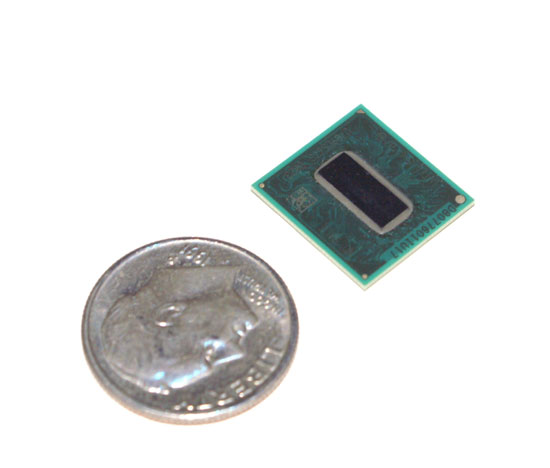

With a 294 mm^2 die size, Intel could not manufacture the original Pentium for use in low cost devices. Today, however, things are a bit different. Intel doesn't manufacture chips on a gigantic 0.80µm process; we're at the beginning of a transition to 45nm. If left unchanged, Intel could make the original Pentium on its latest 45nm process with a die size of less than 3 mm^2. Things get even more interesting if you consider that Intel has learned quite a bit in the 15 years since the debut of the original Pentium. Imagine what it could do with a relatively simple x86 architecture now.
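To put a rough number on that shrink, here is a minimal back-of-the-envelope sketch. It assumes ideal scaling, where die area shrinks with the square of the feature size. That is a simplification on my part: real designs never scale this cleanly, since I/O pads and analog circuitry don't shrink along with the logic. The function name and variables are purely illustrative, and the inputs come from the table above.

```python
def ideal_scaled_area(area_mm2: float, old_node_um: float, new_node_um: float) -> float:
    """Estimate die area after a process shrink, assuming ideal scaling.

    Assumption: every linear dimension shrinks by new_node / old_node,
    so area shrinks by that ratio squared. Real chips scale less well.
    """
    linear_shrink = new_node_um / old_node_um
    return area_mm2 * linear_shrink ** 2


# Figures taken from the table above.
pentium_area_mm2 = 294.0  # original Pentium (P5) die size
pentium_node_um = 0.80    # original Pentium process
target_node_um = 0.045    # 45nm expressed in microns

scaled = ideal_scaled_area(pentium_area_mm2, pentium_node_um, target_node_um)
print(f"Ideal P5 die at 45nm: {scaled:.2f} mm^2")  # prints "Ideal P5 die at 45nm: 0.93 mm^2"
```

Under these idealized assumptions the result comes in under 1 mm^2, which sits comfortably within the "less than 3 mm^2" figure even after leaving generous headroom for everything that doesn't shrink perfectly.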

46 Comments

highlandsun - Thursday, April 3, 2008 - link

With all due respect to Fred Weber, with Atom at 47 million transistors, it's pretty obvious that the 10% figure for X86 ISA compatibility is not negligible, particularly in this performance-at-absolute-minimum-power space. Anybody using X86 in tiny embedded systems is automatically giving up a chunk of their power budget that someone using a cleaner instruction set encoding can apply directly to useful work. And as the previous poster already pointed out - source code portability is the only thing that matters to application developers, and that's a non-problem these days. Using the X86 instruction set encoding is stupid. Using it on a low-power-budget device is suicide.

Jovec - Thursday, April 3, 2008 - link

I don't think the 10% reference meant 10% of all chips, but rather 10% of the current chip at the time the statement was made. In other words, x86 instruction decoding requires (roughly) a fixed amount of transistors for any chip, so the smaller the die size and larger the transistor count, less and less space is devoted to it.

highlandsun - Thursday, April 3, 2008 - link

Yes, that's obvious. And it's also obvious that Atom at 47 million transistors is paying a greater proportionate cost than Core2 Duo at 410 million transistors. In 2002 when Fred made that statement, AMD's current chip was the AthlonXP Thoroughbred, with about 37 million transistors. At the same time the Pentium 4 had 55 million. Put in context, I'd guess that the Atom at 47M vs P4 at 55M has more than 10% of its resources devoted to X86 decoding.

Also, Fred's statement in 2002 didn't take into account the additional complexity introduced by the AMD64 instruction extensions, where now a single instruction may be anywhere from 1 to 15 bytes long. Given that you're doing a completely clean ground-up chip design in the first place, it would have made more sense (from both a power budget and real estate perspective) to design a clean, orthogonal, uniform-length encoding at the same time.

Cross-platform ABI compatibility is stupid in the context they're aiming for; nobody is going to run their PC version of Crysis or MSWord on their cellphone. All that matters is API compatibility. With a consistent API, you can still run a separate binary translator if you really really want to move a desktop app to your mobile device but in most cases it would be a bad idea because a desktop app is unlikely to take advantage of power-saving APIs that would be important on a mobile. I.e., most of the time you're going to want purpose-built mobile apps anyway.

floxem - Tuesday, April 15, 2008 - link

I agree. But it's Intel. What do you expect?

maree - Thursday, April 3, 2008 - link

I don't think MS will be ready before Windows 7 is released, which is another 3-5 years... and might coincide with Moorestown. Microsoft started work on WindowsLite only after releasing Vista. Vista is bloatware as of now. As of now MS has to rely on crippled versions of XP and Vista like Starter and Home, which is not ideal.

Apple and Linux are going to have a free run till then...

TA152H - Wednesday, April 2, 2008 - link

Bringing up the Pentium is a little strange, because the whole market is completely different.

The Pentium wasn't supposed to be for everyone when it came out. The processor market was different back then where previous generations lasted a long, long time. The Pentium wasn't supposed to replace the 486 right away, or even quickly, and being huge and a terrible power hog was acceptable because the initial iteration was just for a very small group of people who absolutely needed it. The original Pentium had a lot of problems, and struggled badly to reach 66 MHz, so they sold most of their processors at 60 MHz. The second generation was intended more for mainstream.

Nowadays the latest generation replaces the earlier much more quickly, and has to cover more market segments more quickly. I still remember IBM releasing new machines for the 8086 in 1987. That's 9 years after the chip was made. It's just a different market.

The Pentium is nothing like the Silverthorne though, and it's a strange comparison. The Pentium executed x86 instructions, it wasn't decoupled. It also had both pipes, the U and V, lockstepped, which is a limitation the Silverthorne doesn't have.

Saying the Pentium Pro was the first processor that allowed out of order processing is strange indeed. The only other processor this would have made sense with was the Pentium, since it was the only previous processor that was superscalar. So, they only made one in order processor, and then went to out of order with the next. It's difficult to see the extrapolation from this that it will be five years or more before Silverthorne goes out of order. It might be that long, but the backwards reference shouldn't be used to back that; it does more to contradict it.

Anand Lal Shimpi - Wednesday, April 2, 2008 - link

The Pentium reference was merely to show that what was once a huge, 300mm^2 design could now be built on a much, much smaller scale. And starting from scratch it's now possible to build something in-order that's significantly faster.

The Pentium was an obvious comparison given that it was Intel's last two-issue in-order design, but I didn't mean to imply anything beyond that.

It won't be too long before we'll be able to have something the speed of a Core 2 in a similarly small/cool running package as well :)

Take care,

Anand

fitten - Wednesday, April 2, 2008 - link

I remember back in the days of the Mac FX we talked about 'what ifs' like making a 6502 with the (then) modern process technologies and how fast would it run. I wonder what about now :)

crimson117 - Wednesday, April 2, 2008 - link

I am SO going to hold you to that! But I can only hope "won't be long" will mean within 12 months rather than within 12 years :P

Especially after my fiasco mounting a Freezer 7 Pro on an Abit IP35-E, I'd love if a heatsink weren't even necessary.

Anand Lal Shimpi - Wednesday, April 2, 2008 - link

12 months won't be a reality unfortunately :) But look at it this way, the first Pentium M came out in 2003? And 5 years later we're able to have somewhat comparable performance with the Atom processor.

I'm really curious to see what happens with Atom on 32nm...