ATI Radeon HD 3870 X2: 2 GPUs 1 Card, A Return to the High End

by Anand Lal Shimpi on January 28, 2008 12:00 AM EST. Posted in GPUs

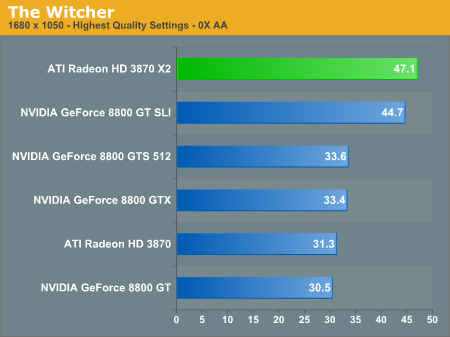

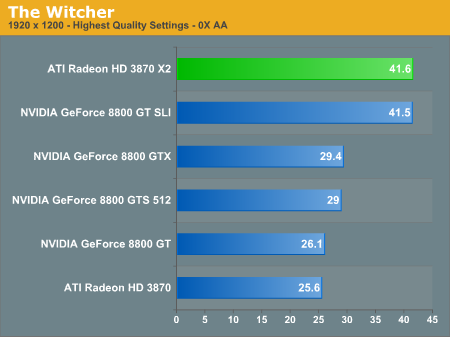

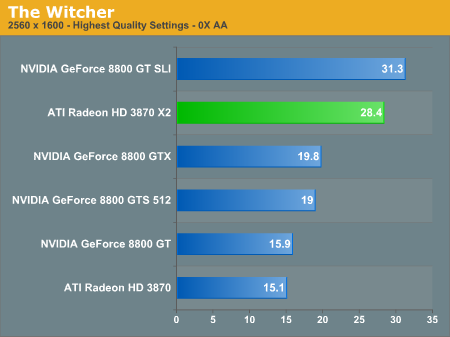

The Witcher

To measure performance when playing The Witcher we ran FRAPS during the game's first major cutscene at the start of play. We started recording frame rates as the cutscene faded in and stopped right before Geralt grabs his sword.

The Witcher really likes the Radeon HD 3870 X2: it was faster than even a pair of 8800 GTs running in SLI, except at 2560 x 1600. Once again, we're talking about a 40%+ performance advantage over the closest single-card NVIDIA solution.

74 Comments


HilbertSpace - Monday, January 28, 2008 - link

When giving the power consumption numbers, what is included with that? I.e., how many fans, DVD drives, HDs, etc.?

m0mentary - Monday, January 28, 2008 - link

I didn't see an actual noise chart in that review, but from what I understood, the 3870GX2 is louder than an 8800 SLI setup? I wonder if anyone will step in with a decent aftermarket cooler solution. Personally I don't enjoy playing with headphones, so GPU fan noise concerns me.

cmdrdredd - Monday, January 28, 2008 - link

then turn up your speakers

drebo - Monday, January 28, 2008 - link

I don't know. It would have been nice to see power consumption for the 8800GT SLI setup as well as noise for all of them. I don't know that I buy that power consumption would scale linearly, so it'd be interesting to see the difference between the 3870 X2 and the 8800GT SLI setup.

Comdrpopnfresh - Monday, January 28, 2008 - link

I'm impressed. Looking at the power consumption figures, and the gains compared to a single 3870, this is pretty good. They got some big performance gains without breaking the bank on power. How would one of these cards overclock though?

yehuda - Monday, January 28, 2008 - link

No, I'm not impressed. You guys should check the isolated power consumption of a single-core 3870 card: http://www.xbitlabs.com/articles/video/display/rad...

At idle, a single-core card draws just 18.7W (or 23W if you look at it through an 82% efficient power supply). How is it that adding a second core increases idle power draw by 41W?

It would seem as if PowerPlay is broken.
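
The arithmetic in the comment above can be checked with a few lines of Python. This is only a sketch: the 18.7W, 82% and 41W figures are taken from the comment, while the function name `wall_power` and the assumption that the 41W increase was measured at the wall socket are illustrative and not confirmed by the review.

```python
def wall_power(dc_watts: float, psu_efficiency: float) -> float:
    """Power drawn at the wall for a given DC load (hypothetical helper)."""
    if not 0 < psu_efficiency <= 1:
        raise ValueError("psu_efficiency must be in the range (0, 1]")
    return dc_watts / psu_efficiency


# Figures quoted in the comment.
single_gpu_dc = 18.7   # W, isolated idle draw of a single-GPU 3870
psu_efficiency = 0.82  # 82% efficient power supply
idle_increase = 41.0   # W, assumed here to be measured at the wall

# One GPU as seen from the wall socket.
single_gpu_wall = wall_power(single_gpu_dc, psu_efficiency)

# The same 41 W increase converted back to the DC side of the supply.
idle_increase_dc = idle_increase * psu_efficiency

print(f"Single GPU at the wall: {single_gpu_wall:.1f} W")
print(f"Idle increase on the DC side: {idle_increase_dc:.1f} W")
```

Under these assumptions a single GPU accounts for roughly 23W at the wall, which is the gap between expected and measured idle draw that the comment is asking about.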

erikejw - Tuesday, January 29, 2008 - link

ATI smokes Nvidia when it comes to idle power draw.

Spoelie - Monday, January 28, 2008 - link

GDDR4 consumes less power than GDDR3, given that the speed difference is not that great.

FITCamaro - Monday, January 28, 2008 - link

Also you figure the extra hardware on the card itself to link the two GPUs.

yehuda - Tuesday, January 29, 2008 - link

Yes, it could be that. Tech Report said the bridge chip eats 10-12 watts.