ATI Radeon X1950 Pro: CrossFire Done Right

by Derek Wilson on October 17, 2006 6:22 AM EST

Posted in GPUs

Half-Life 2: Episode One Performance

Episode One of the new Half-Life 2 series makes use of recent Source engine updates to include Valve's HDR technology. While some developers have shipped HDR implementations that won't allow antialiasing (even on ATI cards), Valve put a high value on building an HDR implementation that everyone can use with whatever settings they want. Consistency of experience is usually not important enough to developers who care about pushing the bleeding edge of technology, so we are very happy to see Valve going down this path.

We use the built-in timedemo feature to benchmark the game. Our timedemo consists of a protracted rocket launcher fight and features plenty of debris and pyrotechnics. The Source engine timedemo feature is more like the nettimedemo of id's Doom 3 engine, in that it plays back more than just the graphics. In fact, Valve includes some fairly intensive diagnostic tools that will reveal almost everything about every object in a scene. We haven't found a good use for this in the context of reviewing computer hardware, but our options are always open.

The highest visual quality settings possible were used, including the "reflect all" setting, which is not enabled by default, and anisotropic filtering was set at 8x. While the Source engine is notorious for giving great framerates on almost any hardware setup, we find the game isn't as enjoyable if it isn't running at 30fps or better. This is very attainable even at the highest resolution we tested on most cards, and thus our target framerate is a little higher in this game than others.

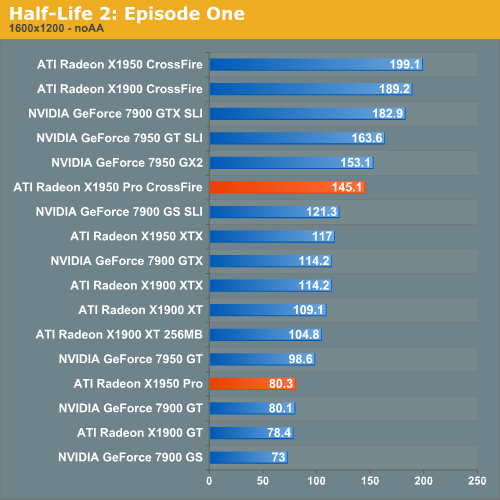

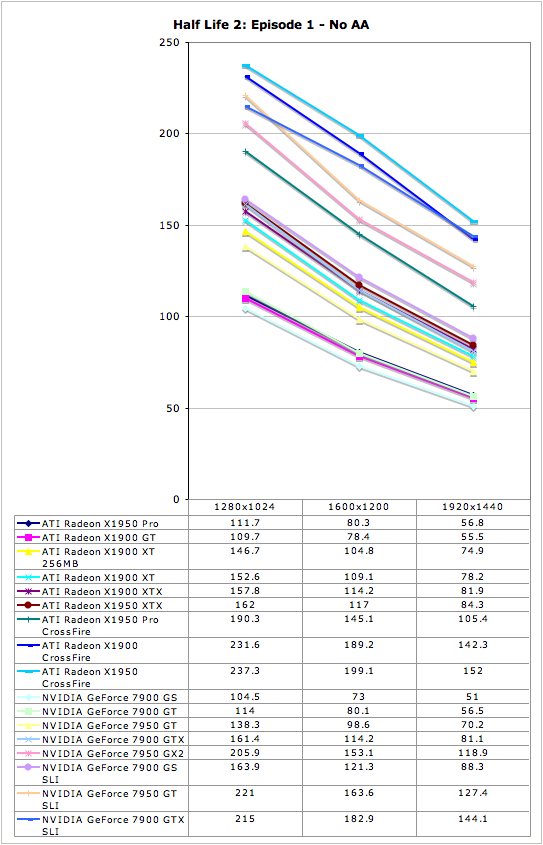

Showing about a 10% performance advantage over the 7900 GS, the X1950 Pro delivers a good level of performance under Half-Life 2: Episode One without AA enabled. Combine that with the fact that CrossFire delivers about an 80% performance improvement compared to SLI's 66%, and we have a clear winner in the multi-GPU department here.
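The scaling figures quoted here are percentage gains of the two-card result over the single-card result. The short Python sketch below shows that arithmetic. The frame rates in it are hypothetical placeholders chosen only to land near 80% and 66%; they are not values read from the charts, and `scaling_percent` is an illustrative name.

```python
def scaling_percent(single_fps: float, dual_fps: float) -> float:
    """Percentage gain of a two-card setup over a single card."""
    if single_fps <= 0:
        raise ValueError("single_fps must be positive")
    return (dual_fps - single_fps) / single_fps * 100.0


# Hypothetical frame rates, NOT taken from the benchmark charts.
crossfire = scaling_percent(single_fps=50.0, dual_fps=90.0)
sli = scaling_percent(single_fps=50.0, dual_fps=83.0)

print(f"CrossFire scaling: {crossfire:.0f}%")
print(f"SLI scaling: {sli:.0f}%")
```

Perfect scaling would be 100%, so a figure near 80% means the second card recovers most, but not all, of its theoretical contribution.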

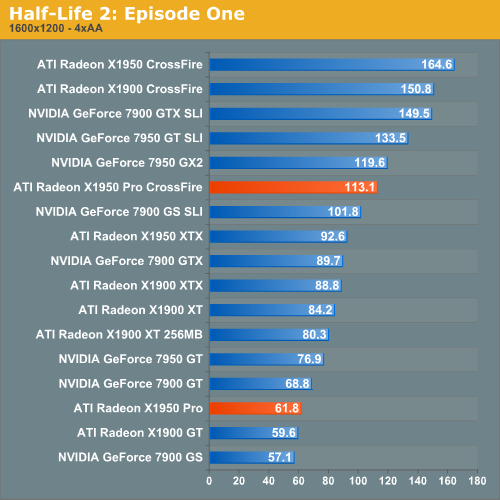

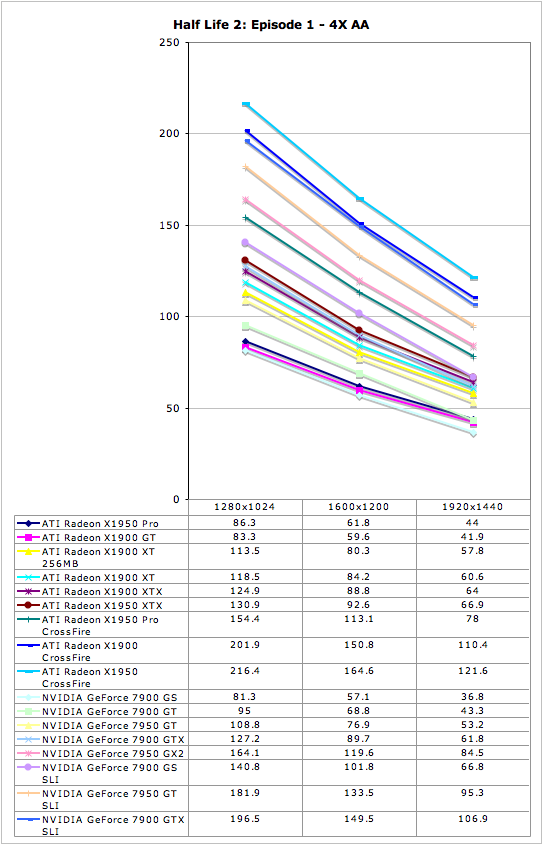

Enabling 4xAA under HL2:EP1 closes the gap between ATI and NVIDIA at the $200 mark, but still leaves ATI in the lead. The same is true in the multi-GPU arena.

45 Comments


Spoelie - Tuesday, October 17, 2006 - link

They're total system power, we're not gonna see 180W gpu's till november :)

Aikouka - Tuesday, October 17, 2006 - link

Those cards are going to be so hot.

Sorry, that was my one and only bad pun of the day :P.

Aikouka - Tuesday, October 17, 2006 - link

First off, it was an interesting article and it's nice to see that ATi is making changes to "fix" the poor dual-card implementation :).

But now akin to my subject line, I was wondering if you [Anandtech] would be keen on adding in a "Performance / Price" sort of chart at the end. The idea would be to keep different quality modes separate (i.e. No-AA/No-AF and #x-AA/#x-AF would need separate charts or only one chart but using one set of data) while ordering cards by their average FPS or "average ranking". Such as, if Video Card A is seen in spot #1 the most, it stays there in the chart regardless of the figure listed. I'd say this is more of a user-friendly idea than anything required to be practical. But here's what I think a typical bar would represent:

[ Video_Card_Name - (Average_FPS/Price) - Average_FPS ]

The idea is that some video cards may be return the same value (i.e. 120/$400 and 60/$200), but listing cards in their typical performance standing allows someone to say "well, hey... I like the performance ratio of this card, and I don't need 120 FPS!"

I think it may provide a way for people who read the benchmarks to get a real world idea of these cards rather than an "in box" idea, because as nice as it is to see a card produce 120 FPS... how much will we have to produce to purchase it ;).

Just something I thought up while looking at pages and pages of charts and not knowing how "worthwhile" the cards really were for the performance.

P.S. I'd love to hear comments!
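A minimal Python sketch of the bar format proposed in this comment, for illustration only. The card names, the `Card` class, and the `chart_rows` helper are hypothetical; the two data points reuse the commenter's own 120/$400 and 60/$200 example, and ordering by average FPS is one reading of the "typical performance standing" idea.

```python
from dataclasses import dataclass


@dataclass
class Card:
    name: str
    avg_fps: float
    price_usd: float

    @property
    def fps_per_dollar(self) -> float:
        # The "Average_FPS/Price" figure from the proposed bar.
        return self.avg_fps / self.price_usd


def chart_rows(cards):
    """Order cards by average FPS (not by ratio) and format one bar per card."""
    ordered = sorted(cards, key=lambda c: c.avg_fps, reverse=True)
    return [
        f"[ {c.name} - ({c.fps_per_dollar:.2f}) - {c.avg_fps:.0f} ]"
        for c in ordered
    ]


# Hypothetical cards using the example figures from the comment.
cards = [Card("Card B", 60, 200), Card("Card A", 120, 400)]
for row in chart_rows(cards):
    print(row)
```

Both cards come out with the same ratio of 0.30 FPS per dollar, which is the case the comment describes: the ratio alone cannot separate them, so the listing order and the raw FPS figure carry the rest of the information.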

Spoelie - Tuesday, October 17, 2006 - link

I don't think it'll be very practical for most people. When a graphic card purchase is being considered, most people just have a hard cash target in mind (for example, 200$) and just look for the fastest card at that price point. The only exception to this is when those people notice that a small rise in their budget allows a much more powerful graphic card purchase, e.g. 6600gt for 100$ but 7600gt for 130$

So as long as anandtech keeps comparing on pricepoints and of course mentioning any caveats/feature differences/possible better deals higher up, there's no real need for such a chart.

but that's just my opinion

Aikouka - Tuesday, October 17, 2006 - link

That's really a view of someone who's looking to spend x amount of dollars trying to find the best item for that price, which is one thing that's kept in focus. My proposed chart method helps to show two things really, what's the best "bang for your buck" and allows you to see if that performance pricing is the level of performance that you want.

To go into detail a bit about that, if the best performance ratio was .3 (120fps/$400 or 60fps/$200, etc), someone might go, "Well, hey... I like the performance rating on these two cards, but I don't need something that fast." So, the user would shoot for the 60fps card.

But in your case, you most likely benefit from what's currently done. For me, I could really care less how much a new video card costs me. I got tired of spending smaller amounts on mediocre performance years ago and I've tried to keep my dollars spent in the high-end sector (the range of high-end cards, not necessarily referring to the ultra-high-end-uber cards only) as much as possible to avoid having to perform constant upgrades to keep that level of detail that I like (I can't stand "jaggies" ... I'm just too anal about things like that).

For example, my current 6800GT was the 2nd top performing graphics card when I bought it, but it simply doesn't suit my needs anymore as below 30FPS (in more render-heavy areas) in WoW at 1280x1024 with high graphics settings is just subpar. Now, my card is old and about to be replaced by another generation (the G80). Or maybe I just need one of them Killer NICs (just kidding :P).

I think a good thing to mention is that the bar system that I mentioned may be better for a bulk review more than a single card review. Especially since a lot of people may come into the review of a single card knowing all the information about the other cards, so they're only performing minor mental comparisons. But in a bulk review, there's a lot more information to keep in check, so an overall comparison that takes a couple of the specifics about a card ( average performance and price mainly ) could be beneficial.

Thanks for the comments!

Aikouka - Tuesday, October 17, 2006 - link

"The idea is that some video cards may be return the same value"

should be...

"The idea is that some video cards may return the same value"

Stupid me deleting and rewriting so much that I'll leave in a word from a prior revision XD. Oh yeah, and I had to make sure to post this fix before someone sought it upon themselves to ignore the entire post, but to make a comment on my little accident :P.

bupkus - Tuesday, October 17, 2006 - link

With other CrossFire configurations as a guide, we can easily expect X1950 Pro to nearly double its single card performance and put it on par with the 7950 GX2 and 7900 GT SLI configurations. As for single card performance, we see the trend of X1950 Pro domination continuing. Performance greater than that of the 7900 GS and GT for $200 is quite a plus.

takumsawsherman - Tuesday, October 17, 2006 - link

Now all ATI has to do is get rid of that Catalyst Control Center. I have been using a Radeon 9700 Pro for two years now, and I have been almost perfectly happy with it except for two problems: It sometimes loses TV mode with no explanation, and I have to remove and re-add the TV in CCC. The other is that CCC takes anywhere from 10-20 seconds to load up on an Athlon XP 2500+. The old control panel set opened within 2 or three seconds (the advanced screens). I've set up a quick profile, but even right-clicking the taskbar icon has a huge delay (often ten seconds or more) before the menu appears, and then sometimes activating the profile doesn't work, and I have to set it manually (sometimes it does work). After initial launch, it does open more quickly (cached, I assume). I assume that this is because of the use of .NET.

Also, ZoneAlarm reports that CLI.EXE (listed as various ATI apps) is listening to TCP ports 1052, 1057, and 1058. There are currently three CLI.exe processes running on my system, taking 6,132K, 5,800K, and 3,572K of memory respectively. That is about 15MB of memory, and I don't have CCC open. My old Matrox G400Max had tons of options in the advanced screens, had a better TV output quality, and didn't require all of this garbage. The old ATI control panel even seemed easier to use.

I would love to get a nice new x1950, as Oblivion gets pretty choppy even at fairly low settings. But I really want to get away from these bulky system tray apps. And look at the update process here: https://support.ati.com/ics/support/default.asp?de...

That is ridiculous. Can they not make an installer that removes their own cruft before installing the new version? It's a chore to put a new driver in, and it shouldn't be.

Zoomer - Thursday, October 19, 2006 - link

I think this is partly microsoft's fault. .Net framework is a POS.

ATi should shoulder some of the blame too, for choosing a crappy base to program on. I would think even java based apps are not this bad.

mesyn191 - Friday, October 20, 2006 - link

I dunno... I personally don't like the whole CCC/CP approach to config. the graphics card either, but nV's implementation ain't half bad.