ATI Radeon X1950 Pro: CrossFire Done Right

by Derek Wilson on October 17, 2006 6:22 AM EST - Posted in GPUs

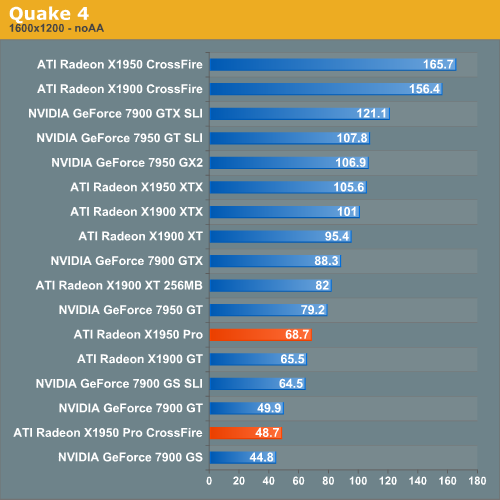

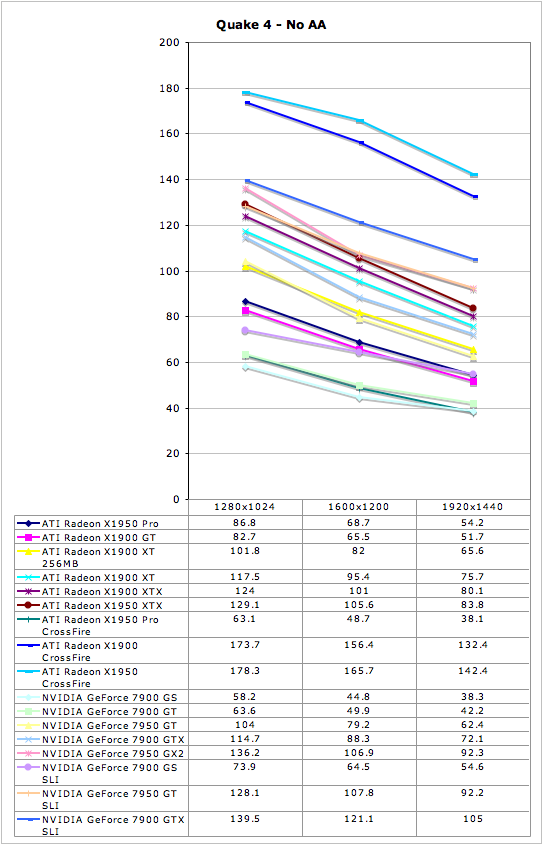

Quake 4 Performance

There has always been a lot of debate in the community surrounding pure timedemo benchmarking. We have opted to stick with the timedemo test rather than the nettimedemo option for benchmarking Quake 4. To be clear, this means our test results focus mostly on the capability of each graphics card to render frames generated by Quake 4. The frame rates we see here don't directly translate into what one would experience during game play.

Additionally, Quake 4 limits frame rate to 60 fps during gameplay whether or not VSync is enabled. Performance characteristics of a timedemo do not reflect actual gameplay. So why do we do them? Because the questions we are trying to answer have only to do with the graphics subsystem. We want to know what graphics card is better at rendering Quake 4 frames. Any graphics card that does better at rendering Quake 4 frames will handle Quake 4 better than another card. While that doesn't mean the end user will necessarily see higher performance throughout the game, it does mean that the potential for seeing more performance is there. For instance, if the user upgrades CPUs while keeping the same graphics card, having higher potential GPU performance is going to be important.

What this means to the end user is that in-game performance will almost always be lower than timedemo performance. It also means that graphics cards that do slightly better than other graphics cards will not always show a tangible performance increase on an end user's system. As long as we keep these things in mind, we can make informed conclusions based on the data we collect.
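As a rough illustration of that reasoning, the sketch below models the in-game frame rate as the slowest of three limits: the graphics card, the rest of the system, and the 60 fps engine cap. The function name `in_game_fps` and all of the numbers other than the cap are hypothetical and chosen for illustration; they are not measured results, and the real relationship between timedemo and gameplay performance is more complicated than a simple minimum.

```python
# Simplified, hypothetical model: the frame rate seen in game is bounded by
# the slowest of the GPU, the rest of the system, and the engine's cap.
ENGINE_CAP_FPS = 60.0  # Quake 4's in-game limit, as described above


def in_game_fps(gpu_fps: float, system_fps: float,
                cap: float = ENGINE_CAP_FPS) -> float:
    """Return the frame rate a player would see under this simple model."""
    return min(gpu_fps, system_fps, cap)


# Made-up timedemo results for two cards (not measured data).
card_a, card_b = 48.0, 56.0

# With a slow CPU limiting the system to 40 fps, the cards look identical.
print(in_game_fps(card_a, 40.0), in_game_fps(card_b, 40.0))

# After a CPU upgrade, the faster card's extra potential becomes visible.
print(in_game_fps(card_a, 120.0), in_game_fps(card_b, 120.0))

# Two cards that both exceed the cap are indistinguishable in game.
print(in_game_fps(80.0, 120.0), in_game_fps(90.0, 120.0))
```

Under these assumptions, the timedemo number acts as a ceiling on what a card can deliver, which is why it is useful for comparing cards even though it is not the frame rate a player sees.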

Our benchmark consists of the first few minutes of the first level. This includes both indoor and outdoor sections, with the initial few fire fights. We tested the game with Ultra Quality settings (uncompressed normal maps), and we enabled all the advanced graphics options except for VSync. Id does a pretty good job of keeping framerate very consistent, and so in-game framerates of 25 are acceptable. While we don't have the ability to make a direct mapping to what that means in the timedemo test, our experience indicates that a timedemo fps of about 35 translates into an enjoyable experience on our system. This will certainly vary on other systems, so take it with a grain of salt. The important thing to remember is that this is more of a test of relative performance of graphics cards when it comes to rendering Quake 4 frames -- it doesn't directly translate to Quake 4 experience.

Before we get to performance analysis here, we must note that ATI has confirmed our numbers and indicated that Quake 4 performance with X1950 Pro CrossFire suffers from a driver issue that will be resolved in an upcoming version of Catalyst drivers.

A single 7900 GS loses quite handily to the X1950 Pro under Quake 4 without AA enabled. We won't be able to talk about X1950 Pro CrossFire performance until ATI fixes the current driver issue. For now, we do see proper scaling under Quake 4 with High Quality mode enabled rather than Ultra Quality.

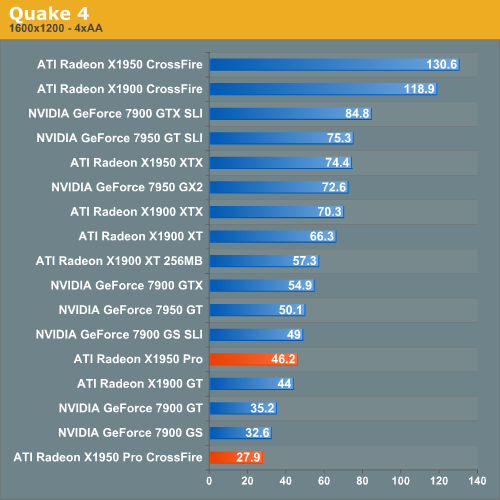

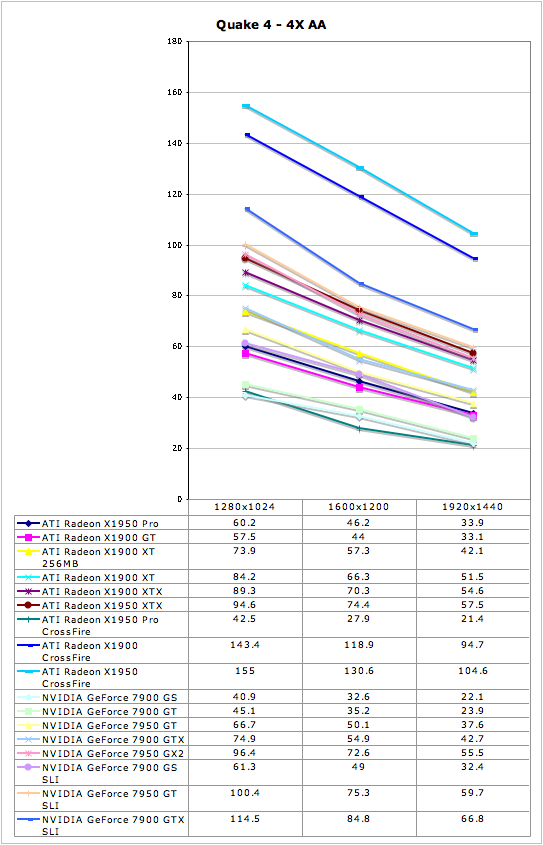

Performance characteristics with 4xAA enabled are similar to those without AA. The 7900 GS does close the gap a little with the X1950 Pro, but it isn't nearly enough to put them in the same category.

45 Comments

Zoomer - Thursday, October 19, 2006 - link

Is this an optical shrink to 80nm?

Answering this question will put overclocking expectations in line. Generally, optically shrunk cores from TSMC overclock to about the same as the original or perhaps slightly worse.

coldpower27 - Friday, October 20, 2006 - link

Well no, as this pipeline configuration didn't exist natively before on the 90nm node. It's a 3 Quad Part, so it's based on R580 but has 1 Quad physically removed as well as being shrunk to 80nm. Not to mention Native Crossfire support was added onto the die.

Spoelie - Friday, October 20, 2006 - link

Optical shrink, this is 80nm and the original was 90nm. You're normally correct because the first optical shrink usually does not have the same technologies as the process higher up (low-k and SOI for example, this was the case with 130nm -> 110nm), but I don't think it's the case for this generation. Regardless, haven't seen any overclocking articles on it yet so I'm quite curious.

Spoelie - Friday, October 20, 2006 - link

oie, maybe I should add that it's reworked as well, so both actually. Since this core didn't exist before (rv570 and that pipeline configuration), I don't think that they just sliced a part of the core...

Zstream - Tuesday, October 17, 2006 - link

Beyond3D reported the spec change a month before anyone received the card. I think you need to do some FAQ checking on your opinions mate.

All in all decent review but poor unknowledgeable opinions…

DerekWilson - Wednesday, October 18, 2006 - link

Just because ATI made the spec change public does not mean it is alright to change the specs of a product that has been shipping for 4 months.

X1900 GT has been available since May 9 as a 575/1200 part.

The message we want to send isn't that ATI is trying to hide something, it's that they shouldn't do the thing in the first place.

No matter how many times a company says it changed the specs of a product, when people search for reviews they're going to see plenty that have been written since May talking about the original X1900 GT.

Naming is already ambiguous enough. I stand by my opinion that having multiple versions of a product with the exact same name is a bad thing.

I'm sorry if I wasn't clear on this in the article. Please let me know if there's anything I can reword to help get my point across.

Zoomer - Thursday, October 19, 2006 - link

This is very common. Many vendors in the past have passed off 8500s that run at 250/250 instead of the stock 275/275, and don't label them as such.

There are some Asus SKUs that have this same handicap, but I can't recall which models those were.

xsilver - Tuesday, October 17, 2006 - link

any word on what the new price for the x1900gt's will be now that the x1950pros are out?

or are they being phased out and no price drop is being considered?

Wellsoul2 - Monday, November 6, 2006 - link

You guys are such cheerleaders..

For a single card buy why would you get this?

Why would you buy the 1900GT even after the 1900XT 256MB came out?

I got my 1900XT 256MB for $240 shipped..

Except for power consumption it's a much better card.

You get to run Oblivion great with one card.

Two cards is such a scam. More expensive motherboard..power consumption etc.

This is progress? CPU's have evolved..

It's hard to even find a motherboard with 3 PCI slots..

What a scam! Where's my ultra-fast HDTV board for PCI Express?

Seriously..Why buy into SLI/Crossfire? Why not 2 GPU's on one card?

Too late..You all bought into it.

Sorry I am just so sick of the praise for this money-grab of SLI/Crossfire.

jcromano - Tuesday, October 17, 2006 - link

Are the power consumption numbers (98W idle, 181W load) for just the graphics card or are they total system power?

Thanks in advance,

Jim