ATI's New High End and Mid Range: Radeon X1950 XTX & X1900 XT 256MB

by Derek Wilson on August 23, 2006 9:52 AM EST- Posted in

- GPUs

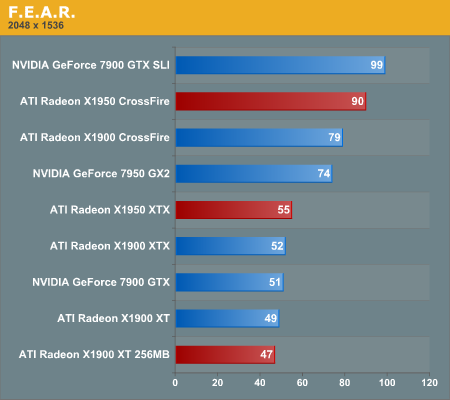

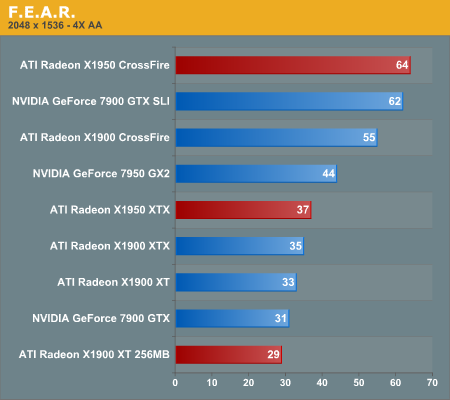

F.E.A.R. Performance

F.E.A.R. has a built in test that we make use of in this performance analysis. This test flies through some action as people shoot each other and things blow up. F.E.A.R. is very heavy on the graphics, and we enable most of the high end settings for our test.

During our testing of F.E.A.R., we noted that the "soft shadows" don't really look soft. They jumped out at us as multiple transparent shadows layered on top of each other and jittered to appear soft. Unfortunately, this costs a lot in performance and not nearly enough shadows are used to make this look realistic. Thus, we disable soft shadows in our test even though it's one of the larger performance drains on the system.

Again we tested with antialiasing on and off and anisotropic filtering at 8x. All options were on their highest quality with the exception of soft shadows, which were disabled. Frame rates for F.E.A.R. can get pretty low for a first person shooter, but the game does a good job of staying playable down to about 25 fps.

F.E.A.R. returns to NVIDIA's 7900 GTX SLI ruling the top of the charts by 10% over the X1950 CrossFire. What's more noteworthy, however, is the close to 14% performance boost the X1950 CrossFire gets over the X1900 CrossFire setup, due to the 4% core clock speed boost on its CrossFire card and faster GDDR4 memory. Once again we see that the 7950 GX2 provides a good middle ground between the price/performance of the fastest single cards and the slowest multi-card multi-GPU setups.
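As a rough check on the 4% figure, here is a minimal sketch of the arithmetic. It assumes the two CrossFire cards run at the 625 MHz and 650 MHz core clocks quoted in the comments for the XT and XTX parts; those clocks are not listed on this page, and the `percent_gain` helper is purely illustrative.

```python
def percent_gain(new: float, old: float) -> float:
    """Relative improvement of `new` over `old`, in percent."""
    return (new / old - 1.0) * 100.0


# Assumed core clocks in MHz (taken from the comment thread, not from this page).
OLD_CORE_MHZ = 625.0
NEW_CORE_MHZ = 650.0

clock_gain = percent_gain(NEW_CORE_MHZ, OLD_CORE_MHZ)
print(f"core clock gain: {clock_gain:.1f}%")
```

Under those assumptions the core clock accounts for only about 4 points of the roughly 14% frame rate gain, which is consistent with the faster GDDR4 memory contributing much of the rest.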

Single card performance, with the exception of the GX2, is pretty close between the top contenders. The X1950 XTX is fastest but its predecessor and the 7900 GTX are not far behind. Even the X1900 XT 256MB puts out a respectable frame rate here, but it's starting to show its limits.

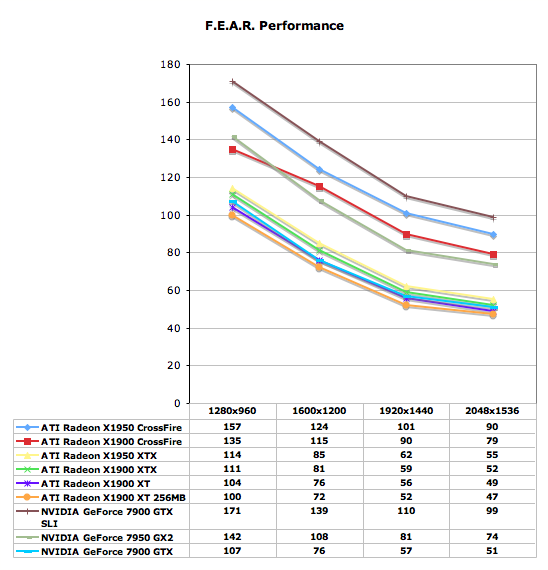

Without AA, nearly every configuration scales exactly the same from low resolution to high. The X1900 CrossFire score at 1280x960 is a little strange, but we confirmed the test a couple different times to make sure nothing was off. It is possible that this scaling issue is due to the lower memory clock, but we really aren't sure why we see the performance we do here. Luckily, there's no need to play at such a low resolution with a $1000 set of graphics cards.

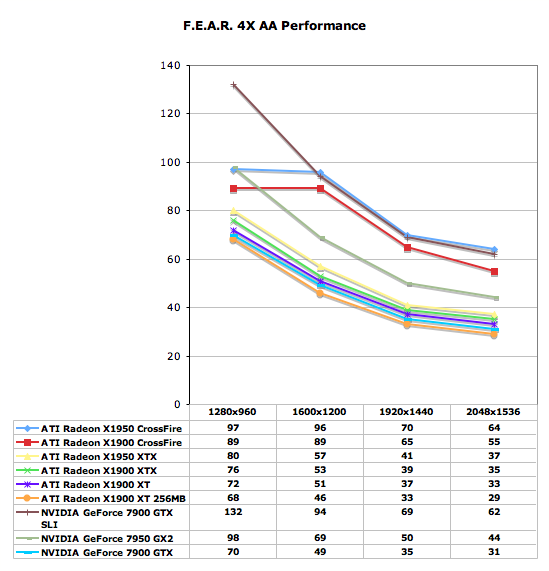

With AA enabled, ATI gains a small advantage over NVIDIA at the very high end with its X1950 CrossFire. The standings mostly remain the same through the rest of the charts, although the 7900 GTX and 512MB X1900 XT swap places in the single card comparisons.

With single cards able to offer playable performance at 2048 x 1536 with 4X AA, we can't wait to see what the next generation of GPUs will bring us in terms of single card performance. Or, alternatively, what game developers are going to be able to do with this much power at their disposal.

The AA graph looks even stranger than the non-AA graph. Both multi card ATI solutions hit a very strange limitation at 1280x960. This isn't a traditional, easy to explain, CPU limitation. A faster memory clock on the X1950 XTX gives it an advantage at 1280x960, and the 7900 GTX SLI has no problem reaching up over 130 fps. Again, we ran this test multiple times and decided to chalk it up to mysterious and unknown driver issues. Other than these anomalies, everything else scales similarly and nothing really trades places at any point in this test.

74 Comments

JarredWalton - Wednesday, August 23, 2006 - link

We used factory overclocked 7900 GT cards that are widely available. These are basically guaranteed overclocks for about $20 more. There are no factory overclocked ATI cards around, but realistically don't expect overclocking to get more than 5% more performance on ATI hardware.

The X1900 XTX is clocked at 650 MHz, which isn't much higher than the 625 MHz of the XT cards. Given that ATI just released a lower power card but kept the clock speed at 650 MHz, it's pretty clear that their GPUs are close to topped out. The RAM might have a bit more headroom, but memory bandwidth already appears to be less of a concern, as the X1950 isn't tremendously faster than the X1900.

yyrkoon - Wednesday, August 23, 2006 - link

I think it's obvious why ATI is selling their cards for less now, and that reason is a lot of 'tech savvy' users are waiting for Direct3D 10 to be released, and want to buy a capable card. This is probably to try and entice some people into buying technology that will be 'obsolete' when Direct3D 10 is released.

Supposedly Vista will ship with DirectX 9L and DirectX 10 (Direct3D 10), but I've also read to the contrary, and that Direct3D 10 won't be released until after Vista ships (sometime). Personally, I couldn't think of a better time to buy hardware, but a lot of people think that waiting, and just paying through the nose for a video card later, is going to save them money. *shrug*

Broken - Wednesday, August 23, 2006 - link

In this review, the test bed was an Intel D975XBX (LGA-775). I thought this was an ATI Crossfire only board and could not run two Nvidia cards in SLI. Are there hacked drivers that allow this, and if so, is there any penalty? Also, I see that this board is dual 8x pci-e and not dual 16x... at high resolutions, could this be a limiting factor, or is that not for another year?

DerekWilson - Wednesday, August 23, 2006 - link

Sorry about the confusion there. We actually used an nForce4 Intel x16 board for the NVIDIA SLI tests. Unfortunately, it is still not possible to run SLI on an Intel motherboard. Our test section has been updated with the appropriate information.

Thanks for pointing this out.

Derek Wilson

ElFenix - Wednesday, August 23, 2006 - link

as we all should know by now, Nvidia's default driver quality setting is lower than ATi's, and makes a significant difference in the framerate when you use the driver settings to match the quality settings. your "The Test" page does not indicate that you changed the driver quality settings to match.

DerekWilson - Wednesday, August 23, 2006 - link

Drivers were run with default quality settings.

Default driver settings between ATI and NVIDIA are generally comparable from an image quality standpoint unless shimmering or banding is noticed due to trilinear/anisotropic optimizations. None of the games we tested displayed any such issues during our testing.

At the same time, during our Quad SLI followup we would like to include a series of tests run at the highest possible quality settings for both ATI and NVIDIA -- which would put ATI ahead of NVIDIA in terms of anisotropic filtering or in Chuck patch cases and NVIDIA ahead of ATI in terms of adaptive/transparency AA (which is actually degraded by their gamma correction).

If you have any suggestions on different settings to compare, we are more than willing to run some tests and see what happens.

Thanks,

Derek Wilson

ElFenix - Wednesday, August 23, 2006 - link

could you run each card with the quality slider turned all the way up, please? i believe that's the default setting for ATi, and the 'High Quality' setting for nvidia. someone correct me if i'm wrong.

thanks!

michael

yyrkoon - Wednesday, August 23, 2006 - link

I think as long as all settings from both offerings are as close as possible per benchmark, there is no real gripe.

Although, some people seem to think it necessary to run AA at high resolutions (1600x1200 +), but I'm not one of them. It's very hard for me to notice jaggies even at 1440x900, especially when concentrating on the game, instead of standing still, and looking with a magnifying glass for jaggies . . .

mostlyprudent - Wednesday, August 23, 2006 - link

When are we going to see a good number of Core 2 Duo motherboards that support Crossfire? The fact that AT is using an Intel made board rather than a "true enthusiast" board says something about the current state of Core 2 Duo motherboards.

DerekWilson - Wednesday, August 23, 2006 - link

Intel's boards are actually very good. The only reason we haven't been using them in our tests (aside from a lack of SLI support) is that we have not been recommending Intel processors for the past couple years. Core 2 Duo makes Intel CPUs worth having, and you definitely won't go wrong with a good Intel motherboard.