The Samsung Galaxy S10+ Snapdragon & Exynos Review: Almost Perfect, Yet So Flawed

by Andrei Frumusanu on March 29, 2019 9:00 AM EST

Inference Performance: APIs, Where Art Thou?

Having covered the new CPU complexes of both the new Exynos and Snapdragon SoCs, up next are the new-generation neural processing engines in each chip.

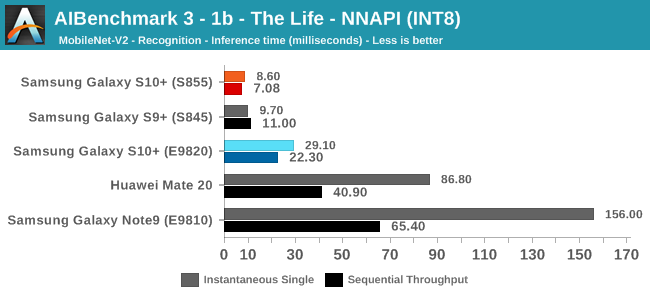

The Snapdragon 855 brings big performance improvements to the table thanks to a doubling of the HVX units inside the Hexagon 690 DSP. In the last two generations of Snapdragon chips, the HVX units were the IP blocks that took the brunt of new integer neural network inferencing work, an area in which the IP is particularly adept.

The new tensor accelerator inside the Hexagon 690 was shown off by Qualcomm at the preview event back in January. Unfortunately, the new block is for now only accessible through Qualcomm’s own SDK tools; acceleration for NNAPI workloads is not expected until later in the year with Android Q.

Looking at a compatibility matrix of which workloads can be accelerated by which hardware blocks under NNAPI reveals a rather sad state of things:

NNAPI SoC Block Usage Estimates

| SoC \ Model Type | INT8 | FP16 | FP32 |
| --- | --- | --- | --- |
| Exynos 9820 | GPU | GPU | GPU |
| Exynos 9810 | GPU? | GPU | CPU |
| Snapdragon 855 | DSP | GPU | GPU |
| Snapdragon 845 | DSP | GPU | GPU |
| Kirin 980 | GPU? | NPU | CPU |

What stands out in particular is Samsung’s new Exynos 9820 chipset. Even though the SoC promises an NPU that on paper is extremely powerful, the software side of things makes it seem as if the block doesn’t exist. Currently Samsung doesn’t publicly offer even a proprietary SDK for the new NPU, much less NNAPI drivers. I’ve been told that Samsung looks to address this later in the year, but how exactly the Galaxy S10 will profit from new functionality in the future is quite unclear.

For Qualcomm, as the HVX units are integer-only, only quantised INT8 inference models can be accelerated by the block, with FP16 and FP32 workloads falling back to what should be GPU acceleration. It’s to be noted that my matrix here could be wrong: we’re dealing with abstraction layers, and depending on the model features required, the drivers could run models on different IP blocks.
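To make "quantised INT8" concrete, here is a minimal sketch of a common affine quantisation scheme in plain Python. It is an illustration only: the function names, the symmetric scale choice and the sample weights are assumptions for this example, not a description of what Qualcomm’s drivers or AI-Benchmark actually do.

```python
def quantize_int8(values, scale, zero_point=0):
    """Map floats to int8 using q = round(x / scale) + zero_point, clamped to [-128, 127]."""
    out = []
    for x in values:
        q = round(x / scale) + zero_point
        out.append(max(-128, min(127, q)))  # saturate instead of wrapping around
    return out


def dequantize_int8(qvalues, scale, zero_point=0):
    """Approximate inverse: x is roughly (q - zero_point) * scale."""
    return [(q - zero_point) * scale for q in qvalues]


# Hypothetical FP32 weights; the scale maps the largest magnitude onto 127.
weights = [-1.0, -0.25, 0.0, 0.3, 1.0]
scale = max(abs(w) for w in weights) / 127
q = quantize_int8(weights, scale)
restored = dequantize_int8(q, scale)
```

Each restored value differs from the original by at most half a quantisation step, which is the precision an integer-only block trades away in exchange for cheaper arithmetic.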

Finally, HiSilicon’s Kirin 980 currently only offers NNAPI acceleration on the NPU for FP16 models, with INT8 and FP32 models falling back to the CPU, as the device is seemingly not using Arm’s NNAPI drivers for the Mali GPU, or at least not taking advantage of INT8 acceleration in the same way as Samsung’s GPU drivers.
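For readers who want to query the matrix rather than eyeball it, the estimates can be written down as a small lookup. This is a sketch that simply transcribes the table above, keeping the "?" on the entries marked as uncertain; the names `NNAPI_BLOCKS`, `DEDICATED` and `socs_with_dedicated_block` are made up for this example.

```python
# Estimated block per (SoC, model type), transcribed from the table above.
# A trailing "?" marks entries the table itself flags as uncertain.
NNAPI_BLOCKS = {
    "Exynos 9820":    {"INT8": "GPU",  "FP16": "GPU", "FP32": "GPU"},
    "Exynos 9810":    {"INT8": "GPU?", "FP16": "GPU", "FP32": "CPU"},
    "Snapdragon 855": {"INT8": "DSP",  "FP16": "GPU", "FP32": "GPU"},
    "Snapdragon 845": {"INT8": "DSP",  "FP16": "GPU", "FP32": "GPU"},
    "Kirin 980":      {"INT8": "GPU?", "FP16": "NPU", "FP32": "CPU"},
}

# Blocks treated here as dedicated accelerators, as opposed to the CPU or GPU.
DEDICATED = {"DSP", "NPU"}


def socs_with_dedicated_block(model_type):
    """List the SoCs whose estimated block for this model type is a DSP or NPU."""
    return [
        soc
        for soc, row in NNAPI_BLOCKS.items()
        if row[model_type].rstrip("?") in DEDICATED
    ]
```

Calling `socs_with_dedicated_block("INT8")` returns only the two Snapdragon parts, and no SoC in the table has a dedicated block listed for FP32.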

Before we even get to the benchmark figures, it’s clear that the results will be a mess with various SoCs performing quite differently depending on the workload.

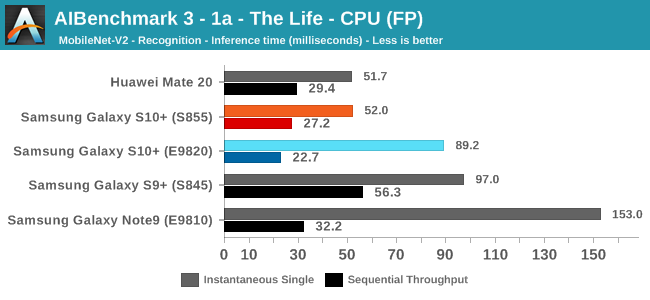

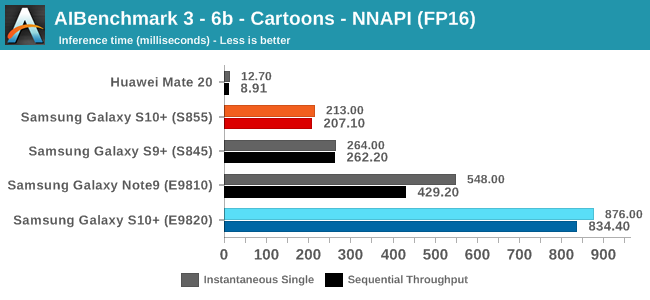

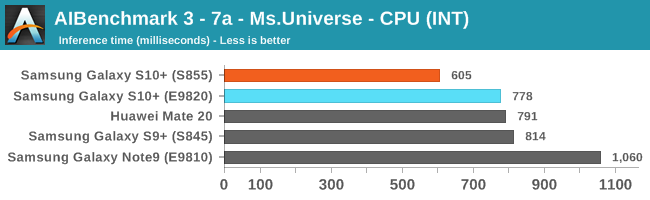

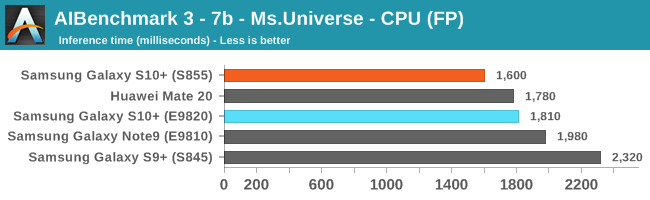

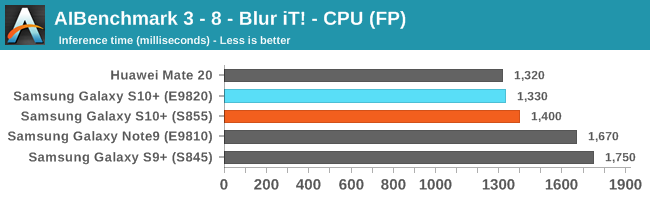

For the benchmark, we’re using a brand-new version of Andrey Ignatov’s AI-Benchmark, namely the just-released version 3.0. The new version tunes the models and introduces a new Pro-Mode that, most interestingly, is now able to measure sustained throughput inference performance. This latter point is important as we can see very different performance figures between one-shot inferences and back-to-back inferences. In the former case, software and DVFS behaviour can vastly overshadow the actual performance capability of the hardware, as in many cases we’re dealing with timings in the tens or hundreds of milliseconds.
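The difference between the two measurement styles can be sketched with a tiny timing harness. This is not how AI-Benchmark is implemented; `measure_inference`, `run_once` and the injectable `clock` parameter are hypothetical names for this illustration, and the dummy workload merely stands in for a real model invocation.

```python
import time


def measure_inference(run_once, runs=10, clock=time.perf_counter):
    """Return (first_ms, sustained_ms) for a callable that performs one inference."""
    # One-shot: time the very first call, before caches are warm and before
    # the frequency governor has had a chance to ramp up.
    start = clock()
    run_once()
    first_ms = (clock() - start) * 1000.0

    # Sustained: time many back-to-back calls as a single batch and average,
    # so per-call software overhead and ramp-up are amortised.
    start = clock()
    for _ in range(runs):
        run_once()
    sustained_ms = (clock() - start) * 1000.0 / runs
    return first_ms, sustained_ms


# Stand-in workload; a real test would invoke the model interpreter here.
def dummy_inference():
    return sum(i * i for i in range(10_000))


first_ms, sustained_ms = measure_inference(dummy_inference, runs=20)
```

On a phone, a large gap between the two numbers points at scheduler and DVFS behaviour rather than at the raw capability of the hardware block.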

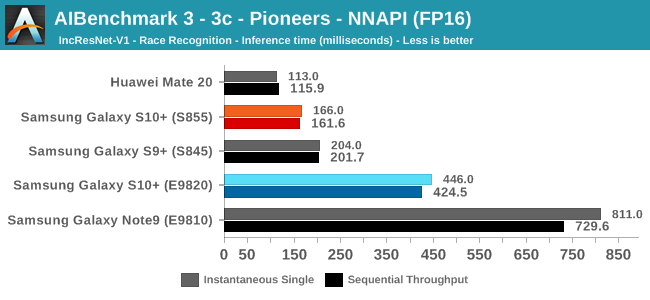

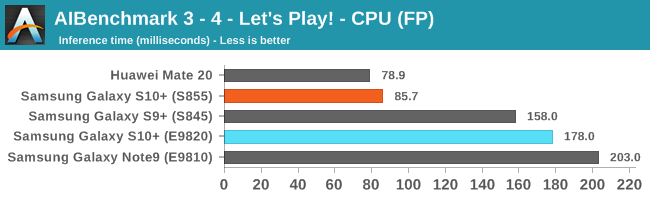

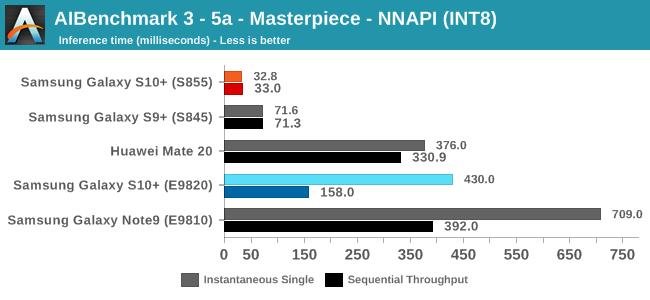

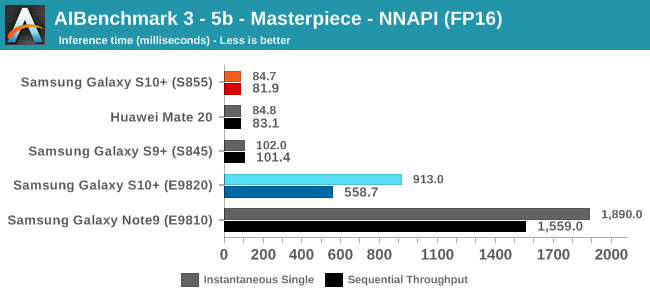

Going forward we’ll be taking advantage of the new benchmark’s flexibility and posting both instantaneous single inference times as well as sequential throughput inference times, better showcasing and separating the impact of software and hardware capabilities.

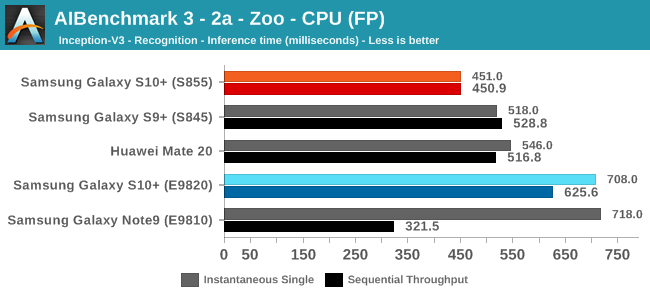

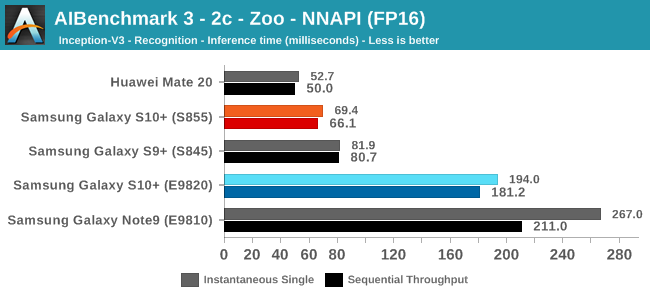

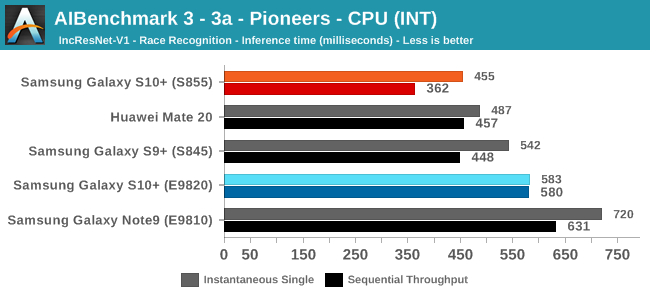

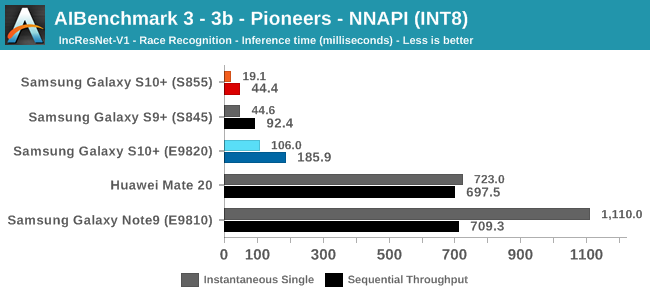

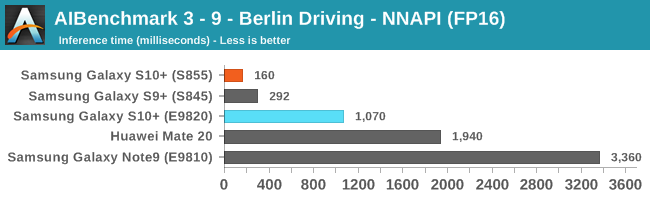

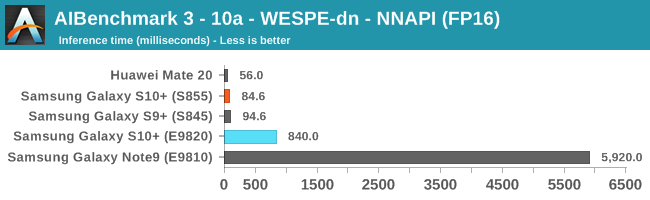

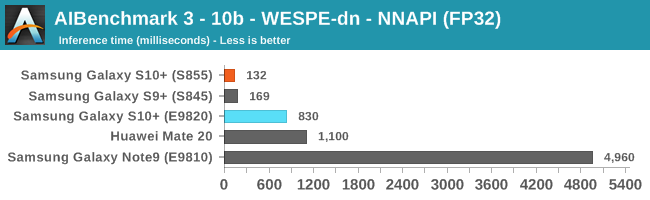

There’s a lot of data here, so for the sake of brevity I’ll simply put all the results up and we’ll go over the general analysis at the end.

As initially predicted, the results are spread extremely widely across the SoCs.

The new tests also include workloads that are solely using TensorFlow libraries on the CPU, so the results not only showcase NNAPI accelerator offloading but can also serve as a CPU benchmark.

In the CPU-only tests, we see the Snapdragon 855 and Exynos 9820 in the lead; however, there’s a notable difference between the two when it comes to their instantaneous vs sequential performance. The Snapdragon 855 is able to post significantly better single inference figures than the Exynos, although the latter catches up in longer duration workloads. Inherently this is a difference in software behaviour between the two chips: although Samsung has improved scheduler responsiveness in the new chip, it still lags behind the Qualcomm variant.

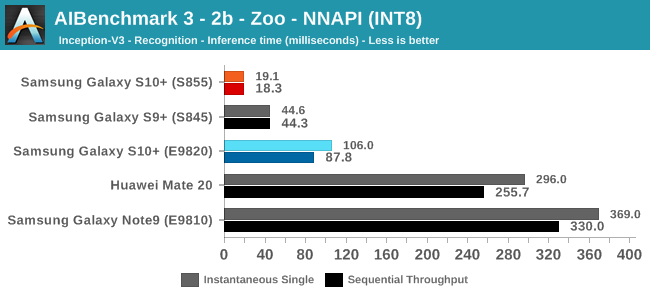

In INT8 workloads there is no contest: Qualcomm is far ahead of the competition in NNAPI benchmarks simply because it is the only vendor able to offload this work to an actual accelerator. Samsung’s Exynos 9820 performance here has also drastically improved thanks to the Mali G76’s new INT8 dot-product instructions. It’s odd that the same GPU in the Kirin 980 doesn’t show the same improvements, which could be due to outdated Arm GPU NNAPI drivers on the Mate 20.
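As a rough idea of what an INT8 dot-product instruction computes, the sketch below multiplies int8 values pairwise and sums them into a wider accumulator, four lanes per step. The four-wide grouping and the function names are assumptions chosen for illustration; the exact shape of the Mali G76 instructions isn’t covered here.

```python
def dot4_accumulate(acc, a, b):
    """Return acc + a.b for two 4-element int8 vectors, using a wide accumulator."""
    assert len(a) == len(b) == 4
    for x in list(a) + list(b):
        assert -128 <= x <= 127, "inputs must fit in int8"
    # Products of two int8 values need up to 16 bits, so sums are kept wide.
    return acc + sum(x * y for x, y in zip(a, b))


def int8_dot(a, b):
    """Dot product of equal-length int8 vectors, processed four lanes at a time."""
    assert len(a) == len(b) and len(a) % 4 == 0
    acc = 0
    for i in range(0, len(a), 4):
        acc = dot4_accumulate(acc, a[i:i + 4], b[i:i + 4])
    return acc
```

Folding four multiplies and the accumulation into one step is what makes quantised convolution and matrix-multiply layers much cheaper on a GPU that supports such an operation.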

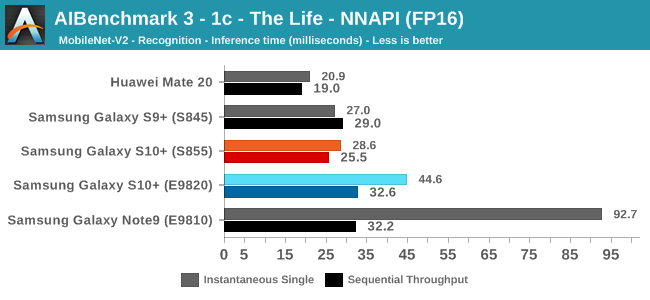

The FP16 performance crown often goes to the Kirin 980 NPU, but in some workloads it seems to fall back to the GPU, and in those cases Qualcomm’s GPU clearly has the lead.

Finally for FP32 workloads it’s again the Qualcomm GPU which takes an undisputed lead in performance.

Overall, machine inferencing performance today is an absolute mess. In all the chaos though Qualcomm seems to be the only SoC supplier that is able to deliver consistently good performance, and its software stack is clearly the best. Things will evolve over the coming months, and it will be interesting to see what Samsung will be able to achieve in regards to their custom SDK and NNAPI for the Exynos NPU, but much like Huawei’s Kirin NPU it’s all just marketing until we actually see the software deliver on the hardware capabilities, something which may take longer than the actual first year active lifespan of the new hardware.

229 Comments

Tropicocity - Sunday, March 31, 2019 - link

Very true. I went from the S4 to the S7 edge, now my S10+ is on the way. Meaningful upgrades!

catavalon21 - Thursday, August 22, 2019 - link

You've certainly never been an average Apple fan, then.../* sarcasm off

Monty1401 - Friday, March 29, 2019 - link

I would argue the battery life alone is worth the upgrade (exynos s9+ here)

jordanclock - Friday, March 29, 2019 - link

All of the deep dive information is great, but the graphs having different scales makes them very difficult to compare. Can you normalize the Y-axis on the graphs so that switching between them can be immediately compared?

jaju123 - Friday, March 29, 2019 - link

Andrei, got any opinions for us peasants of inferior knowledge and skill living in the EU whether to go for the S10+ or P30 pro based on your experience thus far? Seems like the P30 pro may be having performance issues to me, or so I've seen on some youtube videos, particularly with throttling. Many thanks.

Andrei Frumusanu - Friday, March 29, 2019 - link

Don't worry I'm also an EU peasant.

I only got the P30's a few days ago at release and haven't really used it much as I was busy finishing this up. Performance at first glance looks the same as other Kirin 980 devices which I found to be good although missing some OS booster in Snapdragon chips or the new S10.

That review should follow up in the next week or two.

jaju123 - Friday, March 29, 2019 - link

Great thanks, looking forward to the review.

I currently have a mate 20 pro, but have itchy trigger fingers and usually buy 2nd hand to save some cash and upgrade quite a bit a few months after these things release. Finding that the main issue with the camera on this device is the white balance being incorrect quite often, but the addition of OIS on the main lens of the P30 pro looks like it could make quite a difference. I am also wondering if the 40mp sensor is in reality all that different from the mate 30 pro/p20 pro?

Ironically, I think that if Oneplus released a true flagship Oneplus 7+ with waterproofing, 4200mah battery and epic camera quality at the 1000$ pricepoint I think that would blow away the rest of the competition.

Andrei Frumusanu - Friday, March 29, 2019 - link

> I am also wondering if the 40mp sensor is in reality all that different from the mate 30 pro/p20 pro?

It should be fundamentally different due to the RYYB structure.

eastcoast_pete - Friday, March 29, 2019 - link

Andrei, thanks for this review. Question: How is the call quality, especially if used as speakerphone? The loudspeaker analysis looks promising, but calls also depend on microphones, noise suppression etc. Thanks!

Andrei Frumusanu - Friday, March 29, 2019 - link

Call quality on this side is great, the speakers are fantastic, although I admit I didn't really test microphone quality on the other side.