AMD Zen Microarchiture Part 2: Extracting Instruction-Level Parallelism

by Ian Cutress on August 23, 2016 8:45 PM EST- Posted in

- CPUs

- AMD

- x86

- Zen

- Microarchitecture

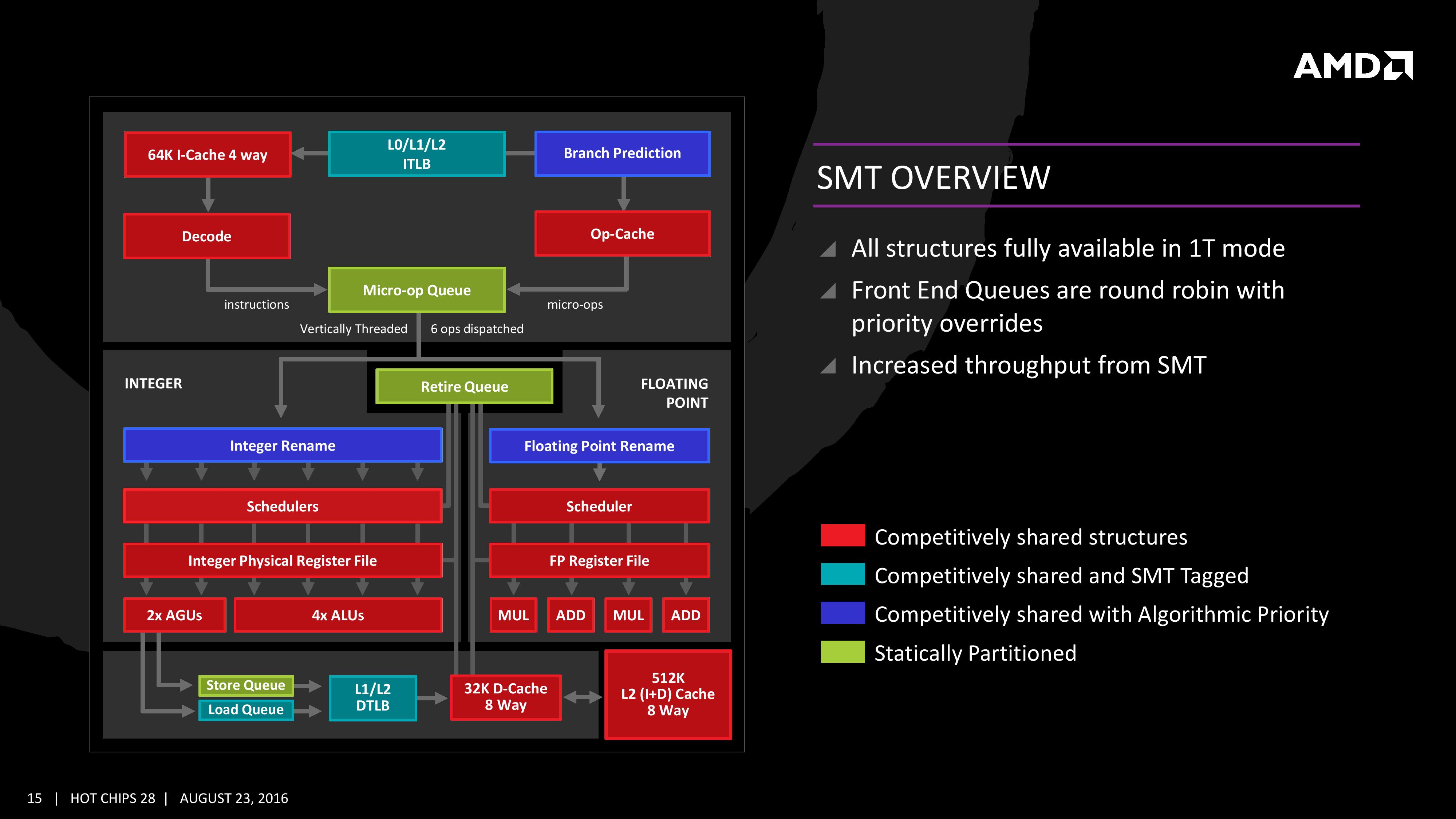

Simultaneous MultiThreading (SMT)

Zen will be AMD’s first foray into a true simultaneous multithreading structure, and certain parts of the core will act differently depending on their implementation. There are many ways to manage threads, particularly to avoid stalls where one thread is blocking another that ends in the system hanging or crashing. The drivers that communicate with the OS also have to make sure they can distinguish between threads running on new cores or when a core is already occupied – to achieve maximum throughput then four threads should be across two cores, but for efficiency where speed isn’t a factor, perhaps power gating/clock gating half the cores in a CCX is a good idea.

There are a number of ways that AMD will deal with thread management. The basic way is time slicing, and giving each thread an equal share of the pie. This is not always the best policy, especially when you have one performance dominant thread, or one thread that creates a lot of stalls, or a thread where latency is vital. In some methodologies the importance of a thread can be tagged or determined, and this is what we get here, though for some of the structures in the core it has to revert to a basic model.

With each thread, AMD performs internal analysis on the data stream for each to see which thread has algorithmic priority. This means that certain threads will require more resources, or that a branch miss needs to be prioritized to avoid long stall delays. The elements in blue (Branch Prediction, INT/FP Rename) operate on this methodology.

A thread can also be tagged with higher priority. This is important for latency sensitive operations, such as a touch-screen input or immediate user input elements required. The Translation Lookaside Buffers work in this way, to prioritize looking for recent virtual memory address translations. The Load Queue is similarly enabled this way, as typically low latency workloads require data as soon as possible, so the load queue is perfect for this.

Certain parts of the core are statically partitioned, giving each thread an equal timing. This is implemented mostly for anything that is typically processed in-order, such as anything coming out of the micro-op queue, the retire queue and the store queue.

The rest of the core is competitive, meaning that if a thread demands more resources it will try to get there first if there is space to do so each cycle.

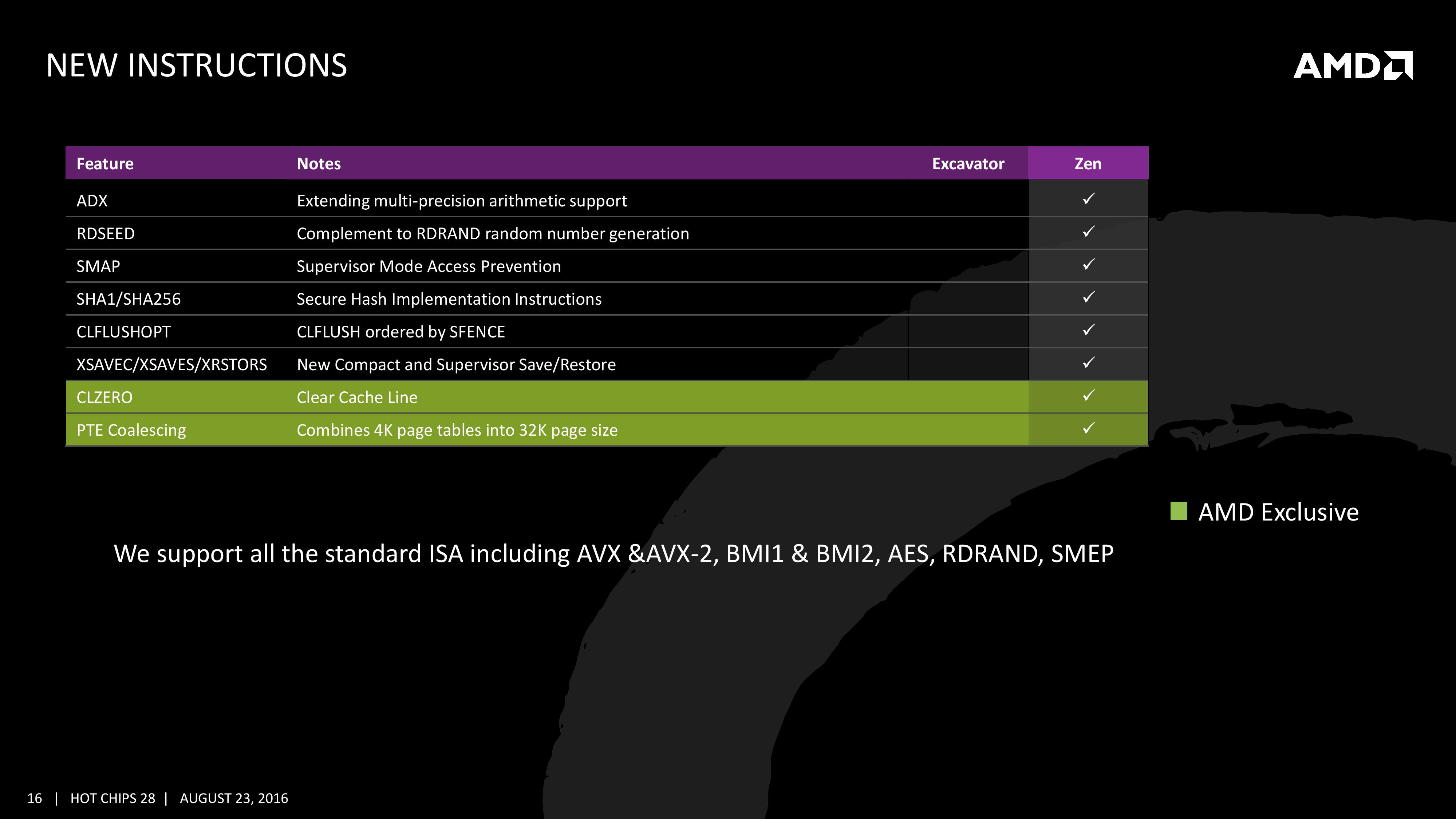

New Instructions

AMD has a couple of tricks up its sleeve for Zen. Along with including the standard ISA, there are a few new custom instructions that are AMD only.

Some of the new commands are linked with ones that Intel already uses, such as RDSEED for random number generation, or SHA1/SHA256 for cryptography. The two new instructions are CLZERO and PTE Coalescing.

The first, CLZERO, is aimed to clear a cache line and is more aimed at the data center and HPC crowds. This allows a thread to clear a poisoned cache line atomically (in one cycle) in preparation for zero data structures. It also allows a level of repeatability when the cache line is filled with expected data. CLZERO support will be determined by a CPUID bit.

PTE (Page Table Entry) Coalescing is the ability to combine small 4K page tables into 32K page tables, and is a software transparent implementation. This is useful for reducing the number of entries in the TLBs and the queues, but requires certain criteria of the data to be used within the branch predictor to be met.

106 Comments

View All Comments

extide - Monday, August 29, 2016 - link

No,k dude, it's not the same 4 ALU's, it's 4 ALU's per core. 2 threads a core, so 2 ALU's/thread, up to 16 threads, or 4 ALU's /thread up to 8 threads, but I would think it would be hard for a single thread to use 4 ALU's, so having 2 threads per 4 ALU seems fine, plus all the INT execution resources.Outlander_04 - Thursday, August 25, 2016 - link

40% improvement is not over Bulldozer but over Excavator which is already 20% or more ahead of Bulldozerlooncraz - Wednesday, August 24, 2016 - link

Highly scalar code or vector code will exceed 40% easily. The core execution resources relative to that execution is 75% to 100% greater. That will only translate to 50~60% performance improvement for said code, but a larger impact than the overall 40% improvement.The cache system, schedulers, issue width, AGUs, L/S, and other factors come more into play in the more common code paths, which reduces the maximum potential benefit derived from the additional execution resources.

However, multi-threaded performance should be HIGHER, not lower. Excavator had relatively poor MT scaling, Zen will be worlds better. Add SMT to the mix - and AMD's solution looks nearly exactly as I anticipated - and you have another 20% or so better SMT scaling.

It is easily conceivable, given what we now know, that AMD has met Haswell's average IPC outside of wider AVX workloads, and exceeded it in certain areas with heavy mixed compute (floating and integer concurrently). It is also now conceivable that AMD's first SMT implementation will be better than Intel's Sandy Bridge era Hyper-Threading. I didn't expect that at all, but the core flexibility is far ahead of Intel's flexibility - and that is largely what determines SMT performance in Zen's design.

Finally, <3Ghz @ 200W is way worse than the currently known figures for their 8C parts. They have 3.2Ghz boost clocks and just 95W TDP. It is expected that the clocks will increase, particularly for the quad core, 65W, parts.

You may not realize this, but these numbers put AMD slightly ahead of Intel in perf/W on 14nm.

niva - Wednesday, August 24, 2016 - link

So are you telling me my Phenom 2 black edition rig might be getting a worthy upgrade?I'm with you, but I don't trust these benchmarks, wait until the retail CPU samples are out then we can decide.

looncraz - Wednesday, August 24, 2016 - link

I'm saying you'll be able to match that level of performance with a Dual core Zen CPU w/ SMT... if AMD were actually to make one (doubtful).I do expect AMD to release triple core CPUs again, though, but possibly not right away.

Myrandex - Thursday, August 25, 2016 - link

Yay finally I've been holding onto my Phenom II as well and this might be it! :)Bulat Ziganshin - Thursday, August 25, 2016 - link

For vector code - they added 4'th ALU, it's almost nothing (Skylake added 4th scalar ALU and got laughable +3% IPC).For scalar code - they advertize +40% IPC. I'm pretty sure that they advertize the best part of perfromance, not the average one. It's ADVERTIZEMENT, after all.

Now, it's easy to analyze Zen as Carrizo+. M/t performance shouldn't change much since it's still 4-wide core (which was called module in Carrizo). S/t performance should improve much more since it changed from 2 alu to 4 alu. Overall, the core looks like Skylake, but it's not enough to put a lot of resources - they need to be carefully placed. Intel gone a long way optimizing their CPUs, and AMD have to repeat that. If you think that AMD can make Skylake-speed CPU in 2 years, then ask yourself - why Intel hasn't done the same in 2008 or so? Why IBM, having WIDER cpu, still slower than Intel in s/t tests?

All we know that AMD was able to SELECT single CPU that was able to run at 3 GHz using cooler looking like one they ship with 95W cpus. Just ask yourself - why they not tried to run their cpu at the same 3.2 GHz which is stock freq. for Intel CPU? And yes, it's way more effificent than Intel CPUs can, making me highly suspicious.

In one of pictures here AMD claims that Zen has the same power usage as Carrizo, that is 28nm CPU. AFAIR Carrizo with 2 modules at ~3GHz use 35-65 Wt. Multiple it by 4, please.

> It is also now conceivable that AMD's first SMT implementation will be better than Intel's Sandy Bridge era Hyper-Threading.

Why?? Intel's first SMT implementation in Pentium4 made a few percents improvement (over s/t), second one in Nehalem give me +20% on deflate, Sandy was +40%, and Haswell is +50%. Why you think that FIRST AMD attempt on SMT will be better than Pentium4?

Overall, i think that m/t perfromance of Zen is more predictable - it's Carrizo with some improvements, but still 4-wide, so i expect usual 10-20% generation-to-generation improvement.

For s/t, it less predictable, but i'm sure that it's impossible to beat Intel in single step, and AMD already advertized +40%, which i'm sure is about s/t perfromance.

looncraz - Thursday, August 25, 2016 - link

"For vector code - they added 4'th ALU, it's almost nothing (Skylake added 4th scalar ALU and got laughable +3% IPC)."Well, that was the average program performance increase, but the vector code itself sped up more than that.

Also, Zen's ability to leverage its resources should be better than Intel's, but its scheduler setup is really unique, so we need more details on how it will handle holes in a scheduler when its neighbor is full. Having six 14-deep schedulers is a significant part of the design that is almost completely overlooked, IMHO.

"Now, it's easy to analyze Zen as Carrizo+. M/t performance shouldn't change much since it's still 4-wide core"

Only if you are comparing a full module to a single Zen core... There were many bottlenecks in the modules that prevented full performance for multi-threading - Zen does not have that. On top, Zen has SMT, so it will have even better MT performance per core.

"Why IBM, having WIDER cpu, still slower than Intel in s/t tests?"

The width is, as you say, only a part of the equation. It's all about being able to exploit that extra width. Intel does so decently well, but has restrictions as a result of their unified scheduler. A heavy FPU load reduces integer performance, for example, due to shared ports of the scheduler. The impact of this is not easily quantifiable - it would require some very specialized testing. Zen will not have this issue thanks to dedicated schedulers.

Intel uses their unified scheduler to be able to provide results more quickly to dependent instructions. Zen, from appearances, allows each scheduler to make fetch and load requests directly, thereby nullifying what used to be an Intel advantage - and maybe even turning it into a hindrance.

"Just ask yourself - why they not tried to run their cpu at the same 3.2 GHz which is stock freq. for Intel CPU?"

Because you don't push engineering sample CPUs, and 3Ghz is the defacto industry standard speed for IPC comparison testing. Just look around, you'll find 3Ghz is the most commonly chosen frequency when doing IPC comparisons on modern CPUs. Pushing both to 3.2Ghz would not have changed anything, but a Zen engineering sample chip is worth thousands more than that Intel CPU at this time, and is not easily replaceable. If you have to run 500 more tests with it, and hand it over to other departments or teams, you probably aren't being allowed to overclock it any.

deltaFx2 - Friday, August 26, 2016 - link

The answer to the IBM question is easy. 1) IBM designed the Power8 with SMT-2 as the sweet spot. Like bulldozer, or Alpha EV6, they have execution clusters. In 2T, each cluster runs a thread, in 1T, the thread is split across these clusters, with a penalty for moving between them. Hence their 1T->2T uplift is a lot higher than intel's 1T->2T (worse baseline). (2) You're comparing different ISAs. x86 is a lot more CISC'y than POWER. x86 supports load+compute, compute+store, load+compute+store, and this is dispatched as a single uop. The same "work" in a more RISC'y machine needs 2 or 3 uops. For the same reason, an ARM core that hopes to achieve the same performance as x86 will need to dispatch more ops, or fuse more ops before dispatch.Spunjji - Saturday, August 27, 2016 - link

The CPU they tasted with is an early engineering sample. Simple answer. You write a lot to make yourself sound smart but you're exercising either clear bias or ignorance here.