The Intel Xeon E5 v4 Review: Testing Broadwell-EP With Demanding Server Workloads

by Johan De Gelas on March 31, 2016 12:30 PM EST

Posted in: CPUs, Intel, Xeon, Enterprise, Enterprise CPUs, Broadwell

Sharing Cache and Memory Resources

In a virtualized environment, the hosted VMs share both the CPU caches and the overall DRAM bandwidth. One cache-hungry application can quickly hog most of the shared L3 cache, and a bandwidth-intensive one can do the same with the shared memory bandwidth. Such VMs create the "noisy neighbor" problem. That is bad news for anyone consolidating a lot of VMs on top of a Xeon server, but it is a complete show stopper for telco and other scenarios where service providers want to guarantee "Quality-of-Service" (QoS) and thus predictable latency. For Intel this is an important scenario to address, as the telco market is one of the few markets where the Xeons still have some room to grow. Many telco applications still run on proprietary boxes, which makes virtualization a tantalizing option if Intel can deliver the necessary latency.

Haswell already had some features to monitor cache usage, which in turn allowed you to identify the noisy neighbors. However, the "Resource Director Technology" (RDT) of Broadwell can do a lot more.

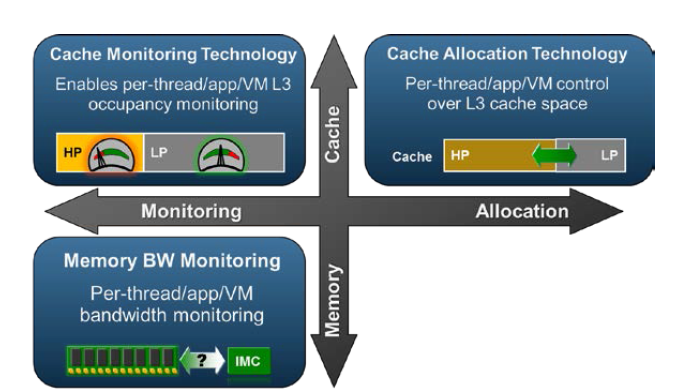

RDT can not only monitor L3 cache usage and memory bandwidth, but it can also allocate L3 cache space on a per-thread, per-process, or per-virtual-machine basis. Threads are assigned a Resource Monitoring ID (RMID). Eight of these RMIDs are available per core/cache slice. Sixteen different classes of service can be assigned to an RMID: higher priority threads/applications can get a higher class, and thus a larger portion of the L3 cache.
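To make the allocation idea more concrete, here is a minimal Python sketch of the bookkeeping involved. It touches no hardware. The function names `contiguous_mask` and `pqr_assoc_value` are hypothetical, the 20-way L3 is an assumed figure (the real value is reported by the CPU), and the bit layout (RMID in the low bits, class of service in the upper 32 bits of one per-thread register) reflects our understanding of Intel's documentation rather than anything stated in this article.

```python
# Illustrative model only: it computes values, it does not program any MSR.
NUM_CLASSES = 16   # classes of service, as stated in the article
CACHE_WAYS = 20    # ASSUMPTION: number of L3 ways that a mask can cover
RMID_BITS = 10     # ASSUMPTION: width of the RMID field


def contiguous_mask(first_way: int, num_ways: int, total_ways: int = CACHE_WAYS) -> int:
    """Return a bitmask with num_ways consecutive bits set, starting at first_way.

    Each set bit stands for one slice of L3 capacity that a class may fill.
    The mask is kept contiguous, which we assume the hardware requires.
    """
    if num_ways < 1 or first_way < 0 or first_way + num_ways > total_ways:
        raise ValueError("mask must cover at least one way and fit in the cache")
    return ((1 << num_ways) - 1) << first_way


def pqr_assoc_value(rmid: int, cos: int) -> int:
    """Pack an RMID and a class of service into one 64-bit value (assumed layout)."""
    if not 0 <= rmid < (1 << RMID_BITS):
        raise ValueError("RMID out of range")
    if not 0 <= cos < NUM_CLASSES:
        raise ValueError("class of service out of range")
    return (cos << 32) | rmid


# A latency-sensitive class gets 12 of the 20 ways, a batch class the other 8.
high_priority = contiguous_mask(first_way=8, num_ways=12)
low_priority = contiguous_mask(first_way=0, num_ways=8)
```

Because the two masks do not overlap, a noisy batch job in the low-priority class cannot evict lines from the ways reserved for the high-priority class. Overlapping masks are also possible if some sharing is acceptable.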

Intel has already demonstrated an application that made use of these new MSRs to read out memory bandwidth and L3 cache consumption on different levels.
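A monitoring tool of this kind essentially samples a per-RMID counter twice and converts the difference into a rate. The sketch below shows only that arithmetic. The function name `bandwidth_bytes_per_s` is hypothetical, and both the counter width and the bytes-per-count scaling factor are parameters here because their real values are hardware-specific and not given in this article.

```python
def bandwidth_bytes_per_s(prev_count: int, curr_count: int, interval_s: float,
                          bytes_per_count: int, counter_bits: int) -> float:
    """Estimate memory bandwidth from two raw counter samples.

    bytes_per_count and counter_bits are ASSUMED inputs that a real tool
    would have to query from the processor.
    """
    if interval_s <= 0:
        raise ValueError("interval must be positive")
    modulus = 1 << counter_bits
    # The modulo handles a single counter wraparound between the two samples.
    delta = (curr_count - prev_count) % modulus
    return delta * bytes_per_count / interval_s
```

Sampling often enough that the counter wraps at most once per interval is the caller's responsibility; with more than one wrap the estimate silently comes out too low.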

112 Comments


patrickjp93 - Friday, April 1, 2016

Knight's Landing: 730 mm^2, also on the 14nm platform

extide - Friday, April 1, 2016

Is it really that big..? Wow, I knew it was big, but didn't know it was that big. Got a source on that?

Kevin G - Friday, April 8, 2016

I'll second a link for a source. I knew it'd be big but that big?

extide - Friday, April 1, 2016

I know you meant Reticle, but that was a pretty funny typo, heh.

Kevin G - Friday, April 8, 2016

Autocorrect has gotten the best of me yet again.

extide - Friday, April 1, 2016

And, I know how big GM200 and Fiji are, but I am talking about big GPU's on 14/16nm. All signs are currently pointing to <300mm^2 for the first round of 14/16nm GPU's.

lorribot - Thursday, March 31, 2016

Given the way Microsoft and others are now licensing by the core and in large non-splittable packages (Windows 2016 Datacenter is in blocks of 16 cores, a dual socket server with 44 cores would need 48 core licences), the increasing core count has limited appeal over small numbers of faster cores when looking at virtualised environments. Those still in the physical world will still have to pay per core but may have to buy 4 std Windows licences.

When it comes to doing your testing, it should reflect these costs and compare total bang per buck when dealing with performance.

Red Hat still licences per socket but don't be surprised if they go per core too.

JohanAnandtech - Friday, April 1, 2016

Back in 2008, I had a sales person explaining the license models of Microsoft to me in our lab. From that point on, we have invested most of our time and resources in Linux server software. :-D

extide - Friday, April 1, 2016

Enterprise Linux isn't free, either ya know

rahvin - Friday, April 1, 2016

Support isn't free on the FOSS side but the software is. Red Hat is never going to charge more per "cores" for support, that's ridiculous and would result in rivals stealing their support contracts. If licensing costs are that bad that you are dumping hardware you really should be looking at moving services to Linux and virtualizing the Windows servers so you can limit the core count and provide more horsepower. Anyone putting Microsoft on bare hardware these days is nuts. Although the consolation is that they get to pay MS's exorbitant tax on software. Linux should be the core component of any IT services and virtualized servers where you need proprietary server software.