The Intel Xeon E5 v4 Review: Testing Broadwell-EP With Demanding Server Workloads

by Johan De Gelas on March 31, 2016 12:30 PM EST- Posted in

- CPUs

- Intel

- Xeon

- Enterprise

- Enterprise CPUs

- Broadwell

TSX

TSX or Transactional Synchronization Extensions is Intel's cache-based transactional memory system. Intel launched TSX with Haswell, but a bug threw a spanner in the works. Broadwell in turn got it right. The chicken is finally there, now it's time to enjoy the eggs.

Faster Virtualization

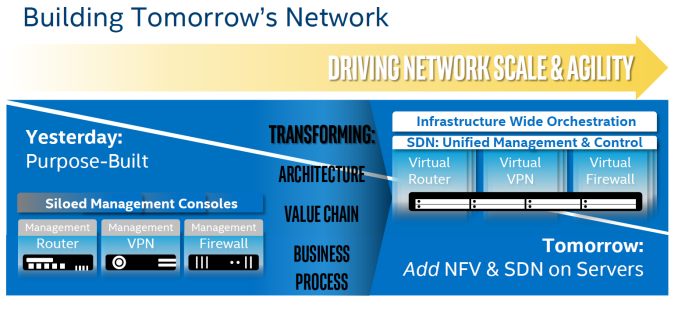

Virtualization overhead is (for most people) a thing of the past. The performance overhead with bare metal hypervisors (ESXi, Hyper-V, Xen, KVM..) is less than a few percent. There is one exception however: applications where I/O dominates. And of course, the packet switching telco applications are the prime examples. Intel, VMware and the server vendors really want to convert the telcos from their Firewall/Router/VPN "black boxes" to virtual ones using Software Defined Networking (SDN) infrastructure. To that end, Intel has continued to work on reducing the virtualization performance overhead. Virtualization overhead can be described as the number of VM exits (VM stops and hypervisor takes over) times the VM exit latency. In IO intensive application, VM exits happen frequently, which in turn leads to hard to predict and high IO latency, exactly what the telco people hate.

Intel wants to conquer the telco's datacenter by turning it into a SDN

So Intel worked on both factors. So Broadwell-DP VM exit latency is once again reduced from 500 cycles to 400.

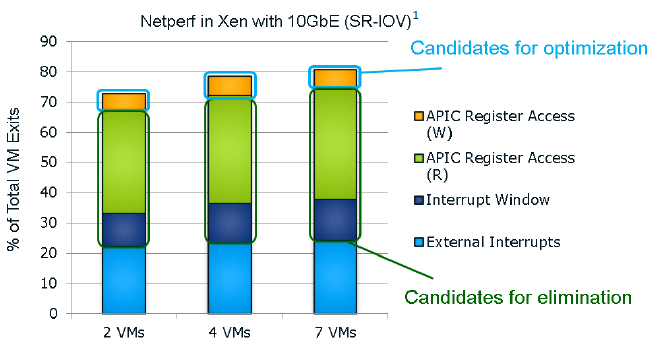

It seems that the "ticks" also get a VM exit reduction. This slide of the Ivy Bride EP presentation gives you a very good overview of the VM exits in a network intensive application; in this case a networkd bandwidth benchmark application.

I quote from our Ivy Bridge-EP review:

The Ivy Bridge core is now capable of eliminating the VMexits due to "internal" interrupts, interrupts that originate from within the guest OS (for example inter-vCPU interrupts and timers). The virtual processor will then need to access the APIC registers, which will require a VMexit. Apparently, the current Virtual Machine Monitors do not handle this very well, as they need somewhere between 2000 to 7000 cycles per exit, which is high compared to other exits.

The solution is the Advanced Programmable Interrupt Controller virtualization (APICv). The new Xeon has microcode that can be read by the Guest OS without any VMexit, though writing still causes an exit. Some tests inside the Intel labs show up to 10% better performance.

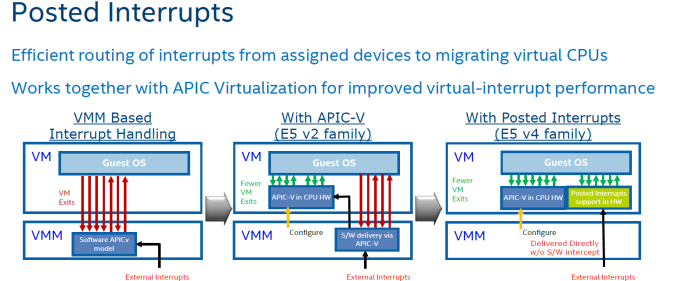

In summary, Intel eliminated the green and dark blue components of the VM exit overhead with APICv. Broadwell now takes on the VM exits due to the external interrupts.

The technology on Broadwell-EP to do this is called posted interrupt. Essentially, posted interrupts enables direct interrupt delivery to the virtual machine without incurring a VM exit, courtesy of an interrupt remapping table. It is very similar to VT-D, which allowed DMA remapping thanks to the physical to virtual memory mapping table. Telco applications - among others - are very latency sensitive. Intel's Edwin Verplancke gave us one such example: before posted interrupts, a telco application had a latency varying from 4 to 47 (!) µsec, depending on the load. Posted interrupts made this a lot less variable, and latency varied from 2.4 to 5.2 µsecs.

As far as we are aware, KVM and Xen seem to have already implemented support for posted interrupts.

112 Comments

View All Comments

petar_b - Saturday, August 27, 2016 - link

Thanks Phil_Oracle, Brutalizer and Anand for this discussion. I have learned a lot from reading different opinions. I am working with IBM and Oracle software products, and from my small experience, Xeons are pathetic when compared to POWER or SPARC. To do same operation at home Xeon it takes 10x more time than what it takes the corporate server to do. I have double memory than corporate server and yet no help from it.someonesomewherelse - Thursday, September 1, 2016 - link

Btw how locked down are these Xeons and their motherboards in regards to overclocking? Assuming you could provide enough power and cooling could you reach a decent overclock? Obviously nobody is going to do that for mission crittical servers/workstations, but if I had too much money could I get a quad or octa core system with as much cores possible and at least try to overclock them?