The LG G3 Review

by Joshua Ho & Anand Lal Shimpi on July 4, 2014 5:00 AM EST- Posted in

- Smartphones

- LG

- Mobile

- Laptops

- G3

Display

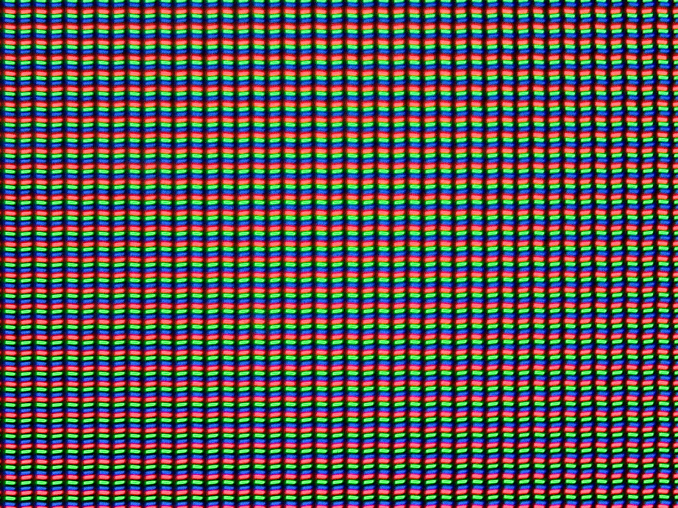

The LG G3’s display has been the subject of immense controversy. While the LG G3 is the first international phone to ship with a QHD (2560 x 1440) display, those following the industry saw this coming as Android OEMs made the jump from 720p to 1080p displays at the 4.7-5” display size. While it’s now obvious that going from 720p to 1080p brought a significant increase in perceived resolution, the open question is whether going from 1080p to 1440p will do the same.

Answering this question requires an understanding of both human vision and the tradeoffs that come with increased pixel densities. The short explanation is that while Apple was right to say that 300 PPI or so is the pixel density needed to no longer perceive individual pixels at 12 inches away, the issue is more complex than that. There are edge cases such as Vernier acuity that require pixel density up to 1800 pixels per degree (PPD) in one’s field of view. What this means is that once that pixel density is exceeded, it’s possible to make two lines appear to be aligned even if they aren’t. Of course, this is extremely difficult with current technology, although there are displays in existence that do approach the 2000-3000 PPI needed to reach those levels.

There are more edge cases though. While I’m not going to go into great depth, the eye is effectively capable of sampling detail at 0.8 to 1 arcminute for the most part. This ignores exceptional cases such as Vernier acuity, where interpolation in the mind effectively achieves much higher resolution. While this means that 300 PPI at 12 inches is “enough” to match that sampling rate, the Nyquist-Shannon sampling theorem actually means that preventing aliasing requires twice the resolution. In other words, 600 PPI is the realistic upper bound for most displays. This also ignores cases where the display is held much closer for detailed examination. For those interested in learning more about this, I would refer back to our article on display resolution and human vision.
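
As a rough check on those figures, the sketch below converts an angular acuity and a viewing distance into the pixel density at which one pixel subtends that angle, then doubles it for the Nyquist argument. The function name `required_ppi` is made up for illustration, and the model is deliberately simple (flat display viewed head-on, one pixel per resolvable angle), so treat the output as a ballpark rather than a vision-science result.

```python
import math


def required_ppi(acuity_arcmin: float, distance_in: float, nyquist: bool = False) -> float:
    """PPI at which one pixel subtends `acuity_arcmin` at `distance_in` inches.

    Simplifying assumption: flat display viewed head-on. With nyquist=True the
    result is doubled, following the 2x sampling argument in the text.
    """
    angle_rad = math.radians(acuity_arcmin / 60.0)      # arcminutes -> radians
    pixel_pitch_in = distance_in * math.tan(angle_rad)  # size of one pixel in inches
    ppi = 1.0 / pixel_pitch_in
    return 2.0 * ppi if nyquist else ppi


if __name__ == "__main__":
    for acuity in (1.0, 0.8):
        base = required_ppi(acuity, 12.0)
        doubled = required_ppi(acuity, 12.0, nyquist=True)
        print(f"{acuity} arcmin at 12 in: {base:.0f} PPI, {doubled:.0f} PPI with 2x sampling")
```

At 12 inches, 1 arcminute works out to just under 290 PPI, which is where the "300 PPI or so" figure comes from, and doubling it lands close to the 600 PPI bound mentioned above.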

At any rate, this is the first time that we’ve actually used a 1440p display in a smartphone. In practice, it is possible to see more detail on the LG G3’s display, but it’s hard to tell in most cases. Examining the display closely brings out the differences much more, but it’s not quite the jump that going from 720p to 1080p was. Unfortunately, this doesn’t change the cost of increasing pixel density. As I explained in previous articles, increasing pixel density comes with a greater power cost, as the lower active area on the display and smaller transistors require a stronger backlight. It’s clear that LG has had issues with this, with some rather drastic measures taken.
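
To put numbers on why the step to 1440p is smaller than the step to 1080p was, here is a short sketch comparing pixel counts and pixel density. The 5.5 inch diagonal is an assumption on my part, since the size isn't stated in this section, and the helper names are illustrative only.

```python
import math

# Standard 16:9 resolutions discussed in the text.
RESOLUTIONS = {"720p": (1280, 720), "1080p": (1920, 1080), "1440p": (2560, 1440)}


def pixel_count(name: str) -> int:
    """Total number of pixels for a named resolution."""
    width, height = RESOLUTIONS[name]
    return width * height


def ppi(name: str, diagonal_in: float) -> float:
    """Pixels per inch for a named resolution on a panel of the given diagonal."""
    width, height = RESOLUTIONS[name]
    return math.hypot(width, height) / diagonal_in


if __name__ == "__main__":
    diagonal = 5.5  # assumed panel diagonal in inches
    for name in RESOLUTIONS:
        print(f"{name}: {pixel_count(name):,} pixels, {ppi(name, diagonal):.0f} PPI")
    print("720p -> 1080p pixel ratio:", pixel_count("1080p") / pixel_count("720p"))
    print("1080p -> 1440p pixel ratio:", round(pixel_count("1440p") / pixel_count("1080p"), 2))
```

The first jump multiplies the pixel count by 2.25, the second by about 1.78, so the relative gain shrinks even before considering how close the panel already is to the limits of vision.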

LG specifically called out three different mechanisms used to save power on the display. LG states that panel self-refresh is still present in the LG G3, but dumping information from SurfaceFlinger reveals that it's a MIPI video panel, not a command panel. This means that either LG has implemented panel self-refresh in another manner, or that it's no longer present.

Issues with panel self-refresh aside, LG specifically calls out dynamic display clocking as one aspect of their system to save battery life. Inspecting the system files shows that the refresh rate for the display is set by a software governor, which has interesting implications for the custom ROM community and the effect that OTA updates can have on battery life. The system files suggest that the dynamic clocking mechanism isn't quite as broad as one might expect, as the only two frequencies that seem to be exposed are 50 and 60 hertz. I suspect that the nature of an LTPS panel means that it's not quite possible to realize a 0 hertz refresh rate for still images, but it may be that this is effectively a replacement for panel self-refresh. I'll save the other mechanism that I've found for the battery life section, as it goes beyond normal power-saving measures.
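
For a sense of scale, the sketch below works out how much less pixel data has to be scanned out at 50 hertz than at 60 hertz on a 2560 x 1440 panel. This is pure arithmetic on pixel throughput, not a measurement of power draw, and the function names are hypothetical rather than anything taken from LG's governor.

```python
def pixels_per_second(width: int, height: int, refresh_hz: int) -> int:
    """Pixels that must be scanned out to the panel every second."""
    return width * height * refresh_hz


def scanout_reduction(high_hz: int, low_hz: int) -> float:
    """Fractional reduction in scan-out rate when dropping from high_hz to low_hz."""
    return 1.0 - low_hz / high_hz


if __name__ == "__main__":
    for hz in (60, 50):
        print(f"{hz} Hz: {pixels_per_second(2560, 1440, hz):,} pixels/s")
    print(f"Reduction: {scanout_reduction(60, 50):.1%}")
```

Dropping to 50 hertz cuts scan-out traffic by roughly a sixth, which is a far more modest saving than a true self-refresh mode that stops sending frames entirely.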

Beyond power-saving measures, I've also noticed artificial sharpening. This effect is obvious enough that you will notice it immediately. As a result, halos are all over the display in certain situations, and in general I hope LG adds an option to turn this off.

I’ll also touch briefly upon some of the things that I’ve found regarding the touch panel. For one, this is a Synaptics solution, the S3528A. This is the same solution found in the One (M8). Unfortunately, there’s no other information that I could find online. Fortunately, digging through the phone reveals more. It appears that the QuickCover window is actually defined by ranges in x and y coordinates, and I assume that the same is true for the LCD itself. However, all of the information is presented in a circular format.

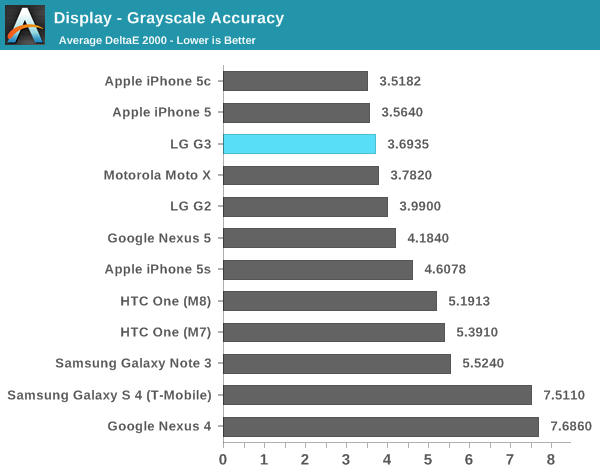

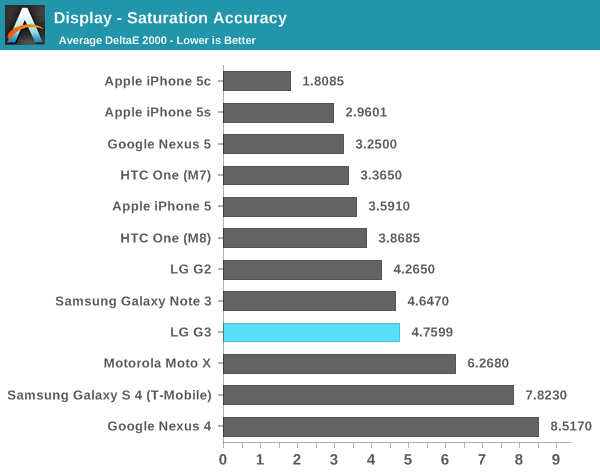

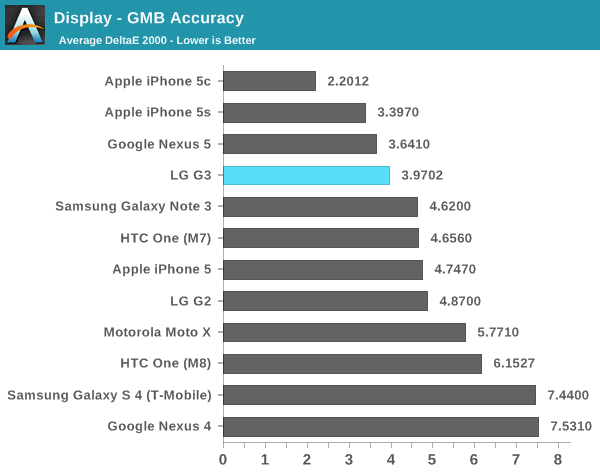

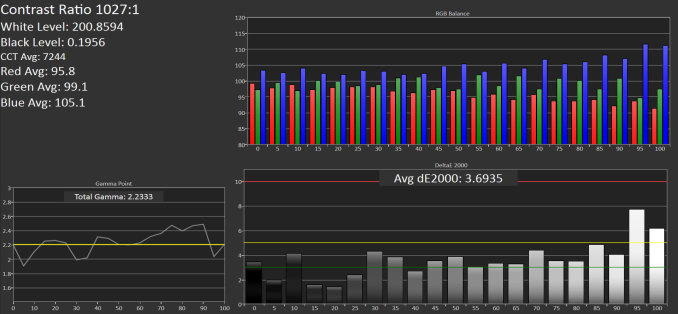

When it comes to how the rest of the display performs, we turn to SpectraCal’s CalMAN 5, with a custom workflow to test our displays and quantify performance. To start things off, we’ll take a look at maximum brightness and contrast. We’ll then move on to grayscale accuracy, then saturation accuracy, and finally the Gretag MacBeth ColorChecker test.

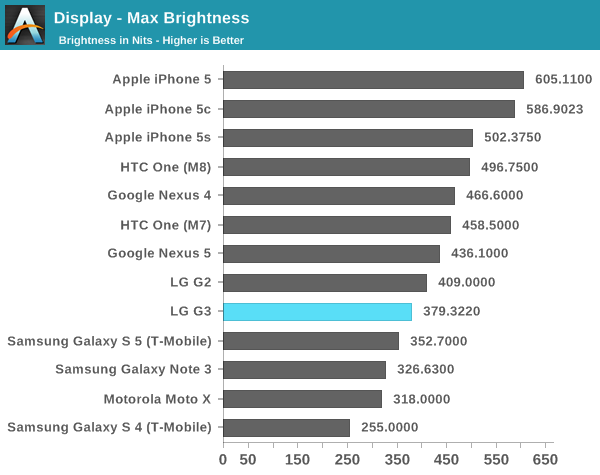

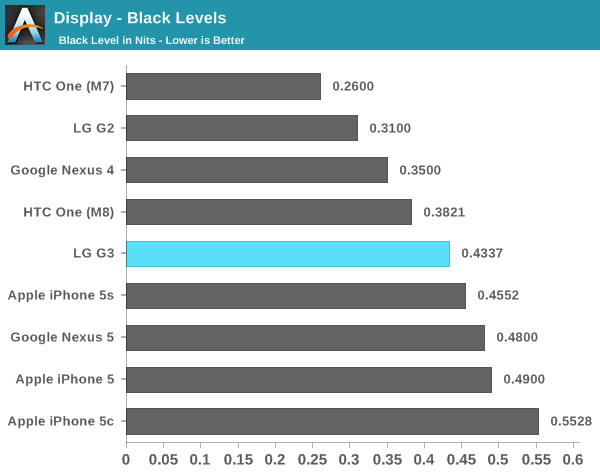

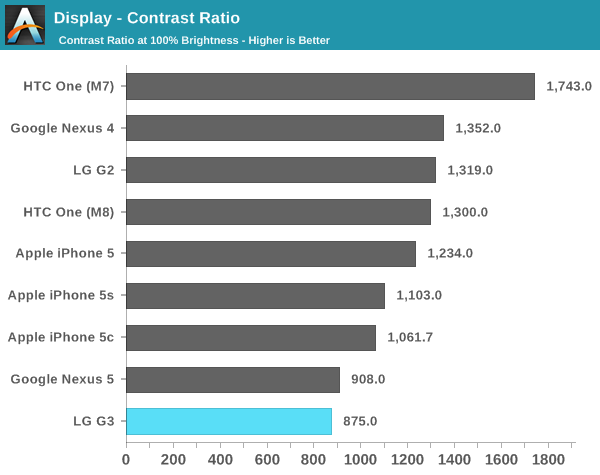

LG noticeably struggles with maximum brightness. While the G2 had around 410 nits peak luminance, the G3 regresses to around 390 nits maximum. I didn’t find any outdoor brightness boost function either. This means that outdoors, the display will fare worse than 1080p devices like the One (M8) and Galaxy S5. The other issue is contrast, which is around 900:1. This isn’t actually as bad as most have made it out to be. The big issue with contrast is how it degrades with viewing angle: a black test image rapidly washes out towards white when the display is viewed at oblique angles.
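
Contrast ratio is simply peak white luminance divided by black luminance, so the two rounded figures above imply a black level. The sketch below does that division; the function name is illustrative, and the result is an estimate derived from the approximate numbers in the text, not a separate measurement.

```python
def black_level_nits(peak_white_nits: float, contrast_ratio: float) -> float:
    """Black luminance implied by a peak white level and a contrast ratio."""
    return peak_white_nits / contrast_ratio


if __name__ == "__main__":
    # Approximate figures quoted in the text: ~390 nits peak, ~900:1 contrast.
    print(f"Implied black level: {black_level_nits(390.0, 900.0):.2f} nits")
```

That works out to a little over 0.4 nits head-on, which is why the head-on contrast is respectable and the off-angle washout is the real problem.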

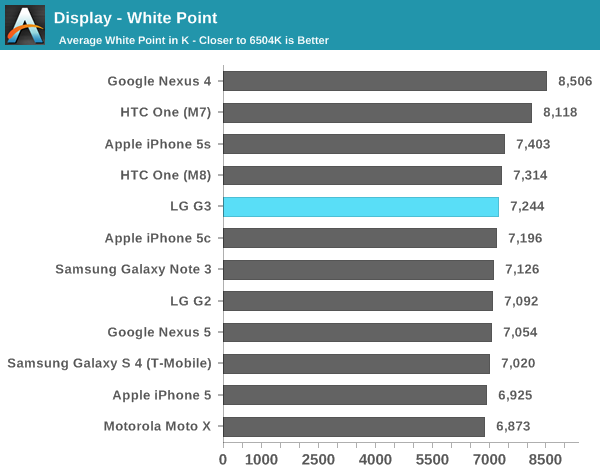

In grayscale, the LG G3 does quite well. Most OEMs continue to target around 7000K instead of 6500K, and the result is that there’s a lower bound on the average dE2000 scores. I’d still like to see OEMs include a mode that allows selection of a 6500K target, but LG does acceptably well here. As always, it's important to emphasize that the grayscale measurements will produce inaccurate contrast values due to the nature of the i1Pro.
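
For readers who want to relate a measured white point to the 6500K and 7000K targets, the sketch below estimates correlated color temperature from CIE 1931 xy chromaticity using McCamy's approximation. This is a well-known shortcut and not necessarily what CalMAN computes internally; the second, bluer white point is an arbitrary value chosen for illustration rather than a measurement of the G3.

```python
def mccamy_cct(x: float, y: float) -> float:
    """Approximate correlated color temperature (kelvin) from CIE 1931 xy.

    Uses McCamy's cubic approximation, which is reasonable near the
    daylight locus but is not an exact CCT calculation.
    """
    n = (x - 0.3320) / (0.1858 - y)
    return 449.0 * n**3 + 3525.0 * n**2 + 6823.3 * n + 5520.33


if __name__ == "__main__":
    d65 = (0.3127, 0.3290)    # standard D65 white point
    bluer = (0.3064, 0.3166)  # illustrative bluer white, not a measured value
    print(f"D65 white:   {mccamy_cct(*d65):.0f} K")
    print(f"Bluer white: {mccamy_cct(*bluer):.0f} K")
```

A white point shifted toward blue yields a higher color temperature, and because dE2000 is measured against the 6500K reference, that shift alone sets a floor on the grayscale error.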

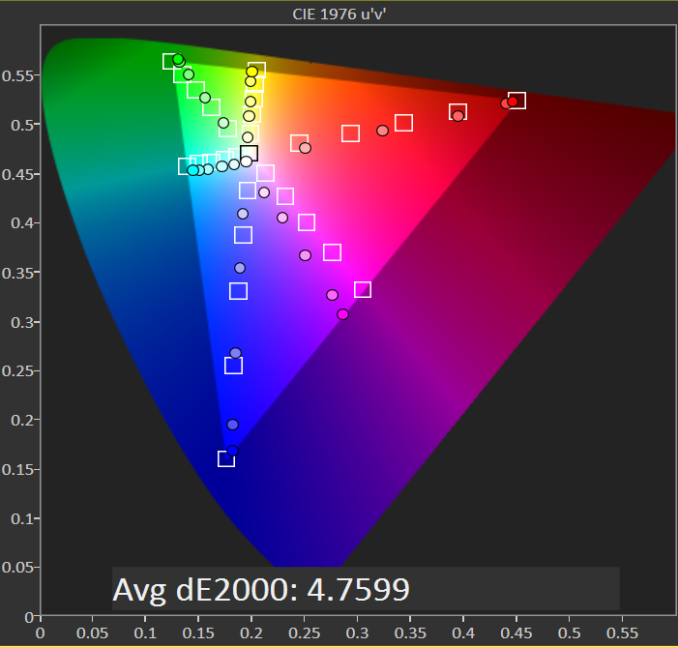

Saturations are where LG has gone a bridge too far. While some may enjoy “vivid” color, the saturation compression is insane here. In many cases, 80% and 100% saturations are effectively identical. This can be seen on the red and green sweeps. 60% saturation is often closer to the 80% saturation target. LG really, really needs to either stop doing this or give an option to disable it. This is immensely detrimental to the viewing experience, especially in any situation where color accuracy is actually necessary. Editing photos is effectively impossible on this display because the results will look completely different on most other displays that follow the sRGB color standard more closely.
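
To illustrate what saturation compression does to a sweep, here is a toy model: boost every requested saturation by a fixed gain and clip at the panel's maximum. The gain of 1.3 and the function name are assumptions picked so the output resembles the behavior described above; this is not LG's actual algorithm, which I have no visibility into.

```python
def compress_saturation(target: float, gain: float = 1.3) -> float:
    """Toy model: boost the requested saturation (0.0-1.0) and clip at 1.0.

    The gain value is a hypothetical parameter, not a measured one.
    """
    return min(1.0, target * gain)


if __name__ == "__main__":
    for target in (0.2, 0.4, 0.6, 0.8, 1.0):
        shown = compress_saturation(target)
        print(f"requested {target:.0%} -> displayed {shown:.0%}")
```

With this model the 80% and 100% steps both come out fully saturated, and the 60% step lands near the 80% target, which is the pattern seen in the sweeps: distinct colors in the source collapse into the same color on screen.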

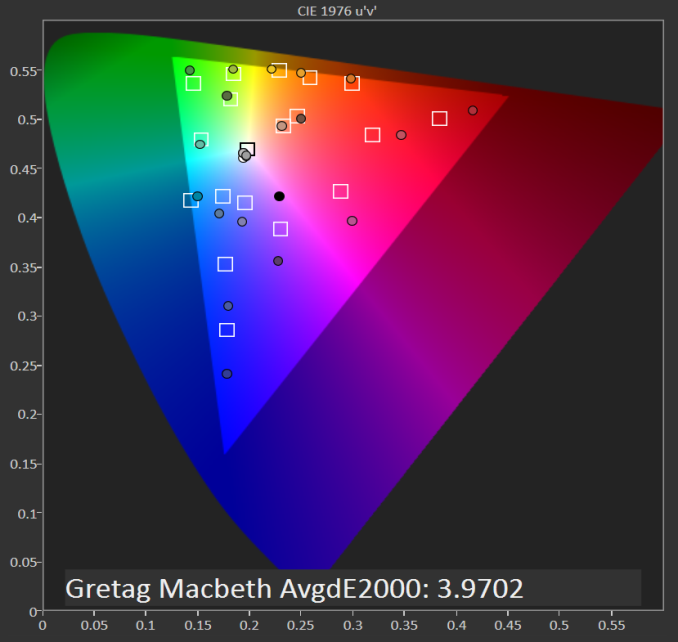

In the Gretag MacBeth ColorChecker, the G3 manages to do well, but it’s likely that its grayscale performance is lowering the dE2000 average. Overall, while this isn’t a terrible display, it’s disappointing that LG has decided to go for showroom appeal over great color calibration out of the box. While HTC’s saturation compression algorithms can be disabled with an init.d script, I haven’t found any evidence that the same is true for the LG G3. The low peak brightness is concerning as well, and likely a mitigation for the higher pixel density.

174 Comments


piroroadkill - Friday, July 4, 2014 - link

Basically, the screen is stupid, costs more, wastes battery, slows down performance, heats it up so it throttles more, and isn't actually noticeably different compared to 1920×1080 at viewing distance.

Yeah, so predictable. LG is doing the worst kind of spec-sheet oneupmanship.

Homeles - Friday, July 4, 2014 - link

I picked up a G2 last year, and was a bit frustrated that the G3 came out so quickly. Looks like I'm not missing out.

piroroadkill - Friday, July 4, 2014 - link

Yeah, the G2 is a fine device, and I'd choose the better battery life.

mahalie - Friday, July 4, 2014 - link

The G3 has significantly better battery life than the G2. You can complain that the screen is unnecessary all you want, but the phone performs wells and has great battery life, so what's the issue?

fokka - Friday, July 4, 2014 - link

the g3 beat the g2 in one test here, what numbers are you referring to?

the issue, as i see it, is that the 1440p display adds cost, decreases performance, battery life and screen brightness, not to mention overall screen quality, compared to using a good 1080p display, all while adding very little in regards to usability and visual advantages.

many people, me included, think that LG should have gone with a "good ol'" 1080p display in this generation and improved upon the great battery life that the g2 offered, instead of using a 1440p screen with borderline useful benefits mainly for bragging rights.

of course not everybody agrees with this stance, but it seems to be the one main complaint about this otherwise mighty fine piece of technology.

and you are right, the g3 (still) performs well and (still) has great battery life, but with a more reasonable 1080p display those points could have improved even more. that's all i (!) am saying.

retrospooty - Friday, July 4, 2014 - link

You may be right about the screen. Looks like some trade-offs were made, but it's still a good phone that stacks up well against it's competitors. It's still a good 5.5 inch phone that is basically the same size as an S5 or One M8. That and I cant remember the last time I had any phone on 100% brightness. I have a G2 now and keep it as 66%. In rare cases I move it up to 70%. 75% is simply too bright to look at.

flatrock - Tuesday, July 8, 2014 - link

I just checked the brightness on my G2. It's at 41% and is plenty bright for indoor viewing at that level. I might put it up to 75% while using it outdoors such as at my son's soccer game. Unless the sun is shining directly on the screen, 100% is overkill. In a dark rooms I set the brightness somewhere in the teens.

ColinByers - Monday, September 29, 2014 - link

Well, LG G3 is definitely one of the better phones on the market, all though there are a few like HTC One M8 and Motorola Moto G that can compete with it (see http://www.consumertop.com/best-phone-guide/ for example).

upatnite2 - Friday, July 4, 2014 - link

Same thoughts! I can deal with the battery life, but brightness and contrast are major issues. We're seeing at least a few reviews that mention "dim", which isn't a good sign, and I'm starting to wonder about performance after it heats.

If they put in the same display as last year, LG would sell tons more, and myself included.

Now, I have to wait to see if the S805 vsn comes to the states and if it's any better, wait for the N6, or get a used G2..

HotInEER - Monday, July 7, 2014 - link

I agree. 3 things are keeping me from trading in my HTC One M8 for this. The brightness, contrast, and lack of built in wireless charging. I don't want a flip case for that feature. Can't stand them, and sure in the heck are not paying $60 for a stupid case for that. I'd consider buying a additional back for wireless charging, however I've read on numerous sites that the US models do not have the pins.