AMD Kaveri Review: A8-7600 and A10-7850K Tested

by Ian Cutress & Rahul Garg on January 14, 2014 8:00 AM EST

Integrated GPU Performance: BioShock Infinite

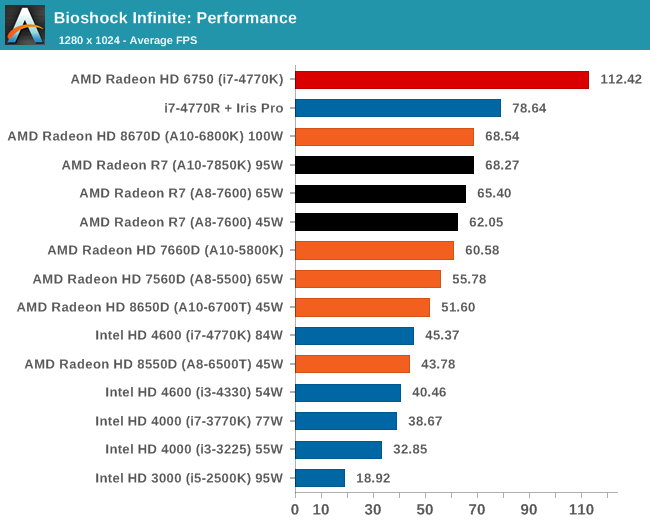

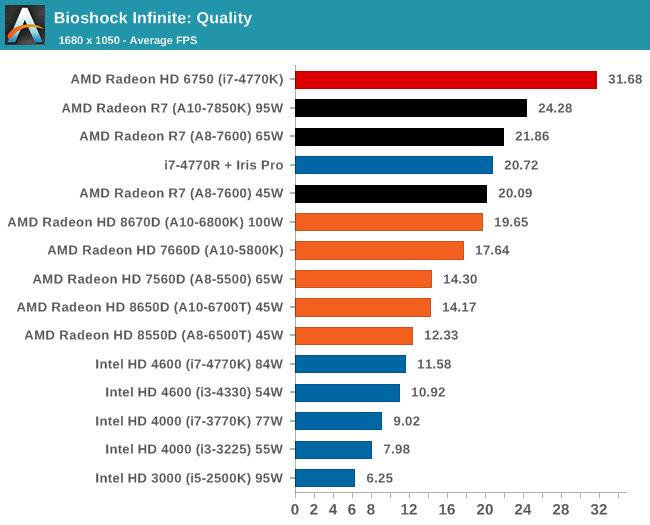

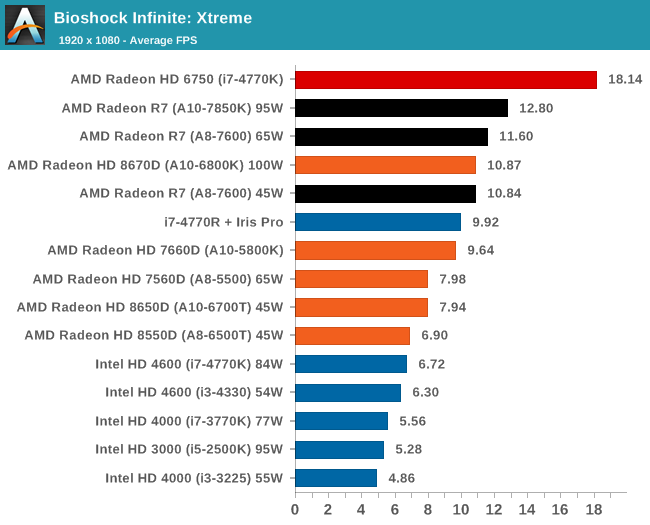

The first benchmark in our test is BioShock Infinite, Zero Punctuation’s Game of the Year for 2013. BioShock Infinite uses Unreal Engine 3 and is designed to scale with both CPU cores and graphical prowess. We run the benchmark using the Adrenaline benchmark tool at its three default settings: Performance (1280x1024, Low), Quality (1680x1050, Medium/High) and Xtreme (1920x1080, Maximum), noting the average and minimum frame rates.
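As a rough illustration of how the two reported figures relate to raw frame data, the sketch below derives an average and a minimum frame rate from a list of per-frame render times. It is not the Adrenaline tool's actual method, which is not described here; the function name `summarize_frame_times`, the millisecond input format and the sample values are all hypothetical. The minimum is taken as the rate implied by the single slowest frame, which is one common definition but may differ from what a given tool reports.

```python
def summarize_frame_times(frame_times_ms):
    """Return (average_fps, minimum_fps) from per-frame render times in milliseconds.

    Assumption: the input is one render time per frame, in milliseconds.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    if min(frame_times_ms) <= 0:
        raise ValueError("frame times must be positive")

    # Average FPS: total frames divided by total elapsed time in seconds.
    total_seconds = sum(frame_times_ms) / 1000.0
    average_fps = len(frame_times_ms) / total_seconds

    # Minimum FPS (assumed definition): rate implied by the slowest single frame.
    minimum_fps = 1000.0 / max(frame_times_ms)

    return average_fps, minimum_fps


# Hypothetical frame times for illustration only, not measured data.
sample_frame_times_ms = [10.0, 20.0, 10.0, 40.0]
avg, low = summarize_frame_times(sample_frame_times_ms)
print(f"average: {avg:.1f} FPS, minimum: {low:.1f} FPS")
```

The average is computed as total frames over total time rather than as a mean of per-frame rates, since the latter would give too much weight to short frames.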

Bioshock Infinite, Performance Settings

In BI: Performance, the Iris Pro tops the IGPs, although the next six in the list are all AMD. The Kaveri APUs all land between the 6800K and 5800K in this test, and all are comfortably above 60 FPS average.

Bioshock Infinite, Quality Settings

At the Quality settings, the Iris Pro starts to struggle and all the R7-based Kaveri APUs jump ahead of the A10-6800K, with the top two also ahead of the Iris Pro.

Bioshock Infinite, Xtreme Settings

The higher the resolution, the more the Iris Pro suffers, and Kaveri takes three of the top four IGP results.

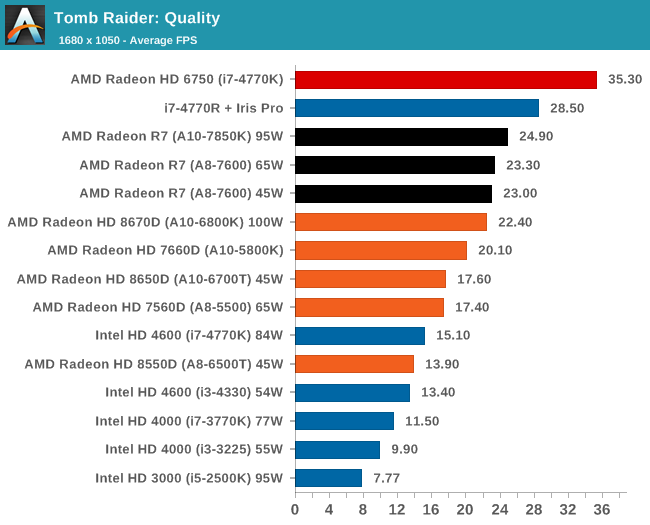

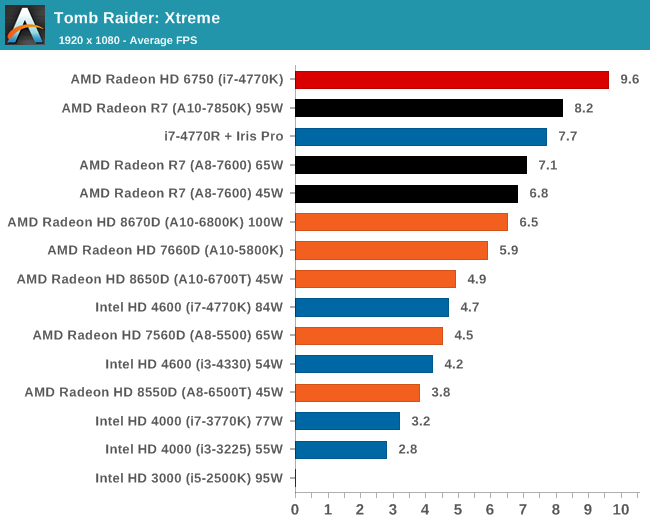

Integrated GPU Performance: Tomb Raider

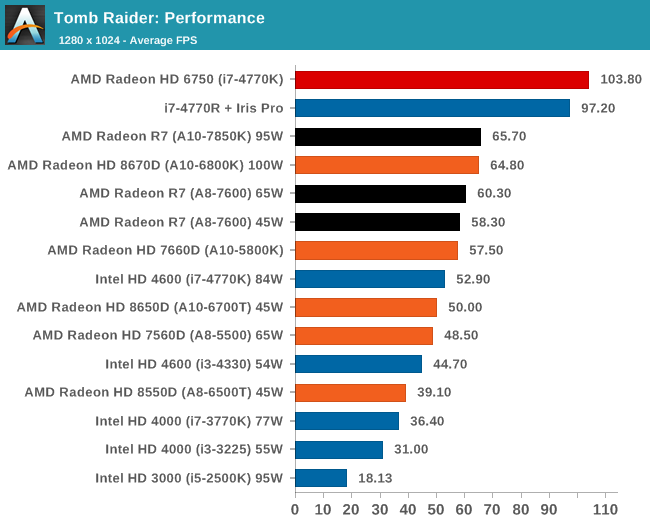

The second benchmark in our test is Tomb Raider. Tomb Raider is an AMD-optimized game, lauded for its use of TressFX to create dynamic hair and increase in-game immersion. Tomb Raider uses a modified version of the Crystal Engine, and enjoys raw horsepower. We run the benchmark using the Adrenaline benchmark tool at its three default settings: Performance (1280x1024, Low), Quality (1680x1050, Medium/High) and Xtreme (1920x1080, Maximum), noting the average and minimum frame rates.

Tomb Raider, Performance Settings

The top Richland and Kaveri IGPs are trading blows in TR.

Tomb Raider, Quality Settings

The Iris Pro takes a small lead, while the 95W Kaveri APU shows little improvement over Richland. The 45W APU, however, is pushing ahead.

Tomb Raider, Xtreme Settings

At the maximum resolution, the top Kaveri overtakes Iris Pro, and the 45W Kaveri is still a good margin ahead of the A10-6700T.

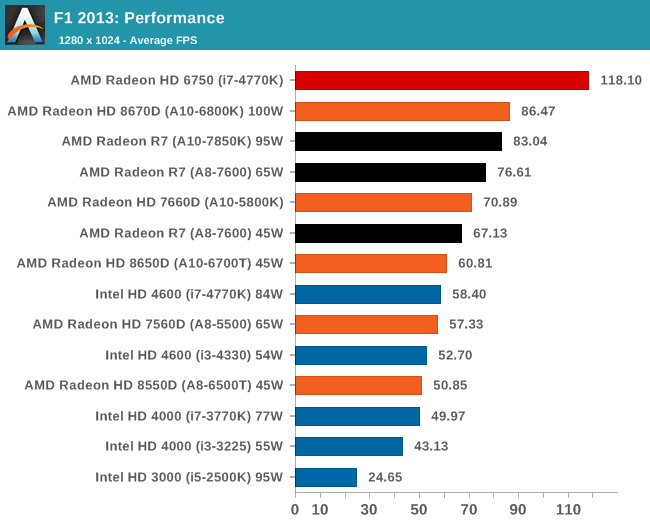

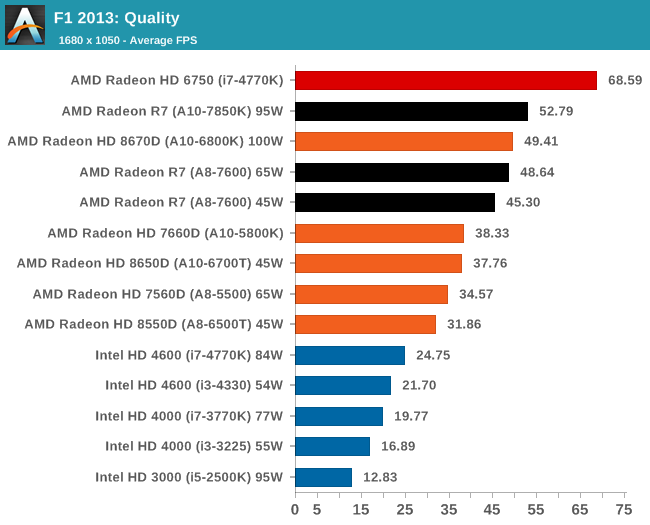

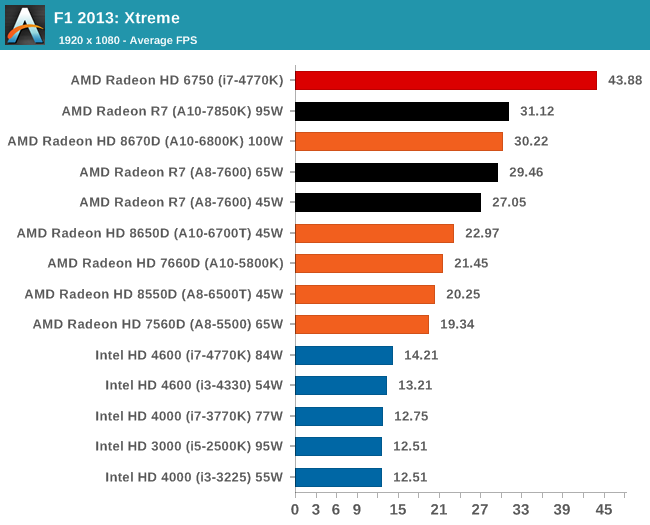

Integrated GPU Performance: F1 2013

Next up is F1 2013 by Codemasters. I am a big Formula 1 fan in my spare time, and nothing makes me happier than carving up the field in a Caterham, waving to the Red Bulls as I drive by (because I play on easy and take shortcuts). F1 2013 uses the EGO Engine and, like other Codemasters games, is very playable on old hardware. To beef up the benchmark a bit, we devised the following scenario for the benchmark mode: one lap of Spa-Francorchamps in the heavy wet, following Jenson Button in the McLaren, who starts on the grid in 22nd place, with the field made up of 11 Williams cars, 5 Marussia and 5 Caterham in that order. This puts emphasis on the CPU to handle the AI in the wet, and allows for a good amount of overtaking during the automated benchmark. We test at three different levels again: 1280x1024 on Low, 1680x1050 on Medium and 1920x1080 on Ultra. Unfortunately, due to various circumstances, we do not have Iris Pro data for F1 2013.

F1 2013, Performance Settings

F1 likes AMD here, although moving from Richland to Kaveri at the high end seems a bit of a regression.

F1 2013, Quality Settings

Similarly, at the Quality settings, none of the Intel integrated graphics solutions can keep up with AMD, especially Kaveri.

F1 2013, Xtreme Settings

At the Xtreme settings, at 1080p, the top Kaveri APU manages to hit over 30 FPS average during the benchmark. The A8 Kaveri results are not too far behind.

380 Comments


geniekid - Tuesday, January 14, 2014 - link

Would've been nice to see a discrete GPU thrown in the mix - especially with all that talk about Dual Graphics.

Ryan Smith - Tuesday, January 14, 2014 - link

Dual graphics is not yet up and running (and it would require a different card than the 6750 Ian had on hand).

Nenad - Wednesday, January 15, 2014 - link

I wonder if Dual Graphics can work with HSA, although I doubt it, due to cache coherence if nothing else.

While on HSA, I must say that it looks very promising. I do not have experience with AMD-specific GPU programming, or with OpenCL, but I do with CUDA (and some AMP) - and the ability to avoid CPU/GPU copies would be a great advantage in certain cases.

The interesting thing is that AMD now has HW that supports HSA, but does not yet have software tools (drivers, compilers...), while NVidia does not have the HW, but does have the software: in the new CUDA, you can use unified memory, even if the driver simulates the copy for you (but that supposedly means that when NVidia delivers the HW, your unaltered app from last year will work and take advantage of HSA).

Also, while HSA is a great step ahead, I wonder if we will ever see one much more important thing if GPGPU is ever to become mainstream: PREEMPTIVE MULTITASKING. As it is now, the programmer/app still needs to spend time figuring out how to split work into small chunks for the GPU, in order to not take too much GPU time at once. It increases the complexity of GPU code, and relies on the good behavior of other GPU apps. Hopefully, the next AMD 'unification' after HSA will be 'preemptive multitasking' ;p

tcube - Thursday, January 16, 2014 - link

Preemption and dynamic context switching are said to come with the Excavator core / Carrizo APU. And they do have the toolset for HSA/HSAIL, just look it up on AMD's site; Bolt, I think it's called, it is a C library.

Furthermore, Project Sumatra will make Java execute on the GPU. At first via an OpenCL wrapper, then via HSA, and in the end the JVM itself will do it for you via HSA. Oracle is pretty committed to this.

kazriko - Thursday, January 30, 2014 - link

I think where multiple GPU and Dual Graphics stuff will really shine is when we start getting more Mantle applications. With that, each GPU in the system can be controlled independently, and the developers could put GPGPU processes that work better with low latency to the CPU on the APU's built in GPU, and processes for graphics rendering that don't need as low of latency to the discrete graphics card.

Preemptive would be interesting, but I'm not sure how game-changing it would be once you get into HSA's juggling of tasks back and forth between different processors. Right now, they do have multitasking they could do by having several queues going into the GPU, and you could have several tasks running from each queue across the different CUs on the chip. Not preemptive, but definitely multi-threaded.

MaRao - Thursday, January 16, 2014 - link

Instead, AMD should create new chipsets with dual APU sockets. Two A8-7600 APUs can give tremendous CPU and GPU performance, while maintaining 90-100W power usage.

PatHeist - Thursday, February 13, 2014 - link

Making dual socket boards scale well is tremendously complex. You also need to increase things like the CPU cache by a lot. Not to mention that performance would tend to scale very badly with the additional CPU cores for things like gaming.

kzac - Monday, February 16, 2015 - link

Having 2 or more APUs on a logic board would defeat the purpose of having an APU in the first place, which was to eliminate processing being handled by the logic board controller. With dual APU sockets, there would need to be some controller interjected to direct work to the APUs, which could create a bottleneck in processing time (clock cycles). This is the very reason for the existence of multi core APUs and CPUs of today.

It's my expectation that we will start to observe much more memory being added to the APU at some point, to increase throughput speeds. Essentially think of future APUs becoming a mini computer within; the only limitations currently to this issue are heat extraction and power consumption.

5thaccount - Tuesday, January 21, 2014 - link

I'm not so interested in dual graphics... I am really curious to see how it performs as a standard old-fashioned CPU. You could even bench it with an nVidia card. No one seems to be reviewing it as a processor. All reviews review it as an APU. Funny thing is, several people I work with use these, but they all have discrete graphics.

geniekid - Tuesday, January 14, 2014 - link

Nvm. Too early!