The iPhone 5 Review

by Anand Lal Shimpi, Brian Klug & Vivek Gowri on October 16, 2012 11:33 AM EST. Posted in: Smartphones, Apple, Mobile, iPhone 5

Apple's Swift: Pipeline Depth & Memory Latency

Section by Anand Shimpi

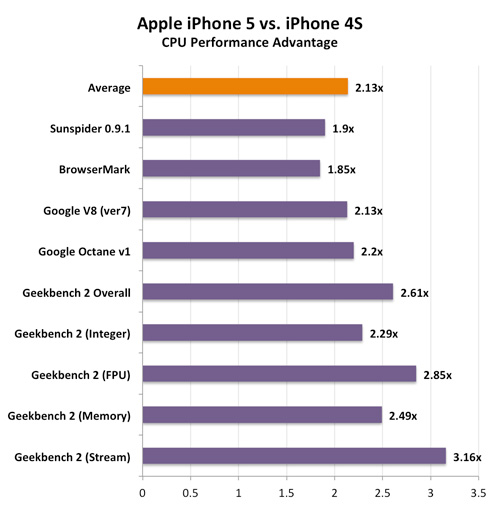

For the first time since the iPhone's introduction in 2007, Apple is shipping a smartphone with a CPU clock frequency greater than 1GHz. The Cortex A8 in the iPhone 3GS hit 600MHz, while the iPhone 4 took it to 800MHz. With the iPhone 4S, Apple chose to maintain the same 800MHz operating frequency as it moved to dual-Cortex A9s. Staying true to its namesake, Swift runs at a maximum frequency of 1.3GHz as implemented in the iPhone 5's A6 SoC. Note that it's quite likely the 4th generation iPad will implement an even higher clocked version (1.5GHz being an obvious target).

Clock speed alone doesn't tell us everything we need to know about performance. Deeper pipelines can easily boost clock speed but come with steep penalties for mispredicted branches. ARM's Cortex A8 featured a 13-stage pipeline, while the Cortex A9 moved down to only 8 stages while maintaining similar clock speeds. Reducing pipeline depth without sacrificing clock speed contributed greatly to the Cortex A9's tangible increase in performance. The Cortex A15 moves to a fairly deep 15-stage pipeline, while Krait is a bit more conservative at 11 stages. Intel's Atom has the deepest pipeline (ironically enough) at 16 stages.

To find out where Swift falls in all of this I wrote two different codepaths. The first featured an easily predictable branch that should almost always be taken. The second codepath featured a fairly unpredictable branch. Branch predictors work by looking at branch history - branches with predictable history should be, well, easy to predict while the opposite is true for branches with a more varied past. This time I measured latency in clocks for the main code loop:

| Branch Prediction Code | Apple A3 (Cortex A8 @ 600MHz) | Apple A5 (2 x Cortex A9 @ 800MHz) | Apple A6 (2 x Swift @ 1300MHz) |
|---|---|---|---|
| Easy Branch | 14 clocks | 9 clocks | 12 clocks |
| Hard Branch | 70 clocks | 48 clocks | 73 clocks |

The hard branch involves more compares and some division (I'm basically branching on odd vs. even values of an incremented variable) so the loop takes much longer to execute, but note the dramatic increase in cycle count between the Cortex A9 and Swift/Cortex A8. If I'm understanding this data correctly it looks like the mispredict penalty for Swift is around 50% longer than for ARM's Cortex A9, and very close to the Cortex A8. Based on this data I would peg Swift's pipeline depth at around 12 stages, very similar to Qualcomm's Krait and just shy of ARM's Cortex A8.
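As a rough check on the "around 50% longer" figure, the measured clock counts from the table can be compared directly. This is only a sketch under an assumption that is not stated in the article: that the extra clocks of the hard-branch loop over the easy-branch loop serve as a proxy for mispredict cost. The hard loop also does more compares and a division, so the difference overstates the pure penalty, and the function names here are made up for illustration.

```python
# Measured loop latencies in clocks, copied from the branch prediction table.
measured = {
    "Cortex A8": {"easy": 14, "hard": 70},
    "Cortex A9": {"easy": 9, "hard": 48},
    "Swift": {"easy": 12, "hard": 73},
}


def hard_minus_easy(core):
    """Extra clocks the hard-branch loop costs over the easy-branch loop."""
    return measured[core]["hard"] - measured[core]["easy"]


def ratio_to(core, baseline):
    """Extra-clock cost of `core` relative to `baseline` (assumed proxy only)."""
    return hard_minus_easy(core) / hard_minus_easy(baseline)


for core in measured:
    print(core, hard_minus_easy(core), round(ratio_to(core, "Cortex A9"), 2))
```

Under that assumption the extra cost works out to 56, 39 and 61 clocks for the Cortex A8, Cortex A9 and Swift, which puts Swift at roughly 1.56x the Cortex A9 and close to the Cortex A8.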

Note that despite the significant increase in pipeline depth Apple appears to have been able to keep IPC, at worst, constant (remember back to our scaled Geekbench scores - Swift never lost to a 1.3GHz Cortex A9). The obvious explanation there is a significant improvement in branch prediction accuracy, which any good chip designer would focus on when increasing pipeline depth like this. Very good work on Apple's part.
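To illustrate why better prediction accuracy can offset a deeper pipeline, here is a minimal sketch of the usual first-order model, in which the average cost per branch is the mispredict rate times the mispredict penalty. The miss rates and penalties below are hypothetical values chosen for illustration; they are not measurements of Swift or the Cortex A9.

```python
def mispredict_cost(miss_rate, penalty_clocks):
    """Average clocks lost per branch in a first-order model."""
    return miss_rate * penalty_clocks


def break_even_miss_rate(old_rate, old_penalty, new_penalty):
    """Miss rate at which a longer penalty costs the same per branch."""
    return old_rate * old_penalty / new_penalty


# Hypothetical numbers: a 50% longer penalty (8 -> 12 clocks)
# starting from an assumed 6% mispredict rate.
old_cost = mispredict_cost(0.06, 8)
target_rate = break_even_miss_rate(0.06, 8, 12)
print(old_cost, target_rate, mispredict_cost(target_rate, 12))
```

In this toy model a penalty that is 50% longer needs the mispredict rate to fall by about a third just to break even, which is the kind of predictor improvement the paragraph above is pointing at.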

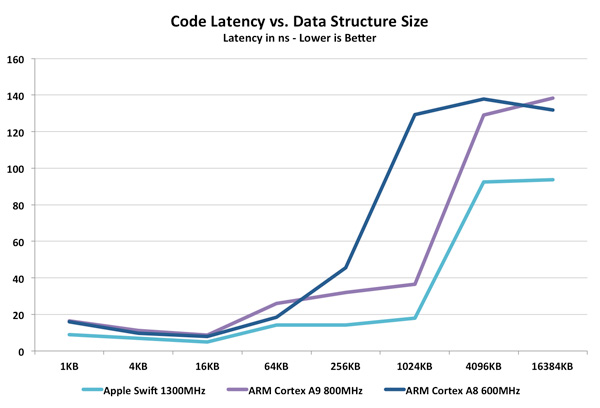

The remaining aspect of Swift that we have yet to quantify is memory latency. From our iPhone 5 performance preview we already know there's a tremendous increase in memory bandwidth to the CPU cores, but as the external memory interface remains 64 bits wide, all of the changes must be internal to the cache and memory controllers. I went back to Nirdhar's iOS test vehicle and wrote some new code, this time to access a large data array whose size I could vary. I created an array of a fixed size and summed the numbers stored in it, then increased the array size and measured how code latency changed with it. With enough data points I should get a good idea of cache and memory latency for Swift compared to Apple's implementation of the Cortex A8 and A9.
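The general shape of that kind of test can be sketched as follows. This is not the code used for the article, which ran natively on iOS; it is a hypothetical Python illustration of sweeping the array size and timing a sum over it. The function names and the chosen sizes are assumptions, and interpreter overhead will blur the cache boundaries that native code would show more clearly.

```python
import time
from array import array


def time_sum(size_bytes, repeats=3):
    """Return the best-case nanoseconds per element for summing an array."""
    itemsize = array("i").itemsize          # bytes per element on this platform
    n = max(1, size_bytes // itemsize)
    data = array("i", range(n))
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        total = sum(data)                   # touches every element once
        elapsed = time.perf_counter() - start
        best = min(best, elapsed)
    assert total == n * (n - 1) // 2        # sanity check on the work done
    return best * 1e9 / n


def sweep(sizes_kb):
    """Measure each working-set size (in KB) and return {size_kb: ns_per_element}."""
    return {kb: time_sum(kb * 1024) for kb in sizes_kb}


if __name__ == "__main__":
    for kb, ns in sweep([4, 16, 32, 256, 1024, 4096]).items():
        print(f"{kb:5d} KB  {ns:.2f} ns/element")
```

Plotting the per-element time against array size is what exposes the steps at each cache boundary; in native code those steps would be expected near the L1 and L2 capacities.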

At relatively small data structure sizes Swift appears to be a bit quicker than the Cortex A8/A9, but there's near convergence around 4 - 16KB. Take a look at what happens once we grow beyond the 32KB L1 data cache of these chips. Swift manages around half the latency for running this code as the Cortex A9 (the Cortex A8 has a 256KB L2 cache so its latency shoots up much sooner). Even at very large array sizes Swift's latency is improved substantially. Note that this data is substantiated by all of the other iOS memory benchmarks we've seen. A quick look at Geekbench's memory and stream tests shows huge improvements in bandwidth utilization.

Couple the dedicated load/store port with a much lower latency memory subsystem and you get 2.5 - 3.2x the memory performance of the iPhone 4S. It's the changes to the memory subsystem that really enable Swift's performance.


dado023 - Tuesday, October 16, 2012 - link

These days for me is battery life and then screen usability, so my next buy will be 720p, with iPhone5 setting the bar, i hope other android makers will follow.

Krysto - Tuesday, October 16, 2012 - link

Are you implying iPhone 5 is setting the bar for 720p displays? Because first of all, it doesn't have an 1280x720 resolution, but a 1136x640 one, and second, Android devices have been sporting 720p displays since a year ago.

hapkiman - Tuesday, October 16, 2012 - link

I have an iPhone 5 and my wife has a Samsung Galaxy S III.

Her Galaxy S III has a Super AMOLED 1280x720 display.

And yes my iPhone 5 "only" has a 1136 x 640 display.

But guess what - I'm holding both phones side by side right now looking at the exact same game and there is no perceivable difference. I looked at it, my son looked at it, and my wife looked at it. On about five or six different games, videos, apps, and a few photos. The difference is academic. You can't tell a difference unless you have a bionic eye.

They both look freakin' fantastic.

reuthermonkey1 - Tuesday, October 16, 2012 - link

I think you're missing Krysto's point. Of course looking at a 4" 1136 x 640 and a 4.8" 1280x720 display side by side will look equivalent to the eye. But his response wasn't to whether they're similar, but to the minimum requirement dado023 has set for their next purchase to be 720p.

The iPhone5's screen looks fantastic, but it's not 720p, so it's not exactly setting the bar for 720p.

Samus - Wednesday, October 17, 2012 - link

I'm no Apple fan, but in their defense, it is completely unnecessary to have 720p resolution on a 4" screen.

The ppi of the screen is already 20% higher than is discernible by the human eye. Having the resolution any higher would be a waste of processing power.

afkrotch - Wednesday, October 17, 2012 - link

More screen real estate. Higher resolution, more crap you can throw on it. Course in a 4" or 4.8" display, how many icons can you really place on the screen. I have a 4" screen and I wished I could shrink my icons though. Would love to get more icons on there.

I can't do large phones anymore. I had a 5" Dell Streak...no thanks. Too big.

rarson - Wednesday, October 17, 2012 - link

"The ppi of the screen is already 20% higher than is discernible by the human eye."

Uh, no it's not. The resolution of a human retina is higher than 326 ppi.

Silma - Thursday, October 18, 2012 - link

This doesn't mean anything. It depends on how far away the reading material is from the eye.

720p may not be needed for such a small screen but it is better than "not exactly" 720p in that the phone doesn't have to rescale 720p material.

In the same way retina marketing for macbook is pure BS as for the screen size and eye distance from the screen such a high resolution is not needed and will only burn batteries faster and make laptops warmer for next to no visual benefit. In addition 1080p materials will have to be rescaled.

rarson - Thursday, October 18, 2012 - link

Right, so if you have good vision, like I do, then at a foot away, you can see those pixels.

MobiusStrip - Friday, October 19, 2012 - link

Yawn.