Intel's Ivy Bridge: An HTPC Perspective

by Ganesh T S on April 23, 2012 12:01 PM EST - Posted in

- Home Theater

- Intel

- HTPC

- Ivy Bridge

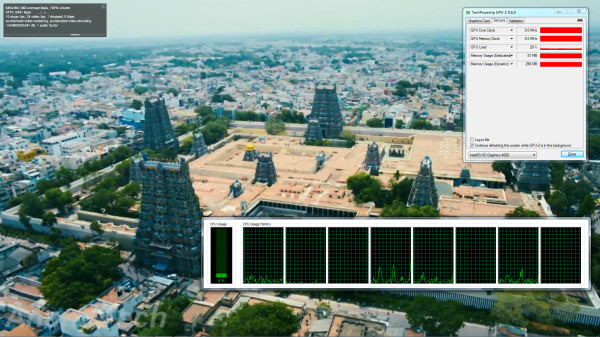

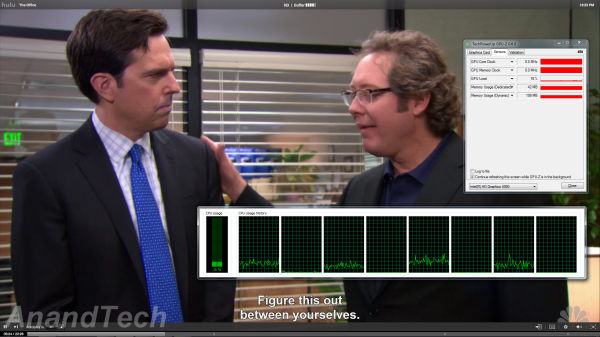

The last time we looked at Flash acceleration in the Intel drivers, we came away disappointed. Have things changed this time around? Intel seems to have taken extra care with this aspect and even supplied us with Flash Player builds confirmed and tested to have full GPU acceleration and rendering capabilities. We took the Flash plugin for a test drive using our standard YouTube clip. This time around, we also added a 720p Hulu Plus clip. In the case of YouTube, there is visual confirmation of accelerated decoding and rendering. For Hulu Plus, we have to infer it from the GPU usage reported by GPU-Z. Hulu Plus streaming seems to be slightly more demanding on the CPU than YouTube.

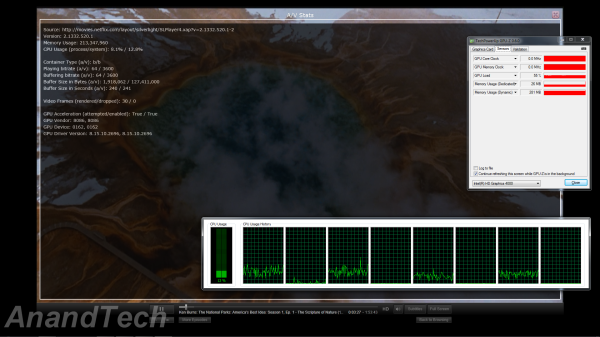

Netflix streaming, on the other hand, uses Microsoft's Silverlight technology. Unlike Flash, hardware acceleration for the video decode process is not controlled by the user. It is up to the server-side code to attempt GPU acceleration. Thankfully, Netflix does try to take advantage of the GPU's capabilities.

This is evident from the A/V stats recorded while streaming a Netflix HD video at the maximum possible bitrate of 3.7 Mbps. The high GPU usage in GPU-Z also points to hardware acceleration being utilized.

70 Comments


anirudhs - Monday, April 23, 2012

I can barely notice the difference between 720P and 1080I on my 32" LCD. Will people notice the difference between 1080P and 4K on a 61" screen? It seems we have crossed the point beyond which further improvements in HD video playback on Sandy Bridge and later machines are discernible to normal people with normal screens.

I spoke to a high-end audiophile/videophile dealer, and he tells me that the state of video technology (Blu-ray) is pretty stable. In fact, it is more stable than it has been at any point in the past 40 years. I don't think "improvements" like 4K are going to be noticed by anyone other than consumers in the top 1%. This seems like a first-world problem to me: how to cope with the arrival of 4K?

digitalrefuse - Monday, April 23, 2012

... Anything being discussed on a Web site like AnandTech is going to be "a first-world problem"... That being said, there's not much of a difference between 720 lines of non-interlaced picture and 1080 lines of interlaced picture. If anything, a 720P picture tends to look a little better than 1080I.

The transition to 4K can't come soon enough. I'm less concerned with video playback and more concerned with desktop real estate - I'd love to have one monitor with more resolution than two 1080P monitors in tandem.

ganeshts - Monday, April 23, 2012

OK, one of my favourite topics :)

Why does an iOS device's Retina Display work in consumers' minds? What prevents one from wishing for a Retina Display in a TV or computer monitor? The latter is what will drive 4K adoption.

4K will definitely get a warmer welcome than 3D because it has none of the ill effects (eye strain, headaches) associated with 3D.

Exodite - Monday, April 23, 2012

We can certainly hope, though with 1080p having been the de facto high-end standard for desktops for almost a decade, I'm not holding my breath.

Until there's an affordable alternative for improving vertical resolution on the desktop, I'll stick to my two 1280*1024 displays.

Don't get me wrong, I'd love to see the improvements in resolution made in mobile displays spill over into the desktop but I'd not be surprised if the most affordable way of getting a 2048*1536 display on the desktop ends up being a gutted Wi-Fi iPad blu-tacked to your current desktop display.

aliasfox - Monday, April 23, 2012

It would be IPS, too! :-P

Exodite - Monday, April 23, 2012

Personally, I couldn't care less about IPS, though I acknowledge some do.

Any trade-off in latency or ghosting just isn't worth it, as accurate color reproduction and better viewing angles just don't matter to me.

ZekkPacus - Monday, April 23, 2012

Higher latency and ghosting that maybe one in fifty thousand users will notice, if that. This issue has been blown out of all proportion by the "measurable stats at all costs" brigade: MY SCREEN HAS 2MS SO IT MUST BE BETTER. The average human eye cannot detect any kind of ghosting/input lag in anything under a 10-14ms refresh window. Only the most seasoned pro gamers would notice, and only if you sat the monitors side by side.

A slight loss in meaningless statistics is worth it if you get better, more vibrant-looking pictures and something where you CAN actually see the difference.

SlyNine - Tuesday, April 24, 2012

I take it you've done hundreds of hours of research and documented your studies and methodology so we can look at the results.

What if Anand did video card reviews the same way you're spouting out these "facts"? They would be worthless conjecture, just like your information.

Drop the "but it's a really small number" argument. Until you actually document what the human eye/brain is capable of, all you're saying is that it's a really small number.

Well, a THz period is a really small number too, and yet the human body can pick up frequencies as high as 700 THz. It's called the EYE!

Exodite - Tuesday, April 24, 2012

Look, you're of a different opinion, and that's fine.

I, however, don't want IPS.

I can't appreciate the "vibrant" colors, the better accuracy, or the wider viewing angles.

Indeed, my preferred display has a slightly cold hue and I always turn saturation and brightness way down because it makes the display more restful for my eyes.

I work with text, and when I'm not doing that, I play games.

I'd much rather have a 120Hz display with even lower latency than I'd take any improvement in areas that I don't care about and won't even notice.

Also, if you're going to make outlandish claims about how many people can or cannot notice this or that you should probably back it up.

Samus - Tuesday, April 24, 2012

Exodite, you act like IPS has awful latency or something.

If we were talking about PVA, I wouldn't be responding to an otherwise reasonable argument, but we're not. The latency of IPS and TN is virtually identical, especially to the human eye and mind. High-frame-rate (1/1000 s) cameras are required to even measure the difference between IPS and TN.

Yes, TN is 'superior' with its 2ms latency, but IPS, with its sub-6ms latency, is superior in every other respect: 97.4% Adobe RGB accuracy, 180-degree bi-plane viewing angles, and lower power consumption/heat output (in either LED or cold-cathode configurations) due to less grid processing.

This argument is closed. Anybody who says they can tell a difference between 2ms and sub-6ms displays is being a whiny bitch.