NVIDIA's GeForce GTX 570: Filling In The Gaps

by Ryan Smith on December 7, 2010 9:00 AM EST

Compute & Normalized Numbers

Moving on from our look at gaming performance, we have our customary look at compute performance, bundled with a look at theoretical tessellation performance. Unlike our gaming benchmarks where NVIDIA’s architectural enhancements could have an impact, everything here should be dictated by the core clock and SMs, with the GTX 570’s slight core clock advantage over the GTX 480 defining most of these tests.

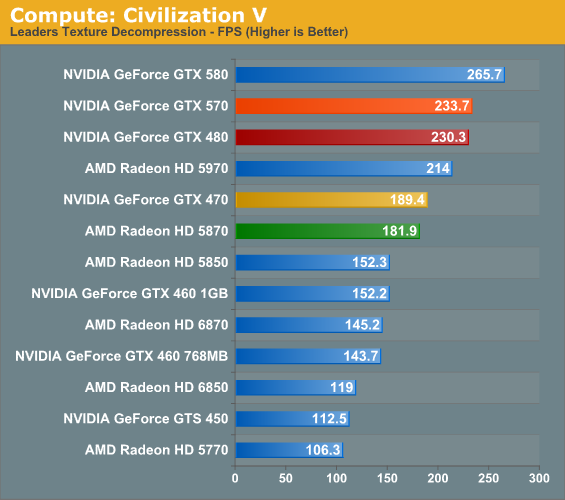

Our first compute benchmark comes from Civilization V, which uses DirectCompute to decompress textures on the fly. Civ V includes a sub-benchmark that exclusively tests the speed of their texture decompression algorithm by repeatedly decompressing the textures required for one of the game’s leader scenes.

The GTX 570’s core clock advantage over the GTX 480 here is 4.5%; in practice it leads to a difference of less than 2% in Civilization V’s texture decompression test. Even the lead over the GTX 470 is a bit smaller than usual, at 23%. Nor should the lack of a competitive placement from an AMD product be a surprise, as NVIDIA’s cards consistently do well at this test, lending credence to the idea that it’s a compute application better suited to NVIDIA’s scalar processor design.

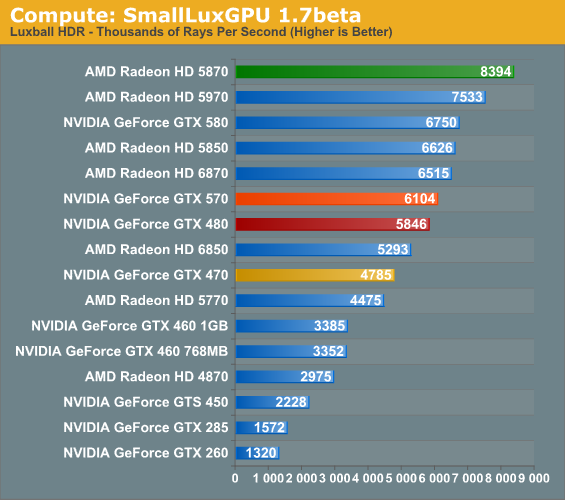

Our second GPU compute benchmark is SmallLuxGPU, the GPU ray tracing branch of the open source LuxRender renderer. While it’s still in beta, SmallLuxGPU recently hit a milestone by implementing a complete ray tracing engine in OpenCL, allowing them to fully offload the process to the GPU. It’s this ray tracing engine we’re testing.

SmallLuxGPU is rather straightforward in its requirements: compute and lots of it. The GTX 570’s core clock advantage over the GTX 480 drives a fairly straightforward 4% performance improvement, roughly in line with the theoretical maximum. The reduction in memory bandwidth and L2 cache does not seem to impact SmallLuxGPU. Meanwhile the advantage over the GTX 470 doesn’t quite reach its theoretical maximum, but the GTX 570 is still 27% faster.
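As a rough check on the "theoretical maximum" mentioned above, the sketch below treats compute throughput as proportional to core clock × SM count. The clock speeds and SM counts are the cards' published reference specifications rather than figures quoted in this article, and the `Card` class and `theoretical_advantage` helper are hypothetical names used only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Card:
    """Minimal description of a card for a first-order compute estimate."""
    name: str
    core_mhz: int  # reference core clock (assumption: not quoted in the article)
    sms: int       # enabled streaming multiprocessors (assumption: reference spec)


def theoretical_advantage(a: Card, b: Card) -> float:
    """Fractional advantage of card a over card b, assuming throughput
    scales linearly with core clock times SM count and nothing else."""
    return (a.core_mhz * a.sms) / (b.core_mhz * b.sms) - 1.0


GTX570 = Card("GTX 570", core_mhz=732, sms=15)
GTX480 = Card("GTX 480", core_mhz=700, sms=15)
GTX470 = Card("GTX 470", core_mhz=607, sms=14)

for other in (GTX480, GTX470):
    gain = theoretical_advantage(GTX570, other)
    print(f"{GTX570.name} vs {other.name}: {gain:.1%} theoretical advantage")
```

Under these assumptions the GTX 570's ceiling works out to roughly 4.6% over the GTX 480 and roughly 29% over the GTX 470, which is consistent with the measured 27% lead falling just short of the maximum.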

However, as was the case with the GTX 580, all of the NVIDIA cards fall to AMD’s faster cards here; the GTX 570 only lands between the 6850 and 6870 in performance, thanks to AMD’s compute-heavy VLIW5 design, on which SmallLuxGPU excels. The situation is quite bad for the GTX 570 as a result, with the top card being the Radeon 5870, which the GTX 570 underperforms by 27%.

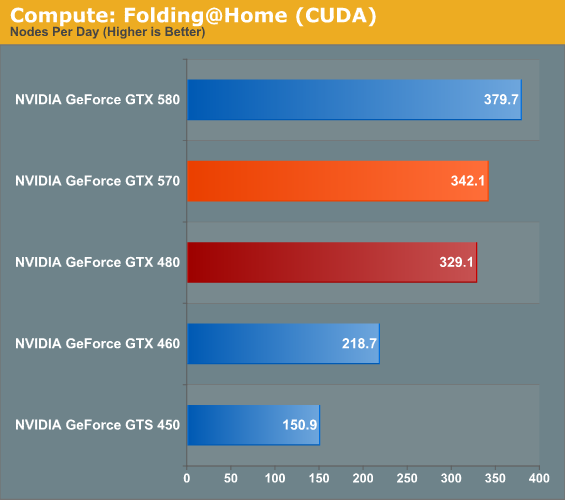

Our final compute benchmark is a Folding@Home benchmark. Given NVIDIA’s focus on compute for Fermi, and in particular GF110 and GF100, cards such as the GTX 580 can be particularly interesting for distributed computing enthusiasts, who are usually looking for the fastest card in the coolest package.

Once more the performance advantage for the GTX 570 matches its core clock advantage. If not for the fact that a DC project like F@H is trivial to scale to multi-GPU configurations, the GTX 570 would likely be the sweet spot for price, performance, and power/noise.

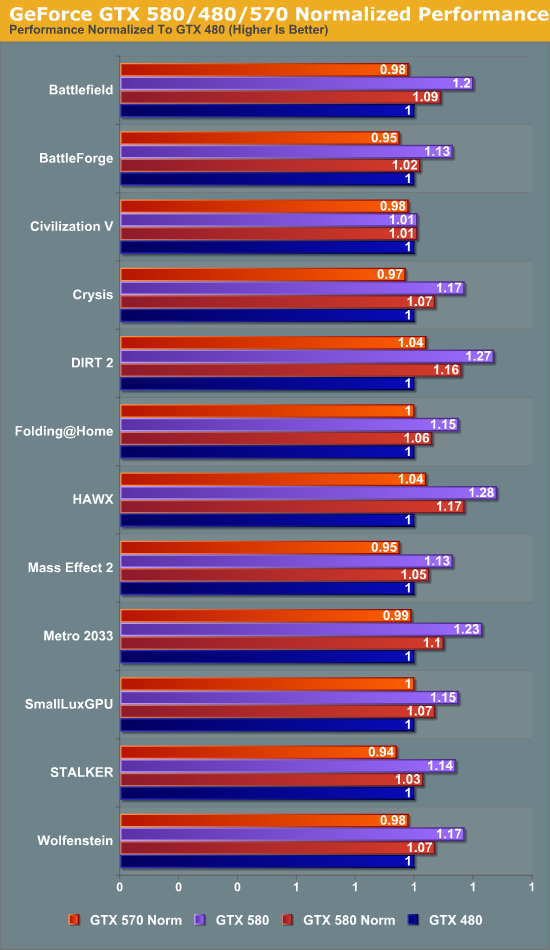

Finally, to take another look at the GTX 570’s performance, we have the return of our normalized data view that we first saw with our look at the GTX 580. Unlike the GTX 580, which had memory/ROP abilities similar to the GTX 480’s but more SMs, the GTX 570 contains the same number of SMs with fewer ROPs and a narrower memory bus. As such, while a normalized dataset for the GTX 580 shows the advantage of the GF110’s architectural enhancements and the higher SM count, the normalized dataset for the GTX 570 shows the architectural enhancements alongside the impact of lost memory bandwidth, ROPs, and L2 cache.

The results certainly paint an interesting picture. Just about everything is ultimately affected by the lack of memory bandwidth, L2 cache, and ROPs; if the GTX 570 didn’t normally have its core clock advantage, it would generally lose to the GTX 480 by small amounts. The standouts here include STALKER, Mass Effect 2, and BattleForge which are all clearly among the most memory-hobbled titles.

On the other hand we have DIRT 2 and HAWX, both of which show a 4% improvement even though our normalized GTX 570 is worse compared to a GTX 480 in every way except architectural advantages. Clearly these were some of the games NVIDIA had in mind when they were tweaking GF110.
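The normalized view can be approximated on paper by dividing out the clock advantage. The sketch below is only a back-of-envelope illustration, not necessarily how the chart above was produced: it assumes performance scales linearly with core clock, uses the reference clocks of 732MHz and 700MHz (not stated in this article), and the frame rates passed in are made-up placeholders rather than measured results. `per_clock_ratio` is a hypothetical helper name.

```python
def per_clock_ratio(score_a: float, score_b: float,
                    clock_a_mhz: float, clock_b_mhz: float) -> float:
    """Performance of card A relative to card B with the core clock
    difference divided out (assumes linear scaling with clock)."""
    if min(score_a, score_b, clock_a_mhz, clock_b_mhz) <= 0:
        raise ValueError("scores and clocks must be positive")
    raw_ratio = score_a / score_b            # what a stock-clock chart shows
    clock_ratio = clock_a_mhz / clock_b_mhz  # advantage attributable to clock
    return raw_ratio / clock_ratio


# Reference core clocks (assumption: taken from published specs).
GTX570_MHZ = 732.0
GTX480_MHZ = 700.0

# Placeholder frame rates, NOT measured data: card A is 2% ahead at stock.
example = per_clock_ratio(102.0, 100.0, GTX570_MHZ, GTX480_MHZ)
print(f"per-clock ratio: {example:.3f}")
```

A ratio below 1.0 would indicate a title where the card trails once the clock advantage is removed, as described for the memory-hobbled games; a ratio above 1.0, as with DIRT 2 and HAWX, would point to the architectural changes.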

54 Comments

xxtypersxx - Tuesday, December 7, 2010 - link

If this thing can hit 900mhz it changes the price/performance picture entirely, why no overclock coverage in such a comprehensive review?

Otherwise great write up as always!

Bhairava - Tuesday, December 7, 2010 - link

Yes good point.

vol7ron - Tuesday, December 7, 2010 - link

Why do graphics cards cost more than cpu+mobo these days?

I know there's a different design process and maybe there isn't as much an economy of scale, but I'm just thinking about the days when it was reverse.

Klinky1984 - Tuesday, December 7, 2010 - link

Well you're essentially buying a computer on a card with a CPU these days. High performance GPU w/ high performance, pricey ram, all of which needs high quality power components to run. GPUs are now computers inside of computers.

lowlymarine - Tuesday, December 7, 2010 - link

I think it's simply that GPUs can't get cheaper to the extent that CPUs have, since the die sizes are so much larger. I certainly wouldn't say they're getting MORE expensive - I paid $370 for my 8800GTS back in early 2007, and $400 for a 6800 in early 2005 before that.

DanNeely - Tuesday, December 7, 2010 - link

High end GPU chips are much larger than high end CPU chips nowadays. The GF110 has 3bn transistors. For comparison a quadcore i7 only has 700m, and a 6 core athlon 900m, so you get 3 or 4 times as many CPUs from a wafer as you can GPUs. The quad core Itanic and octo core I7 are both around 2bn transistors but cost more than most gaming rigs for just the chip.

GDDR3/5 are also significantly more expensive than the much slower DDR3 used by the rest of the computer.

ET - Tuesday, December 7, 2010 - link

They don't. A Core i7-975 costs way more than any graphics card. A GIGABYTE GA-X58A-UD9 motherboard costs $600 at Newegg.

ET - Tuesday, December 7, 2010 - link

Sorry, was short on time. I'll add that you forgot to consider the price of the very fast memory on high end graphics cards.

I do agree, though, that a combination of mid-range CPU and board and high end graphics card is cost effective.

mpschan - Wednesday, December 8, 2010 - link

Don't forget that in a graphics card you're getting a larger chip with more processing power, a board for it to run on, AND memory. 1GB+ of ultra fast memory and the tech to get it to work with the GPU is not cheap.

So your question needs to factor in cpu+mobo+memory, and even then it does not have the capabilities to process graphics at the needed rate.

Generic processing that is slower at certain tasks will always be cheaper than specialized, faster processing that excels at said task.

slagar - Wednesday, December 8, 2010 - link

High end graphics cards were always very expensive. They're for enthusiasts, not the majority of the market.

I think prices have come down for the majority of consumers. Mostly thanks to AMD's moves, budget cards are now highly competitive, and offer acceptable performance in most games with acceptable quality. I think the high end cards just aren't as necessary as they were 'back in the day', but then, maybe I just don't play games as much as I used to. To me, it was always the case that you'd be paying an arm and a leg to have an upper tier card, and that hasn't changed.