OCZ's Vertex 2, Special Sauce SF-1200 Reviewed

by Anand Lal Shimpi on April 28, 2010 3:17 PM EST

Still Resilient After Truly Random Writes

In our Agility 2 review I did what you all asked: used a newer build of Iometer to not only write data in a random pattern, but write data comprised of truly random bits in an effort to defeat SandForce’s data deduplication/compression algorithms. What we saw was a dramatic reduction in performance:

Iometer Performance Comparison - 4K Aligned, 4KB Random Write Speed

| Drive | Normal Data | Random Data | % of Max Perf |
| --- | --- | --- | --- |
| Corsair Force 100GB (SF-1200 MLC) | 164.6 MB/s | 122.5 MB/s | 74.4% |
| OCZ Agility 2 100GB (SF-1200 MLC) | 44.2 MB/s | 46.3 MB/s | 105% |

Iometer Performance Comparison

| Corsair Force 100GB (SF-1200 MLC) | Normal Data | Random Data | % of Max Perf |
| --- | --- | --- | --- |
| 4KB Random Read | 52.1 MB/s | 42.8 MB/s | 82.1% |
| 2MB Sequential Read | 265.2 MB/s | 212.4 MB/s | 80.1% |
| 2MB Sequential Write | 251.7 MB/s | 144.4 MB/s | 57.4% |
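For readers who want to check the "% of Max Perf" column, it appears to be simply the random-data throughput divided by the normal-data throughput. The short Python sketch below recomputes it from the Corsair Force figures in the tables; the function name `percent_of_max` is my own label, not something from Iometer.

```python
# Throughput figures (MB/s) copied from the Corsair Force 100GB rows above:
# (normal data, random data)
results = {
    "4KB Random Write": (164.6, 122.5),
    "4KB Random Read": (52.1, 42.8),
    "2MB Sequential Read": (265.2, 212.4),
    "2MB Sequential Write": (251.7, 144.4),
}


def percent_of_max(normal_mbps: float, random_mbps: float) -> float:
    """Random-data throughput as a percentage of normal-data throughput."""
    return 100.0 * random_mbps / normal_mbps


for test, (normal, random_data) in results.items():
    pct = percent_of_max(normal, random_data)
    print(f"{test:<22} {pct:5.1f}% of max ({100 - pct:4.1f}% lower)")
```

Read the other way around, the same numbers give the size of the penalty: roughly 20% for large sequential reads and roughly 43% for large sequential writes.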

While I don’t believe that’s representative of what most desktop users would see, it does give us a range of performance we can expect from these drives. It also gave me another idea.

To test the effectiveness and operation of TRIM I usually write a large amount of data to random LBAs on the drive for a long period of time. I then perform a sequential write across the entire drive and measure performance. I then TRIM the entire drive, and measure performance again. In the case of SandForce drives, if the applications I’m using to write randomly and sequentially are using data that’s easily compressible then the test isn’t that valuable. Luckily with our new build of Iometer I had a way to really test how much of a performance reduction we can expect over time with a SandForce drive.
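To see why the choice of test data matters so much here, it helps to look at how differently repetitive and random data compress. The sketch below uses Python's `zlib` purely as a stand-in; SandForce has not published its algorithms, so this only illustrates the general principle and is not a model of the controller. The repetitive payload is an arbitrary example of my own.

```python
import os
import zlib

BLOCK_SIZE = 4096  # 4KB, matching the transfer size used in the random write tests


def compression_ratio(data: bytes) -> float:
    """Compressed size divided by original size (lower means more compressible)."""
    return len(zlib.compress(data)) / len(data)


# Highly repetitive payload, an arbitrary stand-in for easily compressible test data.
pattern = b"AnandTech"
repetitive = (pattern * (BLOCK_SIZE // len(pattern) + 1))[:BLOCK_SIZE]

# Random payload, a stand-in for Iometer's randomly generated data.
random_block = os.urandom(BLOCK_SIZE)

print(f"repetitive: {compression_ratio(repetitive):.3f}")
print(f"random:     {compression_ratio(random_block):.3f}")
```

The repetitive block shrinks to a tiny fraction of its size, while the random block does not shrink at all. A controller that relies on compression has nothing to work with in the second case, which is exactly the situation the random-data tests are meant to create.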

I used Iometer to randomly write randomly generated 4KB data over the entire LBA range of the Vertex 2 drive for 20 minutes. I then used Iometer to sequentially write randomly generated data over the entire LBA range of the drive. At this point all LBAs should be touched, both as far as the user is concerned and as far as the NAND is concerned. Because the data can't be compressed, the drive has actually written at least as much data to NAND as we set out to write.
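The access pattern of that torture test can be sketched in a few lines of Python. This is only an illustration of the pattern, not a substitute for Iometer: it writes to a small scratch file through the OS cache rather than to the raw drive, and the function names, the 16MB span, and the half-second duration are all placeholders of my own (the real test ran for 20 minutes across every LBA).

```python
import os
import random
import tempfile
import time

BLOCK_SIZE = 4096  # 4KB transfers, aligned to 4KB boundaries


def random_write_pass(path, span_bytes, duration_s, seed=None):
    """Write random 4KB blocks at random aligned offsets; return bytes written."""
    rng = random.Random(seed)
    blocks = span_bytes // BLOCK_SIZE
    written = 0
    deadline = time.monotonic() + duration_s
    with open(path, "r+b") as target:
        while time.monotonic() < deadline:
            # Pick a random block index, then seek to its aligned byte offset.
            target.seek(rng.randrange(blocks) * BLOCK_SIZE)
            written += target.write(os.urandom(BLOCK_SIZE))
    return written


def sequential_fill(path, span_bytes):
    """Overwrite the whole span front to back with random data."""
    written = 0
    with open(path, "r+b") as target:
        for _ in range(span_bytes // BLOCK_SIZE):
            written += target.write(os.urandom(BLOCK_SIZE))
    return written


if __name__ == "__main__":
    span = 16 * 1024 * 1024  # hypothetical scratch size, far smaller than a real drive
    with tempfile.NamedTemporaryFile(delete=False) as scratch:
        scratch.truncate(span)
        scratch_path = scratch.name
    try:
        print("random pass bytes:", random_write_pass(scratch_path, span, 0.5))
        print("sequential bytes: ", sequential_fill(scratch_path, span))
    finally:
        os.remove(scratch_path)
```

The two phases mirror the test: the random pass scatters incompressible data across the span, and the sequential fill then guarantees every block has been written at least once.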

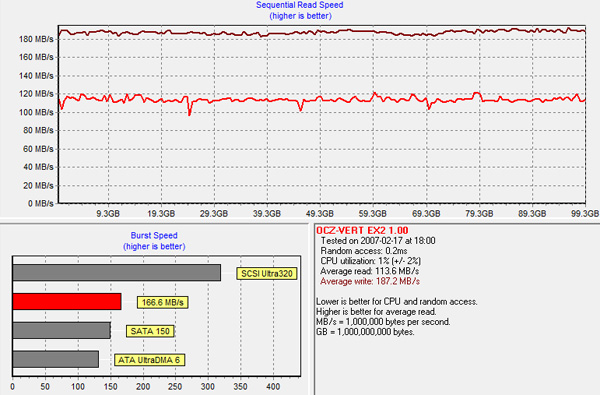

Using HDTach, I measured performance across the entire drive:

The sequential read test is reading back our highly random data we wrote all over the drive, which you’ll note takes a definite performance hit.

Performance is still respectably high and if you look at write speed, there are no painful blips that would result in a pause or stutter during normal usage. In fact, despite the unrealistic workload, the drive proves to be quite resilient.

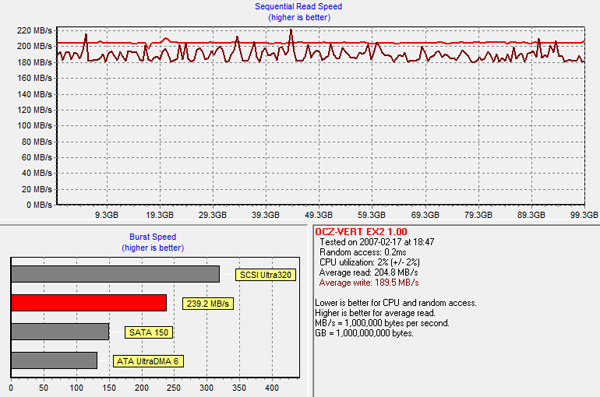

TRIMing all LBAs restores performance to new:

The takeaway? While SandForce’s controllers aren’t immune to performance degradation over time, we’re still talking about speeds over 100MB/s even in the worst-case scenario, and with TRIM the drive bounces back immediately.

I’m quickly gaining confidence in these drives. It’s just a matter of whether or not they hold up over time at this point.

The Test

With the differences out of the way, the rest of the story is pretty well known by now. The Vertex 2 gives you a definite edge in small file random write performance, and maintains the already high standards of SandForce drives everywhere else.

The real-world impact of the high small file random write performance is negligible for a desktop user. I’d go so far as to argue that we’ve reached the point of diminishing returns from boosting small file random write speed for the majority of desktop users. It won’t be long before we’ll have to start thinking of new workloads to really stress these drives.

I've trimmed down some of our charts, but as always if you want a full rundown of how these SSDs compare against one another be sure to use our performance comparison tool: Bench.

| Component | Configuration |
| --- | --- |
| CPU: | Intel Core i7 965 running at 3.2GHz (Turbo & EIST Disabled) |
| Motherboard: | Intel DX58SO (Intel X58) |
| Chipset: | Intel X58 + Marvell SATA 6Gbps PCIe |
| Chipset Drivers: | Intel 9.1.1.1015 + Intel IMSM 8.9 |
| Memory: | Qimonda DDR3-1333 4 x 1GB (7-7-7-20) |
| Video Card: | eVGA GeForce GTX 285 |
| Video Drivers: | NVIDIA ForceWare 190.38 64-bit |
| Desktop Resolution: | 1920 x 1200 |
| OS: | Windows 7 x64 |

44 Comments


ScottHavens - Wednesday, April 28, 2010 - link

In the article, it was suggested that random data is not representative of what users will see. While the current sizes of drives may make that true, performance under loads of random data will become more important with time and with the growth of drive sizes. Why? Users will start to store a larger percentage of already-compressed files, like mp3s and movies, on their SSDs instead of buying additional bulk drives, and compressed files are, by nature, effectively identical to random data.

ggathagan - Wednesday, April 28, 2010 - link

Anand made two important distinctions: *small* and *writes*. He wasn't referring to all random data, regardless of size, and wasn't referring to reads.

Those are important qualifications. Most mp3 files, and certainly movies, fall under the *large* file size.

Additionally, the vast majority of activity with those types of files is going to be reads, not writes.

Lonyo - Wednesday, April 28, 2010 - link

Not to mention that compressed files such as XviD/etc films won't see anywhere near as much benefit from an SSD in the first place.

Sure, your read/write speeds might double or triple, but when it comes to sequential activities, mechanical drives are fast. SSDs excel at random reads/writes, which large files don't have so much of, since they're typically stored in a big chunk, meaning sequential activity, not random. And that's only a copying scenario.

The point at which an SSD becomes useful for such large files will be further away than the point at which it becomes viable for a wider range of files. I would transfer my games to a large SSD long before I would start transferring compressed media files. They can stay on a mechanical for a loooooong time.

When we do finally have large enough drives that they might be the primary storage drives, I doubt Sandforce will necessarily be applying the same ideas, and if they are, they will have progressed significantly from their current state.

Talking about applying a realistic future problem to drives which exist today is meaningless when you consider how much progress has already been made up to this point, and how much progress will be made between now and when the future issue actually becomes a reality (when SSDs are affordable for mass storage purposes).

ScottHavens - Wednesday, April 28, 2010 - link

I'm not arguing about whether a particular disclaimer in the article was completely accurate. My primary point was that it's not unheard of for people to use only one drive and still have a significant portion of their data compressed, and the number of people affected by this will likely increase over time. Further, I missed a key scenario that other posters have mentioned: file/partition encryption would likely be affected as well, and it's reasonable to assume that scenario will become more common too, as disk-level encryption becomes easier and even required by some companies.

Further, I should point out that the problem (insofar as it is one) by no means affects only small writes. Large reads, according to the tables, have a 20% perf hit, and large writes have a 43% perf hit.

I don't intend to denigrate SandForce's methods here, by any means. If it helps, it helps, and I'll probably get at least one of these myself. I'm just pointing out that the 'YMMV' caveat is applicable to more people and situations than some people realize.

TSnor - Wednesday, April 28, 2010 - link

Re: Encryption and the point that encrypted data doesn't compress. This is true, but... the SF controller self-encrypts. There is little/no point in doing software full disk encryption before sending the data to the SF controller to be encrypted. SF-1200 uses 128 bit AES which NIST counts as strong encryption.

ScottHavens - Wednesday, April 28, 2010 - link

Unfortunately, the only way to use that encryption is to use the device (ATA) password. Management and real-world usage of that is a pain and not nearly as flexible as the options available with, say, BitLocker. I'd wager that most companies with OS-level encryption policies in place will want to continue with their current policies rather than make exceptions for using ATA passwords with drives like this, even if the quality of the encryption itself is just as good.

beginner99 - Thursday, April 29, 2010 - link

Stupid question: Can I unplug my SandForce drive and put it in another computer and read the data without knowing the encryption password?

If yes, isn't the drive's native encryption useless then?

ScottHavens - Thursday, April 29, 2010 - link

No, you'll be required to enter a password on startup.

arehaas - Wednesday, April 28, 2010 - link

Most laptops, including mine, allow for only one HD. I bought a Corsair F200 (before Anand reported the compressed file issue) and planned to keep my jpg photos, mp3s, some movies on the laptop attached to a TV (or a receiver). I think this is a reasonably common scenario. Users are already dealing big time with large compressed files which are slower sequentially on Sandforce than on Indilinx.

I wish Crucial fixed their firmware sooner because C300 256GB is about $50 cheaper than F200 or Vertex 2 and faster in all dimensions.

beginner99 - Thursday, April 29, 2010 - link

Get a cheap small external hdd if you're concerned about this. But generally I would not consider mp3s and jpgs large files. Compressed, yes, but by no means large.

Yeah, many small laptops only have room for 1 hdd/ssd. It's IMHO stupid. It would be much better to have space for 2 and just ship an external dvd drive. I mean, I need my drive like once every couple of months. Completely useless if you have to carry it around all the time. (That's one great thing about the hp envy 15) ;)