The Rest of Clarkdale: Intel's Pentium G6950 & Core i5 650/660/670 Reviewed

by Anand Lal Shimpi on March 24, 2010 4:00 PM EST - Posted in CPUs

Integrated Graphics Performance

In our 890GX review I looked at the integrated graphics performance of the entire Clarkdale lineup vs. AMD's chipset offerings. The full set of data is in that review, but I'll provide a quick summary here.

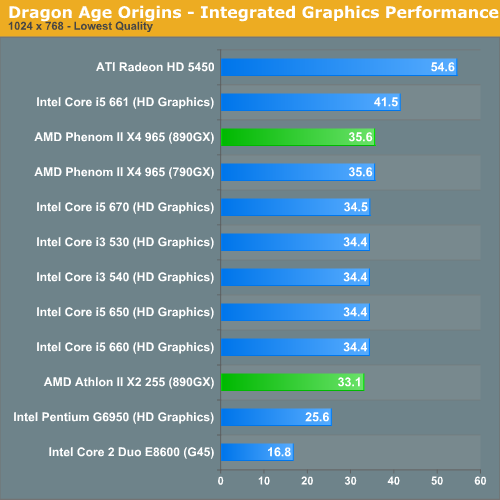

Intel's best-case performance comes in our Dragon Age Origins benchmark:

Thanks to its 900MHz GPU clock the Core i5 661 does much better than AMD's integrated graphics. The rest of the Clarkdale lineup is basically on par, and the Pentium G6950 is a bit slower. Note that the G6950 is still over 50% faster than G45. That was just a terrible graphics core.
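To make the "over 50% faster" comparison concrete, here is a minimal sketch of the arithmetic behind a statement like that. The frame rates are made-up placeholders for illustration, not numbers from the charts in this review, and `percent_faster` is a hypothetical helper name.

```python
def percent_faster(new_fps: float, baseline_fps: float) -> float:
    """Return how much faster new_fps is than baseline_fps, in percent."""
    if baseline_fps <= 0:
        raise ValueError("baseline_fps must be positive")
    return (new_fps / baseline_fps - 1.0) * 100.0


# Hypothetical frame rates, for illustration only (not measured data).
g45_fps = 20.0
g6950_fps = 31.0

print(f"{percent_faster(g6950_fps, g45_fps):.1f}% faster")
```

With these placeholder values the faster part lands a little above the 50% mark; the same function applies to any pair of average frame rates.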

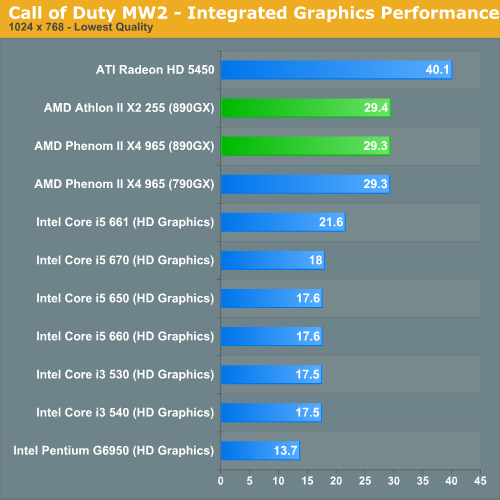

The worst-case scenario for Intel's integrated graphics comes up in Call of Duty Modern Warfare 2:

Here even the Core i5 661 can't best AMD's 890GX. Depending on the game, Intel's integrated graphics performance ranges from much slower than AMD's to competitive, if not faster. Unfortunately, in a couple of key titles Intel is much slower. Using GPU clock speed as a means to differentiate CPUs isn't a wise move if you're trying to build up your reputation for not having terrible graphics.

If you're not going to do any gaming and you're using the integrated graphics for Blu-ray playback, it's a much better story for Intel.

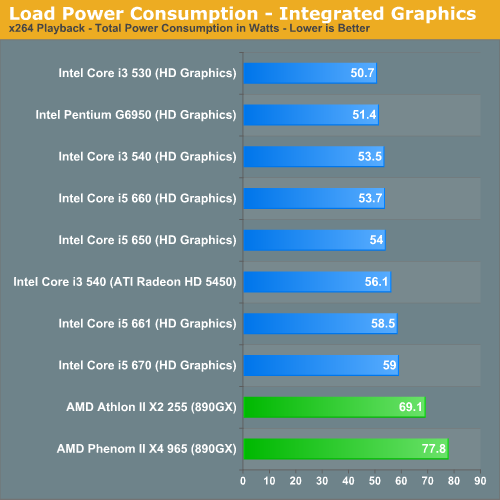

Under load the entire Clarkdale line is very conservative with power consumption.
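Load power is only part of the efficiency picture: the energy a task costs is average power multiplied by how long the task takes. Below is a minimal sketch of that arithmetic. The wattages and run times are hypothetical placeholders, not readings from our charts, and `task_energy_wh` is an illustrative helper name.

```python
def task_energy_wh(avg_power_w: float, duration_s: float) -> float:
    """Energy in watt-hours for a task drawing avg_power_w for duration_s seconds."""
    return avg_power_w * duration_s / 3600.0


# Hypothetical systems: (average wall power in watts, task time in seconds).
systems = {
    "system_a": (100.0, 360.0),  # lower power draw, slower to finish
    "system_b": (120.0, 270.0),  # higher power draw, faster to finish
}

for name, (watts, seconds) in systems.items():
    print(f"{name}: {task_energy_wh(watts, seconds):.1f} Wh")
```

In this made-up case the system that draws more power at load still uses less energy for the task because it finishes sooner, which is why load power and performance are best read together.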

Full Data in Bench & The Test

We're presenting an abridged set of benchmarks here in the review to avoid this turning into too much of a graph-fest. If you want to see data that you don't see here check out all of these CPUs and more than 100 others in Bench.

| Component | Configuration |
| --- | --- |
| Motherboard | ASUS P7H57D-V EVO (Intel H57); Intel DX58SO (Intel X58); Intel DX48BT2 (Intel X48); Gigabyte GA-MA790FX-UD5P (AMD 790FX) |
| Chipset Drivers | Intel 9.1.1.1015 (Intel); AMD Catalyst 8.12 |
| Hard Disk | Intel X25-M SSD (80GB) |
| Memory | Qimonda DDR3-1066 4 x 1GB (7-7-7-20); Corsair DDR3-1333 4 x 1GB (7-7-7-20); Patriot Viper DDR3-1333 2 x 2GB (7-7-7-20) |
| Video Card | eVGA GeForce GTX 280 |
| Video Drivers | NVIDIA ForceWare 180.43 (Vista64); NVIDIA ForceWare 178.24 (Vista32) |
| Desktop Resolution | 1920 x 1200 |
| OS | Windows Vista Ultimate 32-bit (for SYSMark); Windows Vista Ultimate 64-bit |

70 Comments

Teloy - Friday, March 26, 2010

"It's GPU still only runs at 733MHz though"... "It's" is not quite right... Take care.

8steve8 - Thursday, March 25, 2010

why was the G45 omitted on pg2 in the graph of power consumption under load with integrated graphics?

I was keen to see how much more efficient HD graphics were than g45.

-stephen

bpdski - Thursday, March 25, 2010

It would have been nice to see the benchmarks run with the overclocked G6950 also.

- Brian

Taft12 - Thursday, March 25, 2010

I have a hard time comprehending what Intel/AMD's TDP ratings mean in light of the "Load Power Consumption" graph.

How is it that Intel CPUs with higher TDP ratings consume MUCH LESS power at load than AMD CPUs with lower TDP ratings? Just look at the results -- all of the Intel Core i3/5 CPUs are 73W TDP but the Athlon X2 255 is rated at 65W yet consumes 20 more watts at load. Is the 790FX motherboard that inefficient? WTF???

Lower idle consumption for Intel I understand due to the smaller process and excellent design, but do the TDP ratings truly mean nothing? This is a disastrous result for AMD.

strikeback03 - Friday, March 26, 2010

Both companies rate their TDP differently, and in either case it is not designed to be a measure of power consumption.

Nickel020 - Thursday, March 25, 2010

It would be nice if you could include the 661 in the power consumption charts. Does its higher TDP actually lead to higher power consumption?

Sunburn74 - Thursday, March 25, 2010

If you're gonna talk about the value of a 4.4 GHz overclocked processor, you gotta include it in the benchmarks. Duh!

crochat - Thursday, March 25, 2010

Why the hell is it necessary to always put a discrete graphics card in systems with integrated graphics and do power consumption tests with it? Is the benchmark used for power independent from the graphics card?

I understand that it is much simpler to measure watts only, but what is important is the resulting task energy consumption, taking into account the efficiency of the processor.

strikeback03 - Friday, March 26, 2010

Because some of the tested systems don't have integrated graphics. So for the application performance comparisons shown to be valid, the discrete GPU has to be kept in there. Wouldn't want someone thinking they could get 69.1 FPS in Crysis Warhead with the power consumption of only the integrated graphics.

Taft12 - Thursday, March 25, 2010

It's necessary to save many hours of work the testers could spend on more articles. What counts is the delta in power consumption between CPUs/platforms at idle and load.

I'm an AMD fan, but the bottom line is Intel systems are more power efficient, period.