Combating Input Lag and Final Words

Now that we know what's going on and what the factors are, what can we do about it?

Sometimes 1ms can decide whether your input reaches the software in time to be included in the next frame. Most of the time it won't. Of course, the difference between 8ms and 2ms could actually make a frame of difference (up to 16.67ms at 60Hz) in input lag. A mouse that can handle 500 reports/second is what we recommend as a good balance.
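To put rough numbers on this, the sketch below converts a report rate into the time between reports and estimates how often that much added delay would push an input past a frame boundary. It assumes a 60Hz frame cadence and an input that lands at a uniformly random point within the frame; the function names are ours, purely for illustration.

```python
def report_interval_ms(reports_per_second: float) -> float:
    """Time between consecutive mouse reports, in milliseconds."""
    return 1000.0 / reports_per_second


def chance_of_missing_frame(extra_delay_ms: float,
                            frame_time_ms: float = 1000.0 / 60.0) -> float:
    """Chance that extra_delay_ms pushes an input past the next frame boundary.

    Assumption: the input arrives at a uniformly random moment in the frame,
    so the chance is simply the delay as a fraction of the frame time.
    """
    return min(1.0, extra_delay_ms / frame_time_ms)


for rate in (125, 500, 1000):
    interval = report_interval_ms(rate)
    chance = chance_of_missing_frame(interval)
    print(f"{rate:>4} reports/s: {interval:.1f} ms between reports, "
          f"{chance:.0%} chance of slipping a frame if delayed by a full interval")
```

This treats the full report interval as the delay, which is the worst case; on average an input waits about half that long.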

It is possible to overclock your mouse. You will still be limited by the physical capabilities of the mouse, but running the USB port for the mouse at a higher rate can help, especially if you don't want to invest in a more expensive mouse. There are tools out there both to check your mouse report rate (with Direct Input Mouse Rate) and to change the rate by replacing the USB driver. When changing the rate on Vista SP1 or Windows 7, drivers will need to be signed. This can be accomplished by using test signatures and forcing Windows to load them. NGOHQ offers a good tutorial on this.

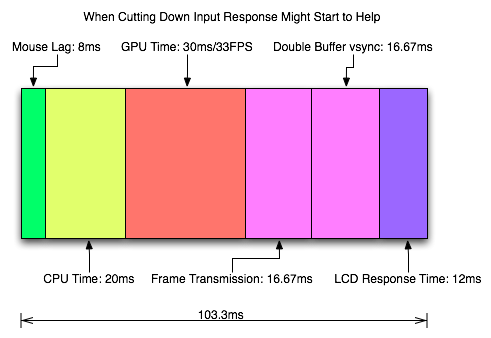

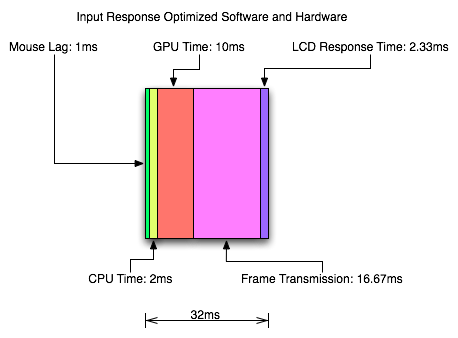

CPU, memory and GPU will impact the input lag between the mouse and the display. The GPU, as the main internal bottleneck in games, will likely have the largest single impact (higher framerate means less time between frames and less lag), but this is heavily dependent on game design. The basic recommendation is a modestly priced dual core CPU, inexpensive RAM, and a fast GPU. Faster CPUs and RAM could potentially benefit but will not likely provide a huge return on investment in this case.

For input lag reduction in the general case, we recommend disabling vsync. For NVIDIA card owners running OpenGL games, forcing triple buffering in the driver will provide a better visual experience with no tearing and will always start rendering the same frame that would start rendering with vsync disabled. Input latency would be longer only for the part of the frame after the point where we would otherwise see a tear, and then by less than a full frame.

Unfortunately, all other implementations that call themselves triple buffering are actually one frame flip queues at this point. One frame render ahead is fine at framerates lower than the monitor refresh, but if the framerate ever goes past refresh you will experience much more input lag than with vsync alone. For everyone without multiGPU solutions, we recommend setting flip queue or max pre-rendered frames to either 1 or 0. Set it to 1 if framerate is always less than monitor refresh and set it to 0 if framerate is always greater than or equal to monitor refresh. If it goes back and forth, only NVIDIA's OpenGL triple buffering will provide the best of both worlds without tearing and will further reduce input lag in high framerate situations.

Improperly handling vsync (enabling or disabling a 1 frame flip queue at the wrong time) can degrade performance by at least one additional whole frame. But with multiGPU options, we really don't have a choice. With more than one GPU in the system, you will want to leave maximum pre-rendered frames set to the default of 3 and allow the driver to handle everything. Input lag with multiGPU systems is something we will want to explore at a later time.
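The flip queue recommendations above can be condensed into a small decision helper. This is only a sketch of our own rules of thumb, not a driver API: the function name and parameters are hypothetical, and it returns None for the case where framerate crosses the refresh rate and no single setting fits.

```python
from typing import Optional


def recommended_prerendered_frames(min_fps: float,
                                   max_fps: float,
                                   refresh_hz: float,
                                   gpu_count: int = 1) -> Optional[int]:
    """Suggest a flip queue / max pre-rendered frames value.

    Returns None when the framerate moves back and forth across the refresh
    rate, where neither 0 nor 1 is right all of the time.
    """
    if gpu_count > 1:
        return 3      # multiGPU: leave the default and let the driver decide
    if max_fps < refresh_hz:
        return 1      # framerate always below refresh
    if min_fps >= refresh_hz:
        return 0      # framerate always at or above refresh
    return None       # mixed case: no single value is correct


print(recommended_prerendered_frames(40, 55, 60))               # always below 60Hz
print(recommended_prerendered_frames(60, 120, 60))              # always at or above 60Hz
print(recommended_prerendered_frames(45, 90, 60))               # crosses refresh
print(recommended_prerendered_frames(45, 90, 60, gpu_count=2))  # multiGPU
```

The None case is the one where triple buffering that is not a flip queue is the only option that behaves well on both sides of the refresh rate.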

You will want a monitor that doesn't do much (if any) processing, preferably one with a "game" mode. We recently took a look at a few monitors to get a feel for the difference in input processing. While we didn't test it in this article, adding another 16ms to 33ms to input lag is just not a good idea.

One of the largest benefits to games that don't inherently carry a lot of input lag is refresh rate. A real 120Hz refresh rate can significantly reduce input lag, especially in twitch shooters. While that impact would be less in games where the framerate can't keep up, the additional delay that can be incurred between the computer and the monitor will still be significantly reduced. Additionally, vsync (even in the worst case) is much cheaper on a high refresh rate monitor. Triple buffering (or even 1 frame flip queues with performance lower than refresh) and 120Hz monitors are a match made in heaven.
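The vsync point comes down to simple arithmetic. As a rough sketch, assume a finished frame that just misses a refresh has to wait up to one full refresh interval before it can be shown; the function name is ours for illustration.

```python
def worst_case_vsync_wait_ms(refresh_hz: float) -> float:
    """Longest a finished frame can wait for the next refresh, in milliseconds.

    Assumption: the frame completes just after a refresh and must wait for
    the following one, i.e. one full refresh interval.
    """
    return 1000.0 / refresh_hz


for hz in (60, 120):
    print(f"{hz}Hz: up to {worst_case_vsync_wait_ms(hz):.2f} ms waiting for refresh")
```

Doubling the refresh rate halves that worst-case wait, which is why vsync costs so much less on a 120Hz display.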

Final Words

What started out as a short article on the concept of input lag ended up touching on quite a few key issues in gaming. We got into a few of the concepts of game design and program flow in addition to looking at hardware impact. While we hadn't planned on it, picking up a camera that can do 1200 FPS allowed us to actually measure the input lag of a couple of real games.

There are quite a few good nuggets to take away. First, input lag is hugely dependent on the game. There will be games that optimize for reducing input lag and others that do not. In some games it is more important than in others. For games that incur huge amounts of input lag, there is only so much that can be done. Using the tips we provided will definitely help get people on the path to lower input lag.

Unfortunately, sometimes reducing input lag to its minimum requires spending money, especially on the display side of things. Just make sure to read reviews that look at display lag, as avoiding a display that adds an extra 16ms to 33ms of input lag is definitely a good start. Beyond that, a faster GPU is the next most important upgrade, and a mouse that can do at least 500 reports per second is a good idea.

85 Comments

psilencer - Tuesday, August 18, 2009 - link

First time poster, so be gentle!

For each of the cases you analyze the bandwidth and take the lag to be the inverse of the bandwidth. This is incorrect. Lag and bandwidth are not related as such. Consider a road with a constant speed limit. Lag would be related to the length of the road (the time it takes for some signal starting at A to reach its destination B). Bandwidth is related to number of lanes (how many signals you can send from A to B within some time). Although there is some relationship between the two, it is not the inverse.

With this in mind, everything analyzed by this article is incorrect.

Consider a mouse that has 500 reports/second. Taking the inverse gives 2ms, which is the average time between completed reports. However, you don't consider that multiple "reports" may be pipelined in the mouse. Say for example, your mouse has a camera, some simple processing logic to decipher the data from the camera, and then the usb interface. For simplicity, assume that these units process one and only one report at a time (so bandwidth and latency would have the inverse relationship within each unit). In that case, each section works at 500 reports/second, and would have a latency of 2ms. However the total latency of the mouse would be 6ms, since each report needs to go through each section.

This also applies to the CPU and GPU.

Sorry, if I'm completely wrong, just ignore this =P

siberx - Thursday, July 30, 2009 - link

Fantastic article - I smile each time AnandTech posts one of these groundbreaking articles that just cuts straight through the BS and gets to the truth behind issues that have been muddled in hearsay and rumours for years.

I am personally particularly sensitive to input lag, and with my current LCD even in a fast game like TF2 or UT I find the lag intolerable if vsync is enabled - I have to run with it disabled in just about any game demanding fast response.

My question, however, is the effect that multi-gpu solutions have on input lag. I have never seen something describing exactly how both ATI and nVidia's multi-gpu solutions affect lag, as well as how different multi-gpu rendering modes (AFR, SFR, etc...) affect lag. I would assume that using a multi-gpu solution would, in most cases incur at least an extra frame of delay to mix or move frames between cards, etc... but an actual analysis of this would be very useful. It may, in fact, be worthwhile to disable multi-gpu when running an older twitch game to improve latency...

Additionally, testing with a couple other LCDs to see how they compare latency-wise would be interesting - I get the feeling your Dell panel is a fair step faster than your standard-issue modern panel doing overdriving to reduce switching times...

race2 - Saturday, August 1, 2009 - link

When you say that all non-Nvidia driver Triple Buffering for OpenGL programs are simply one frame flip queues, do you mean that D3DOverrider's forced Triple Buffering is a one frame flip queue as well?

race2 - Saturday, August 1, 2009 - link

Sorry, first time posting here. Previous comment was not meant to be a reply.

arcsign - Sunday, July 26, 2009 - link

It's nice to know that the whole input lag issue is finally getting some attention. I've been trying to find ways to improve it, without buying new hardware, for a little while now, and came across some options that might be of interest for future articles. (I don't have access to much in terms of equipment to measure these things, so my testing hasn't been so much empirical as it has "well, that seems a bit better... maybe.")

-- The two that stick out in my mind as far as software options go are (at least for WinXP) the boot.ini options "/INTAFFINITY," and "/TIMERES= xxxxx." The former assigns all interrupts to the highest numbered core, and the latter changes the resolution of the Windows kernel timer.

-- It would also be interesting to see what sort of effects overclocking might have on various latencies, as I've noticed that Windows doesn't always agree with the BIOS/CPU-Z as to the processor's speed, and in cases where a game uses Windows Performance Counters to calculate time deltas for networking/inputs/etc, if there are any counters that depend on an accurate cpu speed, this could present a problem. (Although this isn't directly related to input lag, it is related to the interaction between the game and the player...)

-- AHCI multimedia timers versus TSC's (more of an issue in XP than more recent OS's, as I believe Vista and 7 both require the use of the AHCI timers) may also have a significant effect on gameplay.

Anyways, nice article, and keep up the good work.

William Gaatjes - Saturday, July 25, 2009 - link

Hello, you might find something interesting on the website of Avago.

Avago technologies manufactures optical mouse chips.

Another manufacturer is SGS thomson or st electronics.

Here is a link to avago chips.

http://www.avagotech.com/pages/en/navigation_inter...

You might find some information you seek there.

I noticed you were writing about 3 key numbers but you mention 4 on the page : "Reflexes and Input Generation".

William Gaatjes - Saturday, July 25, 2009 - link

And a very nice article i forgot to add.

camylarde - Tuesday, July 21, 2009 - link

Now all that remains is to incorporate a multiplayer fps game and dissect how network communication affects it, and how that knowledge can be used to clearly select wallhackers and aimbotters from the regular pack, just by watching a demo of them, and doing basic math counts of their reported network lag.

DerekWilson - Monday, July 20, 2009 - link

This is something we would love to do, and while it is on the table we may not have the time in the near term to get something like that up right now.

But trust me, we've been thinking of many cool ways to use high speed footage :-)

JimboMahoney - Monday, July 20, 2009 - link

I also found Fallout 3 extremely laggy until I edited the Fallout.ini file from this:

iPresentInterval=1

to this:

iPresentInterval=0

(Thanks to TweakGuides.com for this tip).

It seems that Fallout 3 has VSync enabled at all times, even if you disable it in the menu, unless you make this change. The game was pretty unpleasant to play before I did this (I never use VSync).