A Quick Overview of ESX

To start, we need to brush up on what a hypervisor actually looks like, and clarify its different components. The term "hypervisor" is at this point nicely marketed to encompass basically everything that allows us to run multiple virtual machines on a single physical platform. Of course, that's pretty vague on what exactly a hypervisor denotes. Johan wrote an excellent article about the inner workings of the hypervisor last year, but we'll quickly go over the basics to keep things clear.

Kernels and Monitors

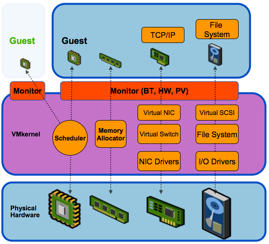

In VMware's ESX, the hypervisor is made up of two completely separate technologies that are both essential in keeping the platform running: the VMkernel and the Monitor. The Monitor provides the virtual interface to the virtual machines, displaying itself as a standard set of hardware components for the virtualized OS. This layer is responsible for the actual translation of the VM's calls for hardware (be it through the use of hardware virtualization, binary translation, or paravirtualization), and sends them through to the actual kernel, namely VMware's own kernel.

This monitor is the same across VMware's bare-metal (ESX) and hosted solutions (think VMware Workstation and Server). The difference in ESX is in its VMkernel, the kernel that acts as the miniature OS that supports all the others. This is the part that interacts with the actual hardware through various device drivers and takes care of things like memory management and resource scheduling.

This short explanation should make it clearer what kind of workloads these two components need to handle. Limitations of the VMkernel are mostly rooted in its robustness and the amount of raw throughput it can handle efficiently through its scheduling mechanics, i.e. more or less the same limitations any OS would suffer in some way. Limitations of the Monitor, on the other hand, are mostly a matter of using the correct configuration for your workload, and depend largely on the features of the hardware you allow the Monitor to work with.
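To make the division of labor concrete, here is a minimal toy model of the two components in Python. It is only a sketch of the relationship described above, not VMware's implementation: the class names, the `handle_guest_call` and `submit` methods, the VM names, and the choice of one `Monitor` object per VM are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum


class TranslationMode(Enum):
    """The three techniques the article lists for handling a VM's hardware calls."""
    HARDWARE_VIRTUALIZATION = "hardware virtualization"
    BINARY_TRANSLATION = "binary translation"
    PARAVIRTUALIZATION = "paravirtualization"


@dataclass
class VMkernel:
    """Toy stand-in for the kernel: owns the 'hardware' and logs what it services."""
    serviced: list = field(default_factory=list)

    def submit(self, vm_name: str, device: str, operation: str) -> str:
        # A real kernel would schedule the request and call a device driver;
        # this hypothetical version only records it.
        record = f"{vm_name}:{device}:{operation}"
        self.serviced.append(record)
        return record


@dataclass
class Monitor:
    """Toy stand-in for the monitor that presents virtual hardware to a guest."""
    vm_name: str
    mode: TranslationMode
    kernel: VMkernel

    def handle_guest_call(self, device: str, operation: str) -> str:
        # "Translate" the guest's call (here: just tag it with the mode in use)
        # and pass it on to the shared kernel.
        translated = f"{operation}[{self.mode.value}]"
        return self.kernel.submit(self.vm_name, device, translated)


# Two guests with different monitor configurations share one kernel.
kernel = VMkernel()
web = Monitor("web-vm", TranslationMode.BINARY_TRANSLATION, kernel)
db = Monitor("db-vm", TranslationMode.HARDWARE_VIRTUALIZATION, kernel)
web.handle_guest_call("nic", "send")
db.handle_guest_call("disk", "write")
print(kernel.serviced)
```

The point of the sketch is the shape of the split: the translation mode is a per-guest configuration choice that lives in the monitor, while everything that touches the hardware funnels through the single shared kernel, which is where throughput and scheduling limits would show up.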

10 Comments


najames - Tuesday, June 23, 2009 - link

This is perfect timing for stuff going on at work. I'd like to see part 2 of this article.

- Wednesday, June 17, 2009 - link

I am very curious how VMware affects timings during the logging of streaming data. Is there a chance that some light could be shed on this topic? I would like to use VMware to create a clean platform in which to collect data. I am, however, very skeptical about how this is going to change the processing of the data (especially in regards to timings).

Thanks for any help in advance.

KMaCjapan - Wednesday, June 17, 2009 - link

Hello. First off I wanted to say I enjoyed this write up. For those out there looking for further information on this subject, VMware recently released approximately 30 sessions from VMworld 2008 and VMworld Europe 2009 to the public free of charge; you just need to sign up for an account to access the information. The following website lists all of the available sessions:

http://vsphere-land.com/news/select-vmworld-sessio...

and the next site is the direct link to VMworld 2008 ESX Server Best Practices and Performance. It is approximately a 1 hour session.

http://www.vmworld.com/docs/DOC-2380

Enjoy.

Cheers

K-MaC

yknott - Tuesday, June 16, 2009 - link

Great writeup Liz. There's one more major setup issue that I've run into numerous times during my ESX installations. It has to do with IRQ sharing causing numerous interrupts on CPU0. Basically, ESX handles all interrupts (network, storage etc) on CPU0 instead of spreading them out to all CPUs. If there is IRQ sharing, this can peg CPU0 and cause major performance issues. I've seen 20% performance degradation due to this issue. For me, the way to solve this has been to disable the usb-uhci driver in the Console OS.

You can find out more about this issue here: http://www.tuxyturvy.com/blog/index.php?/archives/...

and http://kb.vmware.com/selfservice/microsites/search...

This may not be an issue on "homebuilt" servers, but it's definitely cropped up for me on all HP servers and a number of IBM x series servers as well.

LizVD - Wednesday, June 17, 2009 - link

Thanks for that tip, yknott, I'll look into including that in the article after researching it a bit more!

badnews - Tuesday, June 16, 2009 - link

Nice article, but can we get some open-source love too? :-) For instance, I would love to see an article that compares the performance of say ESX vs open-source technologies like Xen and KVM! Also, how about para-virtualised guests? If you are targeting performance (as I think most AT readers are) I would be interested in what sort of platforms are best placed to handle them.

And how about some I/O comparisons? Alright the CPU makes a difference, but how about RAID-10 SATA/SAS vs RAID-1 SSD on multiple VMs?

LizVD - Wednesday, June 17, 2009 - link

We are actually working on a completely Open-Source version of our vApus Mark bench, and to give it a proper testdrive, we're using it to compare OpenVZ and Xen performance, which my next article will be about (after part 2 of this one comes out). I realize we've been "neglecting" the open source side of the story a bit, so that is the first thing I am looking into now. Hopefully I can include KVM in that equation as well.

Thanks a lot for your feedback!

Gasaraki88 - Tuesday, June 16, 2009 - link

Thanks for this article. As an ESX admin, this is very informative.

mlambert - Tuesday, June 16, 2009 - link

"We must note here that we've found a frame size of 4000 to be optimal for iSCSI, because this allows the blocks to be sent through without being spread over separate frames."

Can you post testing & results for this? Also would be interesting to know if 9000 was optimal for NFS datastores (as NFS is where most smart shops are using anyways...).

Lord 666 - Tuesday, June 16, 2009 - link

Excellent write up.