Overclocking Extravaganza: Radeon HD 4890 To The Max

by Derek Wilson on April 29, 2009 12:01 AM EST

Posted in: GPUs

Cranking GDDR5 All the Way Up

The first stop on our overclocking tour is the memory subsystem. We will be increasing the memory clock frequency, which reduces latency slightly and increases bandwidth significantly. The stock clock speed is 975MHz with 1ns devices (which means they are rated at 1GHz). AMD mentioned that signaling and interference (caused by the graphics hardware) are bigger problems with 1GHz GDDR5 than actually running the memory at that speed, which is why they went with the 25MHz lower clock speed.

Even with the 975MHz default clock speed, we already have a data rate of 3.9GHz, which is pretty intense. In playing with ATI's built-in overclocking tools (Overdrive), we were able to achieve stable performance at the maximum clock speed the driver allowed: 1200MHz. Doing the math gives us a massive 4.8GHz data rate. This means, with a 256-bit wide bus, we're talking about almost 154 GB/s of bandwidth. This is more memory bandwidth than the NVIDIA GeForce GTX 280 and just a little less than the GTX 285 (which both use GDDR3 but on 512-bit busses).
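For readers who want to check the math, here is a minimal sketch of the bandwidth calculation. The function name is our own, and the factor of four transfers per memory clock is inferred from the 975MHz to 3.9GHz relationship quoted above.

```python
def gddr5_bandwidth_gb_s(memory_clock_mhz: float, bus_width_bits: int) -> float:
    """Peak theoretical memory bandwidth in GB/s (1 GB = 10**9 bytes)."""
    # Assumption taken from the article's numbers: the effective data rate
    # is four times the memory clock (975MHz -> 3.9GHz).
    transfers_per_clock = 4
    data_rate_mhz = memory_clock_mhz * transfers_per_clock
    # Each transfer moves one bus width of data; convert bits to bytes.
    bytes_per_transfer = bus_width_bits / 8
    return data_rate_mhz * 1e6 * bytes_per_transfer / 1e9


stock = gddr5_bandwidth_gb_s(975, 256)         # stock memory clock
overclocked = gddr5_bandwidth_gb_s(1200, 256)  # Overdrive maximum

print(f"stock: {stock:.1f} GB/s, overclocked: {overclocked:.1f} GB/s")
```

These are peak theoretical figures; real-world throughput will be lower.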

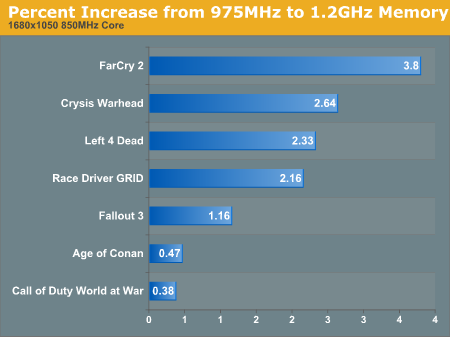

So armed with 1.2GHz GDDR5, what can the 850MHz core of the Radeon HD 4890 accomplish now? Let's take a look at the percent increase in performance per game when increasing just the memory clock.

[Chart: percent performance increase per game from the memory overclock, at 1680x1050, 1920x1200, and 2560x1600]

Apparently not that much more, even at 2560x1600.

Because our tests are not 100% deterministic, there is some variability in our results. Generally, this is very low, though it does vary from game to game and benchmark to benchmark. We have a hard time calling anything less than a 3% difference significant, as it could be due to fluctuations in the tests. These numbers may indicate some positive change in performance, but not one that would matter. At 2560x1600, only Call of Duty showed a performance improvement that mattered. And this is from a 225MHz overclock (just about a 23.1% increase in clock speed), which is pretty large.
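As a rough sketch of how we read these results, the snippet below computes a percent increase and applies the 3% cutoff described above. The function names and the frame rates in the example are hypothetical and are not measured results.

```python
NOISE_THRESHOLD_PCT = 3.0  # differences below this could be run-to-run variation


def percent_increase(baseline: float, new: float) -> float:
    """Percent change from baseline to new."""
    return (new - baseline) / baseline * 100.0


def is_significant(baseline_fps: float, overclocked_fps: float,
                   threshold_pct: float = NOISE_THRESHOLD_PCT) -> bool:
    """True when the change is at least as large as the noise threshold."""
    return abs(percent_increase(baseline_fps, overclocked_fps)) >= threshold_pct


# Memory clock increase from stock to the Overdrive maximum.
clock_gain = percent_increase(975, 1200)
print(f"memory clock increase: {clock_gain:.1f}%")

# Hypothetical frame rates, for illustration only.
print(is_significant(60.0, 61.0))  # small change, inside the noise
print(is_significant(60.0, 63.0))  # larger change, outside the noise
```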

There really isn't a huge need to delve into the raw numbers here, as they are just not that different. We'll hold off on that until it matters. Next up, we're going to look at increasing only the core clock speed.

61 Comments


PC Reviewer - Monday, June 22, 2009 - link

I can vouch for this card...

http://pcreviewer.org/new-radeon-hd-4890-video-car...

I prefer the XFX.. but either way, any single one of those cards is outstanding...

fausto412 - Tuesday, May 5, 2009 - link

All this talk of tuning video cards for max flexibility and performance brings to mind a great idea. Why not have a write up on all the neat things you can do with Riva Tuner. I only know how to do 2 things: setup overclocking and fan profiles, but I know there is more neat stuff in there. And can you undervolt an nvidia card with software? How?

ValiumMm - Tuesday, May 5, 2009 - link

Sapphire and Powercolor have both announced a 1GHz GPU for the 4890. If this is what you got, I just thought you guys would have got higher since you're seeing the max OC

gold333 - Monday, May 4, 2009 - link

http://www.hexus.net/content/item.php?item=18232&a...

SiliconDoc - Saturday, June 6, 2009 - link

Gee that's funny, the GTX275 wins against the 4890 in every single benchmark nearly - or every one completely.

http://www.hexus.net/content/item.php?item=18232&a...

--

Gee imagine that - I guess Derek wasn't red roostering the testing with a special manufacturer edition sent exclusively to him from ati - and a pathetic 703 core nvidia.

--

Wow.

It's amazing what passes HERE for "a performance comparison".

gold333 - Monday, May 4, 2009 - link

Overclocking Review: HD 4890

http://www.guru3d.com/article/overclocking-the-rad...

Overclocking Review: GTX 275

http://www.guru3d.com/article/geforce-gtx-275-over...

Both are on the identical Core i7 system.

random2 - Monday, May 4, 2009 - link

Great article Derek

Thanks a ton for the not small effort made to put this together.

What I found very interesting, (besides the overclockability of the 4890) was just how close the 4890 is to the 285 in resolutions less than 30" monitor size. Close enough to be within the realm of "margin of error".

This is all good to know as I have a 24" monitor I cannot see giving up for a few years yet:-)

Thanks again. Oh, by the way....Those who can do...Those who cannot....criticize.

rgallant - Thursday, April 30, 2009 - link

-in every game ? when did this happen.

frozentundra123456 - Thursday, April 30, 2009 - link

Just 2 topics:

1. How would the 4890 compare in price and performance to the 4870x2 or 4850x2? Would these cards give similar performance at a lower price?

2. When is DX11 coming, how will it be implemented, and will it be any more efficient hardware wise than DX10? Even now, most games take a serious performance hit with DX10. Will DX11 require even better hardware?? If so I will either have to do a serious upgrade or run 2 generation old DX9. I have played Company of Heroes and World in Conflict, and I ran both in DX9 mode. The games looked fine and performance was so much better. DX10 to me has been a big disappointment in that it is so resource intensive without much visual improvement.

Captain828 - Thursday, April 30, 2009 - link

I have to say, this is probably the most worked out OC article I've ever seen... and I've seen a lot of them.

Now I understand OC-ing the 4890 to show what a terrific overclocker it is, but it's just not fair to do this if you don't OC the competition's GPU in the same price range (the GTX 275).

Also, I failed to see you mention dB ratings in the article. No one wants a goddamn leaf blower in their PC for usual gaming purposes.

Again, I know it took a lot of time and effort to get this done, but I would have gladly waited to see a GTX 275 OC comparison.

Regards,

Captain828