The Best Server CPUs part 2: the Intel "Nehalem" Xeon X5570

by Johan De Gelas on March 30, 2009 3:00 PM EST - Posted in IT Computing

The buyer's market approach: our newest testing methods

Astute readers have probably understood what we'll change in this newest server CPU evaluation, but we will let one of the professionals among our readers provide his excellent feedback on the question of improving our evaluations at it.anandtech.com:

"Increase your time horizon. Knowing the performance of the latest and greatest may be important, but most shops are sitting on stuff that's 2-3 years old. An important data point is how the new compares to the old. (Or to answer management's question: what does the additional money get us vs. what we have now? Why should we spend money to upgrade?)"

To help answer this question, we will include a three-year-old system in this review: a dual Dempsey system, which was introduced in the spring of 2006. The Dempsey or Xeon 5080 server might even be "too young", but as it is based on the "Blackford" chipset, it allows us to use the same FB-DIMMs that are found in new Harpertown (Xeon 54xx) systems. That is important, as most of our tests require quite large amounts of memory.

A 3.73GHz Xeon 5080 Dempsey performed roughly equal to a 2.3GHz Xeon 51xx Woodcrest and a 2.6GHz dual-core Opteron in SAP and TPC-C. That should give you a few points of comparison, even though none of them are very meaningful. After all, we are using this old reference system to find out if the newest CPU is 2, 5, or 10 times faster; a few percent more or less does not matter in that case.
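The "2, 5, or 10 times faster" question comes down to a simple ratio against the reference system. Here is a minimal Python sketch of that arithmetic. The scores are placeholders chosen for illustration, not measurements from this review, and the function name is our own.

```python
def speedup(new_score: float, reference_score: float) -> float:
    """Return how many times faster the new system is than the reference."""
    if reference_score <= 0:
        raise ValueError("reference score must be positive")
    return new_score / reference_score


# Placeholder throughput numbers, NOT results from this review.
reference = 100.0     # hypothetical score of the old reference system
candidate_a = 500.0   # hypothetical score of a new system
candidate_b = 515.0   # the same new system, measured 3% higher

ratio_a = speedup(candidate_a, reference)
ratio_b = speedup(candidate_b, reference)

# Both ratios land at "roughly five times faster": a few percent of
# difference in the new score barely moves the answer.
print(f"A: {ratio_a:.2f}x, B: {ratio_b:.2f}x")
```

A 3% difference in the new system's score shifts the ratio only slightly, which is why small differences between the old reference points hardly matter.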

In our Shanghai review, we radically changed our benchmark methodology. Instead of throwing every software box we happen to have on the shelf and know very well at our servers, we decided that the "buyers" should dictate our benchmark mix. Basically, every software type that is really important should have at least one and preferably two representatives in the benchmark suite. In the table below, you will find an overview of the software types servers are bought for and the benchmarks you can find in this review. If you want more detail about each of these software packages, please refer to this page.

Benchmark Overview

| Server Software Market | Importance | Benchmarks used |
|---|---|---|
| ERP, OLTP | 10-14% | SAP SD 2-tier (industry standard benchmark); Oracle Charbench (freely available benchmark); Dell DVD Store (open source benchmark tool) |
| Reporting, OLAP | 10-17% | MS SQL Server (real world + vApus) |
| Collaborative | 14-18% | MS Exchange LoadGen (Microsoft's own load generator for MS Exchange) |
| Software Dev. | 7% | Not yet |
| e-mail, DC, file/print | 32-37% | MS Exchange LoadGen |
| Web | 10-14% | MCS eFMS (real world + vApus) |
| HPC | 4-6% | LS-DYNA, LINPACK (industry standard) |
| Other | 2%? | 3DSMax (our own bench) |
| Virtualization | 33-50% | VMmark (industry standard); vApus test (in a later review) |

The combination of an older reference system and real world benchmarks that closely match the software that servers are bought for should offer you a new and better way of comparing server CPUs. We complement our own benchmarks with the more reliable industry standard benchmarks (SAP, VMmark) to reach this goal.

A look inside the lab

We had two weeks to test Nehalem, and tests like the Exchange tests and the OLTP tests take more than half a day to set up and perform, not to mention that it sometimes takes months to master them. Understanding how to properly configure a mail server like Exchange is completely different from configuring a database server. Our testing now clearly goes beyond what one person can be expected to know. I would like to thank my colleagues at the Sizing Servers Lab for helping to perform all this complicated testing: Tijl Deneut, Liz Van Dijk, Thomas Hofkens, Joeri Solie, and Hannes Fostie. The Sizing Servers Lab is part of Howest, which is part of Ghent University in Belgium. The most popular parts of our research are published here at it.anandtech.com.

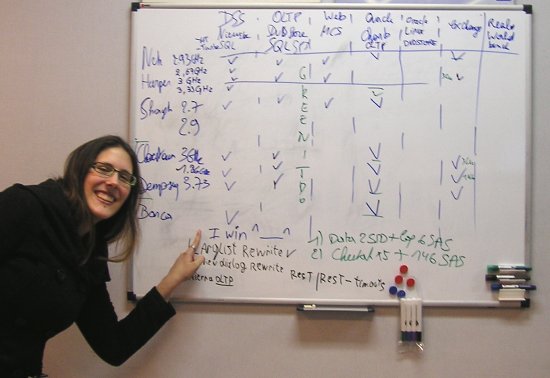

Liz proudly showing that she was first to get the MS SQL Server testing done. Notice the missing parts: the Shanghai at 2.9GHz (still in the air) and the Linux Oracle OLTP test that we are still trying to get right.

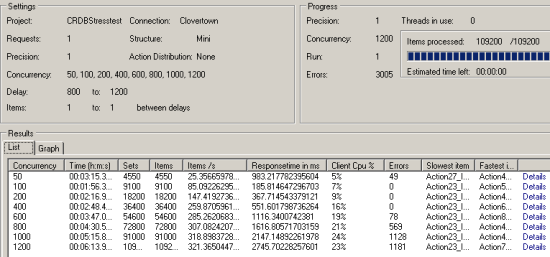

The SQL Server and website testing was performed with vApus, our "Virtual Application Unique Stress testing" tool. This tool took our team, led by Dieter Vandroemme, two years of research and programming, but it was well worth it. It allows us to stress test real world databases, websites, and other applications with the real logs that those applications produce. vApus does not simply replay the logs: it simulates user behavior by intelligently choosing the actions that real users would perform, using different statistical distributions.
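The idea of choosing actions statistically rather than replaying a log verbatim can be sketched in a few lines of Python. This is not vApus code, and the article does not describe its internals. The action names, the frequency-weighted choice, and the exponential think time are all assumptions made for illustration.

```python
import random
from collections import Counter


def build_action_weights(log_entries):
    """Count how often each action appears in a recorded log."""
    return Counter(log_entries)


def simulate_user(action_weights, n_actions, mean_think_s, rng):
    """Return a list of (action, think_time_seconds) pairs.

    Actions are drawn with probability proportional to their frequency
    in the log; think times are drawn from an exponential distribution
    (an assumption, not a documented vApus behavior).
    """
    actions = list(action_weights.keys())
    weights = list(action_weights.values())
    chosen = rng.choices(actions, weights=weights, k=n_actions)
    return [(a, rng.expovariate(1.0 / mean_think_s)) for a in chosen]


# Hypothetical log with made-up action names.
sample_log = ["search", "view_item", "search", "checkout", "view_item", "search"]
weights = build_action_weights(sample_log)

rng = random.Random(42)  # fixed seed so a run is repeatable
session = simulate_user(weights, n_actions=5, mean_think_s=2.0, rng=rng)
for action, think in session:
    print(f"{action:10s} then wait {think:.2f}s")
```

Each simulated user ends up with a different sequence, but across many users the action mix approaches that of the original log.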


You can see vApus in action in the picture above. Note that the errors are time-outs. For each number of concurrent users, we see the number of responses and the average response time. It is possible to dig deeper and examine the response time of each individual action. An action is one or more queries (for databases) or a number of URLs, for example those that are necessary to open one webpage.
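The per-concurrency summary described above (responses, time-outs, average response time) amounts to a simple grouping step. The following Python sketch shows one way to compute it. The sample format and the numbers are hypothetical and do not come from vApus output.

```python
from collections import defaultdict
from statistics import mean


def summarize(samples):
    """Summarize samples per concurrency level.

    samples: iterable of (concurrent_users, response_time_ms), where
    response_time_ms is None when the request timed out.
    """
    grouped = defaultdict(list)
    for users, response_ms in samples:
        grouped[users].append(response_ms)

    summary = {}
    for users in sorted(grouped):
        times = grouped[users]
        answered = [t for t in times if t is not None]
        summary[users] = {
            "responses": len(answered),
            "timeouts": len(times) - len(answered),
            "avg_ms": mean(answered) if answered else None,
        }
    return summary


# Made-up samples for illustration only.
samples = [(10, 120.0), (10, 80.0), (50, 300.0), (50, None)]
result = summarize(samples)
for users, row in result.items():
    print(users, row)
```

Time-outs are counted separately and left out of the average, so a run with many errors does not look artificially fast or slow.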

The reason we feel it is important to use real world applications from lesser-known companies is that these kinds of benchmarks are impossible to optimize for. Manufacturers sometimes include special optimizations in their JVMs, compilers, and other developer tools with the sole purpose of gaining a few points in well-known benchmarks. Our real world benchmarks allow us to perform a sanity check.

44 Comments


rkchary - Tuesday, June 16, 2009 - link

We've a customer who is interested in upgrading to Nehalem. He's running on Windows with Oracle database for SAP Enterprise Portals.

Could you kindly let us know your recommendations please?

The approximate concurrent users would be around 3000 Portal users.

Keenly looking forward for your response and if you could state any instances of Nehalem installed in SAP environment for production usage, that would be a great deal of help.

Regards,

Chary

Adun - Thursday, April 9, 2009 - link

Hello,

I understand the PHP not-enough-threads explanation as to why Dual X5570 doesn't scale up.

But, can anyone please explain why when you add another AMD Opteron 2384 the increase is from 42.9 to 63.9, while when you add another Xeon X5570 there isn't such an increase?

Thank you for the article,

Adun.

stimudent - Thursday, April 2, 2009 - link

Was it really too much effort to clean off the processor before posting a picture of it? Or were they trying to show that it was used, tested?

LizVD - Friday, April 3, 2009 - link

Would you perhaps like us to draw a smiley face on it as well? ;-)

GazzaF - Wednesday, April 1, 2009 - link

Well done on an excellent review using as many real-world tests as possible. The VMWare test is a real eye opener and shows how the 55xx can match double the number of CPUs from the last generation of Xeons *AND* crucially save $$$$ on licensing from Windows and MS SQL and other per-socket licensed software, plus the power saving which is again a financial saving if you hire rack space in a datacentre.

I eagerly await your own in-house VM tests. Please consider also testing using Windows 2008 Hyper-V which I think doesn't have the 55xx optimisations that the latest release of VMWare has (and might not have until R2?).

Thanks for the time you put in to running the endless tests. The results make a brilliant business case for anyone wanting to upgrade their servers. You must have had the chips a good week before Intel officially launched them. :-) I do feel sorry for AMD though. I'm sure they have plenty of motivation to come back with a vengeance like they did a few years ago.

JohanAnandtech - Thursday, April 2, 2009 - link

Thanks! Good to hear from another professional. I believe the current Hyper Beta R2 already has some form of support for EPT.

Our virtualization testing is well under way. I'll give an update soon on our blog page.

Lifted - Wednesday, April 1, 2009 - link

You mention octal servers from Sun and HP for VM's, but does anybody really use these systems for VM's? I can't imagine why anybody would, since you are paying a serious premium for 8 sockets vs. 2 x 4 socket servers, or even 4 x 2 socket servers. Then the redundancy options are much lower when running only a few 8 socket servers vs many 2 or 4 socket servers when utilizing v-motion, and the expansion options are obviously far less w/ NIC's and HBA's. From what I've seen, most 8 socket systems are for DB's.

Veteran - Wednesday, April 1, 2009 - link

What i mentioned after reading the review is there are very few benches on benchmarks a little bit favored by AMD.

For example, only 1 3DSmax test (so unusefull) at least 2 are needed

Only 1 virtualization benchmark, which is really a shame....

Virtualization is becoming so important and you guys only throw in one test?

Besides that, the review feels a bit biased towards intel, but i will check some other reviews of the xeon 5570

duploxxx - Wednesday, April 1, 2009 - link

Virtualization benchmark come from the official Vmmark scores.

However there is something real strange going on in the results...

| Vendor | System | Hypervisor | VMmark version | Score | Sockets | Total cores | Total threads | Date |
|---|---|---|---|---|---|---|---|---|
| HP | HP ProLiant DL370 G6 | VMware ESX Build #148783 | VMmark v1.1 | 23.96 @ 16 tiles | 2 | 8 | 16 | 03/30/09 |
| Dell | Dell PowerEdge R710 | VMware ESX Build #150817 | VMmark v1.1 | 23.55 @ 16 tiles | 2 | 8 | 16 | 03/30/09 |
| Inspur | Inspur NF5280 | VMware ESX Build #148592 | VMmark v1.1 | 23.45 @ 17 tiles | 2 | 8 | 16 | 03/30/09 |
| Intel | Intel Supermicro 6026-NTR+ | VMware ESX v3.5.0 Update 4 | VMmark v1.1 | 14.22 @ 10 tiles | 2 | 8 | 16 | 03/30/09 |

So let's see: all the prebuilds of ESX 3.5 Update 4 get a really high score of 16 tiles, almost as much as a 4S Shanghai, while the VMware performance team themselves stated that we should never see the HT core as a real CPU in VMware (even with the new code for HT). Yet the benchmark shows a high performance increase, and no, not as AnandTech is stating, due to the more available memory and its bandwidth; those VMmarks are not memory starved. Now look at the official Intel benchmark with ESX Update 4: it provides 10 tiles and a healthy increase, which from a technical point of view seems much more realistic. All the other marketing stuff like switching time etc. is all nice, but then again it is in the same line as the current Shanghai.

JohanAnandtech - Wednesday, April 1, 2009 - link

What kind of tests are you looking for? The techreport guys have a lot of HPC tests, we are focusing on the business apps.

"very few benches on benchmarks a little bit favored by AMD."

That is a really weird statement. First of all, what is a test favored by AMD?

Secondly, this new kind of testing with OLTP/OLAP tests was introduced in the Shanghai review. And it really showed IMHO that there was a completely wrong perception about Harpertown vs Shanghai, because Shanghai won in the tests that mattered the most to the market, while many tests (including Intel's) were emphasizing purely CPU intensive stuff like Blackscholes, rendering and HPC tests. That is a very small percentage of the market, and it created the impression that Intel was on average faster, which was absolutely not the case.

"Only 1 virtualization benchmark, which is really a shame..."

Repeat that again in a few weeks :-). We have just successfully concluded our testing on Nehalem.

Personally I am a bit shocked about the "not enough tests" :-). Because any professional knows how hard these OLTP/OLAP tests are to set up and how much time they take. But they might not appeal to the enthusiast, I am not sure.