Affordable storage for the SME, part one

by Johan De Gelas on November 7, 2007 4:00 AM EST. Posted in IT Computing

A different low-cost approach

In a first attempt to find affordable storage solutions, we gathered two interesting storage systems in our lab. The two systems are not really competitors but representatives of two different approaches to delivering high-performance, centralized-but-affordable storage.

The Promise VTRAK E310f on top, the Intel SSR212MC2 on the bottom

The first option is the Promise VTRAK E310f, which is a Fibre Channel based storage rack. Promise's roots are in low-cost ATA RAID controllers. Recently Promise started to cater to the low-end and midrange enterprise storage market with a strong focus on keeping those racks affordable. The VTRAK E310f should thus give us a good idea of whether it is possible to build a high-performance but affordable solution with FC SAN building blocks.

The second system is the Intel SSR212MC2, which is an industry standard server optimized for storage. The reseller can turn it into anything he likes: a NAS, DAS, an iSCSI SAN, or an FC SAN. In this article, we will use the SSR212MC2 as an iSCSI SAN and (SAS) DAS. This will give us an idea of the advantages and disadvantages of an iSCSI device built from industry standard parts compared to an FC appliance.

One of the big advantages that both the Intel and the Promise approach offer is that you can use industry standard hard disks and no one requires you to buy disks from the SAN manufacturer. While it is normal that a manufacturer tries to avoid users plugging unreliable hardware into their systems, this argument doesn't hold water when it comes to hard disks. After all, most OEMs also buy from Seagate, Fujitsu, and others. The big OEMs will force you to use their disks in two ways: either the controller will check the vendor of the disk ROM, or you will get "dummy trays". These trays don't allow you to add hard disks; the "real" hot-swappable trays come with the hard disks you order.

We decided to see how big the difference is between a "normal" hard disk and a "storage vendor hard disk". What you find below are the prices we could find for the end-user (second to last column) and the price range we could find for ordering extra disks when buying a storage system from Dell/EMC, HP, and IBM.

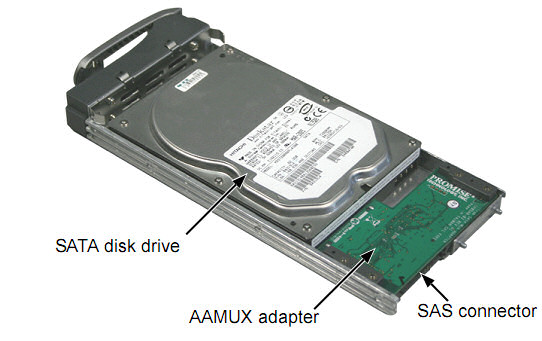

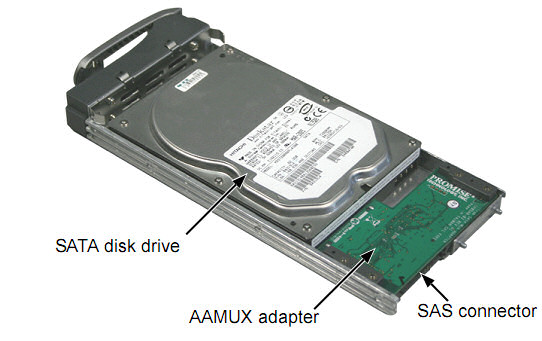

One reason why the "proprietary" SATA drives are a lot more expensive is that they usually include an Active-Active Multiplexer (AAMux). AAMux technology enables single-ported SATA drives to connect like native dual-ported SAS drives for use in enterprise storage systems using SAS expanders. This is necessary if you want to mix SATA drives and SAS drives in the same enclosure. With an AAMux, two hosts can access a single SATA storage device independently, each through its own SATA interface. However, it is very unlikely that the AAMux device is enough to justify a $400 premium.

Granted, the premiums that vendors charge for "certified" disks used to be a lot bigger. Still, if you need high capacity courtesy of numerous hard disks, these premiums can rapidly add up to a significant amount of money. Let's take a closer look at pricing on a few options.

Hard Drive Price Comparison

| Hard drive speed & interface | Capacity | Retail pricing (US) | Storage vendor pricing (US) |
|---|---|---|---|
| 15000 rpm SAS | 147 GB | $250-$300 | $370-$550 |
| 10000 rpm SAS | 300 GB | $200-$300 | $600-$850 |
| 10000 rpm FC | 300 GB | $450-$500 | $600-$950 |
| 7200 rpm SATA (Nearline) | 500 GB | $150-$200 | $500-$800 |
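
To get a feel for how quickly these premiums add up, the price ranges in the table above can be turned into a per-enclosure total. The following is only a rough sketch: the 12-disk count is an assumption chosen for illustration, not a figure from the price comparison, and the function names are made up for this example. The smallest premium pairs the cheapest vendor disk with the most expensive retail disk; the largest premium does the opposite.

```python
# Price ranges in US dollars, copied from the table above: (low, high).
PRICES = {
    "15000 rpm SAS, 147 GB": {"retail": (250, 300), "vendor": (370, 550)},
    "10000 rpm SAS, 300 GB": {"retail": (200, 300), "vendor": (600, 850)},
    "10000 rpm FC, 300 GB": {"retail": (450, 500), "vendor": (600, 950)},
    "7200 rpm SATA, 500 GB": {"retail": (150, 200), "vendor": (500, 800)},
}

DISK_COUNT = 12  # assumption: one fully populated 12-bay enclosure


def premium_range(retail, vendor):
    """Return the (smallest, largest) per-disk premium implied by two price ranges."""
    smallest = vendor[0] - retail[1]  # cheapest vendor disk vs. priciest retail disk
    largest = vendor[1] - retail[0]   # priciest vendor disk vs. cheapest retail disk
    return smallest, largest


def enclosure_premiums(prices=PRICES, disk_count=DISK_COUNT):
    """Map each drive type to its (smallest, largest) premium for disk_count disks."""
    return {
        drive: tuple(disk_count * p for p in premium_range(r["retail"], r["vendor"]))
        for drive, r in prices.items()
    }


if __name__ == "__main__":
    for drive, (low, high) in enclosure_premiums().items():
        print(f"{drive}: ${low:,} to ${high:,} extra for {DISK_COUNT} disks")
```

Under the 12-disk assumption, the nearline SATA drives alone would carry a premium of somewhere between $3,600 and $7,800 per enclosure.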

21 Comments

Anton Kolomyeytsev - Friday, November 16, 2007 - link

Guys I really appreciate you throwing away StarWind! W/o even letting people know what configuration did you use, did you enable caching, did you use flat image files, did you map whole disk rather than partition, what initiator did you use (StarPort or MS iSCSI), did you apply recommended TCP stack settings etc. Probably it's our problem as we've managed to release the stuff people cannot properly configure but why did not you contact us telling you have issues so we could help you to sort them out?

With the WinTarget R.I.P. (and MS selling its successor thru the OEMs only), StarWind thrown away and SANmelody and IPStor not even mentioned (and they are key players!) I think your review is pretty useless... Most of the people are looking for software solutions when you're talking about "affordable SAN". Do you plan to have second round?

Thanks once again and keep doing great job! :)

Anton Kolomyeytsev

CEO, Rocket Division Software

Johnniewalker - Sunday, November 11, 2007 - link

If you get a chance, it would be great to see what kind of performance you get out of an iscsi hba, like the one from qlogic.

When it gets down to it, the DAS numbers are great for a baseline, but what if you have 4+ servers running those io tests? That's what shared storage is for anyhow. Then compare the aggregate io vs DAS numbers?

For example, can 4 servers hit 25MB/s each in the SQLio random read 8kb test for a total of 100MB/s? How much is cpu utilization reduced with one or more iscsi hba in each server vs the software drivers? Where/how does the number of spindles move these numbers? At what point does the number of disks overwhelm one iscsi hba, two iscsi hba's, one FC hba, two FC hbas, and one or two scsi controllers?

IMHO iscsi is the future. Most switches are cheap enough that you can easily build a separate dedicated iscsi network. You'd be doing that if you went with fiber channel anyhow, but at a much higher expense (and additional learning curve) if you don't already have it, right?

Then all we need is someone who has some really nice gui to manage the system - a nice purdy web interface that runs on a virtual machine somewhere, that shows with one glance the health, performance, and utilization of your system(s).

System(s) have Zero faults.

Volume(s) are at 30.0 Terabytes out of 40.00 (75%)

CPU utilization is averaging 32% over the last 15 minutes.

Memory utilization is averaging 85% over the last 15 minutes.

IOs peaked at 10,000 (50%) and average 5000 (25%) over the last 15 minutes.

Pinch me!

-johhniewalker

afan - Friday, November 9, 2007 - link

You can get one of the recently-released 10Gbps PCI-E TCP/IP cards for <$800, and they support iSCSI.

here's one example:

http://www.intel.com/network/connectivity/products...

The chip might be used by Myricom and others, (I'm not sure), and there's a linux and a bsd driver - a nice selling point.

10gb ethernet is what should really change things.

They look amazing on paper -- I'd love to see them tested:

http://www.intel.com/network/connectivity/products...

JohanAnandtech - Saturday, November 10, 2007 - link

The problem is that currently you only have two choices: expensive CX4 copper, which is short range (<15 m) and not very flexible (it is like InfiniBand cables), or optical fiber cabling. Both HBAs and cables are rather expensive and require rather expensive switches (still less than FC, but still). So the price gap with FC is a lot smaller. Of course you have a bit more bandwidth (but I fear you won't get much more than 5 Gbit; that has to be tested, of course), and you do not need to learn FC.

Personally I would like to wait for 10 Gbit over UTP Cat 6... But I am open to suggestions as to why the current 10 Gbit would be very interesting too.

afan - Saturday, November 10, 2007 - link

Thanks for your answer, J.

first, as far as I know, CX4 cables aren't as cheap as cat_x, but they aren't all _that_ expensive to be a showstopper. If you need more length, you can go for the fibre cables -- which go _really_ far:

http://www.google.com/products?q=cx4+cable&btn...

I think the cx4 card (~$800) is pretty damn cheap for what you get (and remember it doesn't have pci-x limitations).

Check out the intel marketing buzz on iSCSI and the junk they're doing to speed up TCP/IP, too. It's good reading, and I'd love to see the hype tested in the real world.

I agree with you that UTP-cat 6 would be much better, more standardized, much cheaper, better range, etc. I know that, but if this is what we've got now, so be it, and I think it's pretty killer, but I haven't tested it : ).

Dell, cisco, hp, and others have CX4 adapters for their managed switches - they aren't very expensive and go right to the backplane of the switch.

here are some dell switches that support CX-4, at least:

http://www.dell.com/content/products/compare.aspx/...

these are the current 10gbe intel flavors:

copper: Intel® PRO/10GbE CX4 Server Adapter

fibre:

Intel® PRO/10GbE SR Server Adapter

Intel® PRO/10GbE LR Server Adapter

Intel® 10 Gigabit XF SR Server Adapters

a pita is the limited number of x8 PCI-E slots in most server mobos.

keep up your great reporting.

best, nw

somedude1234 - Wednesday, November 7, 2007 - link

First off, great article. I'm looking forward to the rest of this series.

From everything I've read coming out of MS, the StorPort driver should provide better performance. Any reason why you chose to go with SCSIPort? Emulex offers drivers for both on their website.

JohanAnandtech - Thursday, November 8, 2007 - link

Thanks. It is something that Tijl and myself will look into, and report back in the next article.

Czar - Wednesday, November 7, 2007 - link

Love that anandtech is going into this direction :D

Really looking forward to your iscsi article. Only used fiber connected sans, have a ibm ds6800 at work :) Never used iscsi but veeery interested into it, what I have heard so far is that its mostly just very good for development purposes, not for production environments. And that you should turn off I think chaps or whatever it is called on the switches, so the iscsi san doesnt overflow the network with are you there when it transfers to the iscsi target.

JohanAnandtech - Thursday, November 8, 2007 - link

Just wait a few weeks :-). Anandtech IT will become much more than just one of the many tabs :-)

We will look into it, but I think it should be enough to place your iSCSI storage on a nonblocking switch on a separate VLAN. Or am I missing something?

Czar - Monday, November 12, 2007 - link

think I found it

http://searchstorage.techtarget.com/generic/0,2955...

"Common Ethernet switch ports tend to introduce latency into iSCSI traffic, and this reduces performance. Experts suggest deploying high-performance Ethernet switches that sport fast, low-latency ports. In addition, you may choose to tweak iSCSI performance further by overriding "auto-negotiation" and manually adjusting speed settings on the NIC and switch. This lets you enable traffic flow control on the NIC and switch, setting Ethernet jumbo frames on the NIC and switch to 9000 bytes or higher -- transferring far more data in each packet while requiring less overhead. Jumbo frames are reported to improve throughput as much as 50%. "

This is what I was talking about.

Really looking forward to the next article :)