More Mainstream DX10: AMD's 2400 and 2600 Series

by Derek Wilson on June 28, 2007 8:35 AM EST - Posted in GPUs

A Closer Look at RV610 and RV630

The RV6xx parts are similar to the R600 hardware we've already covered in detail. There are a few major differences between the two classes of hardware. First and foremost, the RV6xx GPUs include full video decode acceleration for MPEG-2, VC-1, and H.264 encoded content through AMD's UVD hardware. There was some confusion over this when R600 first launched, but AMD has since confirmed that UVD hardware is not at all present in their high end part.

We also have a difference in manufacturing process. R600 uses an 80nm TSMC process aimed at high speed transistors, while the RV610 and RV630 GPUs are fabbed on a 65nm TSMC process aimed at lower power consumption. The end result is that these GPUs will run much cooler and require much less power than their big brother, the R600.

Transistor speed between these two processes ends up being similar in spite of the focus on power over performance at 65nm. RV610 is built with 180M transistors, while RV630 contains 390M. This is certainly down from the huge transistor count of R600, but nearly 400M is nothing to sneeze at.

Aside from the obvious differences in transistor count and the number of different units (shaders, texture units, etc.), the only other major difference is in memory bus width. All RV610 GPU based hardware will have a 64-bit memory bus, while RV630 based parts will feature a 128-bit connection to memory. Here's the layout of each GPU:

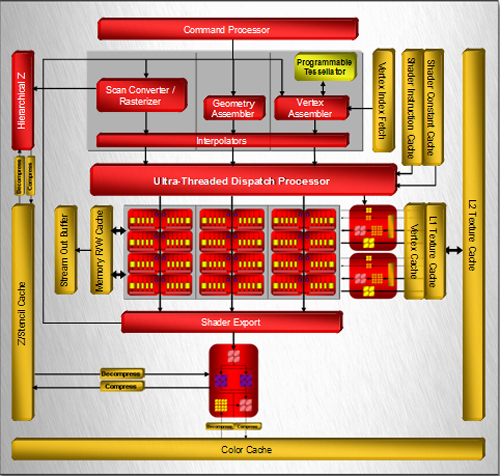

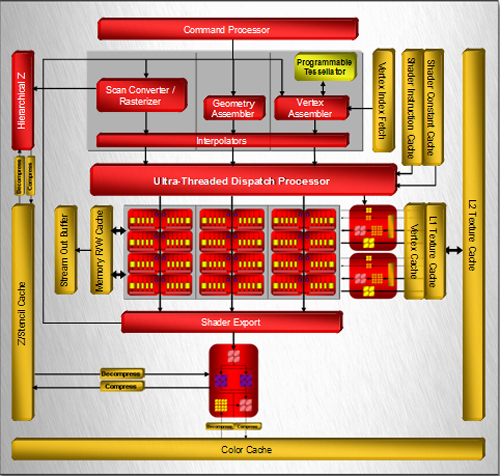

RV630 Block Diagram

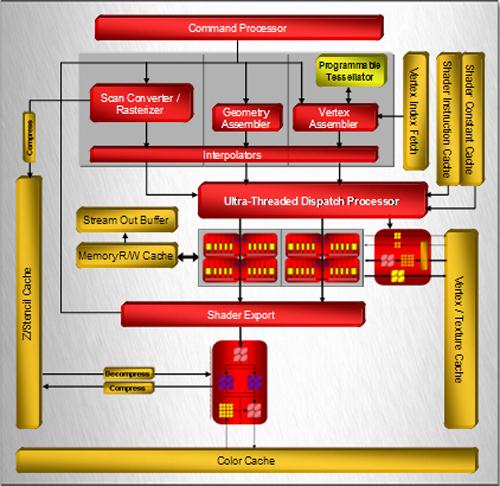

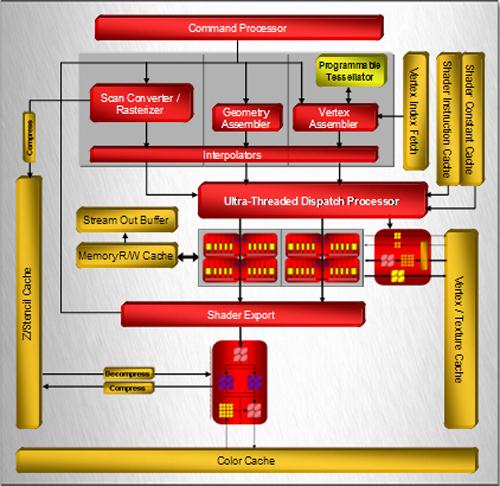

RV610 Block Diagram
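To see what the bus width difference means in practice, peak memory bandwidth is the bus width in bytes times the effective memory data rate. The sketch below is only a rough illustration: the 64-bit and 128-bit widths come from the text above, but the 1000 MT/s data rate is a placeholder we chose for the example, not the memory speed of any shipping card.

```python
def peak_memory_bandwidth_gbs(bus_width_bits: int, effective_rate_mtps: float) -> float:
    """Theoretical peak bandwidth in GB/s (1 GB = 1000 MB).

    bus_width_bits: width of the memory interface in bits.
    effective_rate_mtps: effective data rate in mega-transfers per second.
    """
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * effective_rate_mtps / 1000.0


# Bus widths from the article; the data rate is a hypothetical placeholder.
RV610_BUS_BITS = 64
RV630_BUS_BITS = 128
HYPOTHETICAL_RATE_MTPS = 1000.0

rv610_bw = peak_memory_bandwidth_gbs(RV610_BUS_BITS, HYPOTHETICAL_RATE_MTPS)
rv630_bw = peak_memory_bandwidth_gbs(RV630_BUS_BITS, HYPOTHETICAL_RATE_MTPS)

print(f"RV610 (64-bit):  {rv610_bw:.1f} GB/s")
print(f"RV630 (128-bit): {rv630_bw:.1f} GB/s")
print(f"Ratio at equal memory clock: {rv630_bw / rv610_bw:.1f}x")
```

At any given memory speed, the 128-bit part has twice the peak bandwidth of the 64-bit part; actual cards will differ further depending on the memory they ship with.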

One of the first things that jumps out is that both RV6xx based designs feature only one render back end block. This part of the chip is responsible for alpha (transparency) and fog, dealing with final z/stencil buffer operations, sending MSAA samples back up to the shader to be resolved, and ultimately blending fragments and writing out final pixel color. Maximum pixel fill rate is limited by the number of render back ends.

In the case of both current RV6xx GPUs, we can only draw out a maximum of 4 pixels per clock (or we can do 8 z/stencil-only ops per clock). While we don't expect extreme resolutions to be run on these parts (at least not in games), we could run into issues with effects that make heavy use of MRTs (multiple render targets), z/stencil buffers, and antialiasing. With the move to DX10, we expect developers to make use of the additional MRTs they have available, and lower resolutions benefit from AA more than high resolutions as well. We would really like to see higher pixel draw power here. Our performance tests will reflect the fact that AA is not kind to AMD's new parts, because of the lack of hardware resolve as well as the use of only one render back end.
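To put the per-clock numbers in perspective, peak fill rate is simply operations per clock times core clock. The sketch below is a back-of-the-envelope model rather than anything from AMD's documentation: the 4 pixels and 8 z/stencil ops per clock come from the text above, the 800 MHz core clock is a placeholder we picked for illustration, and dividing by the number of render targets is a simplifying assumption about how MRT writes share the single render back end.

```python
def peak_rate_mops(ops_per_clock: int, core_clock_mhz: float) -> float:
    """Theoretical peak rate in millions of operations per second."""
    return ops_per_clock * core_clock_mhz


def per_target_rate_mops(peak_mops: float, render_targets: int) -> float:
    """Simplified model (an assumption): each extra render target costs
    one more color write per pixel, so the peak is shared evenly."""
    if render_targets < 1:
        raise ValueError("render_targets must be at least 1")
    return peak_mops / render_targets


# Per-clock figures from the article; the clock is a hypothetical placeholder.
PIXELS_PER_CLOCK = 4
Z_ONLY_OPS_PER_CLOCK = 8
HYPOTHETICAL_CORE_MHZ = 800.0

color_peak = peak_rate_mops(PIXELS_PER_CLOCK, HYPOTHETICAL_CORE_MHZ)
z_only_peak = peak_rate_mops(Z_ONLY_OPS_PER_CLOCK, HYPOTHETICAL_CORE_MHZ)

print(f"Color fill:     {color_peak:.0f} Mpixels/s")
print(f"Z/stencil only: {z_only_peak:.0f} Mops/s")
for targets in (1, 2, 4):
    rate = per_target_rate_mops(color_peak, targets)
    print(f"{targets} render target(s): {rate:.0f} Mpixels/s")
```

Even under this generous model, writing to four render targets leaves a quarter of the already limited color fill rate per pixel, which is why we would like to see more pixel draw power on these parts.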

Among the notable features that we will see here are tessellation, which could have an even larger impact on low end hardware for enabling detailed and realistic geometry, and CFAA filtering options. Unfortunately, we might not see that much initial use made of the tessellation hardware, and with the reduced pixel draw and shading power of the RV6xx series, we are a little skeptical of the benefits of CFAA.

From here, let's move on and take a look at what we actually get in retail products.

96 Comments

valnar - Friday, June 29, 2007 - link

Maybe I'm the opposite of most people here, but I'm glad ATI/AMD and Nvidia both produced mid-range cards that suck. Maybe we will finally get the game developers to slow down and produce tighter code or not waste GPU/CPU cycles on eye-candy and actually produce better game play. While I understand that most game companies write games that play acceptably on the $400 flagship video cards, I for one am not one of those people. It's not that I can't afford to buy a $400 card once in a while - it's having to spend that every year that ticks me off. I'd much rather upgrade my card every year to keep up with the times if said card was $120.

titan7 - Saturday, June 30, 2007 - link

Most game developers already do that. If you don't have the power to run the shaders and enable d3d10 features you can run in d3d9 mode. If your card still doesn't have the power for that you can run in pixel shader 1 mode.

Take a game like Half Life 2 for example. Turn everything up and it was too much for most high end cards when it shipped. But you can turn it down so it looks like a typical d3d8 or d3d7 era game and play it just fine on your old hardware.

If you're hoping that developers can somehow make things run just as well on a PentiumIII as on a core2 duo you're hoping for the impossible. The 2600 only has about 1/3 the processing power as a 2900. The 2400 has about 10% of the power! Think about underclocking your CPU to 10% speed and seeing how your applications run ;)

Thank goodness we can disable features.

DerekWilson - Friday, June 29, 2007 - link

PLEASE READ THE UPDATE AT THE BOTTOM OF PAGE 1

I would like to apologize for not catching this before publication, but the 8600 GTS we used had a slight overclock resulting in about 5% across the board higher performance than we should have seen.

We have re-run the tests on our stock clocked 8600 GTS and edited all the graphs in our article to reflect the changes. The overall results were not significantly affected, but we are very interested in being as fair and accurate as possible.

We have also added idle and load power numbers to The Test page.

Again, I am very sorry for the error, and I will do my best to make sure this doesn't happen again.

coldpower27 - Friday, June 29, 2007 - link

Meh, thanks Derek, but if you already have Factory overclocked results it may as well be lovely to leave them in as they are fair game, if Nvidia's partners are selling them in those configurations. This is of course in addition to the Nvidia reference clock rates.

DerekWilson - Friday, June 29, 2007 - link

the issue is that overclocked 8600 GTS parts generally go for closer to $200, putting them well out of the price range the 2600 XT is expected to hit.

it's not a fair comparison to make at this point (expecting the 2600 XT to come in at <= $150 anyway).

coldpower27 - Saturday, June 30, 2007 - link

Yeah, but you have all kinds of GPUs on the chart anyway from many different price points, 7950 GT is not close to $150 either, and neither is the 8800 GTS 320.

I think people would be quite aware that the Factory OC cards if placed are indeed priced higher but if you already have the results leave them in, in addition to the Nvidia reference clock designs.

dm0r - Friday, June 29, 2007 - link

And please, keep us informed about performance with new drivers because im really interested in midrange video cards :)

harpoon84 - Friday, June 29, 2007 - link

For gods sake, isn't it obvious by now that running DX10 games on these cards will result in LOWER performance, not HIGHER? If you are averaging 30fps @ 1280x1024 on DX9 games, it's only gonna get worse in DX10!

http://www.extremetech.com/article2/0,1697,2151677...

Company of Heroes DX10 - SINGLE DIGIT FRAMERATES!!!

Yes, the 2600 cards are twice as fast as the 8600 cards, but we are talking totally unplayable framerates of 5 - 9 FPS!

Yeah, designed for DX10 alright! /SARCASM

Wheres TA152H now huh?

frostyrox - Friday, June 29, 2007 - link

To TA152H:

Hi. It should've been painfully obvious about 10 comments ago that nobody here agrees with or, well, understands anything you're saying. Can you please stop commenting on hardware articles when you don't know what you're talking about? To say that dx9 benchmarks aren't important or, heck, not the most important aspect of these cards makes 0 sense. These cards might be dx10 capable, but they obviously haven't even been given the hardware or raw horsepower to even handle dx9 (even at common resolutions). It also makes 0 sense whatsoever to even suggest these cards will somehow magically perform drastically different in pure dx10 games.

Furthermore...

How does it make any sense for AMD/ATI to have a card that's over 400 dollars (2900xt) that trades blows with the 320 and 640mb 8800gts (which are cheaper), but then have nothing between that card and a heavily hardware-castrated x2600xt for 150 dollars (a 250~ dollar difference)? Also consider the fact that Nvidia's dx10 mid-low end cards have matured, so even if you were frugal and wanted the cheapest possible clownshoes videocard around you should just go Nvidia. Don't even bother calling me a fanboy unless you feel like making me laugh. I currently own a radeon x800xl. I'm just being honest. It's about time the rest of you do the same. Rant over.

GlassHouse69 - Thursday, June 28, 2007 - link

Crossfire gets better results than SLI always.... two crossfire 2600XT cards would take MUCH less wattage than any other option around for its framerate. At least some azn websites are noticing.