ATI Radeon X1950 Pro: CrossFire Done Right

by Derek Wilson on October 17, 2006 6:22 AM EST - Posted in GPUs

The Elder Scrolls IV: Oblivion Performance

While it is disappointing that Oblivion doesn't have a built-in benchmark, our FRAPS tests have proved to be fairly repeatable and very intensive on every part of a system. While these numbers will reflect real-world playability of the game, please remember that our test system uses the fastest processor we could get our hands on. If a purchasing decision is to be made using Oblivion performance alone, please check out our two articles on the CPU and GPU performance of Oblivion. We have used the most graphically intensive benchmark in our suite, but the rest of the platform will make a difference. We can still easily demonstrate which graphics card is best for Oblivion even if our numbers don't translate to what our readers will see on their systems.

Running through the forest towards an Oblivion gate while fireballs fly past our head is a very graphically taxing benchmark. In order to run this benchmark, we load a saved game and run through it with FRAPS. To start the benchmark, we hit "q", which makes the character run forward automatically, and start and stop FRAPS at predetermined points in the run. While not 100% identical each run, our benchmark scores are usually fairly close. We run the benchmark a couple of times just to be sure there wasn't a one-time hiccup.
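
The repeat-and-compare step described above can be sketched in a few lines of Python. This is only an illustration, not the tooling used for this review: the function names, the 5% tolerance, and the idea of feeding in cumulative frame timestamps in milliseconds are all assumptions made for the example.

```python
from statistics import mean


def average_fps(timestamps_ms):
    """Average FPS for one run, given cumulative frame timestamps in ms.

    Assumption: the capture tool logs one cumulative timestamp per frame.
    """
    if len(timestamps_ms) < 2:
        raise ValueError("need at least two frame timestamps")
    elapsed_s = (timestamps_ms[-1] - timestamps_ms[0]) / 1000.0
    if elapsed_s <= 0:
        raise ValueError("timestamps must be increasing")
    # N timestamps bound N - 1 frame intervals.
    return (len(timestamps_ms) - 1) / elapsed_s


def summarize_runs(run_fps, tolerance=0.05):
    """Return (mean FPS, consistent?) for several runs of the same path.

    A run counts as a "hiccup" if it deviates from the mean by more than
    the tolerance (5% here, a hypothetical threshold).
    """
    avg = mean(run_fps)
    consistent = all(abs(fps - avg) / avg <= tolerance for fps in run_fps)
    return avg, consistent
```

If `summarize_runs` reports an inconsistent set, the simplest response is the one described above: run the path again and discard the outlier.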

As for settings, we tested a few different configurations and decided on this group of options:

| Oblivion Performance Settings | |
| --- | --- |
| Texture Size | Large |
| Tree Fade | 100% |
| Actor Fade | 100% |
| Item Fade | 66% |
| Object Fade | 90% |
| Grass Distance | 50% |
| View Distance | 100% |
| Distant Land | On |
| Distant Buildings | On |
| Distant Trees | On |
| Interior Shadows | 95% |
| Exterior Shadows | 85% |
| Self Shadows | On |
| Shadows on Grass | On |
| Tree Canopy Shadows | On |
| Shadow Filtering | High |
| Specular Distance | 100% |
| HDR Lighting | On |
| Bloom Lighting | Off |
| Water Detail | High |
| Water Reflections | On |
| Water Ripples | On |
| Window Reflections | On |
| Blood Decals | High |
| Anti-aliasing | Off |

Our goal was to get acceptable performance levels on the current generation of cards at 1600x1200. This was fairly easy with the range of cards we tested here. The game looks amazing and is very enjoyable at these settings. While more is better in this game, no current computer will give you everything at high res. Only the best multi-GPU solutions and a great CPU are going to give you settings like the ones we have at high resolutions, but who cares about grass distance, right?

While Oblivion is very graphically intensive and is played mostly from a first-person perspective (and some third-person), this definitely isn't a twitch shooter. Our experience leads us to conclude that 20 fps gives a good experience. It's playable a little lower, but watch out for some jerkiness that may pop up. Getting down to 16 fps and below is a little too low to be acceptable. The main point to bring home is that you really want as much eye candy as possible. While Oblivion is an immersive and awesome game from a gameplay standpoint, the graphics certainly help draw the gamer in.
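
For readers who want to apply the rule of thumb above to their own numbers, here is a minimal Python sketch. The 20 fps and 16 fps cutoffs come from the text; the function name and the label strings are made up for this example, and the thresholds apply to Oblivion only, not to faster-paced games.

```python
def oblivion_playability(avg_fps):
    """Classify an average frame rate using the article's rule of thumb."""
    if avg_fps >= 20:
        return "good"
    if avg_fps > 16:
        # Between the two cutoffs: playable, but jerkiness may pop up.
        return "playable, may be jerky"
    return "too low"
```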

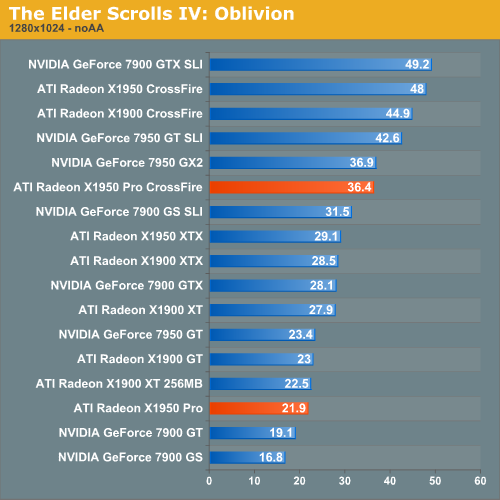

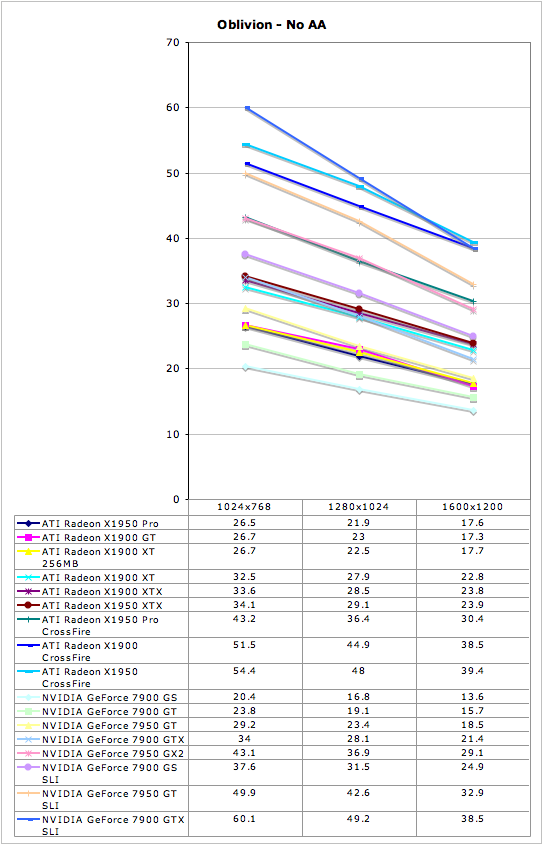

Oblivion finally shows an advantage for CrossFire over SLI at the $200 price point. It still looks like SLI scales better overall (i.e. 7900 GS SLI is 88% faster than a single card, while X1950 Pro CrossFire is only 66% faster than a single X1950 Pro), but this time even double the performance of a single 7900 GS wouldn't be enough to beat X1950 Pro CrossFire. We see the X1900 GT, X1950 Pro and X1900 XT 256MB all clustered together here in a rather unexpected order, but the run-to-run variance of our Oblivion benchmark is the culprit. We can say that these cards all perform about the same under Oblivion, but pinning it down more precisely isn't easy. No matter how we slice it though, ATI owns this benchmark.
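
The scaling figures quoted above follow from simple arithmetic, sketched here in Python. The function names are invented for the example and no frame rates from the charts are reproduced; only the 66% figure from the text is used. The second function works out what the "even double the performance" remark implies: if a dual setup scales by 66%, its single card must be at least 2 / 1.66, or roughly 1.2 times as fast as the rival single card, for the pair to beat a perfectly doubled rival.

```python
def scaling_percent(single_fps, dual_fps):
    """Percent speedup of a two-card setup over one card of the same type."""
    return (dual_fps / single_fps - 1.0) * 100.0


def min_single_card_ratio(dual_scaling_percent):
    """Minimum single-card speed ratio (card B / card A) at which two B
    cards, scaling by the given percent, match a hypothetical perfectly
    doubled card A."""
    return 2.0 / (1.0 + dual_scaling_percent / 100.0)


# With the 66% CrossFire scaling quoted in the text:
ratio = min_single_card_ratio(66.0)  # about 1.2
```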

45 Comments

Zoomer - Thursday, October 19, 2006

Is this an optical shrink to 80nm?

Answering this question will put overclocking expectations in line. Generally, optically shrunk cores from TSMC overclock to about the same as the original or perhaps slightly worse.

coldpower27 - Friday, October 20, 2006

Well no, as this pipeline configuration didn't exist natively before on the 90nm node. It's a 3 Quad part, so it's based on R580 but has 1 Quad physically removed as well as being shrunk to 80nm. Not to mention native CrossFire support was added onto the die.

Spoelie - Friday, October 20, 2006

Optical shrink, this is 80nm and the original was 90nm. You're normally correct because the first optical shrink usually does not have the same technologies as the process higher up (low-k and SOI for example, this was the case with 130nm -> 110nm), but I don't think it's the case for this generation. Regardless, haven't seen any overclocking articles on it yet so I'm quite curious.

Spoelie - Friday, October 20, 2006

oie, maybe I should add that it's reworked as well, so both actually. Since this core didn't exist before (rv570 and that pipeline configuration), I don't think that they just sliced a part of the core...

Zstream - Tuesday, October 17, 2006

Beyond3D reported the spec change a month before anyone received the card. I think you need to do some FAQ checking on your opinions mate.

All in all decent review but poor unknowledgeable opinions…

DerekWilson - Wednesday, October 18, 2006

Just because ATI made the spec change public does not mean it is alright to change the specs of a product that has been shipping for 4 months.

X1900 GT has been available since May 9 as a 575/1200 part.

The message we want to send isn't that ATI is trying to hide something, it's that they shouldn't do the thing in the first place.

No matter how many times a company says it changed the specs of a product, when people search for reviews they're going to see plenty that have been written since May talking about the original X1900 GT.

Naming is already ambiguous enough. I stand by my opinion that having multiple versions of a product with the exact same name is a bad thing.

I'm sorry if I wasn't clear on this in the article. Please let me know if there's anything I can reword to help get my point across.

Zoomer - Thursday, October 19, 2006

This is very common. Many vendors in the past have passed off 8500s that run at 250/250 instead of the stock 275/275, and don't label them as such.

There are some Asus SKUs that have this same handicap, but I can't recall which models those were.

xsilver - Tuesday, October 17, 2006

any word on what the new price for the x1900gt's will be now that the x1950pros are out?

or are they being phased out and no price drop is being considered?

Wellsoul2 - Monday, November 6, 2006

You guys are such cheerleaders..

For a single card buy why would you get this?

Why would you buy the 1900GT even after the 1900XT 256MB came out?

I got my 1900XT 256MB for $240 shipped..

Except for power consumption it's a much better card.

You get to run Oblivion great with one card.

Two cards is such a scam. More expensive motherboard..power consumption etc.

This is progress? CPU's have evolved..

It's hard to even find a motherboard with 3 PCI slots..

What a scam! Where's my ultra-fast HDTV board for PCI Express?

Seriously..Why buy into SLI/Crossfire? Why not 2 GPU's on one card?

Too late..You all bought into it.

Sorry I am just so sick of the praise for this money-grab of SLI/Crossfire.

jcromano - Tuesday, October 17, 2006

Are the power consumption numbers (98W idle, 181W load) for just the graphics card or are they total system power?

Thanks in advance,

Jim