NVIDIA Single Card, Multi-GPU: GeForce 7950 GX2

by Derek Wilson on June 5, 2006 12:00 PM EST - Posted in GPUs

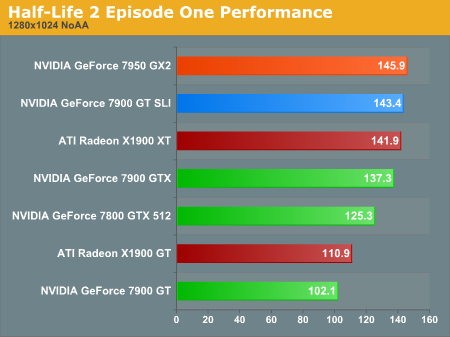

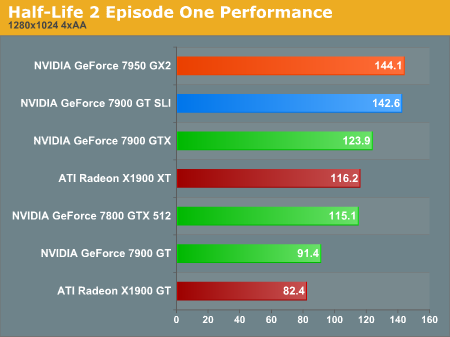

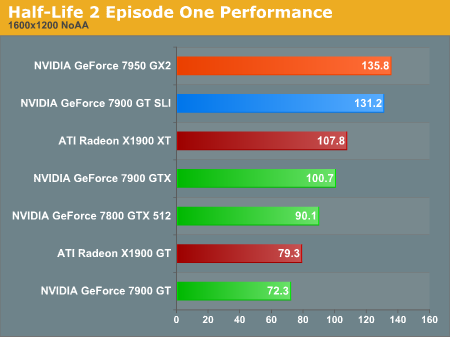

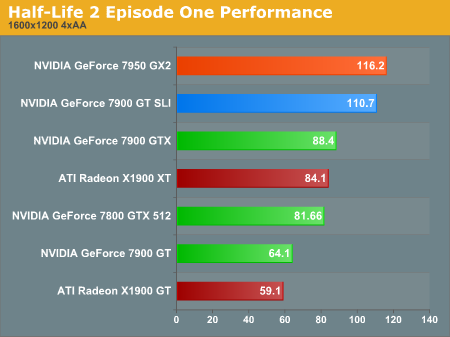

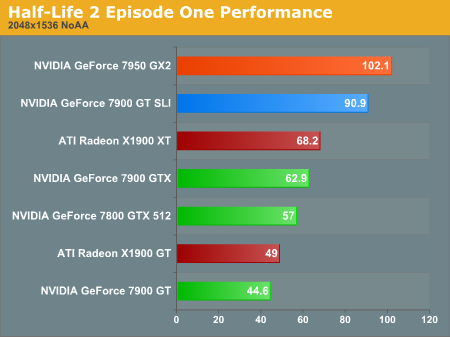

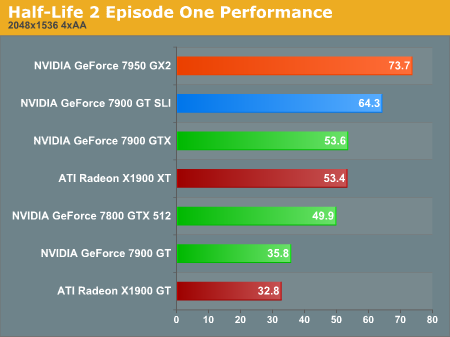

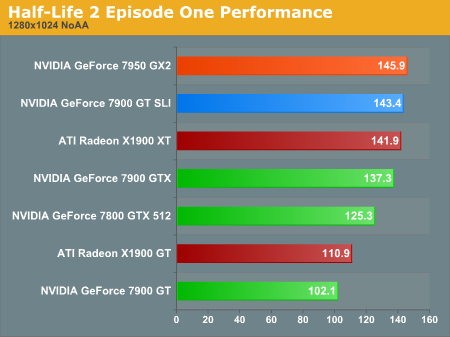

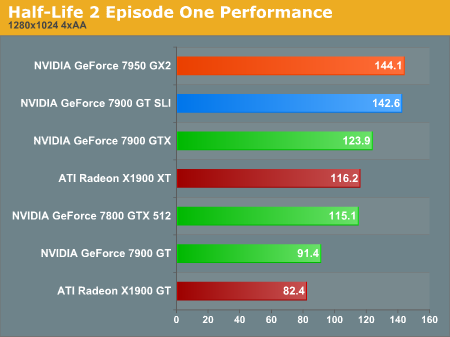

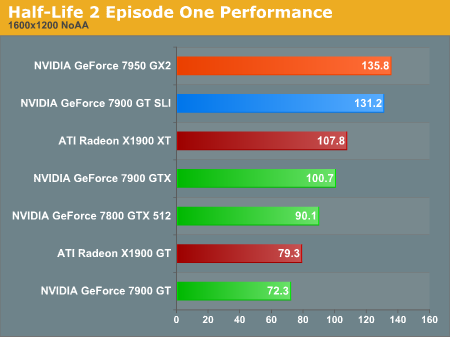

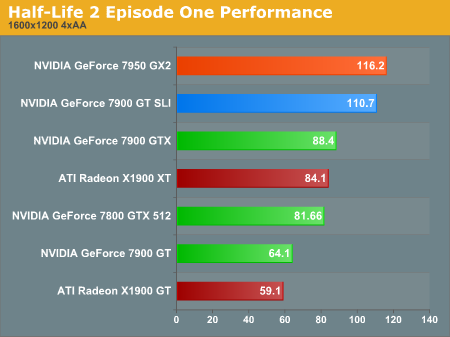

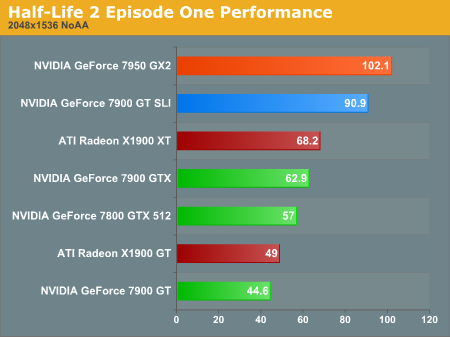

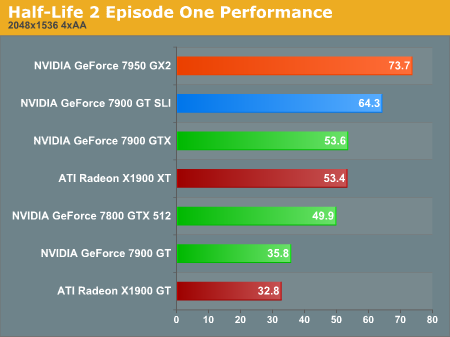

Half-Life 2 Episode One Performance

Even the newest installment of HL2 is CPU limited on high-end hardware at resolutions up to 1600x1200. These tests do show that, in spite of the CPU limitation, the multi-GPU overhead isn't very damaging under the Source engine.

The performance advantage of the 7950 GX2 grows as resolution increases and AA is enabled. While the hardware does deliver a benefit at this level of quality, framerates this high are simply not necessary for playing HL2. Those limited to 1600x1200 who play HL2-style games won't need to drop $600 on hardware to get a great experience.

At the top end of our performance tests, we don't see any surprises. The 7950 GX2 is the king of HL2 as far as single-board solutions go. Getting this baby in SLI for a quad-GPU solution will be quite interesting indeed.

60 Comments

bluebob950 - Tuesday, June 27, 2006 - link

Thinking about getting a 7950, but I can't find any monitors that do 2048. My boss says the Dell 21" monitors at work do, but I have yet to see it. What do you use, and where can I find them online?

bluebob950 - Tuesday, June 27, 2006 - link

Thinking about getting a 7950 GT, but I can't find any monitors that do 2048. My boss says the Dell 21" monitors at work do, but I have yet to see it. What do you use, and where can I find them online?

JNo - Wednesday, June 7, 2006 - link

I think the review at Hexus.net bears a very interesting negative conclusion, somewhat in contrast to AnandTech's (not that I'm dissing AnandTech), and provides sobering food for thought... http://lifestyle.hexus.net/content/item.php?item=5...

Think seriously about what's been presented to you in this article thus far, concerning GeForce 7950 GX2, and you should (if I can do my job properly) come to a conclusion like this: NVIDIA GeForce 7950 GX2 is probably the most caveat-laden graphics purchase yet released.

The conditions that have to be satisfied before it makes sense to get one are pretty much as follows:

* Are you willing to live with less-than-absolute best image quality from the high-end generation right now?

* Are you sure the games you play all have SLI support, or you're at least happy to wait for support to come in a future driver?

* Do you have a PC platform that supports it properly, which is realistically just nForce SLI of some flavour?

* Do you have a very well ventilated PC chassis, able to assist in the significant cooling challenges it presents?

* Do you own a high resolution PC display, since it's built for at least 1600x1200 in current supported games?

* If you run dual displays, are you happy for one to go blank when in multi-GPU mode?

Be sure to really consider the first two questions, and ponder the fact that ATI Crossfire is arguably even worse at satisfying the second, given its lack of a user-adjustable game profiling system. Done so? Good.

Now given yes to all of the above, are you then willing to spend £450 on a single graphics board? You are? Brilliant, they're available today, you'll enjoy the framerates and overall IQ, happy shopping!

Tephlon - Wednesday, June 7, 2006 - link

I just wanted to point out... that list isn't particular to this card. It applies to all SLI setups. All of them have to watch the heat, the drivers don't allow dual-view plus multi-GPU on any SLI setup, SLI dominates primarily in hi-res configs, and so on and so on.

It's still a great list to consider... but please remember that it isn't limited to the 7950GX2.

DerekWilson - Wednesday, June 7, 2006 - link

Except the third point doesn't apply at all to the 7950 GX2. There seems to have been a lot of confusion about the support required for this card. It will run on Intel, ATI, VIA, SiS, and NVIDIA chipsets as long as the BIOS supports non-video devices in x16 PCIe slots and also supports add-in PCIe switches. Surprisingly, many boards support these features as of this week.

The 7950 has a bunch of advantages over a 7900 GT SLI setup, not the least of which is performance. Others include the lenient platform requirements, lower power draw than an X1900 XT, the potential for expansion to quad SLI, and very transparent plug-and-play operation (just plug it in, install the drivers, and no more tweaking is required for it to work as expected).

Jeff7181 - Tuesday, June 6, 2006 - link

In the chart on page three, the 7900GTX core clock is listed as 700 MHz (650 for the vertex core). Is that accurate? So what I'm seeing and adjusting in the driver settings with Coolbits is actually the speed of the vertex core?

DerekWilson - Tuesday, June 6, 2006 - link

You know, I borrowed that chart from NVIDIA's reviewer's guide on the 7950 GX2... I am under the impression that the vertex core of the 7900 GTX is 700MHz. As this is NVIDIA's chart, I'm not sure whether the vertex clock is listed first or second...

Jeff7181 - Tuesday, June 6, 2006 - link

Based on the other values in the chart and the row/column titles, I assumed the first number given was supposed to be the core clock and the one in parentheses was supposed to be the vertex clock. I guess it could be a typo... otherwise I want a new 7900GTX, since the core speed of mine is only 650 MHz and it should be 700 according to that chart. :D

v12 - Tuesday, June 6, 2006 - link

Could you post comparative scores for the X1900XTX as well? Does it beat the 7950 or not? I can't tell from this review.

V12

AnnonymousCoward - Tuesday, June 6, 2006 - link

Thanks for the sweet article. 2 GPUs on a non-SLI board is the way to go...until dual-core GPUs. When are those coming out, anyway?