BFG PhysX and the AGEIA Driver

Let us begin with the BFG PhysX card itself. The specs are exactly the same as those of the ASUS card we previewed. Specifically, we have:

130nm PhysX PPU with 125 million transistors

128MB GDDR3 @ 733MHz Data Rate

32-bit PCI interface

4-pin Molex power connector

The BFG card has a bonus: a blue LED behind the fan. Our BFG card came in a retail box, pictured here:

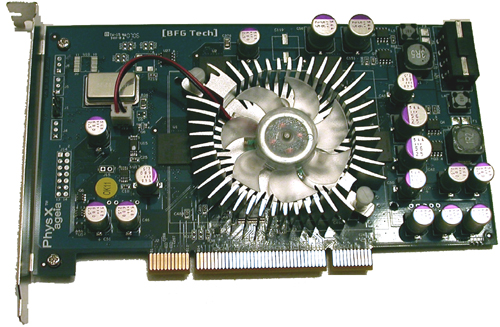

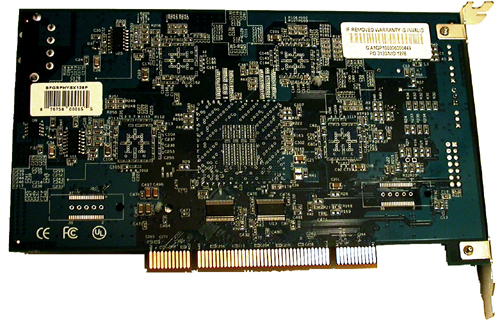

Inside the box, we find CDs, power cables, and the card itself:

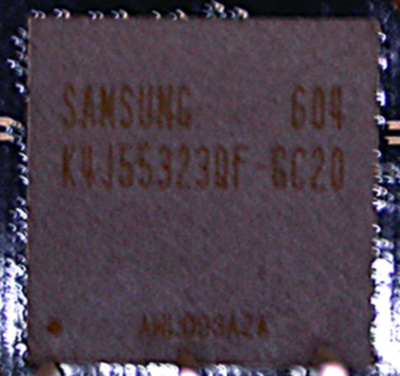

As we can see here, BFG opted to go with Samsung's K4J55323QF-GC20 GDDR3 chips. There are 4 chips on the board, each organized as 4 banks of 2M x 32 bits (256Mbit, or 32MB, per chip), for 128MB in total. The chips are rated at 2ns, giving a maximum clock speed of 500MHz (1GHz data rate), but the memory clock speed used on current PhysX hardware is only 366MHz (733MHz data rate). It is possible that the lower than rated clock speed was chosen to save power and hit a lower thermal envelope. A lower clock speed might also allow board makers to be more aggressive with chip timings if latency is a larger concern than bandwidth for the PhysX hardware. This is just speculation at this point, but such an approach is certainly not beyond the realm of possibility.
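The arithmetic behind these memory figures can be sketched in a few lines of Python. This is a rough illustration, not anything supplied by AGEIA or BFG: the function and variable names are our own, and the 128-bit bus width assumes the four 32-bit chips are accessed in parallel.

```python
# Back-of-the-envelope memory figures for the PhysX board.
# Inputs are the numbers quoted in the article; the parallel-chip bus
# layout is an assumption made for this sketch.

def rated_clock_mhz(cycle_ns: float) -> float:
    """Maximum clock in MHz for a given cycle time in nanoseconds."""
    return 1000.0 / cycle_ns

def bandwidth_gb_s(data_rate_mhz: float, bus_bits: int) -> float:
    """Peak bandwidth in GB/s (10^9 bytes) for a data rate and bus width."""
    return data_rate_mhz * 1e6 * (bus_bits / 8) / 1e9

CHIPS = 4
BITS_PER_CHIP = 32                 # x32 organization
MBIT_PER_CHIP = 4 * 2 * 32         # 4 banks x 2M words x 32 bits = 256 Mbit

bus_bits = CHIPS * BITS_PER_CHIP            # assumed 128-bit bus
capacity_mb = CHIPS * MBIT_PER_CHIP // 8    # total capacity in MB

rated = rated_clock_mhz(2.0)                     # 2 ns speed grade
shipping_bw = bandwidth_gb_s(733, bus_bits)      # as clocked on the card
rated_bw = bandwidth_gb_s(2 * rated, bus_bits)   # at the chips' rated speed

print(f"capacity: {capacity_mb} MB, rated clock: {rated:.0f} MHz")
print(f"bandwidth at 733 MHz data rate: {shipping_bw:.1f} GB/s")
print(f"bandwidth at rated data rate:   {rated_bw:.1f} GB/s")
```

Under those assumptions the shipping configuration works out to roughly 11.7 GB/s, against 16 GB/s if the memory were run at its rated speed.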

The BFG card costs about $300 at major online retailers, but can be found for as low as $280. The ASUS PhysX P1 Ghost Recon Edition is bundled with GRAW for about $340, while the BFG part does not come with any PhysX accelerated games. It is possible to download a demo of CellFactor now, which does add some value to the product, but until we see more (and much better) software support, we will have to recommend that interested buyers take a wait-and-see attitude towards this part.

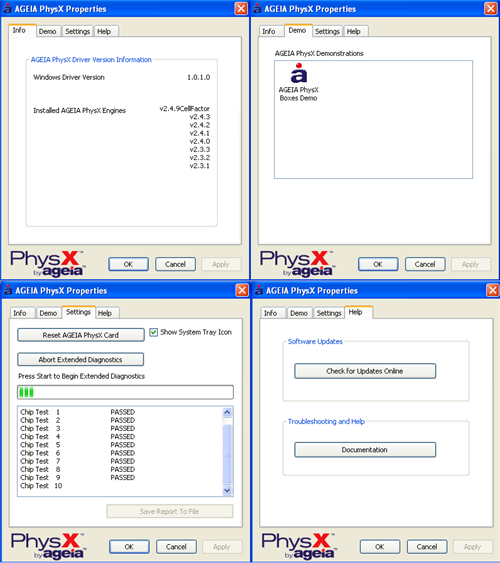

As for software support, AGEIA is constantly working on their driver and pumping out newer versions. The driver interface is shown here:


There isn't much to the user side of the PhysX driver. We see an informational window, a test application, a diagnostic tool to check or reset hardware, and a help page. There are no real "options" to speak of in the traditional sense. The card itself really is designed to be plugged in and forgotten about. This does make it much easier on the end user under normal conditions.

We also tested the power draw and noise of the BFG PhysX card. Here are our results:

| Configuration | Noise (in dB) |
| --- | --- |
| Ambient (PC off) | 43.4 |
| No BFG PhysX | 50.5 |
| BFG PhysX | 54.0 |

The BFG PhysX Accelerator does audibly add to the noise. Of course, the noise increase is nowhere near as bad as listening to an ATI X1900 XTX fan spin up to full speed.
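To put the 3.5 dB increase in perspective, here is a small Python sketch. It rests on assumptions that our measurements cannot confirm: that the readings are comparable sound levels, and that the card's fan and the rest of the system behave as uncorrelated sources whose acoustic powers simply add. The helper names are ours.

```python
import math

# Noise readings from the article, in dB.
WITHOUT_CARD_DB = 50.5
WITH_CARD_DB = 54.0

def db_to_power(level_db: float) -> float:
    """Convert a level in dB to a relative (linear) power."""
    return 10 ** (level_db / 10)

def power_to_db(power: float) -> float:
    """Convert a relative (linear) power back to dB."""
    return 10 * math.log10(power)

def isolate_source(total_db: float, background_db: float) -> float:
    """Estimate the level of the added source alone.

    Assumes uncorrelated sources, so linear powers add and subtract.
    """
    return power_to_db(db_to_power(total_db) - db_to_power(background_db))

increase_db = WITH_CARD_DB - WITHOUT_CARD_DB
power_ratio = db_to_power(increase_db)
card_alone_db = isolate_source(WITH_CARD_DB, WITHOUT_CARD_DB)

print(f"increase: {increase_db:.1f} dB (about {power_ratio:.2f}x the acoustic power)")
print(f"estimated card-only level: {card_alone_db:.1f} dB")
```

If those assumptions hold, the card on its own would be contributing a little over 51 dB, which is slightly more than the rest of the test system combined.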

| System Power (in Watts) | No Hardware | BFG PhysX |
| --- | --- | --- |
| Idle | 170 | 190 |
| Load without Physics Load | 324 | 352 |
| Load with Physics Load | 335 | 300 |

At first glance these results can be a bit tricky to understand. The load tests were performed with our low quality Ghost Recon Advanced Warfighter physics benchmark. Our test "without Physics Load" is taken before we throw the grenade and blow up everything, while the "with Physics Load" reading is made during the explosion.

Yes, system power draw (measured at the wall with a Kill-A-Watt) decreases under load when the PhysX card is being used. This is made odder by the fact that the power draw of the system without a physics card increases during the explosion. Our explanation is quite simple: the GPU is the leading power hog when running GRAW, and it becomes starved for input while the PPU generates its data. This explanation fits in well with our observations on framerate in the games we tested: namely, triggering events which use PhysX hardware in current games results in a very brief (yet sharp) drop in framerate. With the system sending the GPU less work to do per second, less power is required to run the game as well. While we don't know the exact power draw of the PhysX card itself, it is clear from our data that it doesn't pull nearly the power that current graphics cards require.
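The differences we are describing are easier to see when the readings are subtracted from one another. The Python sketch below does only that, using the wall measurements above; the dictionary layout and names are our own. Keep in mind that these are whole-system numbers measured at the wall, so they include power supply losses and cannot be read as the draw of the card alone.

```python
# Wall power readings from the article, in Watts.
readings = {
    "idle":            {"no_ppu": 170, "ppu": 190},
    "load_no_physics": {"no_ppu": 324, "ppu": 352},
    "load_physics":    {"no_ppu": 335, "ppu": 300},
}

def ppu_delta(state: str) -> int:
    """System power with the card installed minus power without it."""
    return readings[state]["ppu"] - readings[state]["no_ppu"]

def explosion_change(config: str) -> int:
    """Change in system power when the physics load kicks in."""
    return readings["load_physics"][config] - readings["load_no_physics"][config]

deltas = {state: ppu_delta(state) for state in readings}
change_without_card = explosion_change("no_ppu")
change_with_card = explosion_change("ppu")

for state, delta in deltas.items():
    print(f"{state}: {delta:+d} W with the PhysX card installed")
print(f"explosion, no card:   {change_without_card:+d} W")
print(f"explosion, with card: {change_with_card:+d} W")
```

The card adds 20W to 28W to system draw until the explosion starts, at which point the system with the card drops by 52W while the system without it climbs by 11W.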

67 Comments


yanyorga - Monday, May 22, 2006 - link

Firstly, I think it's very likely that there is a slowdown due to the increased number of objects that need to be rendered, giving credence to the apples/oranges argument.

However, I think it is possible to test where there are bottlenecks. As someone already suggested, testing in SLI would show whether there is an increased GPU load (to some extent). Also, if you test using a board with a 2nd GPU slot which is only 8x and put only 1 GPU in that slot, you will have at least 8x left on the PCI bus. You could also experiment with various overclocking options, focusing on the multipliers and bus.

Is there any info anywhere on how to use the PPU for physics, or on development software that makes use of it?

Chadder007 - Friday, May 26, 2006 - link

That makes me wonder why City of Villains was tested with PPU at 1500 Debris objects comparing it to software at 422 Debris objects. Anandtech needs to go back and test WITH a PPU at 422 Debris objects to compare it to the software only mode to see if there is any difference.

rADo2 - Saturday, May 20, 2006 - link

Well, people now have a pretty hard time justifying spending $300 on a decelerator.

I am afraid, however, that Ageia will be more than willing to "slow down a bit" their future software drivers, to show some real-world "benefits" of their decelerator. By adding more features to their SW (by CPU) emulation, they may very well slow it down, so that new reviews will finally bring their HW to the first place.

But these reviews will still mean nothing, as they compare Ageia SW drivers, made intentionally bad performing, with their HW.

Ageia PhysX is a totally wrong concept, Havok FX can do the same via SSE/SSE2/SSE3, and/or SM 3.0 shaders, it can also use dualcore CPUs. This is the future and the right approach, not additional slow card making big noise.

Ageia approach is just a piece of nonsense and stupid marketing..

Nighteye2 - Saturday, May 20, 2006 - link

Do not take your fears to be facts. I think Ageia's approach is the right one, but it'll need to mature - and to really get used. The concept is good, but execution so far is still a bit lacking.

rADo2 - Sunday, May 21, 2006 - link

Well, I think Ageia's approach is the worst possible one. If game developers are able to distribute threads between a singlecore CPU and a PhysX decelerator, they should be able to use dualcore CPUs for just the same, and/or SM3.0 shaders. This is the right approach. With quadcore CPUs, they will be able to use 4 cores, within 5-6 years about 8 cores, etc. PhysX decelerator is a wrong direction, it is useful only for a very limited portfolio of calculations, while the CPU can do them as well (probably even faster).

I definitely do NOT want to see Ageia succeed..

Nighteye2 - Sunday, May 21, 2006 - link

That's wrong. I tested it myself running Cellfactor without PPU on my dual-core PC. Even without the liquid and cloth physics, large explosions with a lot of debris still caused large slowdowns, after which it stayed slow until most of the flying debris stopped moving.

On videos I saw of people playing with a PPU, slowdowns also occurred but lasted only a fraction of a second.

Also, the CPU is needed for AI as well, and does not have enough memory bandwidth to do proper physics. If you want to get it really detailed, hardware physics on a dedicated PPU is the best way to go.

DigitalFreak - Thursday, May 18, 2006 - link

Don't know how accurate this is, but it might give the AT guys some ideas...

HardForum: http://www.hardforum.com/showthread.php?t=1056037

Nighteye2 - Saturday, May 20, 2006 - link

I tried it without the PPU - and there's very notable slowdowns when things explode and lots of crates are moving around. And that's from running 25 FPS without moving objects. I imagine performance hits at higher framerates will be even bigger. At least without PPU.

Clauzii - Thursday, May 18, 2006 - link

The German site Hartware.de showed this in their test:

Processor Type: AGEIA PhysX

Bus Technology: 32-bit PCI 3.0 Interface

Memory Interface: 128-bit GDDR3 memory architecture

Memory Capacity: 128 MByte

Memory Bandwidth: 12 GBytes/sec.

Effective Memory Data Rate: 733 MHz

Peak Instruction Bandwidth: 20 Billion Instructions/sec

Sphere-Sphere collision/sec: 530 Million max

Convex-Convex(Complex) collisions/sec.: 533,000 max

If graphics are moved to the card, a 12GB/s memory will be limiting, I think :)

Would be nice to see the PhysX RAM @ the specced 500MHz, just to see if it has anything to do with that issue..

Clauzii - Thursday, May 18, 2006 - link

Not test - preview, sorry.