Gigabyte's i-RAM: Affordable Solid State Storage

by Anand Lal Shimpi on July 25, 2005 3:50 PM EST - Posted in Storage

We All Scream for i-RAM

Gigabyte sent us the first production version of their i-RAM card, marked as revision 1.0 on the PCB. There were some obvious changes between the i-RAM that we received and what we saw at Computex.

First, the battery pack is now mounted in a rigid holder on the PCB. The contacts are on the battery itself, so there's no external wire to deliver power to the card.

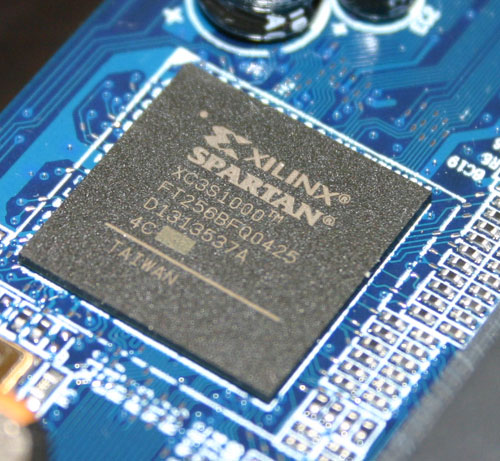

The Xilinx FPGA has three primary functions: it acts as a 64-bit DDR memory controller, a SATA controller and a bridge chip between the memory and SATA controllers. The chip takes requests over the SATA bus, translates them and then sends them off to its DDR controller to write/read the data to/from memory.
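To make the bridging step concrete, here is a minimal sketch of how a sector request arriving over SATA could be translated into a memory location. Gigabyte has not described the FPGA's internal mapping to us, so everything here is an assumption for illustration: the 512-byte sector size, the linear fill order across DIMMs, and the name `lba_to_dram` are all hypothetical.

```python
SECTOR_BYTES = 512  # assumed sector size; not confirmed for the i-RAM
GIB = 1024 ** 3


def lba_to_dram(lba, dimm_bytes, dimm_count=4):
    """Map a logical block address to (DIMM index, byte offset in that DIMM).

    Hypothetical linear layout: sectors fill DIMM 0 first, then DIMM 1, etc.
    """
    byte_addr = lba * SECTOR_BYTES
    if byte_addr + SECTOR_BYTES > dimm_bytes * dimm_count:
        raise ValueError("LBA is beyond the installed memory")
    # Quotient selects the DIMM, remainder is the offset inside it.
    return divmod(byte_addr, dimm_bytes)


# Four 1GB DIMMs: the first sector, and the first sector of the second DIMM.
print(lba_to_dram(0, GIB))
print(lba_to_dram(2_097_152, GIB))
```

A real controller would likely interleave across DIMMs rather than fill them one at a time, but the idea is the same: a block address comes in, and a memory address goes out.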

Gigabyte has told us that the initial production run of the i-RAM will be only 1,000 cards, available in August, at a street price of around $150. We would expect that price to drop over time, but it's definitely a lot higher than what we were told at Computex ($50).

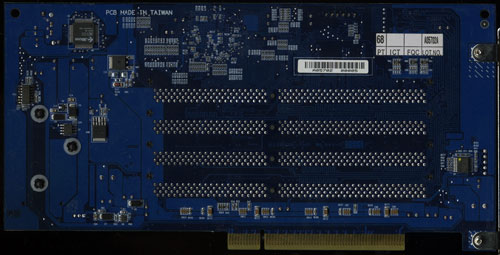

The i-RAM is outfitted with four 184-pin DIMM slots that will accept any DDR DIMM. The memory controller in the Xilinx FPGA operates at 100MHz (DDR200) and can actually support up to 8GB of memory. However, Gigabyte says that the i-RAM card itself only supports 4GB of DDR SDRAM. We didn't have any 2GB unbuffered DIMMs to try in the card to test its true limit, but Gigabyte tells us that it is 4GB.
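As a rough back-of-the-envelope check, the sketch below compares the peak bandwidth of a 64-bit DDR200 memory interface with the payload rate of the SATA link. The 1.5Gbps line rate and 8b/10b encoding are our assumptions for a first-generation SATA interface; the function names are made up for this example.

```python
def ddr_peak_mb_per_s(clock_mhz, bus_bits):
    """Peak DDR bandwidth in MB/s: two transfers per clock, bus_bits/8 bytes each."""
    return clock_mhz * 2 * bus_bits // 8


def sata_payload_mb_per_s(line_rate_gbps):
    """Payload rate in MB/s after 8b/10b encoding (10 line bits per data byte)."""
    return line_rate_gbps * 1000 / 10


ddr = ddr_peak_mb_per_s(100, 64)   # 100MHz clock, 64-bit controller
sata = sata_payload_mb_per_s(1.5)  # assumed first-generation SATA link
print(f"DDR200 peak: {ddr} MB/s, SATA payload: {sata:.0f} MB/s")
```

Under these assumptions, the memory side has roughly ten times the bandwidth of the SATA link, so even slow DDR200 operation should not be the limiting factor for transfer rate.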

The Xilinx FPGA also won't support ECC memory, although we have mentioned to Gigabyte that a number of users have expressed interest in having ECC support in order to ensure greater data reliability.

Although the i-RAM plugs into a conventional 3.3V 32-bit PCI slot, it doesn't use the PCI connector for anything other than power. All data is transferred via the Xilinx chip and over the SATA connector directly to your motherboard's SATA controller, just like any regular SATA hard drive.

With SATA as the only data interface, Gigabyte made the i-RAM infinitely more useful than software-based RAM drives because to the OS and the rest of your system, the i-RAM appears to be no different than a regular hard drive. You can install an OS, applications or games on it, you can boot from it and you can interact with it just like you would any other hard drive. The difference is that it is going to be a lot faster and also a lot smaller than a conventional hard drive.

The size limitations are pretty obvious, but the performance benefits really come from the nature of DRAM as a storage medium vs. magnetic hard disks. We have long known that modern day hard disks can attain fairly high sequential transfer rates of upwards of 60MB/s. However, as soon as the data stops being sequential and is more random in nature, performance can drop to as little as 1MB/s. The reason for the significant drop in performance is the simple fact that repositioning the read/write heads on a hard disk takes time as does searching for the correct location on a platter to position them. The mechanical elements of hard disks are what make them slow, and it is exactly those limitations that are removed with the i-RAM. Access time goes from milliseconds (1 x 10^-3) down to nanoseconds (1 x 10^-9), and transfer rate doesn't vary, so it should be more consistent.
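A simple model shows why access time dominates random workloads: if every request pays one access delay plus its own transfer time, small requests spend almost all of their time waiting. The request size (4KB) and the access times (4 ms and 100 ns) below are illustrative assumptions, not measurements, and the 60MB/s rate is reused for both devices purely to isolate the effect of access time.

```python
def effective_mb_per_s(request_bytes, access_time_s, sequential_mb_per_s):
    """Throughput when every request pays one access delay plus its transfer time."""
    transfer_s = request_bytes / (sequential_mb_per_s * 1e6)
    return request_bytes / (access_time_s + transfer_s) / 1e6


REQUEST = 4096  # assumed 4KB random reads

# Assumed ~4 ms mechanical access time for the hard disk.
hdd = effective_mb_per_s(REQUEST, 4e-3, 60)
# Assumed ~100 ns access time for a DRAM-backed device on the same link.
dram = effective_mb_per_s(REQUEST, 100e-9, 60)

print(f"hard disk: {hdd:.2f} MB/s, DRAM-backed: {dram:.2f} MB/s")
```

With these inputs, the hard disk lands at around 1MB/s while the DRAM-backed device stays close to its full link rate. A drive with a longer access time would fare worse still.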

Since it acts as a regular hard drive, theoretically, you can also arrange a couple of the i-RAM cards together in RAID if you have a SATA RAID controller. The biggest benefit to a pair of i-RAM cards in RAID 0 isn't necessarily performance, but now you can get 2x the capacity of a single card. We are working on getting another i-RAM card in house to perform some RAID 0 tests. However, Gigabyte has informed us that presently, there are stability issues with running two i-RAM cards in RAID 0, so we wouldn't recommend pursuing that avenue until we know for sure that all bugs are worked out.
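For readers unfamiliar with how RAID 0 combines two drives into one larger volume, the sketch below shows the usual striping arithmetic. The stripe size of 128 blocks and the function names are assumptions for illustration; an actual SATA RAID controller chooses its own stripe size.

```python
def raid0_locate(logical_block, stripe_blocks, devices=2):
    """Return (device index, block on that device) for a RAID 0 logical block."""
    stripe, offset = divmod(logical_block, stripe_blocks)
    device = stripe % devices  # stripes alternate across devices
    device_block = (stripe // devices) * stripe_blocks + offset
    return device, device_block


def raid0_capacity_gb(card_gb, devices=2):
    """Usable capacity of a RAID 0 set built from equal-sized cards."""
    return card_gb * devices


print(raid0_locate(130, stripe_blocks=128))  # falls in the second stripe
print(raid0_capacity_gb(4))                  # two 4GB cards
```

Because consecutive stripes alternate between the cards, no capacity is spent on redundancy, which is where the 2x figure comes from. It also means that losing either card loses the whole volume.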

133 Comments


RobRedman - Saturday, October 7, 2006 - link

I must have 50 sticks of unused PC100 and PC133 SDRAM. Something like this for old RAM would be a value (for me).

Does anyone know of an adapter that would take, let's say, 10 sticks of SDRAM and give me an IDE or USB connector?

ITLisa - Saturday, October 1, 2005 - link

I spent a little time looking for this and not even the manufacturer lists it on their site.

mtownshend - Tuesday, March 14, 2006 - link

It was on Gigabyte's site when I looked today and over the past month while making the decision to get it. There's been a lag while retailers get rid of the v1.2 and Gigabyte sends out the v1.3 cards.

I just got one of the new ones and will use it to run my FTP server application. I have 14 or 16 drives connected (6TB) to the server and previous reviews by others have pointed to the performance increase from the FTP app. searching and retaining the disk locations.

Since I just got it, I am not 100% sure of the reality, and the real benefit will be realized by the client seeking a file from the server. Using it for MS SQL Server is also a great idea. Other than that I haven't heard any real world uses, I mean users might be able to load Doom faster, but this device seems to be a bit expensive for most.

Also this card is bigger in area than most video cards, so if your box is crammed w/ wires or liquid pumps and reservoirs, the logistics of getting, say, 2 video cards and the RamDisk in a midsized case are pretty absurd. Plus you need a fan or 2 in there to swirl around the heat generated by 3 heat monger cards... There goes more money in a bigger case.

For the general user, I would go with the new Raptor (the clear one) if you want to compromise speed, size and cost on a rational level.

Peace all

lrohrer - Tuesday, September 13, 2005 - link

The simplest and best use of the i-RAM is to store the "Temp DB" for SQL Server. MS SQL Server constantly writes to this database in most larger installations. It is temporary and by definition does not need to exist after reboot. (Alas SQL Server does not/CAN NOT keep this database in RAM) So on reboot a script will need to verify that it is still formatted and the appropriate file system/files exist -- copied from the hard disk. SQL Server is fussy about hardware so it masquerading as a disk is perfect. In an hour on Google I can't find someone to sell it to my boss to try it out. sigh.

My prediction is a 5-10% boost to overall throughput on a SQL Server installation with lots of "temp DB" activity -- well worth the cost of the RAM chips.

brandonbates - Friday, August 5, 2005 - link

I've been keeping an eye on ram disks for a little while now, but other than software they are just too expensive. The earlier post that had links to them (both flash and DRAM based disks) was the same stuff that I found. More recently I had been relieved by the availability of 64bit Systems and OSes with more slots/address space for ram and thus bigger ram disks. But it still really burned me that someone couldn't make something really cheap that didn't rely on a big fat motherboard (which still has only so many slots, but admittedly faster). This qualifies. The second I heard about this while reading Computex stuff I said to myself: Self, this thing only takes power from the PCI bus, therefore it would be a trivial thing to buy some PCI slots (like 8) and wire them for power, then RAID or JBOD these together and get one heck of a database drive at a fraction of the cost of other solutions, and scalable at that (I can start out with 2 or 3).

I also think it would be a nice (and easy) thing for them to put it in a 3.5" form factor with both molex and/or 3.3v standby loopthrough (through a pci dummy card or something). And yes 8 slots would be much more saleable, understanding that the mem controller may not support that (though some sort of bank switch would work since you have time to wait for the SATA or SATA2 bus, 3.5 form factor would get difficult with 8 slots though).

The situation that got me looking at this stuff is I have a mysql database (tested others as well) that has to do a table scan each time I do a query since it is a '%something%' query (loading web logs and running user demanded reports on them) The database is at around 4 gigs already (about 6 months worth, including 0.5GB packed indexes) and the report takes about two minutes (2 15k drives in RAID 1, not bad) But I still have to run it at night and make a summary table. (maybe a database with multithreaded partitions or grid would do it, but how much does that cost???!?) Anyway, my 2 cents (sorry for the long post). I'd really, really like to know what benchmarks say the latency for this thing is.

Zar0n - Tuesday, August 2, 2005 - link

For a mass product, Gigabyte needs to add:

- 8 DIMM slots
- SATA2

Let's hope they do it fast...

ybbor - Friday, July 29, 2005 - link

What would happen if you stored a SQL database on the drive... wonder what it would do for database performance benchmarks. You would probably back up to HD every night, or cluster with db on HD for data integrity.

Chadder007 - Friday, July 29, 2005 - link

They should have gone with a SATA II capable interface instead of regular SATA since it has much more bandwidth sitting there waiting to be used. Also the 4 gig of mem limit hurts it a tad too.

optiguy - Friday, July 29, 2005 - link

Now just as a thought to scary uses for the i-RAM. Law enforcement will hate these things. Pedophiles will have instant access to wiping their files without a trace, terrorists won't have to worry about the good guys being able to track their files.

mindless1 - Friday, July 29, 2005 - link

Nope, pedos have a compulsive urge to collect stuff, 4GB wouldn't even come close. Besides, if the pedo was thinking that far in advance, there are plenty of already-existent technologies far more secure. When the cops come busting down someone's door, do you think they'll say something like "freeze, don't move, unless you prefer to go over to your computer and wipe data!"? Then again, general ignorance about the need to keep the evidence battery charged could be an issue.