Linux Database Server CPU Comparison

by Johan De Gelas on June 17, 2005 12:05 AM EST - Posted in IT Computing

Words of thanks

A lot of people gave us assistance with this project, and we would like to thank them:

David Van Dromme, Iwill Benelux Helpdesk (http://www.iwill-benelux.com)

Ilona van Poppel, MSI Netherlands (http://www.msi-computer.nl)

Frank Balzer, IBM DB2/SUSE Linux Expert

Jasmin Ul-Haque, Novell Corporate Communications - SUSE LINUX

Matty Bakkeren, Intel Netherlands

Trevor E. Lawless, Intel US

Larry D. Gray, Intel US

Markus Weingartner, Intel Germany

Nick Leman, MySQL expert

Bert Van Petegem, DB2 Expert

Ruben Demuynck, Vtune and OS X expert

Yves Van Steen, developer Dbconn

Damon Muzny, AMD US

I would also like to thank Lode De Geyter, Manager of the PIH, for letting us use the infrastructure of the Technical University of Kortrijk to test the database servers.

Benchmark configuration

To ensure that our databases were stable and reliable, we followed the guidelines of SUSE and IBM. For example, DB2 is only certified to run on the SLES versions of SUSE Linux; in theory, you cannot run it on just any Linux distribution. We also used the MySQL version (4.0.18) that came with the SUSE SLES 9 CDs, which was certified to work on our OS.

Network performance wasn't an issue. We used a direct Gigabit Ethernet link between client and server. On average, the server received 4 Mbit/s and sent 19 Mbit/s of data, with a peak of 140 Mbit/s, far below the limits of Gigabit. The disk system wasn't overly challenged either: up to 600 KB of reads and at most 23 KB/s of writes. You can read more about our MySQL and DB2 test methods.
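To put the network figures in perspective, the short Python sketch below expresses each measured rate as a share of the link's capacity. It assumes the nominal 1000 Mbit/s Gigabit Ethernet rate and ignores protocol overhead; the function name is ours and purely illustrative.

```python
# Nominal Gigabit Ethernet capacity in Mbit/s (assumption: protocol overhead ignored).
GIGABIT_MBIT_S = 1000.0

def link_utilization(rate_mbit_s, capacity_mbit_s=GIGABIT_MBIT_S):
    """Return the fraction of the link capacity used by a given traffic rate."""
    if capacity_mbit_s <= 0:
        raise ValueError("capacity must be positive")
    return rate_mbit_s / capacity_mbit_s

# Traffic figures reported in the text, in Mbit/s.
measured = {"average received": 4, "average sent": 19, "peak": 140}

for label, rate in measured.items():
    print(f"{label}: {rate} Mbit/s = {link_utilization(rate):.1%} of the link")
```

Even the 140 Mbit/s peak occupies only about 14% of the nominal link capacity, which is why the network can be ruled out as a bottleneck here.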

Software:

IBM DB2 Enterprise Server Edition 8.2 (DB2ESE), 32 bit and 64 bit

MySQL 4.0.18, 32 and 64 bit, MyISAM and InnoDB engine

SUSE SLES 9 (SUSE Enterprise Edition), Linux kernel 2.6.5, 64 bit

Hardware

We'll discuss the different servers that we tested in more detail below. Here is the list of the different configurations:

Intel Server 1:

Dual Intel Nocona 3.6 GHz 1 MB L2-cache, 800 MHz FSB - Lindenhurst Chipset

Dual Intel Irwindale 3.6 GHz 2 MB L2-cache, 800 MHz FSB - Lindenhurst Chipset

Intel® Server Board SE7520AF2

8 GB (8x1024 MB) Micron Registered DDR-II PC2-3200R, 400 MHz CAS 3, ECC enabled

NIC: Dual Intel® PRO/1000 Server NIC (Intel® 82546GB controller)

Intel Server 2:

Dual Xeon DP 3.06 GHz 1 MB L3-cache, Dual Xeon 3.2 GHz 2MB L3-cache

Dual Xeon 3.2 GHz

Intel SE7505VB2 board - Dual DDR266

2 GB (4x512 MB) Crucial PC2100R - 250033R, 266 MHz CAS 2.5 (2.5-3-3-6)

NIC: 1 Gb Intel RC82540EM - Intel E1000 driver.

Intel Server 3:

Pentium-D EE 840

Intel SE7505VB2 board - Dual DDR266

2 GB (4x512 MB) Crucial PC2100R - 250033R, 266 MHz CAS 2.5 (2.5-3-3-6)

NIC: 1 Gb Intel RC82540EM - Intel E1000 driver.

Opteron Server 1: Dual Core Opteron 875 (2.2 GHz), Dual/Single Opteron 850, Dual/Single Opteron 848

Iwill DK8ES Bios version 1.20

4 GB: 4x1 GB Transcend (Hynix 503A) DDR400 - (3-3-3-6)

NIC: Broadcom BCM5721 (PCI-E)

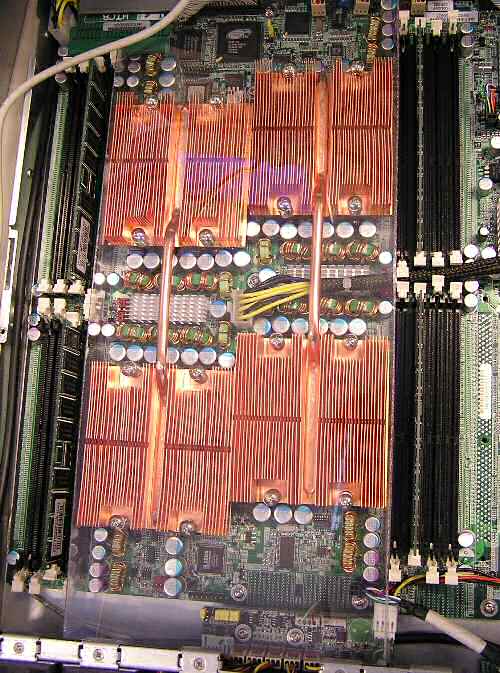

Quad Opteron Server 2: Iwill H4103: Quad Opteron 844, 848

Iwill H4103

4-8 GB: 4-8x1 GB Transcend (Hynix 503A) DDR400 - (3-3-3-6)

NIC: Intel 82546EB (PCI-E)

Opteron Server 3: Dual Core Opteron 875 (2.2 GHz), Dual/Single Opteron 848

MSI K8N Master2-FAR

4 GB: 4x1 GB Transcend (Hynix 503A) DDR400 - (3-3-3-6)

NIC: Broadcom BCM5721 (PCI-E)

Opteron Server 4: AMD Quartet: Dual Opteron 848, Quad 848

Quartet motherboard, Zildjian personality board, Tobias backplane board and Rivera power distribution board.

Quad configurations: 4 GB: 8x512 MB Infineon PC2700 Registered, ECC enabled

Dual configurations: 2 GB: 4x512 MB Infineon PC2700 Registered, ECC enabled

NIC: Broadcom NetXtreme Gigabit

Client Configuration: Dual Opteron 850

MSI K8T Master1-FAR

4x512 MB Infineon PC2700 Registered, ECC enabled

NIC: Broadcom 5705

Shared Components

1 Seagate Cheetah 36 GB - 15000 RPM - 320 MB/s

Maxtor 120 GB DiamondMax Plus 9 (7200 RPM, ATA-100/133, 8 MB cache)

Software

Vtune for Windows version 7.2, Vtune for Linux remote agent 3.0

Code Analyst for Linux 3.4.8

Code Analyst for Windows 2.3.4

More about the servers in this test

Although our main focus is the database server performance of the different AMD and Intel platforms, allow me to introduce some of the motherboards and server barebones that we used in this test.

Iwill H4103

The amount of power that the Iwill H4103 can pack in a 1U rack mounted case is nothing short of amazing. As you can see, there are no fewer than four Opterons in this pizza box, which also allows you to put up to 32 GB of DDR RAM in there. Even more impressive is the inclusion of two redundant 700 Watt power supplies.

What about cooling? Well, this is a server of course, and a whole battery of 10,000 RPM fans keeps the copper heat sinks cool. In our poorly cooled lab, temperatures easily rose to 30°C and higher, but the Iwill H4103 heat sinks hardly became warm under full load. According to Iwill, you should be able to use dual core Opterons in this pizza box, but we haven't been able to verify this, as the necessary BIOS version had yet to arrive.

The H4103 impressed us as a very stable and fast machine. The only thing left that would make this ultra compact quad Opteron machine complete is the integration of a SCSI controller or at least a very good SATA controller.

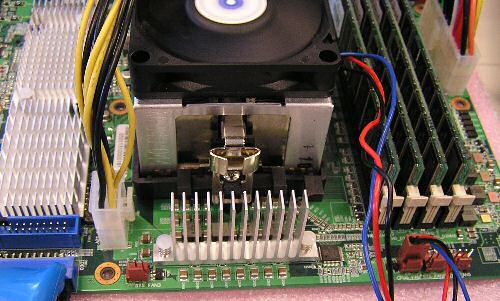

Iwill DK8ES

The Iwill DK8ES is a server board based on NVIDIA's nForce 4 2200 Professional chipset. It offers two PCI Express x16 slots (one running in x2 mode), three 64-bit PCI-X slots (133/100/66 MHz) and four SATA ports, and it integrates an ATI RageXL VGA controller. Two Broadcom BCM5721 PCI-E Gigabit Ethernet controllers are connected to a PCI-E port.

The board proved to be fully stable during 6 weeks of heavy database server testing. The only problem was that Linux didn't like running dual core Opterons on this board. A BIOS update (to 1.20) made the Opteron 275 and 875 run stably on this board, but Linux (kernel 2.6.12) still didn't use both cores: both were reported, but only one was used. We suspect that Iwill may have to work out a BIOS issue, or that some of the NVIDIA Linux drivers still need some tuning.
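One way to confirm a symptom like this is to compare the cores that the kernel reports with the cores that actually do work under load. The Python sketch below is our own illustration, not the method used for this article: it parses two snapshots of the text of Linux's /proc/stat and computes how busy each core was in between. The helper names and the sample counters are hypothetical.

```python
def parse_cpu_times(stat_text):
    """Map each 'cpuN' line of /proc/stat text to its list of tick counters."""
    times = {}
    for line in stat_text.splitlines():
        fields = line.split()
        # Per-core lines look like 'cpu0 user nice system idle ...';
        # the aggregate line is named plain 'cpu' and is skipped.
        if fields and fields[0].startswith("cpu") and fields[0][3:].isdigit():
            times[fields[0]] = [int(v) for v in fields[1:]]
    return times

def busy_fraction(before, after):
    """Return the busy share (0..1) of each core between two snapshots."""
    result = {}
    for cpu, end in after.items():
        start = before.get(cpu)
        if start is None:
            continue
        deltas = [e - s for s, e in zip(start, end)]
        total = sum(deltas)
        idle = deltas[3]  # fourth counter is idle time; iowait counts as non-idle here
        result[cpu] = 0.0 if total <= 0 else 1.0 - idle / total
    return result

# Hypothetical snapshots: cpu0 works, cpu1 is reported but stays idle.
SAMPLE_BEFORE = "cpu 300 0 100 1600\ncpu0 200 0 50 750\ncpu1 100 0 50 850\n"
SAMPLE_AFTER = "cpu 1200 0 200 2600\ncpu0 1100 0 150 750\ncpu1 100 0 50 1850\n"

usage = busy_fraction(parse_cpu_times(SAMPLE_BEFORE), parse_cpu_times(SAMPLE_AFTER))
for cpu, share in sorted(usage.items()):
    print(f"{cpu}: {share:.0%} busy")
```

On a live system, the two snapshots would come from reading /proc/stat a few seconds apart while the benchmark is running. A core that is listed but stays near 0% busy under a multi-threaded load matches the behavior described above.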

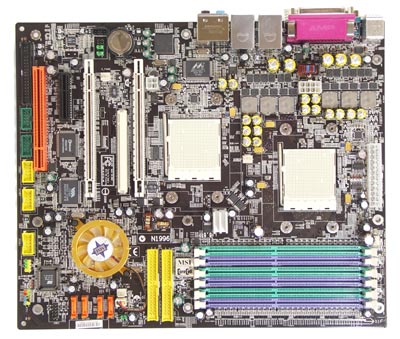

MSI's K8Master-FAR2

MSI sent us a completely different, relatively cheap workstation board that should enable very compact quad core (two dual cores) Opteron machines. The MSI K8Master-FAR2 is based on the NVIDIA nForce 4 Pro chipset.

In order to fit two Opteron sockets, one 32-bit/33 MHz PCI slot, one PCI Express x4 slot and two PCI Express x16 slots (SLI mode supported) on a standard ATX board, MSI didn't give the second CPU local memory. Despite this limitation, the MSI K8Master-FAR2 proved to be an excellent performer in our database server tests.

Six memory slots allow up to 12 GB of RAM, not bad for such a compact board.

At the end of the tests, the MSI K8Master-FAR2 (BIOS version 1.0) had proved to be a very capable board. Supported by our Vantec 470W power supply, it had no trouble at all with two 875 Opterons running heavy database server tests for more than 3 weeks.

45 Comments

linuxnizer - Tuesday, July 19, 2005 - link

Late contribution... The article mentions that Linux didn't work well with AMD Dual Core. The reason could be this:

http://www.iwill.net/inews.asp?n_id=35

it says:

NVIDIA CK804 does not support dual core under Linux yet, only under Microsoft Windows.

Illissius - Saturday, June 18, 2005 - link

Nice article. I'd also be interested in PostgreSQL, being the "other" major open source database... specifically, whether it's any better at scaling with multiple CPUs. (Not that I have any practical use for this information, I'm just curious.)

Viditor - Saturday, June 18, 2005 - link

Seriously, mickyb and elmo may be correct about the Intel compilers (I frankly don't have a clue what's used in most shops)... The real problem is that it's a virtual impossibility to create a "level playing field", but I have to say to the critics of the article that Johan has done a stellar job of coming as close as possible!

Viditor - Saturday, June 18, 2005 - link

"They aren't testing compilers"

Oh sure...just throw REALITY into the mix why don't you...!

;-)

Icehawk - Saturday, June 18, 2005 - link

They aren't testing compilers.

Viditor - Saturday, June 18, 2005 - link

mickyb - Thanks for the input! Fair enough...maybe Johan could use both the Pathscale compiler (which is optimized highly for Opteron) and the Intel optimized compiler on his next series of tests?

mickyb - Saturday, June 18, 2005 - link

I disagree with the comment that a large number of people don't use the Intel compiler. I (other developers and IT shops) only use Intel compilers for Linux. It is the fastest one out there for x86 and Itanium.

If you are running a large database that requires a large server (compared with a desktop loaded with RAM to run a personal blog site) like this article is testing, you will be setting up the environment with a trained IT professional that will use the compiler that is fast and stable.

When we build our product for all the UNIX platforms, we always use the vendor compiler instead of gnu. gnu works great and is free, but it is not optimized nearly as much.

This is like saying the same audience won't recompile Linux on the platform they are going to install it on. This is the first thing you should do....and with an Intel compiler. There should be no real reason why one vendor Linux is faster than the others except for compile options and loaded modules. You cannot run Linux out of the box, it doesn't come in a box where I get it. :)

DonPMitchell - Saturday, June 18, 2005 - link

We need to see TPC-C benchmark results for MySQL and other new database systems. Why won't they step up and allow themselves to be compared to the major commercial systems?

Viditor - Saturday, June 18, 2005 - link

ElMoIsEviL - "as much as I am un-biased"

C'mon mate...anybody who has read your posts knows you're heavily biased towards Intel, just as people who have read mine know that I am biased towards AMD. The important thing is to try and set aside the bias to look at things from both sides...I do try, but admittedly don't ALWAYS succeed. :-)

I imagine you probably posted before you read the explanation of what a query cache is...understandable.

As to not using an Intel specific compiler, I suppose that if it HAD been used I would be complaining as well. We have to rely on Johan and Anand (who frankly know a Hell of a lot more about this than either of us) to choose based on what the market actually uses...if you can cite impartial industry sources that show otherwise, I'm sure we all (especially the AT staff) would love to see them.

I do know that over the years, Johan and Anand have shown themselves to be quite unbiased in their articles (you should go read some of them on Aces as well)!

There are certainly things that I could pick apart as well..e.g. when he states

"In the second half of 2004, already one million EM64T Xeons were shipped"

Yes they were shipped, but that doesn't mean they were sold. The majority of those shipments were probably to OEMs for inventory buildup. Remember that Intel had a huge inventory write-off at the same time, and this was most likely a shift in inventory.

Regardless, none of this has to do with the validity of the article, which is excellent and makes sense. If you think about it, it should have been expected...the only way for AMD to have increased their market share in servers is through performance. They certainly don't have the budget or marketing clout that Intel has!

S390 guy - Saturday, June 18, 2005 - link

About ISAM and DB/2... ISAM (Indexed Sequential Access Method) is NOT a database! It has no referential integrity nor rollback/commit features (although those can be activated on mainframe). ISAM was popular on mainframe when there wasn't any database (or rather when database was a too massive application to run!) and even there they were superseded by VSAM. They're not much different from DOS random access files (an index file pointing to the relative record number on the main file).

And it's no surprise that DB/2 scales well: mainframes rarely feature a single CPU, at least as far as I know.... IBM have had some 20 years to practice on multi-cpu machines!