Original Link: https://www.anandtech.com/show/2803

In gaming, input lag is defined as the delay between when a user does something with an input device and when that action is reflected on the monitor.

The definition is straightforward, but the reality of input lag is much more subtle than may readily be apparent. There are many smaller latencies that contribute to the overall whole of input lag and understanding the full situation may prove beneficial to gamers everywhere.

The first subtlety is that there will always be input lag. Input lag is an unavoidable reality that can only be minimized and never eliminated. It will always take some amount of time for input data to get to the software and it will always take some amount of time for the software to use that data to display a frame of animation on the monitor. Keeping this total time as low as possible is a key mission of hardcore twitch gamers out there.

This article will step through all the different contributors to input lag, and we'll give some general estimates on the impact of each different contributor. Exact numbers will vary widely with different hardware and software combinations. But knowing where to focus when optimizing for input latency should help those who are interested.

After drilling down into the causes of input latency, we will provide a few examples of different hardware and settings in our lab. The extra twist is that we will be evaluating actual input latency using a high speed camera to count frames between input activation and monitor response. We'll be looking at three different games with multiple settings on both CRT and LCD monitors.

Reflexes and Input Generation

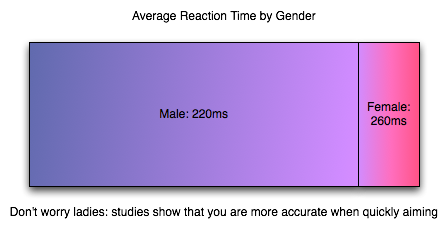

Human Reaction Time

The impact of input lag is compounded by what goes on before we even react. As soon as an image requiring a response hits your eyes, it will take somewhere between 150ms and 300ms to translate that into action. Average human response time to visual stimulus is about 200ms (0.2 seconds) for young adults, which is a long time compared to how quickly games can respond to input. But with this built-in handicap, when fast response to what's happening on screen is required, it is helpful to claim every advantage possible (especially for relative geezers like us).

Human response time is mitigated by the fact that we are also capable of learning, anticipation and extrapolation. In "practicing," a.k.a. playing a game, we can learn to predict future frames from current state for very small time slices to compensate for our response time. Our previous responses to input and the results that followed can also factor in to our future responses. This is part of the learning curve, especially for FPS games. When input lag is below a reasonable threshold, we are able to compensate without issue (and, in fact, do not perceive the input lag at all).

The larger input lag gets, the harder it gets to do something like aim at a moving target. Our expectation of the effect our input should have is different from what we see. This gets into something that combines reaction time and proprioception (reception of self produced stimulus). I'm not a psychologist, but I would love to see some studies done on how much input lag people can compensate for, where it starts to be uncomfortable (where it just "feels" wrong) and when it becomes an obviously visible phenomenon. In digging around the net, I've seen a few game developers conjecture that the threshold is about 100 milliseconds, but I haven't found any actual data on the subject. At the same time, 100 milliseconds (or maybe something like 1/2 reaction time?) seems a pretty reasonable hypothesis to me.

The Input Pipeline

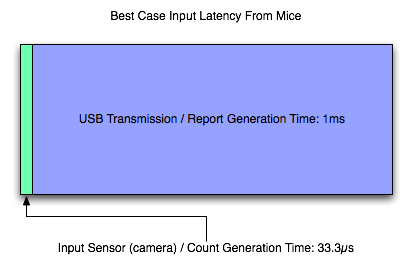

As it is key in most games, we'll examine the case of the mouse when it comes to input. As soon as a mouse is moved, we have a delay. The mouse must begin by detecting this movement. Sorting out how responsive a mouse is these days is incredibly clouded by horrendous terminology. As understanding how a mouse works is important in grokking its impact on input lag, we'll dissect Logitech's specs and try to get some good information on exactly what's going on.

There are four key numbers in the reported specifications of Logitech mice we'll look at: megapixels/second, maximum speed, DPI, and reports/second. For the Logitech G9x high end gaming mouse, these are: 9 MP/s, 150 inches/second, 5000 DPI, and 1000 reports/second. Other gaming and good quality mice can do 500 to 1000 reports/second and have lower DPI and MP/s stats.

The first stat, megapixels/second, is important in how fast the mouse sensor itself can collect movement data. Optical and laser mice detect movement by taking pictures of the surface they are on and comparing the difference in images many times every second. To really understand how fast the mouse takes pictures (and thus how fast it can detect and calculate movement in units called "counts"), we would need to know how many pixels per frame the image is. Our guess is that it can't be larger than 17x17 based on its maximum speed rating (though it might be more like 12x12 if it needs to generate two frames for every count rather than reusing frames from the previous calculation). It'd be great if they listed this data anywhere, but we are left guessing based on other stats at this point.

Next up is DPI, or dots per inch: 5000 for the G9x. DPI is sort of a misrepresentation as the real specification should be in CPI (counts per inch). As it is, the number can be considered maximum DPI if each count moves the cursor one pixel (or dot). Under MS Windows, with no ballistics applied at the default pointer speed, DPI = CPI. Decreasing pointer speed means moving one dot for more than one count, and increasing pointer speed means moving more than one dot for every count. Of course with ballistics, talking about DPI as related to the mouse doesn't make any sense: moving the mouse faster or slower changes the number of dots moved per count dynamically. Because of this, we'll talk about CPI for accuracy's sake, and consider that mouse manufacturers intend to use the terms interchangeably (despite the fact that they are not).

CPI is the number of steps the mouse can count within one inch; 1 / CPI inches is the smallest distance the mouse is able to measure as a movement. The full benefit of a high definition mouse is realized when one count is less than or equal to one "dot," which is possible in games (with sensitivity sliders) and in Windows if you decrease your mouse speed (though going to something with an odd cadence could cause problems).

Thus, when you tell your 5000 "DPI" mouse to run at 200 "DPI", it would be nice if it still reported 5000 CPI and allowed the driver to handle scaling the data down (or performing ballistics on raw data). For this example, we would only move the cursor one dot (one unit on the screen) every 25 counts. But the easy way out is to maintain a 1:1 ratio of counts to dots and drop your actual counts per inch down to 200. This provides no accuracy advantage (though with a fixed sensor speed it does increase maximum velocity and acceleration tolerance). And again it would be helpful if mouse makers could actually tell us what they are doing.
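To make the count-to-dot scaling concrete, here is a minimal sketch of the "nice" case described above, where the mouse keeps reporting at its native CPI and software scales the result down. The function names are our own, and the integer truncation is an assumption for illustration; a real driver would carry the remainder over to the next report rather than dropping it.

```python
def counts_per_dot(native_cpi: int, effective_dpi: int) -> float:
    """How many raw counts it takes to move the cursor one dot."""
    return native_cpi / effective_dpi


def dots_moved(counts: int, native_cpi: int, effective_dpi: int) -> int:
    """Dots of cursor movement for a given number of raw counts.

    Simplified model: partial dots are truncated instead of being
    accumulated for the next report.
    """
    return counts * effective_dpi // native_cpi


print(counts_per_dot(5000, 200))    # 25.0 counts for every dot
print(dots_moved(5000, 5000, 200))  # one inch of travel -> 200 dots
```

With these numbers, 24 counts move the cursor zero dots and the 25th count produces the first dot, which is the 25:1 ratio mentioned above.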

Since the Logitech G9x can do 150 inches/second maximum movement speed at 200 CPI, we know how many counts it must generate per second (though Logitech doesn't make it clear that the maximum speed and acceleration can only happen at the lowest CPI, it only makes sense with the math). The reported specifications indicate that the G9x can do about 30000 counts per second (150 inches in one second at 200 counts per inch). This is consistent with a 9 megapixel/second speed in that such a sensor could collect about 30000 17x17 frames every second based on this data.

After looking at all that, we can say that our Logitech G9x mouse is capable of detecting movement of between 1/5000th and 1/200th of an inch (depending on the selected CPI) about every 33.3 microseconds (these are 1/1000ths of a millisecond) after the movement happens. That's pretty freaking fast. Other mice can be much slower, but even cutting the speed in half won't hugely affect latency (though it will affect the maximum speed at which the mouse can be moved without problem).
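The sensor arithmetic above is easy to check. This sketch only restates the published G9x numbers; the assumption that the sensor captures exactly one square frame per count is ours (it is the same guess made in the text), not something Logitech documents.

```python
import math

MAX_SPEED_IPS = 150                # inches per second, from the G9x spec
LOWEST_CPI = 200                   # counts per inch at the lowest setting
SENSOR_PIXELS_PER_SEC = 9_000_000  # 9 megapixels per second

# Counts the mouse must generate each second at top speed.
counts_per_second = MAX_SPEED_IPS * LOWEST_CPI

# Assumption: one sensor frame per count, so this is the pixel budget per frame.
pixels_per_frame = SENSOR_PIXELS_PER_SEC / counts_per_second

# Side length of a square frame with that many pixels.
frame_side = math.sqrt(pixels_per_frame)

# Time between counts, in microseconds.
microseconds_per_count = 1_000_000 / counts_per_second

print(counts_per_second)                 # 30000
print(pixels_per_frame)                  # 300.0
print(round(frame_side, 2))              # 17.32
print(round(microseconds_per_count, 1))  # 33.3
```

A 17x17 frame is 289 pixels, which fits inside the 300 pixel budget, while 18x18 (324 pixels) does not.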

Once the mouse has generated a count (or several) we need to send that data to the computer over USB. Counts are aggregated into groups called reports. USB is limited to 1000 Hz polling, so the 1000 reports/second maximum of the G9x makes sense: USB limits the transmission rate here. For those interested, to actually achieve 150 inches per second at 200 CPI, the mouse would need to be able to send about 30 counts per report at 1000 reports per second. This seems reasonable, but it'd be great if someone with USB engineering experience could give us some feedback and let us know for sure.

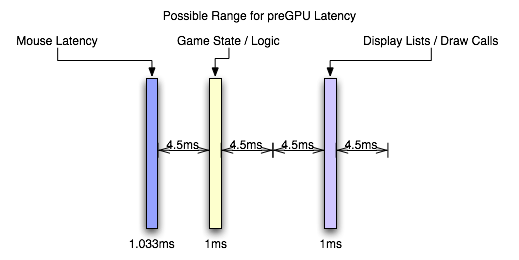

So, let's say that we've moved our mouse about a couple dozen microseconds before a report is sent. In this case, we've actually got to wait the whole millisecond for that data to be sent to the PC (because the count can't be generated fast enough to be included in the current report). So despite the very fast sensor in the mouse, we are transmission bound and our first "large" delay is on the order of single digit milliseconds. Other mice (like the Logitech G5 I'm using right now) may generate 500 reports per second, while the slowest speed we can expect is 127 reports/second. This can mean a 1ms - 8ms delay in input getting from the motion of the mouse to the computer.
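The wait for the next report is simple to estimate from the report rate. This is a minimal sketch with a function name of our own choosing; it gives the worst case, where movement happens just after a report has gone out.

```python
def report_interval_ms(reports_per_second: float) -> float:
    """Worst-case wait, in milliseconds, before movement is sent to the PC."""
    return 1000.0 / reports_per_second


for rate in (1000, 500, 127):
    print(rate, "reports/s ->", round(report_interval_ms(rate), 2), "ms")
```

On average the wait is about half of the worst case, since movement can begin at any point between two reports.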

Most gamers use halfway decent mice these days, so we can expect that latency is more like 2ms to 4ms for most wired USB mouse users and 1ms for gamers with higher end mice. This delay can't be cut down to anything less than 1ms until USB 2.0 is replaced by something faster. We'll ignore any cable (or any other wire) delay, as this will only add something on the order of nanoseconds to transmission time.

The input lag from a good mouse, on its own, is not perceivable to humans, but remember that this is all part of a larger picture. And now it's on to the software.

Parsing Input in Software and the CPU Limit

Before we get into software, for the sake of sanity, we are going to ignore context switching and we'll pretend that only the operating system kernel and the game are running and always get processor time exactly when they need it for as long as it's needed (and never need it at the same time). In real life desktop operating systems, especially on single core processors, there will be added delay due to process scheduling between our game and other tasks (which is handled by the operating system) and OS background tasks. These delays (in extreme cases called starvation) can be anywhere from a handful of nanoseconds up to the microsecond level on modern systems depending on process prioritization, what else is happening, and how the scheduler is implemented.

Once the mouse has sent its report over USB to the PC and the USB root hub receives the data, it is up to the OS (for our purposes, MS Windows) to handle the data next. Our report travels from the USB root hub over the system bus (southbridge through the northbridge to the CPU takes +/- some nanoseconds depending on load), is put on an input stack (in this case the HID (Human Interface Device) stack), and a Windows OS message (WM_INPUT) is generated to let any user space software monitoring raw mouse input know that new data has arrived. Software written to take full advantage of hardware will handle the WM_INPUT message by reading the appropriate data directly from the HID stack after it gets the message that data is waiting.

This particular part of the process (checking Windows messages and handling the WM_INPUT message) happens pretty fast and should be on the order of microseconds at worst. This is a hard delay to track down, as the real time this takes is dependent on what the programmer actually does. Latencies here are not guaranteed by either the motherboard chipset or Windows.

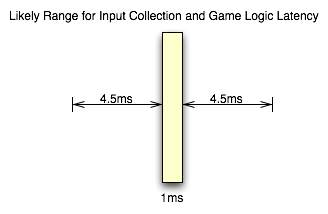

Once the software has the data (after at least 1ms plus some microseconds), it needs to do something with it. This is hugely variable, as developers can choose to handle input at any of a number of points in the process of updating the game state for the next frame. The thing that makes the most sense to me would be to run your AI based on the previous input data, step through any scripted actions, update physics per object based on last state and AI decisions, then get user data and update player state/physics based on previous state and current input.
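The ordering suggested above can be sketched as a single frame update. Everything here is hypothetical: the function, the state keys, and the toy "AI" and "physics" steps are placeholders of our own, not how any particular engine works. The only point is that input is sampled as late as possible, right before the player update.

```python
from typing import Callable, Dict, List


def update_frame(state: Dict, previous_input: Dict,
                 poll_input: Callable[[], Dict]) -> List[str]:
    """Run one simulation step and return the order in which stages ran."""
    order = []

    # 1. AI reacts to what the player did on the previous frame.
    state["ai_target"] = previous_input.get("player_pos", 0)
    order.append("ai")

    # 2. Scripted actions advance.
    state["tick"] = state.get("tick", 0) + 1
    order.append("scripts")

    # 3. Physics for non-player objects, based on last state and AI decisions.
    state["npc_pos"] = state.get("npc_pos", 0) + 1
    order.append("physics")

    # 4. Sample user input as late as possible.
    current_input = poll_input()
    order.append("input")

    # 5. Player state is updated from previous state plus the fresh input.
    state["player_pos"] = state.get("player_pos", 0) + current_input.get("dx", 0)
    order.append("player")

    return order


state = {}
print(update_frame(state, {"player_pos": 3}, lambda: {"dx": 5}))
```

Sampling input in step 4 rather than at the top of the frame means the time spent on AI, scripts, and physics does not count against the age of the input.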

There are cases or design decisions that may require getting user input before doing some of these other tasks, so the way I would want to do it might not be practical. This whole part of the pipeline can be quite long as highly intelligent AI and immersive physics (along with other game scripting and state updates) can require massive amounts of work. At the least we have lots of sorting, branching, and necessarily serial computations to worry about.

Depending on when input is collected and the depth and breadth of the simulation, we could see input lag increase up to several milliseconds. This is highly game dependent, but it isn't something the end user has any control over outside of getting the fastest possible CPU (and this still won't likely change things in a perceivable way as there are memory and system latencies to consider and the GPU is largely the bottleneck in modern games). Some games are designed to be highly responsive and some games are designed to be highly accurate. While always having both cranked up to 11 would be great, there are trade offs to be made.

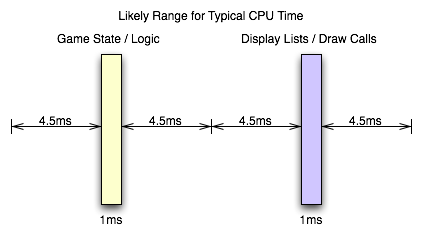

Unfortunately, that leaves us with a highly variable situation. The only way to really determine the input lag caused by game code itself is to profile the code (which requires access to the source to be done right) or ask a developer. But knowing the specifics isn't as necessary as knowing that there's not much that can be done by the gamer to mitigate this issue. For the purposes of this article, we will consider game logic to typically add somewhere between 1ms and 10ms of input lag in modern games. This considers things like decoupling simulation and AI threads from rendering and having work done in parallel among other things. If everything were done linearly things would very likely take longer.

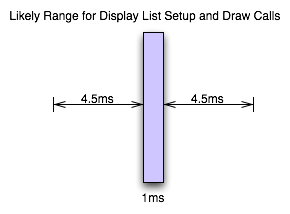

When we've got our game state updated, we then set up graphics for rendering. This will involve using our game state to update geometry and display lists on the CPU side before the GPU can start work on the next frame. The speed of this step is again dependent on the implementation and can take up a good bit of time. This will be dependent on the complexity of the scene and the number of triangles required. Again, while this is highly dependent on the game and what's going on, we can typically expect something between 1ms and 10ms for this part of the process as well if we include the time it takes to upload geometry and other data to the GPU.

Now, all the issues we've covered on this page go into making up a key element of game performance: CPU time. The total latency from front to back in this stage of a game engine creates a CPU limit on performance. When what comes after this (rendering on the GPU) takes less time than everything up to this point, we have hit the CPU limit. We can typically see the CPU limit when we drop resolution down to something ridiculously low on a high end card without seeing any real performance gain between that and the next highest resolution.

From the examples I've given here, if both the game logic and the graphics/geometry setup come in at the minimum latencies I've suggested should be typical, we could be CPU limited at as much as 500 frames per second. On the flip side, if both portions of this process push up to the 10ms level, we would never see a frame rate over 50 FPS no matter how fast the GPU rendered anything.
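The CPU limit figures above follow directly from the per-frame CPU time. This is a minimal sketch assuming the two CPU stages run back to back on one thread (a simplification, as multi-threaded engines overlap them); the function name is ours.

```python
def cpu_limited_fps(game_logic_ms: float, render_setup_ms: float) -> float:
    """Highest frame rate the CPU side can sustain, ignoring the GPU."""
    return 1000.0 / (game_logic_ms + render_setup_ms)


print(cpu_limited_fps(1, 1))    # 500.0 FPS at the low end of our estimates
print(cpu_limited_fps(10, 10))  # 50.0 FPS at the high end
```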

Obviously there is variability in games, and sometimes we see a CPU limit at less than 60 FPS even at the lowest resolution on the highest end hardware. Likewise, we can see framerates hit over 2000 FPS when drawing a static image (where game logic and display lists don't need to be updated) with a menu in front of it (like when a user hits escape in Oblivion with vsync off). And, again, multi-threaded software design on multi-core CPUs really muddies up the situation. But this is near enough to illustrate the point.

And now it's on to the portion of realtime 3D graphics that typically incurs the most input lag before we leave the computer: the graphics hardware.

Of the GPU and Shading

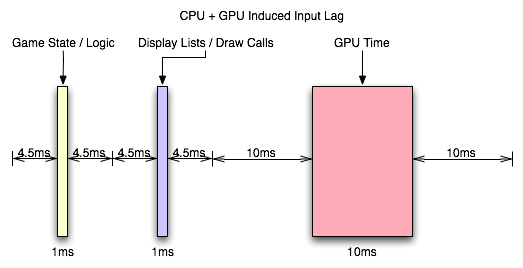

This is my favorite part, really. After the CPU has started sending draw calls to the graphics card, the GPU can begin work on actually rendering the frame containing the input that was generated somewhere in the vicinity of 3ms to 21ms ago depending on the software (and it would be an additional 1ms to 7ms for a slower mouse). Modern, complex games will tend to push up to the long end of that spectrum, while older games (or games that aren't designed to do a lot of realistic simulation like twitch shooters) will have a lower latency.
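The 3ms to 21ms figure is just the sum of the per-stage estimates given so far. The sketch below tallies them as (minimum, maximum) pairs; the stage labels and the helper function are ours, and the numbers are the rough estimates from this article rather than measurements.

```python
from typing import Dict, Tuple

# (min_ms, max_ms) estimates for each stage before the GPU starts work.
STAGES_MS: Dict[str, Tuple[float, float]] = {
    "mouse report at 1000 reports/s": (1, 1),
    "game logic": (1, 10),
    "render setup": (1, 10),
}

# Extra delay for a mouse with a slower report rate.
SLOW_MOUSE_EXTRA_MS = (1, 7)


def total_range(stages: Dict[str, Tuple[float, float]]) -> Tuple[float, float]:
    """Add up the best-case and worst-case latency across all stages."""
    low = sum(lo for lo, _ in stages.values())
    high = sum(hi for _, hi in stages.values())
    return low, high


low, high = total_range(STAGES_MS)
print(low, high)  # 3 21
print(low + SLOW_MOUSE_EXTRA_MS[0], high + SLOW_MOUSE_EXTRA_MS[1])  # 4 28
```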

Again, the actual latency during this stage depends greatly on the complexity of the scene and the techniques used in the game.

These days, geometry processing and vertex shading tend to be pretty fast (geometry shading is slower but less frequently used), thanks to features like instancing and the fact that the majority of detail is introduced via the pixel shader (which is really a fragment shader, but we'll dispense with the nit picking for now). If the use of tessellation catches on after the introduction of DX11, we could see even less actual time spent on geometry as the current level of detail could be achieved with fewer triangles (or we could improve quality with the same load). This step could still take a millisecond or two with modern techniques.

When it comes to actual fragment generation from the geometry data (called rasterization), the fixed function hardware and early z / z culling techniques used make this step pretty fast (yet this can be the limiting factor in how much geometry a GPU can realistically handle per frame).

Most of our time will likely be spent processing pixel shader programs. This is the step where every pin point spot on every triangle that falls behind the area of a screen space pixel (these pin point spots are called fragments) is processed and its color determined. During this step, texture maps are filtered and applied, and work is done on those textures based on things like the fragment's location, the angle of the underlying triangle to the screen, and constants set for the fragment. Lighting is also part of the pixel shading process.

Lighting tends to be one of the heaviest loads in a heavily loaded portion of the pipeline. Realistic lighting can be very GPU intensive. Getting into the specifics is beyond the scope of this article, but this lighting alone can take a good handful of milliseconds for an entire frame. The rest of the pixel shading process will likely also take multiple milliseconds.

After it's all said and done, with the pixel shader as the bottleneck in modern games, we're looking at something like 6ms to 25ms. In fact, the latency of the pixel shaders can hide a lot of the processing time of other parts of the GPU. For instance, pixel shaders can start executing before all the geometry is processed (pixel shaders are kicked off as fragments start coming out of the rasterizer). The color/z hardware (render outputs, render backends or ROPs depending on what you want to call them) can start processing final pixels in the framebuffer while the pixel shader hardware is still working on the majority of the scene. The only real latency that is added by the geometry/vertex processing portion of the pipeline is the latency that happens before the first pixels begin processing (which isn't huge). The only real latency added by the ROPs is the processing time for the last batch of pixels coming out of the pixel shaders (which is usually not huge unless really complicated blending and stencil techniques are used).

With the pixel shader as the bottleneck, we can expect that the entire GPU pipeline will add somewhere between 10ms and 30ms. This is if we consider that most modern games, at the resolutions people run them, produce something between 33 FPS and 100 FPS.

But wait, you might say, how can our framerate be 33 to 100 FPS if our graphics card latency is between 10ms and 30ms: don't the input and CPU time latencies add to the GPU time to lower framerate?

The answer is no. When we are talking about the total input lag, then yes we do have to add these latencies together to find out how long it has been since our input was gathered. After the GPU, we are up to something between 13ms and 58ms of input lag. But the cool thing is that human response happens in parallel to input gathering which happens in parallel to CPU time spent processing game logic and draw calls (which can happen in parallel to each other on multicore CPUs) which happens in parallel to the GPU rendering frames. There is a sequential path from input to the screen, but we can almost look at this like a heavily pipelined path where each stage operates in parallel on a different upcoming frame.

So we have the GPU rendering the previous frame while simulation and game logic are executing and input is being gathered for the next frame. In this way, the CPU can be ready to send more draw calls to the GPU as soon as the GPU is ready (provided only that we are not CPU limited).
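The difference between latency and framerate in a pipelined path can be shown with a tiny model. This is an idealized sketch of our own: it assumes every stage works on a different frame at the same time with no stalls, so latency is the sum of the stage times while framerate is set by the slowest stage alone. The stage names and values are the rough estimates used in this article.

```python
from typing import Dict, Tuple


def pipeline_stats(stage_ms: Dict[str, float]) -> Tuple[float, float]:
    """Return (input latency in ms, frames per second) for an ideal pipeline."""
    latency_ms = sum(stage_ms.values())       # one input passes through every stage
    slowest_stage_ms = max(stage_ms.values())  # stages overlap, so this sets the pace
    return latency_ms, 1000.0 / slowest_stage_ms


fast = {"mouse": 1, "cpu": 2, "gpu": 10}
slow = {"mouse": 8, "cpu": 20, "gpu": 30}

print(pipeline_stats(fast))  # (13, 100.0)
latency, fps = pipeline_stats(slow)
print(latency, round(fps, 1))  # 58 33.3
```

So 58ms of input lag and 33 FPS can coexist: each individual input takes 58ms to reach the front buffer, but a new frame still comes out every 30ms.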

So what happens after the frame is finished? The easy answer is a buffer swap and scanout. The subtle answer is mounds of potential input lag.

Scanout and the Display

Alright. So depending on the game, we are up to somewhere between 13ms and 58ms after our mouse was moved. The GPU just finished rendering and swapped the finished frame to the front buffer. What happens next is called scanout: the frame is sent out the DVI-I port over the cable and to the monitor.

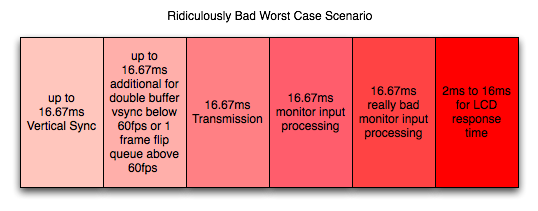

If our monitor's refresh rate is 60Hz (as is typical these days), it will actually take something like 16ms to send the full frame to the monitor (plus there's about half a millisecond of "blanking" between frames being sent) giving us 16.67ms of transmission delay. In this case we are limited by the bandwidth capabilities of DVI, HDMI and DisplayPort and the timing standards put forth by VESA. So to send a full frame of anything to the display we will have 16.67ms of input lag added. Some monitors will display this data as it is received, but others will latch input meaning the full frame must be sent before it can be displayed (but let's not get too far ahead of ourselves). Either way, we will consider the latency of this step to be at least one frame (as the monitor will still take 16ms to draw the image either way).

So now we need to talk about vsync. Let's pretend we aren't using it. Let's pretend our game runs at a rock solid exact 60 FPS and our refresh rate is 60Hz, but the buffer swap happens halfway between each vertical sync. This means every frame being scanned out would be split down the middle. The top half of the frame will be an additional 16.67ms behind (for a total of 33.3ms of lag). Of course, the bottom half, while 16.67ms newer than the top, won't have its own top half sent until the next frame 16.67ms later.

In this particular case, the way the math works out, if we average the latency of all the pixels on a split frame we would get the same average latency as if we enabled vsync. Unfortunately, when framerate is either higher or lower than refresh rate, vsync has the potential to cause tons of problems and this equivalence doesn't hold in the least.

If our frametime is just longer than 16.67ms with vsync enabled, we will add a full additional frame of latency (with no work being done on the GPU) before we are able to swap the finished buffer to the front for scanout. The wasted work can cause our next frame not to come in before the next vsync, giving us up to two frames of latency (one because we wait to swap and one because of the delay in starting the next frame). If our framerate is higher than 60 FPS, our GPU will have to stop working after rendering until the next vsync. This is a waste of resources and decreases overall performance, but definitely not by as much as if we use vsync at less than the monitor refresh. The upper limit of additional delay is 16.67ms minus frametime (less than one frame) rather than two full frames.
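The wait imposed by plain double-buffered vsync can be sketched with a simple model. The assumptions are ours: rendering starts exactly on a vertical sync, the GPU sits idle once the frame is done, and the swap happens at the first vsync at or after the frame is finished. Real drivers and flip queues complicate this.

```python
import math


def vsync_wait_ms(frame_time_ms: float, refresh_hz: float = 60.0) -> float:
    """Idle time between finishing a frame and the vsync where it can swap.

    Assumes rendering began exactly on a vsync and nothing else is queued.
    """
    interval_ms = 1000.0 / refresh_hz
    # Whole refresh intervals that must pass before the frame is finished.
    intervals_needed = math.ceil(frame_time_ms / interval_ms)
    return intervals_needed * interval_ms - frame_time_ms


print(round(vsync_wait_ms(9.0), 2))   # fast frame: waits out the rest of one refresh
print(round(vsync_wait_ms(17.0), 2))  # just missed a vsync: waits nearly a full refresh
```

A 9ms frame waits 16.67ms minus its frametime, while a 17ms frame that just misses the vsync waits almost an entire extra refresh before it can be shown.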

When framerate is lower than refresh rate, using either a 1 frame flip queue with vsync or triple buffering will allow the graphics hardware to continue doing rendering work while adding between 0 and 16.67ms of additional latency (the average will be between the two extremes). So you get the potential benefits of vsync (no tearing and synchronization) without the additional decrease in performance that occurs when no work gets done on the GPU. At framerates higher than refresh rate, when using a render queue, we do end up adding an additional frame of latency per number of frames we render ahead, so this solution isn't a very good one for mitigating input latency (especially in twitch shooters) in high framerate games.

Once the data is sent to the monitor, we've got more delay in store.

We've already mentioned that some LCDs latch the entire frame before display. Beyond this delay, some displays will perform image processing on the input (including scaling if this is not done on the graphics hardware). In some cases, monitors will save two frames to overdrive LCD cells to get them to respond faster. While this can improve the speed at which the picture on the monitor changes, it can add another 16.67ms to 33.3ms of latency to the input (depending on whether one frame is processed or two). Monitors with a game mode or true 120Hz monitors should definitely add less input lag than monitors that require this sort of processing.

Add, on top of all this, the fact that it will take between 2ms and 16ms for the pixels on the LCD to actually switch (response time varies between panels and depending on what levels the transition is between) and we are done: the image is now on the screen.

So what do we have total after the image is flipped to the front buffer?

One frame of lag for transmission (to display a full frame); up to 1 frame of lag if we enable triple buffering (or 1 frame render ahead and we run at less than refresh rate); up to two frames of lag if we just turn on vsync; at framerates higher than the refresh rate, an additional frame of lag for every frame we render ahead with vsync on; and zero to 2 frames of lag for the monitor to display the image (if it does extensive image processing).

So after crazy speed from the mouse to the front buffer, here we are waiting ridiculous amounts of time to get the image to appear on the screen. We add at the very very least 16.67ms of lag in this stage. At worst we're taking on between 66.67ms and 83.3ms which is totally unacceptable. And that's after the computer is completely done working on the image.
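These display-stage figures are whole numbers of 60Hz refresh intervals. The sketch below converts frames to milliseconds; the constant and function names are ours, and the five-frame breakdown (one frame of transmission, two of plain vsync, two of monitor processing) is one reading of the list above rather than a measured case.

```python
REFRESH_HZ = 60
REFRESH_INTERVAL_MS = 1000.0 / REFRESH_HZ


def display_frames_to_ms(frames: float) -> float:
    """Convert a number of refresh intervals into milliseconds of lag."""
    return frames * REFRESH_INTERVAL_MS


# Best case: transmission only.
print(round(display_frames_to_ms(1), 2))  # 16.67

# Assumed worst case: transmission + plain vsync + monitor processing.
worst_frames = 1 + 2 + 2
print(round(display_frames_to_ms(4), 2), round(display_frames_to_ms(worst_frames), 2))
```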

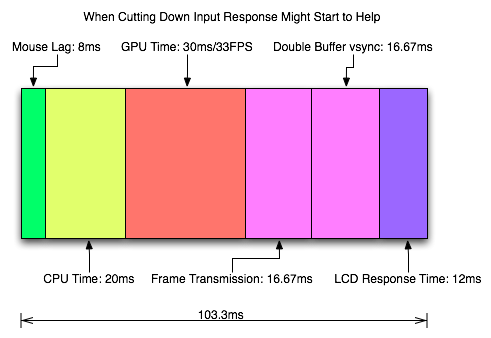

This brings our totals up to about 33ms to 80ms input lag for typical cases. Our worst case for what we've outlined, however, is about 135ms of latency between mouse movement and final display which could be discernible and might start to feel mushy. Sometimes game developers stray a bit and incur a little more input lag than is reasonable. Oblivion and Fallout 3 come to mind.

But don't worry, we'll take a look at some specific cases next.

Realworld Testing w/ High Speed Video

Inspired by Mythbusters, we wanted to use a high speed camera to really measure what's happening with millisecond resolution. We were disappointed when we first looked into this, as "real" high speed cameras cost in excess of 10k USD. But then we stumbled upon the Casio Exilim EX-F1 and its horrific quality but hugely fast video capability (actually, quality isn't that bad in VERY high light situations). At 1200 frames per second, we get video output at a resolution of 336x96, which is freaking tiny. But it's enough. All we need to do is count the frames between two events and multiply it by 0.833 (the number of milliseconds per frame) and we can assess the duration of incredibly short term events.
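The frame-counting conversion is a one-liner. In this minimal sketch the function name is ours and the frame counts passed in are just example inputs; the measurement uncertainty of one camera frame on either side is our own note, not something we characterized.

```python
CAMERA_FPS = 1200


def camera_frames_to_ms(frames: int, fps: int = CAMERA_FPS) -> float:
    """Duration in milliseconds covered by a number of high speed video frames."""
    return frames * 1000.0 / fps


print(round(camera_frames_to_ms(1), 3))  # 0.833 ms per frame
print(camera_frames_to_ms(45))           # 37.5 ms for a 45 frame count
```

Since an event can start or end anywhere within a camera frame, any count is only good to roughly one frame (under a millisecond) at each end.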

First up, we're looking at response time for the Dell 3007WFP display. Rather than relying on the manufacturer reported response times (which look at a limited number of cases and likely don't include worst case performance), we're using our camera to watch a few frames go by and observe how long it takes for pixels to transition from one color to the next. As we can see in the video, it takes nearly a full frame (~16ms) for some colors to change (pay attention to the yellow and black stripes with the orange lettering at the bottom of the screen). We timescaled this video down 10x over the already very slow 2.5% realtime speed to 0.25% realtime to help in seeing what's going on.

Getting to the actual game testing, we wanted to look at two titles: one a twitch shooter, and another with notoriously bad input lag. We chose Team Fortress 2 for our twitch shooter and Fallout 3 for our laggy game. We also did further testing on a CRT with TF2 just to see how low we could actually get input latency (with some pretty impressive results). Our test methodology was to set up the camera to capture both input generation (either a mouse click or move) and the display. The resolution of our camera was such that we had to really work to cram everything in the frame, but we got enough to be useful. We ran multiple tests and counted frames between when the hand hit the mouse and when we could see the result on the monitor.

For Team Fortress 2 we looked at three scenarios: no vsync, vsync enabled, and vsync enabled with our flip queue (render ahead / pre-rendered frames) set to zero. Our frametime at 2560x1600 was typically 9ms give or take a small amount (and we never dropped below 100 FPS, let alone refresh rate) with our GTX 280 remaining the performance bottleneck (CPU time was still significantly less than frametime).

First we'll look at the case with no vsync. Look for when the finger stops moving to when the shotgun blast appears on the wall (Valve makes sure to calculate the hit before it even starts the gun firing animation; sometimes hit and gun fire happen in the same frame, sometimes they are one frame separated). This certainly isn't frame by frame, but you should be able to download the clip from YouTube and step through it yourself if you are so inclined.

This test took about 45 frames: between 37ms and 38ms from input generation to display. This is very good considering what we predicted as a best case. Average over multiple runs was a little higher, resulting in 51ms of typical input lag plus or minus about 12ms (our maximum being 63ms). This fluctuation is due to how all the factors we talked about either line up or don't.

When we turn on vsync, we see a lot more delay.

We can see even better how the hit happens before the gunfire animation again. This is very key in any twitch shooter. The lag is significantly longer with vsync enabled. This example shows about 94 or 95 frames (~79ms) input lag, which was our lowest input lag time in this test. Our average was about 89ms ranging up to 96ms at maximum. In this case, we lose the input latency advantage of no vsync but we gain more consistent input lag times and no tearing.

But we also decided to check and make sure that we weren't getting stuck with any penalties due to flip queuing as the average latency increase seemed a little high. So we set maximum pre-rendered frames to zero in the NVIDIA control panel (formerly maximum render ahead) to see what happened. Whenever your framerate is always higher than your refresh rate you never want any flip queuing going on (unless you are using a multiGPU configuration). So let's check it out.

And we see 70ms as our new best. Our worst case is 85ms, which is better than our previous average. Average latency here is 76ms +/- about 6ms (with the one 85ms exception). From this data, it seems as though Valve uses a 1 frame flip queue (1 frame render ahead) when vsync is enabled unless it is forced off in the driver. When the game's framerate is below the refresh rate this is fine, but when framerate is always higher than refresh it will absolutely incur an input latency penalty along the lines of what we are seeing here.

For those who want vsync in TF2 and have consistently >60fps performance without multiGPU, absolutely set the flip queue to zero in the driver.

Next up is our Fallout 3 test, where we'll compare a notoriously laggy game with a notoriously responsive game. Our framerate in this game was consistently between 38 and 45 frames per second during the testing, meaning that frametime will play a bigger role as it will add somewhere between 22ms and 27ms of latency.
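The frametime range follows directly from the framerate. A quick sketch of the arithmetic (the helper name is ours, for illustration only):

```python
def frametime_ms(fps):
    """Time spent on one frame, in milliseconds, at a given framerate."""
    return 1000.0 / fps

for fps in (45, 38):
    print(f"{fps} FPS -> {frametime_ms(fps):.1f} ms per frame")
# 45 FPS -> 22.2 ms per frame
# 38 FPS -> 26.3 ms per frame
```

Compare that with the roughly 9ms frametime we saw in Team Fortress 2.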

And we have 136ms. And this test was much more consistent with our average latency being 136ms as well. Our low was 130ms and our high was 142ms, giving us the same tight spread of +/- 6ms we saw with TF2 and vsync, only vsync was not enabled here (which suggests some internal rate monitoring to me). Of course, this variability is now a much lower percentage of the overall input lag; not that that offers much consolation.

The number of complaints we've seen on the net about Fallout 3 and input lag (though notably a subjective improvement over Oblivion), combined with our own experience, leads us to believe that somewhere between TF2's snappy 89ms average in its worst case configuration (vsync enabled, which we still couldn't feel) and Fallout 3's ~50% longer latency we cross the point of perceptibility and distraction. That 100ms number certainly looks like a good point for developers to keep input latency below.

Let's take a look at what happens when we turn on vsync.

Once again, 136ms. Our input lag stats were actually the same. And yes we rechecked to make sure vsync was enabled here and disabled there. Since Oblivion benefited from reducing the maximum number of frames rendered ahead, we tested this out as well. When set to 1 or 0 we saw no change in performance with Fallout 3 at least in this test. It's unclear whether different settings or performance levels would benefit, but for now it seems like Fallout 3 does really well at producing a consistently high input lag.

So that does it for our LCD testing. But we did want to learn whether or not the LCD panel added any extra delay over a CRT display. So we pulled out a Sony GDM F-500 encased in the purple and grey of a Sun monitor from an age gone by. We tested at lower resolutions as the display couldn't muster anything close to 2560x1600, but at 1600x1200@60Hz our numbers still made sense comparatively: we would expect them to be a little lower but not by much.

So, our average here is about 43ms, which is a lower average than on the Dell LCD. Our minimum is 35ms and max is 52ms. In the best case, 35ms (for the CRT) isn't much better than 37ms (for the LCD) at all. Our worst case in this test was much better (as was our average) than on the LCD. But it's clear that the LCD is capable of input latency as low as what we saw with this CRT. We could (and likely do) have an advantage on the CRT side due to resolution: framerate was higher for the CRT test, and it is likely that this difference improved our worst case and average latency.

But let's take a look at the CRT with vsync.

In this test our best case is 78ms and worst case is 88ms. The average is 84ms. This is, again, very similar in worst case to the LCD test, but the CRT's advantage in the average case is smaller this time around. This makes sense as vsync normalizes performance to 60FPS, but there may still be a slight advantage for the lower resolution. It seems as if the Dell 3007WFP isn't significantly disadvantaged when compared to a CRT.

But there is one more advantage CRTs hold over LCD panels: refresh rate. We tested 120Hz, though we did have to lower resolution once more to make it work. This will increase performance on its own, but the results are impressive.

With an average of 25ms input latency, a minimum of 17ms and a maximum of 29ms, the results here show an incredible impact on input latency when running at a higher framerate and refresh rate. These numbers come in really low. The 1152x864 resolution does provide about 200 FPS, which will also have an impact on latency, but given that 1600x1200 had a higher framerate than 2560x1600 yet showed similar latencies, we suspect that the limit was refresh rate. It could also be that the higher refresh rate allowed the advantages of the higher framerate to shine through.

Either way, this is incredibly low input lag.
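To put all of the numbers side by side, here is a small sketch that collects the average latencies reported above and checks them against the 100ms mark. The configuration labels and function name are ours; the values are simply the averages quoted in the text.

```python
# Average input latency in milliseconds, as reported in the text above.
AVERAGE_LATENCY_MS = {
    "TF2, LCD, no vsync": 51,
    "TF2, LCD, vsync": 89,
    "TF2, LCD, vsync, flip queue 0": 76,
    "Fallout 3, LCD": 136,
    "TF2, CRT 60Hz, no vsync": 43,
    "TF2, CRT 60Hz, vsync": 84,
    "TF2, CRT 120Hz": 25,
}

def configs_over(latencies, threshold_ms=100):
    """Return the configurations whose average latency exceeds the threshold."""
    return [name for name, ms in latencies.items() if ms > threshold_ms]

print(configs_over(AVERAGE_LATENCY_MS))  # ['Fallout 3, LCD']

# Fallout 3 relative to TF2 with vsync: roughly 50% longer.
ratio = AVERAGE_LATENCY_MS["Fallout 3, LCD"] / AVERAGE_LATENCY_MS["TF2, LCD, vsync"]
print(f"{ratio:.2f}x")  # 1.53x
```

Only Fallout 3 lands above 100ms; every Team Fortress 2 configuration we tested averages below it.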

Combating Input Lag and Final Words

Now that we know what's going on and what the factors are, what can we do about it?

Sometimes 1ms can be the difference between your input getting to the software in time to be included in the next frame and missing it. Most of the time it won't. Of course, the difference between a mouse that reports every 8ms and one that reports every 2ms could actually make a frame of difference (up to 16.67ms) in input lag. A mouse that can handle 500 reports/second is what we recommend as a good balance.
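To see how a few milliseconds of report interval can turn into a whole frame, consider this rough sketch. It rests on a deliberately simplified model that is our assumption, not a description of any particular game: reports and frames both start at t=0, an input travels in the first report at or after it occurs, and the game picks up input exactly once per 60Hz frame boundary. The function names and the 64.5ms example input time are hypothetical.

```python
import math

def report_interval_ms(reports_per_second):
    """Time between mouse reports in milliseconds."""
    return 1000.0 / reports_per_second

def frame_deadline_ms(input_time_ms, reports_per_second, refresh_hz=60):
    """First frame boundary at or after the report that carries the input.

    Simplified model (assumption): reports and frames are aligned at t=0
    and the game samples input once per frame boundary.
    """
    interval = report_interval_ms(reports_per_second)
    delivered = math.ceil(input_time_ms / interval) * interval
    frame_time = 1000.0 / refresh_hz
    return math.ceil(delivered / frame_time) * frame_time

# An input 64.5ms in: 125 reports/s delivers it at 72ms, 500 reports/s at 66ms.
slow = frame_deadline_ms(64.5, 125)  # misses the 66.67ms boundary
fast = frame_deadline_ms(64.5, 500)  # makes the 66.67ms boundary
print(f"{slow - fast:.2f} ms")  # 16.67 ms
```

For most input times in this model both rates land in the same frame, which matches the point that most of the time the difference won't matter.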

It is possible to overclock your mouse. You will still be limited by the physical capabilities of the mouse, but running the USB port for the mouse at a higher rate can help, especially if you don't want to invest in a more expensive mouse. There are tools out there both to check your mouse report rate (with Direct Input Mouse Rate) and to change the rate by replacing the USB driver. When changing the rate on Vista SP1 or Windows 7, drivers will need to be signed. This can be accomplished by using testing signatures and forcing Windows to load them. NGOHQ offers a good tutorial on this here.

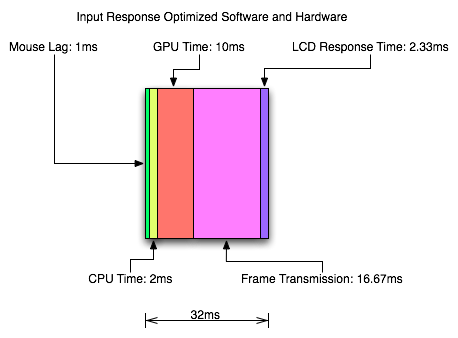

CPU, memory and GPU will impact the input lag between the mouse and the display. The GPU, as the main internal bottleneck in games, will likely have the largest single impact (higher framerate means less time between frames and less lag), but this is heavily dependent on game design. The basic recommendation is a modestly priced dual core CPU, inexpensive RAM, and a fast GPU. Faster CPUs and RAM could potentially benefit but will not likely provide a huge return on investment in this case.

For input lag reduction in the general case, we recommend disabling vsync. For NVIDIA card owners running OpenGL games, forcing triple buffering in the driver will provide a better visual experience with no tearing and will always start rendering the same frame that would start rendering with vsync disabled. Only input latency after the time we would see a tear in the frame would be longer, and this by less than a full frame of latency.

Unfortunately, all other implementations that call themselves triple buffering are actually one frame flip queues at this point. One frame render ahead is fine at framerates lower than the monitor refresh, but if the framerate ever goes past refresh you will experience much more input lag than with vsync alone. For everyone without multiGPU solutions, we recommend setting flip queue or max pre-rendered frames to either 1 or 0. Set it to 1 if framerate is always less than monitor refresh and set it to 0 if framerate is always greater than or equal to monitor refresh. If it goes back and forth, only NVIDIA's OpenGL triple buffering will provide the best of both worlds without tearing and will further reduce input lag in high framerate situations.

Improperly handling vsync (enabling or disabling a 1 frame flip queue at the wrong time) can degrade performance by at least one additional whole frame. But with multiGPU options, we really don't have a choice. With more than one GPU in the system, you will want to leave maximum pre-rendered frames set to the default of 3 and allow the driver to handle everything. Input lag with multiGPU systems is something we will want to explore at a later time.
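Our flip queue recommendations boil down to a simple decision rule. The sketch below just encodes the advice from the text; the function and parameter names are hypothetical and do not correspond to any driver API.

```python
def recommended_prerendered_frames(min_fps, max_fps, refresh_hz, multi_gpu=False):
    """Suggested max pre-rendered frames (flip queue) setting with vsync on.

    Returns None when framerate crosses the refresh rate, where neither
    fixed setting is ideal and NVIDIA's OpenGL triple buffering is the
    better fit according to the text.
    """
    if multi_gpu:
        return 3  # leave the driver default and let the driver handle it
    if max_fps < refresh_hz:
        return 1  # framerate always below refresh
    if min_fps >= refresh_hz:
        return 0  # framerate always at or above refresh
    return None   # framerate goes back and forth across refresh

print(recommended_prerendered_frames(100, 120, 60))  # 0
print(recommended_prerendered_frames(38, 45, 60))    # 1
print(recommended_prerendered_frames(50, 70, 60))    # None
```

The first call roughly mirrors our Team Fortress 2 runs and the second our Fallout 3 runs; the third is a made-up case where framerate straddles refresh.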

You will want a monitor that doesn't do much (if any) processing, preferably one with a "game" mode. We recently took a look at a few monitors to get a feel for the difference in input processing. While we didn't test it in this article, adding another 16ms to 33ms to input lag is just not a good idea.

One of the largest benefits to games that don't inherently carry a lot of input lag is refresh rate. A real 120Hz refresh rate can significantly benefit input lag, especially in twitch shooters. While that impact would be smaller in games where the framerate can't keep up, the additional delay that can be incurred between the computer and the monitor will still be significantly reduced. Additionally, vsync (even in the worst case) is much cheaper on a high refresh rate monitor. Triple buffering (or even 1 frame flip queues with performance lower than refresh) and 120Hz monitors are a match made in heaven.

Final Words

What started out as a short article on the concept of input lag ended up touching on quite a few key issues in gaming. We got into a few of the concepts of game design and program flow in addition to looking at hardware impact. While we hadn't planned on it, picking up a camera that can do 1200 FPS allowed us to actually measure the input lag of a couple of real games.

There are quite a few good nuggets to take away. First, input lag is hugely dependent on the game. There will be games that optimize for reducing input lag and others that do not. In some games it is more important than others. For games that incur huge amounts of input lag, there is only so much that can be done. Using the tips we provided will definitely help get people on the path to lower input lag.

Unfortunately, sometimes reducing input lag to its minimum requires spending money, especially on the display side of things. Just make sure to read reviews that look at display lag, as avoiding a display that adds an extra 16ms to 33ms of input lag is definitely a good start. Beyond that, a faster GPU is the next most important upgrade, and a mouse that can do at least 500 reports per second is a good idea.